Cloud Computing for IT Pros (5/6): Publishing Windows Azure Application to Public Cloud

This series focusing on cloud essentials for IT professionals includes:

- Part 1: What Is Service

- Part 2: What Is Cloud

- Part 3: Windows Azure Computing Model

- Part 4: Fabric Controller and AppFabric

- Part 5: Publishing Windows Azure Application to Public Cloud

- Part 6: Building Private Cloud of IaaS

Compared with on-premises computing, deployment to the cloud is much easier. A few clicks will do. I believe this is probably why many have jumped to the conclusion that we, IT professionals, are going to lose our jobs in the cloud era. There is some truth to it, not much but some in my view. However, not so fast, I say. Bringing cloud into the picture does dramatically reduce cost and complexity in some areas, yet at the same time cloud introduces many new nuances that demand IT disciplines to develop criteria with business insight. So no, I do not think IT professionals are going to lose their jobs. Rather, they will affect the bottom line more directly than ever, since with cloud computing everything has a dollar sign attached and every IT operation has a direct cost implication. In this article, I discuss some routines and additional considerations for deployment to the cloud. Note that throughout this article, in the context of cloud computing, I use the terms application and service interchangeably.

Deployment Tasks

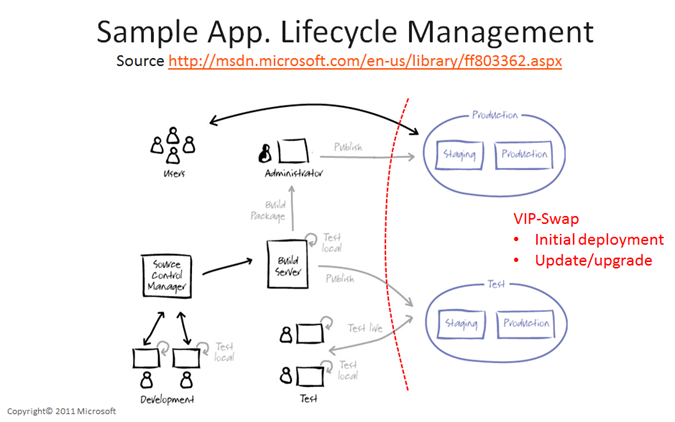

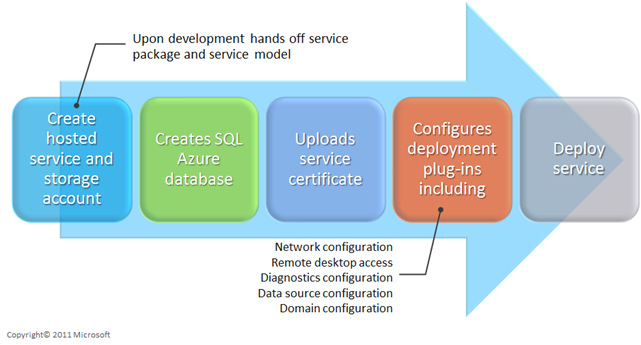

For IT professionals, deploying a Windows Azure service to the cloud starts when development hands over the gold bits and the configuration file, as depicted in the schematic below.

In Visual Studio with the Windows Azure SDK, a developer can create a cloud project, build a solution, and publish the solution with an option to generate a package, which zips the code and is accompanied by a configuration file defining the name and number of instances of each Compute Role. This package and the configuration file are what get uploaded to the Windows Azure platform, either through the UI of the Windows Azure Developer Portal or programmatically with the Windows Azure API. For those not familiar with the steps and operations to develop and upload a cloud application to the Windows Azure platform, I highly recommend investing the time to walk through the labs, which are well documented and readily available.
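To make the role of the configuration file more concrete, here is a minimal Python sketch that reads role names and instance counts from a service configuration. The XML follows the general shape of a Windows Azure service configuration file, but the service name, role names, and counts are invented for illustration, and the helper `instance_counts` is hypothetical rather than part of any SDK.

```python
import xml.etree.ElementTree as ET

# Illustrative configuration only: the service and role names are made up.
SAMPLE_CSCFG = """<ServiceConfiguration serviceName="SampleService"
    xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration">
  <Role name="WebRole1">
    <Instances count="2" />
  </Role>
  <Role name="WorkerRole1">
    <Instances count="3" />
  </Role>
</ServiceConfiguration>
"""

# Namespace prefix used when searching the document.
NS = {"sc": "http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration"}


def instance_counts(cscfg_text):
    """Return a dict mapping each role name to its configured instance count."""
    root = ET.fromstring(cscfg_text)
    counts = {}
    for role in root.findall("sc:Role", NS):
        instances = role.find("sc:Instances", NS)
        counts[role.get("name")] = int(instances.get("count"))
    return counts


if __name__ == "__main__":
    for name, count in instance_counts(SAMPLE_CSCFG).items():
        print(name, count)
```

A check like this is handy before upload, because every instance listed in the configuration is a billable compute resource once the service is deployed.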

Application Lifecycle Management

In a typical enterprise deployment process, developers code and test applications locally or in an isolated environment and go through the build process to promote the code to an integrated/QA test environment. Upon finishing tests and QA, the code is promoted to production. For cloud deployment, the idea is much the same, except that at some point the code is placed in the cloud and tests are conducted in the cloud before the application goes live on the Internet. Below is a sample application lifecycle where both the production and the main test environments are in the cloud.

Traditionally, when developing and deploying applications on premises, one challenge (and it can be a big one) is to keep the test environment as close to production as possible in order to validate the developed code, data, operations, and procedures. Sometimes this can be a major undertaking due to ownership, corporate politics, financial constraints, technical limitations, discrepancies between the test environment and production, etc. We have all heard many times the stories of applications that behave as expected in the test environment, yet generate numerous security and storage violations, throw fatal errors, and crash hard once in production. This will be different when testing Windows Azure services. It turns out that promoting code from staging to production in the cloud can be a pleasant experience and something to look forward to.

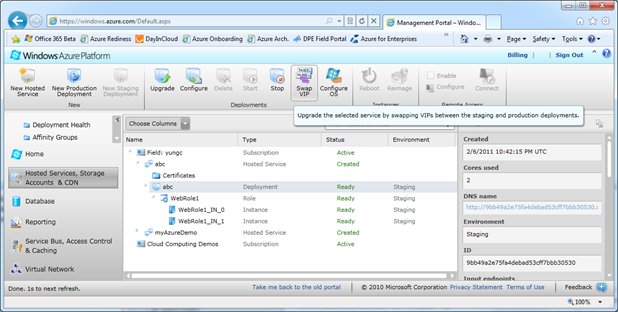

VIP-Swap

When code is first promoted to the Windows Azure platform from a local development/test environment, the application is placed in the so-called staging phase, and when ready, an administrator can then promote the application into production. An interesting fact is that the staging and the production environments in the Windows Azure platform are identical. There is no difference; Windows Azure platform is Windows Azure platform. What differentiates a staging environment from a production environment is the URL. The former is given a unique alphanumeric string as part of a non-published staging URL like https://c2ek9aa346384629a3401e8119de3500.cloudapp.net/ while the latter has an administrator-specified, user-friendly URL like https://yc.cloudapp.net. So to promote from a staging environment to production in the Windows Azure platform, simply swap the virtual IP by swapping the staging URL with the production one. This is called VIP-Swap, and two mouse clicks are all it takes to deploy a Windows Azure service from staging to production. See the screen capture below. And minutes later, once Fabric Controller syncs all the URL references of this application, in production it is.

VIP-Swap is handy for both the initial deployment and subsequent updates of a service with a new package. When a service is changed, the new version is placed in the staging environment and a VIP-Swap promotes the code into production. This feature, however, is not applicable to all changes of the service definition. In such scenarios, redeployment of the service package becomes necessary.
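The idea behind VIP-Swap can be sketched as a toy model in Python. This is not how Fabric Controller is implemented and it calls no Windows Azure API. The class `HostedService` and its methods are hypothetical names chosen only to show that a swap changes which deployment each URL points to, without rebuilding or copying either deployment.

```python
class HostedService:
    """Toy model of a service with a production slot and a staging slot."""

    def __init__(self, production_url, staging_url):
        self.production_url = production_url
        self.staging_url = staging_url
        # Each slot holds a label for whichever package currently sits in it.
        self.slots = {"production": None, "staging": None}

    def deploy_to_staging(self, package_label):
        """Place a new package in the staging slot."""
        self.slots["staging"] = package_label

    def vip_swap(self):
        """Exchange the two slots; only the mapping changes."""
        self.slots["production"], self.slots["staging"] = (
            self.slots["staging"],
            self.slots["production"],
        )

    def resolve(self, url):
        """Return the package currently served at the given URL."""
        if url == self.production_url:
            return self.slots["production"]
        if url == self.staging_url:
            return self.slots["staging"]
        raise KeyError(url)


# Usage with the sample URLs from the text and made-up package labels.
svc = HostedService(
    "https://yc.cloudapp.net",
    "https://c2ek9aa346384629a3401e8119de3500.cloudapp.net/",
)
svc.deploy_to_staging("package-v1")
svc.vip_swap()                       # initial promotion to production
svc.deploy_to_staging("package-v2")
svc.vip_swap()                       # update: v2 goes live, v1 moves to staging
```

In this model the previous version remains in the staging slot after a swap, so swapping a second time would put it back in production.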

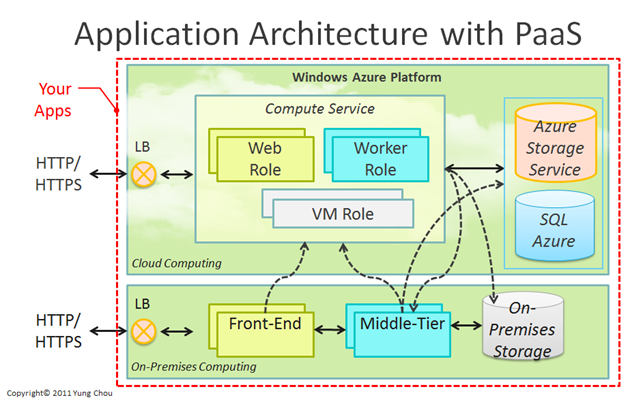

Application Architecture

With the Windows Azure platform in the cloud, there are new opportunities to extend as well as integrate on-premises computing with cloud services. An application architecture can accept HTTP/HTTPS requests either with a Web Role or with a front-end that is on premises. Requests can then be processed, and data stored, in the cloud, on premises, or in a combination of the two, as shown below.

So whether the service starts in the cloud or on premises, an application architect now has many options to architecturally improve the agility of an application. An HTTP request can start with on-premises computing while integrating with a Worker Role in the cloud for capacity on demand. At the same time, a cloud service can employ a middle tier and a back-end that are deployed on premises for security and control.

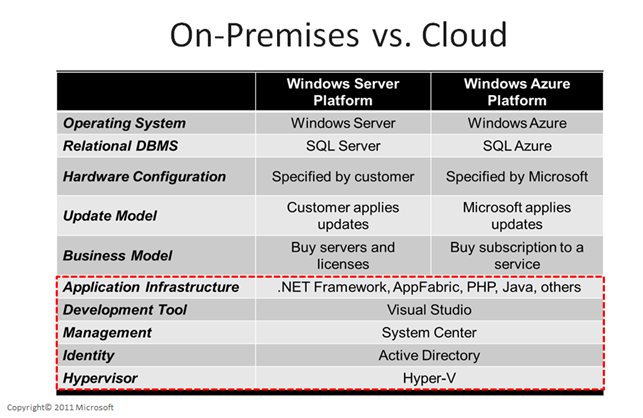

Standardizing Core Components

Whether on premises or in the cloud, core components, including application infrastructure, development tools, a common management platform, an identity mechanism, and virtualization technology, should be standardized sooner rather than later. With a common set of technologies, see below, both IT professionals and developers can apply their skills and experience to either computing platform. In the long run, this will produce higher-quality services at lower cost. This is crucial to making the transformation to cloud a convergent process.

Cost Model

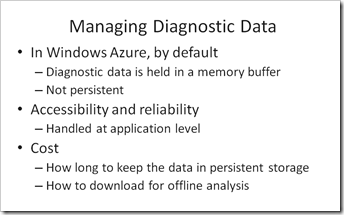

By default, diagnostic data in Windows Azure is held in a memory buffer and is not persistent. To access log data in Windows Azure, the log data first needs to be moved to persistent storage. One can do this manually by using the Windows Azure Developer Portal, or add code to the application to dump the log data to storage at scheduled intervals. Next, one needs a way to view the log data in Windows Azure storage, using tools like Azure Storage Explorer.

Traditionally, when running applications on premises, diagnostic data is relatively easy to get since it is kept in local storage and can be accessed and moved easily. Logs and events generated by applications are subscribed to, acquired, and stored for as long as needed. When an application moves to the cloud, access to diagnostic data is no longer business as usual. Because persisting diagnostic data to Windows Azure storage costs money, IT will need to plan how long to keep the diagnostic data in Windows Azure and how to download it for offline analysis.
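The two decisions described above, when to move buffered diagnostic data to persistent storage and how long to keep it there, can be illustrated with a small Python sketch. It is a simulation only and does not use the Windows Azure diagnostics API. The class `DiagnosticBuffer`, its method names, and the seven-day retention in the usage lines are all assumptions made for illustration.

```python
from datetime import datetime, timedelta


class DiagnosticBuffer:
    """Toy model: volatile in-memory log buffer plus billed persistent storage."""

    def __init__(self, retention_days):
        self.memory = []        # volatile; lost if never transferred
        self.persistent = []    # stand-in for storage that costs money
        self.retention = timedelta(days=retention_days)

    def log(self, when, message):
        """Record an entry in the in-memory buffer."""
        self.memory.append((when, message))

    def transfer(self):
        """Move buffered entries to persistent storage; return how many moved."""
        moved = len(self.memory)
        self.persistent.extend(self.memory)
        self.memory.clear()
        return moved

    def purge(self, now):
        """Drop persisted entries older than the retention window; return the count dropped."""
        cutoff = now - self.retention
        kept = [entry for entry in self.persistent if entry[0] >= cutoff]
        dropped = len(self.persistent) - len(kept)
        self.persistent = kept
        return dropped


# Usage with made-up timestamps and messages.
start = datetime(2011, 1, 1)
buf = DiagnosticBuffer(retention_days=7)
buf.log(start, "role started")
buf.log(start + timedelta(days=5), "request failed")
moved = buf.transfer()                               # scheduled transfer
dropped = buf.purge(now=start + timedelta(days=10))  # retention clean-up
```

In a real service the transfer would run on a schedule, and the retention period would come out of the cost planning discussed in this section.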

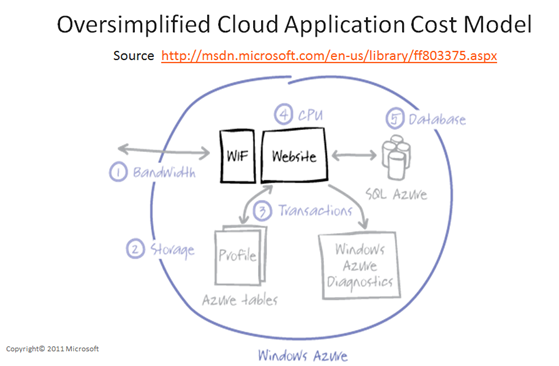

The cost of processing diagnostic data is one item in the overall cost model for moving an application to the public cloud. An application cost analysis can start with the big buckets, including network bandwidth, storage, transactions, CPU, etc., and eventually list out all the cost items, each with the organization responsible for that cost. Compare the ROI with that of an on-premises deployment to determine whether moving to the cloud makes sense. Once agreements are made, each cost item or bucket should have a designated accounting code for tracking the cost. IT professionals will have much to do with the cost analysis, since the operational costs of running applications in the cloud are compared with both the capital expense and the operational costs of an on-premises deployment. For example, routine on-premises operations like backup and restore, monitoring, reporting, and troubleshooting need to be revised and integrated with cloud storage management, which has both operational and cost implications.
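A cost model along these lines can start as something very simple. The Python sketch below lists cost items by bucket, responsible organization, and accounting code, then totals them per organization. Every name, quantity, rate, and accounting code in it is hypothetical. None of the figures reflect actual Windows Azure pricing.

```python
from dataclasses import dataclass


@dataclass
class CostItem:
    bucket: str            # e.g. bandwidth, storage, transactions, CPU
    owner: str             # organization responsible for the cost
    accounting_code: str   # designated code for tracking
    quantity: float        # monthly usage in the bucket's own unit
    unit_rate: float       # hypothetical price per unit

    @property
    def monthly_cost(self):
        return self.quantity * self.unit_rate


def total_by_owner(items):
    """Sum monthly cost per responsible organization."""
    totals = {}
    for item in items:
        totals[item.owner] = totals.get(item.owner, 0.0) + item.monthly_cost
    return totals


# All figures below are invented for illustration.
items = [
    CostItem("bandwidth", "Infrastructure", "AC-100", quantity=100, unit_rate=0.5),
    CostItem("cpu", "Infrastructure", "AC-110", quantity=720, unit_rate=0.25),
    CostItem("storage", "App Team", "AC-200", quantity=200, unit_rate=0.125),
]

totals = total_by_owner(items)
```

The same list can be extended with on-premises capital and operational costs so the two deployment options are compared item by item.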

The takeaway is “Don’t go to bed without developing a cost model.”

Some Thoughts on Security

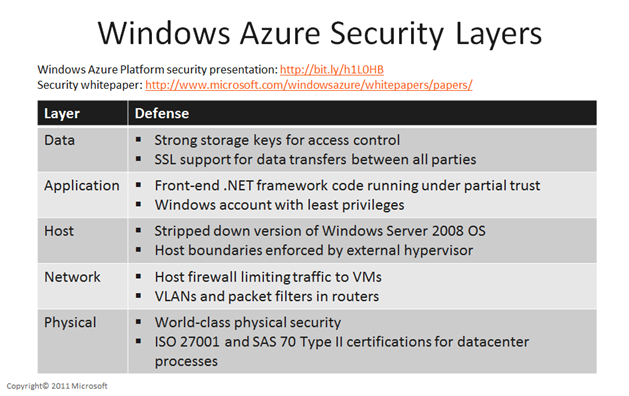

Cloud is not secure? I happen to believe that in most scenarios cloud is actually very secure, and more secure than running a datacenter on premises. Cloud security is a topic in itself and certainly far beyond the scope of the discussion here. Nevertheless, you will be surprised how fast a cloud security question can be raised and answered, many times without actually answering it. In my experience, many answers to cloud security questions seem to surface by themselves once the context of the question is correctly set. Keep the concept of separation of responsibilities vivid whenever you contemplate cloud security. It will bring so much clarity. For SaaS, there is very little a subscriber can do, and security at the network, system, and application layers is largely predetermined. PaaS like the Windows Azure platform, on the other hand, presents many opportunities to place security measures in multiple layers with a defense-in-depth strategy, as shown below.

Recently some Microsoft datacenters hosting services in the cloud for federal agencies achieved FISMA certification and received ATO (Authorization to Operate). This is solid proof that cloud operations can meet and exceed this rigorous standard. The story of cloud computing will only get better from here.

Cloud is not secure? Think again.