How to get started with HoloLens development

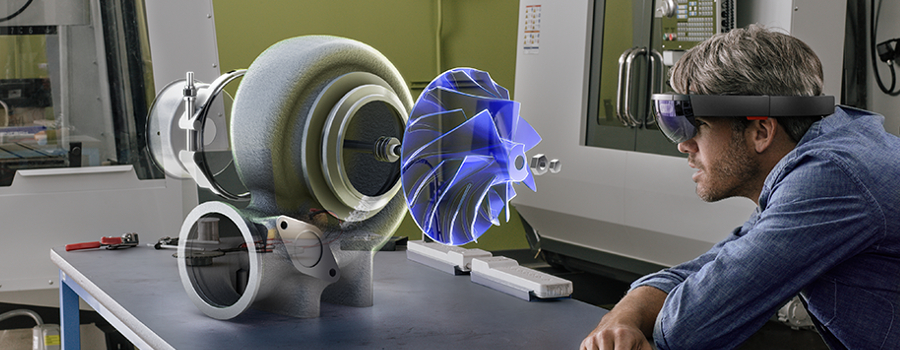

Microsoft HoloLens is the first self-contained holographic computer. It is fully mobile, so it doesn't need to be tethered to a computer to work, and it has a deep understanding of the environment it's being used in. It can benefit people across many industries, and we've already seen examples in medicine, automotive design and entertainment, to name just a few.

But if you're thinking about trying your hand at HoloLens development, where do you start? In this article, we hope to give you the resources you need to kickstart your journey into developing holographic experiences.

One thing to remember is that the HoloLens is a full Windows 10 PC. While it might appear that development would be vastly different from what you're used to, there are far more similarities with Windows development than you might think.

Where should I start?

A good place to start is a presentation from Rocky Heckman, the HoloLens Pursuit Lead at Microsoft. Rocky gives a rundown of the device and its features, and runs through a coding demo using Visual Studio and Unity. The talk gives you a general understanding of how the device works and how you can use Visual Studio and Unity to create your own holographic app.

There are many options for creating 3D holographic apps, but the most popular is Unity. For more on Unity specifically, you can work through the tutorials on the Holographic Academy, or visit the official Unity website, which also has some great resources to get you started.

Another useful talk is a presentation by Dennis Vroegop, an independent freelance consultant and Microsoft MVP, which explains the role of Unity in HoloLens app development. It runs through some code examples and importing objects, and also demonstrates the result running on a HoloLens itself.

Development Considerations

The main user experience paradigms on HoloLens are gaze, gestures and voice. These aren't considerations you would make in every kind of app development, but they are fundamental to how people will use your HoloLens app. Here's a little more information on how each one works:

Gaze

Mixed reality headsets use the position and orientation of the user's head, not their eyes, to determine their gaze vector. You can think of this vector as a laser pointer shining straight ahead from directly between the user's eyes. As the user looks around the room, your application can intersect this ray both with its own holograms and with the spatial mapping mesh to determine which virtual or real-world object the user may be looking at.
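To make the gaze ray concrete, here is a minimal Python sketch of hit-testing it against holograms. It assumes each hologram is approximated by a bounding sphere and that the head's forward direction is already available as a vector; the class and function names are hypothetical, and the spatial mapping mesh is left out. In a Unity project you would typically get the same effect with `Physics.Raycast` from the main camera's position along `Camera.main.transform.forward`, since on HoloLens the main camera follows the user's head.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def normalize(v: Vec3) -> Vec3:
    length = math.sqrt(dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)


@dataclass
class Hologram:
    name: str
    center: Vec3
    radius: float  # hypothetical bounding sphere used only for hit-testing


def ray_sphere_hit(origin: Vec3, direction: Vec3, h: Hologram) -> Optional[float]:
    """Distance along a unit-length ray to the sphere surface, or None on a miss."""
    oc = sub(origin, h.center)
    b = dot(oc, direction)
    c = dot(oc, oc) - h.radius ** 2
    disc = b * b - c
    if disc < 0:
        return None  # the ray's line misses the sphere entirely
    root = math.sqrt(disc)
    t = -b - root  # nearer intersection
    if t < 0:
        t = -b + root  # head is inside the sphere: use the far side
    return t if t >= 0 else None  # both intersections are behind the user


def gaze_target(head_position: Vec3, head_forward: Vec3,
                holograms: Sequence[Hologram]) -> Optional[Hologram]:
    """Return the closest hologram hit by the head-gaze ray, if any."""
    direction = normalize(head_forward)
    best, best_t = None, math.inf
    for h in holograms:
        t = ray_sphere_hit(head_position, direction, h)
        if t is not None and t < best_t:
            best, best_t = h, t
    return best
```

For example, with the head at the origin looking along +z, `Hologram("panel", (0.0, 0.0, 2.0), 0.5)` is hit 1.5 m away, while a hologram centred behind the user is ignored because both of its intersections have negative distance.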

On HoloLens, interactions should generally derive their targeting from the user's gaze, rather than trying to render or interact at the hand's location directly. Once an interaction has started, relative motions of the hand may be used to control the gesture, as with the manipulation or navigation gesture. With immersive headsets, you can target using either gaze or pointing-capable motion controllers.
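The split between gaze targeting and relative hand motion can be sketched as follows. This is a hypothetical helper, not a HoloLens or Unity API: the target is fixed by gaze when the gesture begins, and after that only the hand's displacement from its starting point moves the hologram. The `scale` factor is an assumed tuning parameter.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Hologram:
    name: str
    position: Vec3


class ManipulationSession:
    """Hypothetical helper: gaze picks the target, hand deltas move it."""

    def __init__(self, scale: float = 1.0):
        self.scale = scale  # assumed ratio of hologram movement to hand movement
        self.target: Optional[Hologram] = None
        self.start_hand: Vec3 = (0.0, 0.0, 0.0)
        self.start_position: Vec3 = (0.0, 0.0, 0.0)

    def begin(self, gazed: Optional[Hologram], hand: Vec3) -> bool:
        # Targeting comes from gaze at the moment the gesture starts.
        if gazed is None:
            return False
        self.target = gazed
        self.start_hand = hand
        self.start_position = gazed.position
        return True

    def update(self, hand: Vec3) -> None:
        # Only the hand's motion relative to where it started matters;
        # the absolute hand location never selects or renders anything.
        if self.target is None:
            return
        delta = tuple(h - s for h, s in zip(hand, self.start_hand))
        self.target.position = tuple(
            p + self.scale * d for p, d in zip(self.start_position, delta)
        )

    def end(self) -> None:
        self.target = None
```

Because the target is locked in at `begin`, the user can look elsewhere mid-gesture without the manipulation jumping to a different hologram.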

You can learn more about working with gaze in Peter Daukintis' write-up.

Gestures

Gestures allow users to take action in mixed reality with their hands. For HoloLens, gesture input lets you interact with your holograms naturally, or you can optionally use the included HoloLens Clicker. While hand gestures do not provide a precise location in space, the simplicity of putting on a HoloLens and immediately interacting with content allows users to get to work without any accessories.
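Distinguishing a quick tap from a hold or a manipulation mostly comes down to how long the finger stays down and how far the hand moves. The sketch below uses assumed thresholds (`HOLD_SECONDS`, `MOVE_THRESHOLD_M`) chosen purely for illustration; they are not the values the HoloLens gesture recognizer uses, and a real recognizer reports holds and manipulations while the finger is still down rather than after release.

```python
from enum import Enum


class Gesture(Enum):
    TAP = "tap"
    HOLD = "hold"
    MANIPULATION = "manipulation"


HOLD_SECONDS = 0.5       # assumed: a press at least this long counts as a hold
MOVE_THRESHOLD_M = 0.02  # assumed: hand travel (metres) that turns a press into a manipulation


def classify(press_time: float, release_time: float, hand_travel_m: float) -> Gesture:
    """Classify one finger press/release into a gesture (hypothetical rules)."""
    if hand_travel_m > MOVE_THRESHOLD_M:
        return Gesture.MANIPULATION  # significant movement wins over duration
    if release_time - press_time >= HOLD_SECONDS:
        return Gesture.HOLD
    return Gesture.TAP
```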

You can learn more about working with gestures in Peter Daukintis' write-up.

Voice

Voice is another key form of input on HoloLens. It lets users command a hologram directly without using gestures: they simply gaze at a hologram and speak a command. Voice input can be a natural way to communicate intent, and it is especially good at traversing complex interfaces because it lets users cut through nested menus with a single command. Voice input is powered by the same engine that supports speech in all other Universal Windows apps.
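The "gaze at a hologram and speak your command" pattern boils down to routing a recognised phrase to whatever is currently under the gaze ray. In this hypothetical sketch, speech recognition itself is assumed to be handled by the platform and to deliver the recognised phrase as a string; the command table and hologram fields are illustrative only.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Hologram:
    name: str
    scale: float = 1.0
    visible: bool = True


Action = Callable[[Hologram], None]

# Hypothetical command table: spoken phrase -> action on the gazed hologram.
COMMANDS: Dict[str, Action] = {
    "make bigger": lambda h: setattr(h, "scale", h.scale * 2.0),
    "make smaller": lambda h: setattr(h, "scale", h.scale * 0.5),
    "hide": lambda h: setattr(h, "visible", False),
}


def handle_phrase(phrase: str, gazed: Optional[Hologram]) -> bool:
    """Apply a recognised phrase to the hologram under gaze; return True if handled."""
    action = COMMANDS.get(phrase.strip().lower())
    if action is None or gazed is None:
        return False  # unknown command, or the user isn't looking at anything
    action(gazed)
    return True
```

Keeping the phrases in a single table makes it easy to show users which commands are available and to add new ones without touching the dispatch logic.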

You can learn more about working with voice commands in Peter Daukintis' write-up.

Other Considerations

Your app will also need to render consistently at 60 frames per second, a common minimum frame rate for both augmented and virtual reality. Peter Daukintis explains why:

In mixed and virtual reality display technologies, in order not to break the illusion of ‘presence’, it is critical to honour the time budget from device movement to the image hitting the user's retina. This latency budget is known as ‘motion to photon’ latency and is often cited as having an upper bound of 20ms which, when exceeded, will cause the feeling of immersion to be lost, and digital content will noticeably lag behind the real world. With that in mind, making performance optimisations a central tenet of your software process for these kinds of apps will help to ensure that you don’t ‘paint yourself into a corner’ and end up with a lot of restructuring work later.
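The 60 FPS target translates directly into a per-frame time budget: 1000 ms / 60 ≈ 16.7 ms. The sketch below does that arithmetic and flags frames that overran it, given a list of frame times in milliseconds (assumed to come from whatever profiler you use; the function names are hypothetical). It also shows that a single 60 FPS frame interval is already about 83% of the 20 ms motion-to-photon figure in the quote, which leaves very little slack.

```python
from typing import List, Sequence

TARGET_FPS = 60
MOTION_TO_PHOTON_LIMIT_MS = 20.0  # the commonly cited upper bound from the quote


def frame_budget_ms(fps: float) -> float:
    """Time available to produce each frame at the given frame rate."""
    return 1000.0 / fps


def frames_over_budget(frame_times_ms: Sequence[float],
                       fps: float = TARGET_FPS) -> List[int]:
    """Indices of frames that took longer than the per-frame budget."""
    budget = frame_budget_ms(fps)
    return [i for i, t in enumerate(frame_times_ms) if t > budget]


def budget_share_of_latency_limit(fps: float = TARGET_FPS) -> float:
    """Fraction of the motion-to-photon limit consumed by one frame interval."""
    return frame_budget_ms(fps) / MOTION_TO_PHOTON_LIMIT_MS
```

For example, `frames_over_budget([12.0, 16.0, 18.5, 15.9, 21.0])` returns `[2, 4]`: only the 18.5 ms and 21.0 ms frames exceed the roughly 16.7 ms budget.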

For more on what you can do to keep your app running at 60 FPS, see Peter Daukintis' blog.

What's next?

So you know how to start developing a mixed reality experience, but want to take it further and master the details that make it more immersive, natural and believable? This talk by Lars Klint introduces new concepts and builds on existing ones to do just that. Learn how to take advantage of advanced gestures such as hold and manipulate, how to expand on voice commands, how to master developer tools such as the emulator and perception simulation, and much more. Get to grips with the development cycle and the details of the HoloLens development experience. By the end of the talk you will have the tools and knowledge to build more immersive and natural holographic experiences that take full advantage of mixed reality, rather than being merely augmented or virtual experiences.

Resources

If you want to get started on 2D apps, or already have a UWP app you want to try, you will first need an up-to-date installation of Visual Studio 2015 or later, plus the HoloLens emulator, to build, debug and deploy. You can find documentation and guidance for UWP at the Windows Dev Center.

You can find a comprehensive list of the tools needed, plus download links, on the Mixed Reality Install the Tools page.

Installing the HoloLens emulator also installs Visual Studio templates for C++ with Direct3D, and for C# with SharpDX, if you prefer lower-level coding. If you are unfamiliar with these, expect a steep learning curve. Here are some resources if that is your preferred route:

- Visual Studio 3D Starter Kit

- DirectX Samples

- DirectX Toolkit

- DirectX Graphics and Gaming Documentation

The HoloLens forums are the place to ask questions and find other developers. Here are some other fantastic resources that will help with HoloLens development:

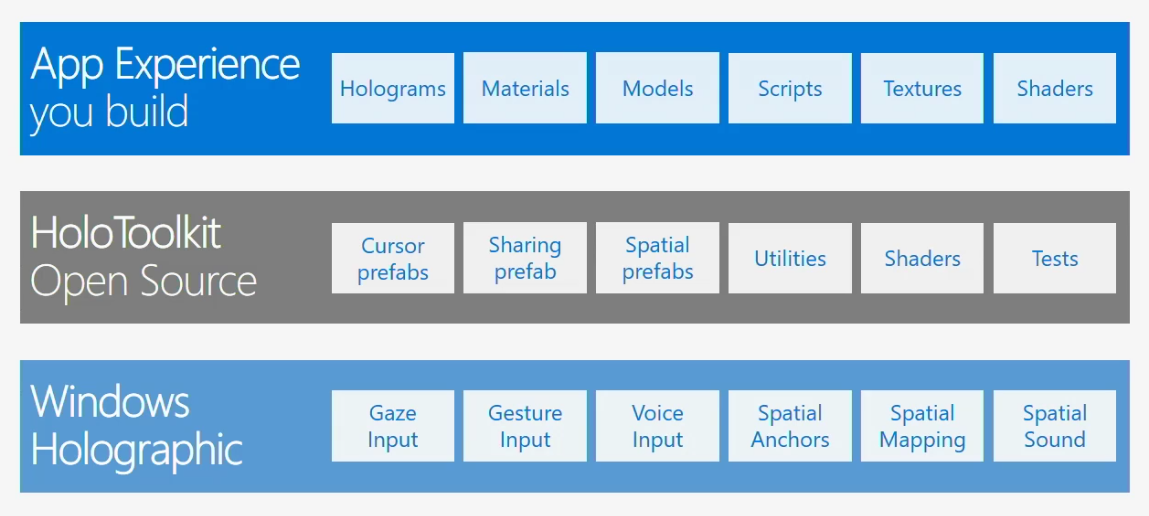

- HoloToolkit – tools which solve common dev scenarios

- HoloToolkit-Unity – same as above but Unity-compatible

- Galaxy Explorer – a fully working sample

- Holographic Academy – comprehensive tutorials

- The official HoloLens website – lots more information, including how to order a HoloLens device

We also have two technical evangelists here in the UK who work with HoloLens and blog about it regularly. Check out their blogs: