Future Decoded UK 2016 AR/VR Track

The rise of augmented, virtual and mixed reality technologies involves a huge shift for developers. Pete Daukintis provides an overview of these 'reality' technologies and where we could go next.

Every 10-15 years we see a fundamental paradigm shift in computing: we’ve seen desktop computing, then the web, then mobile. Now we are seeing the rise of artificial intelligence, cloud computing and, from a human-computer interface perspective, augmented, virtual and mixed reality technologies. To set the scene a little, the first desktop computer I owned was delivered to me as a grey box with no extras such as a web cam or microphone; you were lucky to get a maths co-processor. It probably looked something like this:

This was a machine that we needed to tell what to do; it had no communications, and most of its resident programs were stand-alone desktop applications. Gradually, as the years rolled by, these machines evolved: they gained eyes and ears in the form of web cams and microphones. With the rise of the web, they gained the ability to communicate with any other machine on the planet, and with mobile they gained a slew of other senses in the form of built-in sensors such as accelerometers and light sensors. Their brains also expanded into the cloud, and they could travel anywhere with us, becoming much more personal in the process.

Future Decoded

This growing awareness of their surroundings has continued, and some of the latest devices can understand the shape of their environment and how they are positioned within it. Alongside this, improvements in voice and gesture recognition, powered by advances in machine learning and optics, have paved the way for the rise of the different ‘realities’. Parallel advances in these technologies have enabled virtual reality experiences with true ‘presence’, in stark contrast to the previous virtual reality hype cycle of the 90s. Reflecting this, at Microsoft UK we have added a VR/AR track to Future Decoded this year.

The intention of the track is to introduce virtual, augmented and mixed reality technology along with some industry experiences from partners in the field. The hope is that attendees will leave knowing how and where to get started. Each of these technologies presents a three-dimensional world, which may leave current app developers pondering how to make the transition from 2D apps to 3D.

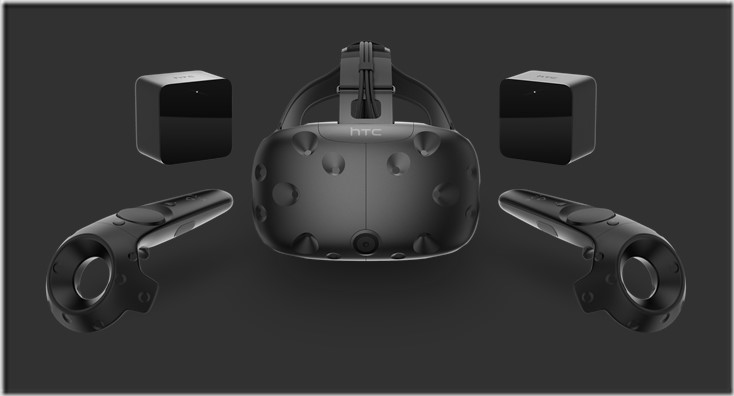

Virtual Reality

Virtual reality is the complete replacement of your environment with a digital one, including what you see, what you hear and the way you interact. There is a whole range of consumer platforms, from mobile VR to higher-end devices such as the Oculus Rift and HTC Vive, both released earlier this year. The higher-end devices are tethered by a cable to a top-end gaming PC, whilst mobile VR requires a top-end smartphone and a cardboard headset. There are also options in between, such as the Samsung Gear VR, which augments the smartphone with higher-quality sensors to provide a superior experience.

Augmented Reality

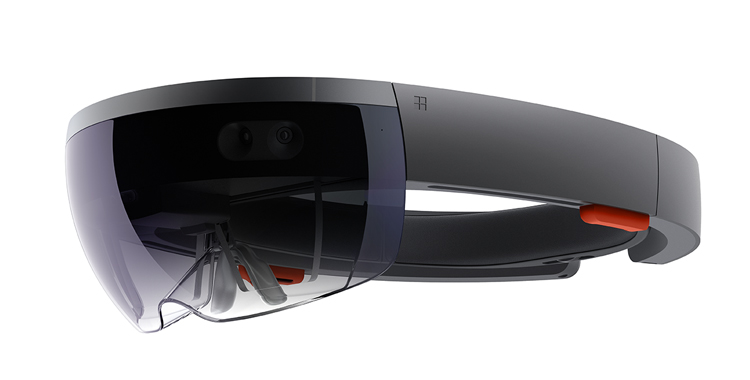

This term covers a wide range of technology, from heads-up displays such as Google Glass to smartphone apps that overlay digital content on their camera feed to give the user helpful signposting information. Pokémon GO is (arguably) an augmented reality game; it has caught the public’s imagination and really helped to raise the profile of AR. Tracking approaches range from marker-based systems, where identifiable markers placed in the real world allow the position and orientation of a virtual camera to be reverse-engineered from the marker's appearance, to markerless systems, which use sensors to achieve the same result. Markerless tracking can be seen in both Google’s Tango project and Microsoft’s HoloLens.
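To make the marker-based idea a little more concrete, here is a minimal sketch in Python of how a camera pose can be "reverse engineered" from a flat square marker. It is an illustration under stated assumptions, not how any particular AR product works: the function names are hypothetical, the camera is assumed to be a simple pinhole model with a known focal length `f` and principal point `(cx, cy)`, and the four marker corners are assumed to have already been detected in the image. It estimates the homography between the marker's plane and the image using the direct linear transform, then splits that homography into a rotation and a translation.

```python
# Hypothetical sketch of marker-based AR tracking. Names and the pinhole camera
# model (focal length f, principal point cx, cy) are illustrative assumptions.

def solve_linear(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def homography(marker_pts, image_pts):
    """Direct linear transform from 4 marker-plane points to 4 pixels, fixing h33 = 1."""
    a, b = [], []
    for (X, Y), (u, v) in zip(marker_pts, image_pts):
        a.append([X, Y, 1, 0, 0, 0, -u * X, -u * Y])
        b.append(u)
        a.append([0, 0, 0, X, Y, 1, -v * X, -v * Y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def project(H, X, Y):
    """Map a point on the marker plane (e.g. where a virtual object sits) to pixels."""
    w = H[2][0] * X + H[2][1] * Y + H[2][2]
    return ((H[0][0] * X + H[0][1] * Y + H[0][2]) / w,
            (H[1][0] * X + H[1][1] * Y + H[1][2]) / w)


def camera_pose(H, f, cx, cy):
    """Decompose H ~ K [r1 r2 t] for K = [[f, 0, cx], [0, f, cy], [0, 0, 1]]."""
    cols = []
    for j in range(3):  # apply K^-1 to each column of H
        u, v, w = H[0][j], H[1][j], H[2][j]
        cols.append([(u - cx * w) / f, (v - cy * w) / f, w])
    scale = sum(c * c for c in cols[0]) ** 0.5  # r1 must have unit length
    if cols[2][2] < 0:  # keep the marker in front of the camera (t_z > 0)
        scale = -scale
    r1, r2, t = ([c / scale for c in col] for col in cols)
    r3 = [r1[1] * r2[2] - r1[2] * r2[1],  # r3 = r1 x r2 completes the rotation
          r1[2] * r2[0] - r1[0] * r2[2],
          r1[0] * r2[1] - r1[1] * r2[0]]
    return (r1, r2, r3), t
```

For example, with `f = 800` and principal point `(320, 240)`, a 1-unit marker held 5 units straight in front of the camera appears with corners at `(240, 160)`, `(400, 160)`, `(400, 320)` and `(240, 320)`; from those four pixels the sketch maps the marker centre back to `(320, 240)` and recovers a translation of approximately `(0, 0, 5)` with an identity rotation. Real systems also detect and identify the marker, use many more points and refine the result; libraries such as OpenCV provide production versions of these steps (for example `cv2.findHomography` and `cv2.solvePnP`).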

Mixed Reality

The term ‘mixed reality’ can be traced back at least to the early 90s, when it described anything on the continuum between virtual reality and reality. Currently it seems to be used to describe a new genre of devices that mix virtual and real worlds, such as Microsoft’s HoloLens and Meta’s Meta 2.

Developer kit versions of these new devices are available, and again we see different strategies: Meta is focusing on natural interactions, while Microsoft has freed the HoloLens from the need for a tether by making it a full standalone Windows PC. Common areas for mixed reality development include education and training, construction, maintenance and product design, and some examples can be seen on the HoloLens site.

Conclusion

The move to 3D will present a challenge to an industry that arguably needs better standardisation and more accessible tooling. All of these ‘realities’ involve a huge shift for developers and user experience designers, but also a huge opportunity to get involved and shape a nascent platform. It’s intriguing to watch the industry, as each of the main players has a different view of what the future will look like and how to take the industry to the next level.

Looking ahead, it is not difficult to guess where the miniaturisation of hardware (contact lenses?) could take us: a world with no need for physical screens, because virtual replacements can go with you anywhere and be positioned and sized any way you like. The use cases for each technology are different enough to keep these streams separate, at least for the time being, but will the technology evolve to a point where both fully immersive and mixed real-and-digital experiences can be served by the same hardware?

By Pete Daukintis, Tech Evangelist, Microsoft UK