Data, no use at all without a catalog

About a decade ago I went on a Microsoft BI boot camp which was also attended by Microsoft staff, specifically Matthew Stephen and Rob Gray, who inspired me to work towards joining Microsoft myself. Two years later I got in and haven't looked back. I still follow Matt on LinkedIn, so I was very interested in his article on data lakes. I won't repeat it here other than to summarise it briefly for those who don't like clicking on hyperlinks.

Data lakes are large, heterogeneous repositories of data. The idea is that you can quickly store anything without worrying about how it is structured or stored. Contrast this with a carefully curated data warehouse, which has been specifically designed to be a high-quality, consistent store of business-critical information. Of course this means that, GB for GB, a data warehouse is much more expensive to build and maintain, but that is because every bit of data in it is directly useful and applicable to the business.

On the other hand, a data lake will contain vast amounts of less obviously useful data. For example, while the data warehouse might contain all the transactions from the online store, the data lake would store all of the logs from the site running the store. The former is immediately valuable in showing sales trends; the latter seems to be largely useless. However, if storage is nearly free (and it largely is when data is stored in the cloud), we can afford to keep everything on the off chance it might be useful. What we couldn't justify is processing the log data into our warehouse, as this would be expensive both in compute and in the human effort to make it work. Not only that, we aren't sure what we need from this kind of data or what it will be used for. Instead we just catalogue it and hang on to it until we realise we need it.

Let's take an example: the company online store. The actual sales transactions get written into the data warehouse, but we decide it might be useful to store the web logs from the site as well, so they get thrown into the data lake, typically in a folder/container with a file for each time slice/web server, and the process is just a simple file copy from the web server. A couple of weeks later something odd seems to be happening with the site, and the cyber security firm we engage asks to see our logs, so we give them access to our data lake (we archive the logs off the live site to keep the servers nice and lean). They use their tools to analyse the logs and advise on a mitigation strategy. A few months later, analysis of the data warehouse shows that the number of new customers being acquired is dropping despite discounts and promotions being in place. The suspicion is that the culprit might be the new customer sign-up experience, and a design agency is called in to conduct click stream analysis over the stored web logs to confirm this.
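To make the "file for each time slice/web server" layout concrete, here is a minimal sketch of how such a landing path might be built. The container name `weblogs`, the hourly slice and the `<container>/<yyyy>/<mm>/<dd>/<hh>/<server>.log` pattern are all hypothetical choices for illustration, not an Azure Data Lake convention.

```python
from datetime import datetime, timezone


def lake_path(server: str, slice_start: datetime, container: str = "weblogs") -> str:
    """Build a hypothetical data lake path for one web server's log file.

    Assumed layout: <container>/<yyyy>/<mm>/<dd>/<hh>/<server>.log,
    i.e. one folder per hourly time slice and one file per web server.
    The raw file is copied unchanged; nothing is parsed on arrival.
    """
    # Normalise to UTC so slices from servers in different time zones line up.
    ts = slice_start.astimezone(timezone.utc)
    return f"{container}/{ts:%Y/%m/%d/%H}/{server}.log"


if __name__ == "__main__":
    print(lake_path("web01", datetime(2016, 3, 14, 9, 30, tzinfo=timezone.utc)))
```

The point of the design is that the path only encodes *when* and *where* the data came from; decisions about what the log lines mean are deferred until someone (the security firm, the design agency) actually needs them.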

A couple of points come out of this.

- The Data Warehouse is still needed for the reporting & analytics that we have always done.

- The use of the logs wasn't known when the data was stored, and this data might never have been used at all. So trying to second-guess how to store it at the time of arrival would be difficult at best and could be pointless.

- What is important is to catalogue what is stored in the lake and its structure, as well as how it relates to other data, whether that is in the data lake or in the data warehouse.
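As a rough illustration of what "catalogue what is stored, its structure and how it relates to other data" could mean in practice, here is a minimal sketch of a catalog entry. The class names, fields and the `FactSales` table are hypothetical; this is not the Azure Data Catalog object model or API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Relationship:
    """A hypothetical link from one catalogued asset to another."""
    target: str     # name of the related asset (lake folder or warehouse table)
    join_on: str    # column or field used to join the two
    note: str = ""  # free-text knowledge shared by whoever found the link


@dataclass
class CatalogEntry:
    """What a catalog might record about one data asset (illustrative only)."""
    name: str
    location: str               # e.g. a lake folder or a warehouse table
    schema: dict[str, str]      # field name -> type, as far as it is known
    description: str = ""
    related: list[Relationship] = field(default_factory=list)


class Catalog:
    """An in-memory stand-in for a shared catalog service."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        self._entries[entry.name] = entry

    def search(self, term: str) -> list[str]:
        """Return names of entries whose name, description or field names mention term."""
        term = term.lower()
        hits = []
        for entry in self._entries.values():
            text = " ".join([entry.name, entry.description, *entry.schema]).lower()
            if term in text:
                hits.append(entry.name)
        return hits

    def related_to(self, name: str) -> list[str]:
        """Return the names of assets that the given entry is known to join to."""
        return [r.target for r in self._entries[name].related]


if __name__ == "__main__":
    catalog = Catalog()
    catalog.register(CatalogEntry(
        name="web_logs",
        location="weblogs/",
        schema={"timestamp": "datetime", "url": "string", "customer_id": "string"},
        description="Raw web server logs from the online store",
        related=[Relationship("FactSales", "customer_id",
                              "join to see whether sign-up visits lead to orders")],
    ))
    catalog.register(CatalogEntry(
        name="FactSales",
        location="dw.FactSales",
        schema={"customer_id": "int", "amount": "decimal"},
        description="Sales transactions in the data warehouse",
    ))
    print(catalog.search("customer_id"))
    print(catalog.related_to("web_logs"))
```

The `related` list is where the shared knowledge lives: the lake entry and the warehouse table are catalogued side by side, so whoever needs the logs later can find both the files and how to join them to the sales data.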

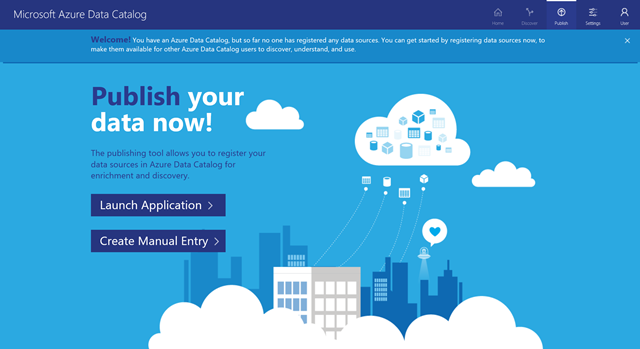

So in my opinion the term data lake is not a great description of what it does (how about a data museum?), and without understanding what is in there it is not a lot of use. That is why a key companion to Azure Data Lake is the new Azure Data Catalog.

This new freemium service is a bit like Master Data Services in SQL Server, but because it's online in Azure it's accessible and available by default. However, it also leverages and requires Azure Active Directory¹, so that catalogs are protected while still enabling teams inside an organisation to collaborate.

If it is used as intended, then not only do the data professionals record what is going into the data lake AND the data warehouse, but everyone shares their knowledge about how the data is used and how to join the dots to do specific analytics.

So I would suggest two things: start looking at the principles of master data management in general, and think about how these might play out in your organisation by having a look at Azure Data Catalog.

1. This means that if you have an MSDN subscription linked to a personal account, you may not be able to sign up to try this service. However, you can set up Azure Active Directory inside your subscription and then make a domain account a member of your subscription (a topic for another post).