Azure Data Services for Business Intelligence

A little known fact the biggest workload on Azure VM’s (aka IaaS) is .. SQL Server. This might seem odd give that there is a SQL Azure service where you can run SQL Server database without worrying about SQL Server itself. So what’s going on here?

There are a few possible options:

- These VMs might be running older versions of SQL Server as a way of offloading legacy apps to the cloud

- SQL Server is much more than a database engine and these VM’s might be running the other components that come with its such as Integration Service , Reporting Services ,Analysis Services, Master Data Management or Stream Insight.

- The databases the VM is running might not be compatible with SQL Azure

- SQL Azure can seem expensive and this is because it is essentially SQL Server in an Always On mode: Transactions are written to two back up servers in the same Azure data centre and all three server are replicated to the other European data centre.

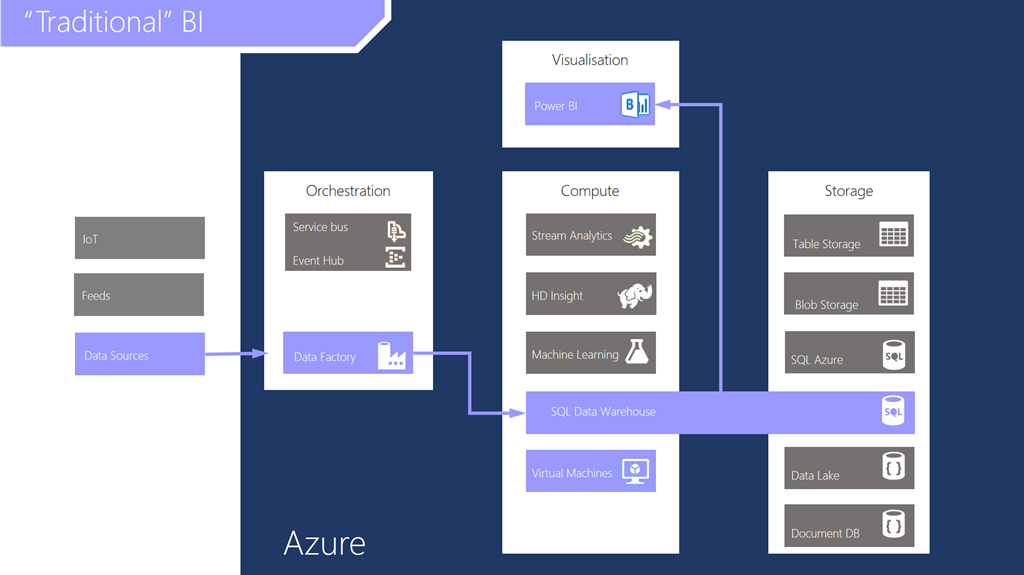

What’s interesting for me is that whichever scenario I think of it does mean that data and databases are being stored on Azure in some shape or form which is a good thing provided that it is properly protected in the way that any SQL Server instance that’s connected to them internet. However what I an interested in in this post is the use of Azure to do “traditional” business intelligence (BI). By this I mean data marts / warehouses reporting and analytics. Note If this data is simply persisted virtual hard disks attached to VMs then ultimately that ends up in azure blob storage.

If we accept concerns about where data is homed then BI s actually quite a good fit for cloud if the visualization tools are close to where the data is…

Note that since my last post two new data services have been announced for Azure – Data Lake and Data Warehouse. The Data Warehouse is a good example of how the cloud changes as it will allow the compute resources used to run queries to be scaled to meet demand while the storage aspect will be relatively cheap. the Data Lake is essentially a place to drop large amounts of data that you might want to use in future but aren’t sure what that use might be when you throw the data in the lake.

Wherever the data persists for a data mart/warehouse, we’ll need to have a process in place to update and refresh it. Back in my day this might have been SQL Server Integration Services (SSIS), Informatica etc. so how does the cloud change this? The simplest approach might be to move this processing to a VM in the cloud the upside being that we can be clever about when batch processing occurs (like the overnight warehouse refresh) and only pay for what we use. However we may still need to think about designing in redundancy to ensure our processing survives any failure. There is another way and that is to use the equivalent service in Azure, Data Factory. At the time of writing this is still very new and works in a very different way to SSIS etc. in that it is primarily about workflow and talks to sources and targets in their native languages and doesn’t have too many type of connectors.

With data visualization there are a couple of choices in the cloud either Power BI or spinning up your preferred tools in Azure RemoteApp. RemoteApp is essentially remote desktop services in the cloud using either a pre-built image with Office on it or supplying your own image with what ever tools you prefer to both build or consume analytical models. RemoteApp might also solve the problem of providing BI on the move away form the office as there are free RemoteApp clients for Android IOS and OS-X. I like this solution as data only persists in the cloud under control and doesn’t leak out onto local devices which also means it is fast as it’s only RDP traffic going between device and cloud.

Finally please be aware that so far I have just mentioned Microsoft’s BI tools here, but all of this could apply to Tableau over SQL Server, Oracle based BI, IBM Cognos and so on as any of these will also be fine in Azure assuming the vendors have sorted out how these tools are licensed when running in the cloud.