Open for business–Data Science at Microsoft

The word Open in IT is an emotive subject, and not a word typically associated with Microsoft. But like a spring thaw under an ice sheet, what seems rigid suddenly becomes fluid. Nowhere is this more obvious than in the various data-related services that exist on Azure.

Before I get to what those things are, let me explain why this matters. Despite what Ed, Susan Paul and Planky on my team may think, it’s all about the data and what we do with it, and so having it locked away in some arcane, unreachable system is no good at all. What we need to be able to do is query it and mash it together with other stuff to do useful work, using whatever tools we want, irrespective of where it is, assuming we have permission to use it for its intended purpose. If we are looking to do good old-fashioned reporting then SQL of some form is as good as anything for that. If it’s multidimensional then hopefully our end-user tools can insulate us from MDX when we access an OLAP cube. If we want to do big data then we might go back to SQL (with HiveQL) or we might want to stay with what we know and use Python. On the other hand, R is a very popular and open statistical language that also has its following. In all cases the choice of tool shouldn’t really matter; what matters is the data and the result we want from it.
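To make that last point concrete, here is a minimal sketch that answers the same reporting question twice, once with SQL and once with plain Python. The sample rows, the `sales` table and the function names are all invented for illustration, and SQLite stands in for whatever SQL engine you actually use.

```python
import sqlite3
from collections import defaultdict

# Hypothetical sample data: (region, amount) rows, invented for illustration.
SALES = [("North", 120.0), ("South", 80.0), ("North", 30.0), ("South", 45.5)]


def totals_with_sql(rows):
    """Total sales per region using SQL against an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
        conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
        cursor = conn.execute(
            "SELECT region, SUM(amount) FROM sales GROUP BY region"
        )
        return dict(cursor.fetchall())
    finally:
        conn.close()


def totals_with_python(rows):
    """Total sales per region using nothing but a dictionary and a loop."""
    totals = defaultdict(float)
    for region, amount in rows:
        totals[region] += amount
    return dict(totals)


if __name__ == "__main__":
    print(totals_with_sql(SALES))
    print(totals_with_python(SALES))
```

Both functions return the same totals per region, which is the point: the tool is a matter of taste, the data and the answer are what count.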

So how does this play out in Microsoft generally, and specifically on Azure?

Firstly, there’s a huge gallery of VMs on VM Depot that you can spin up on Azure.

For example, I recently did a tutorial on H2O, an R-based machine learning tool over Hadoop. To do this, all I had to do was export my Azure subscription settings into this tool and select the relevant image, and the VM was deployed, complete with its tutorial, in 10 minutes, ready for me to use. The same is true of other big data solutions like Cloudera, plus a load I haven’t heard of (I have enough trouble keeping up with Azure!).

However, spinning up lots of VMs and orchestrating them into a cluster can be heavy going, especially if you are the data guy and just want to run a MapReduce job to see what the answer is. So a better, if less flexible, way is to simply make use of HDInsight, which is nothing more than Hadoop on Azure, with the difference that you can spin up a multi-server cluster on demand in a couple of clicks. Currently HDInsight is based on Hadoop running on Windows, but HDInsight based on Hadoop over Linux has just been announced as a preview.

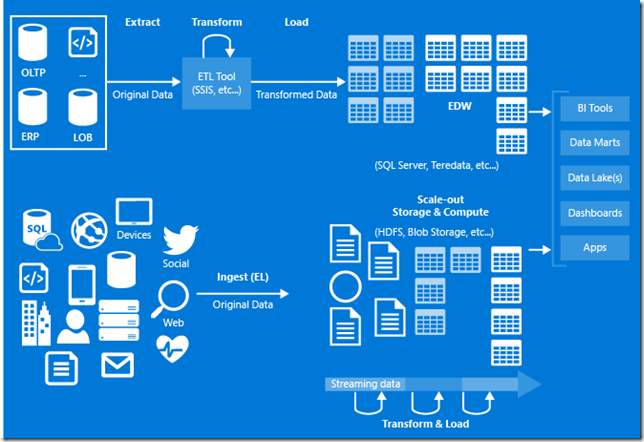
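If you haven’t met a map reduce job before, the shape of one can be sketched in a few lines of plain Python. This is only an illustration of the map, shuffle and reduce phases on a word count; it runs on one machine, uses no Hadoop or HDInsight API, and the function names are my own.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one line of input."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Group emitted values by key, as the framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Collapse all the values for one key into a single count."""
    return key, sum(values)


def word_count(lines):
    """Run the three phases in sequence over an iterable of text lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle_phase(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    sample = ["open data open tools", "data on Azure"]
    print(word_count(sample))
```

On a real cluster the map and reduce steps run in parallel across many servers and the framework handles the shuffle, which is exactly the orchestration you don’t want to build by hand.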

While having Hadoop on demand is great, it’s not a lot of use without data, so how do you get data into it? You could have an on-premises workbench VM loaded with your usual tools, or have a similar setup in a cloud-based VM, but there is another way, and that is Azure Data Factory.

While this might look a bit like SQL Server’s Integration Services tool or Informatica, it is different in that it uses the language of the source or target to get work done rather than having an internal scripting engine. This makes sense because the options for connecting sources to targets are much more varied than what Integration Services was designed for, so it’s going to use Pig and Hive to move data around in HDInsight but will use stored procedures if SQL Server is involved. At the moment the list of connectors is quite small (files, HDInsight, Azure Machine Learning and SQL Server) but this will change as it evolves (so please give feedback on what you’d like to connect to it).

The jewel in the crown of the Azure data platform is Azure Machine Learning (MAML).

The new MAML gallery showing the art of the possible

MAML can not only consume your data from a variety of sources but also enables you to bring your own algorithm in R and Python if you aren’t impressed with the vast array of canned modules that are included. Once you have made your model, it can be published as a web service, and sample code in Python and C# is included to make that really easy. I think we’ll see more of R in other parts of the Microsoft data platform now that Revolution Analytics has been acquired, so this is a space to watch.

Finally, all of this openness can be a bit disconcerting for Microsoft fans. For example, where is the F# support in Azure ML, given that F# is also a product of Microsoft Research (actually here in the UK) and that it is brilliant at mashing data and functions? Bizarrely, F# is now an open language, in that .NET underneath it is now open source and the direction of F# is now set independently of Microsoft at FSharp.org, so supporting it might seem even more logical. But the Open for Business strategy is more than just latching on to every open source thing that’s out there. It’s about being responsive to business needs. F# is very powerful and beloved of those who use it, but it doesn’t have the wide-scale adoption of, say, Python, and if businesses want to run on Azure they need the stuff that they actually use to be supported.

If this all sounds interesting then there are several events I’ll be at in the next few months where we can discuss it:

- SQL Bits 3-56 March Excel Centre London

- the Data Culture series every month in a different city

- the UK versions of SQL Saturday again at various locations

Hopefully I’ll see you there.