Making Big Data Valuable Data

By Ryan Simpson, Parallel Data Warehouse Technical Solution Professional at Microsoft

Data without analytics is simply that, it's just data. In this new 'Big Data' world, the value of data lies beyond what the individual predetermined structure and content tells you, and in what you are able to infer by bringing different sources of data together with someone who understands the meaning of the data in order to bring new insights to the business: Cue the Data Analyst.

How are businesses getting new insights out of their data beyond the classically structured or categorised information they've had access to before now? In a recent computer weekly article Alan Grogan of RBS explains how he is using payment information to build a map of his customers own supply chain. This helps them identify potential risks to their business from worldwide events that either directly, or indirectly impact their supply chain. Imagine if RBS had highlighted to you, your dependency on a supplier who procured hardware from Thailand ahead of the catastrophic flooding in Thailand in 2011, which resulted in a shortage of hard-drives worldwide.

Modelling the supply chain is a useful analogy in understanding the participants involved in ultimately delivering insights from the data. We could consider the delivery of insights as the output of analytics and model that as a supply chain between the data platform and the business, we can map the various participants in this delivery of analytics as:

The delivery required of each role, as we move from left to right change from getting the best out of the platform to accurately exploring the business. The risk to the best possible insight is anything that impacts the final delivery of any analytics. We can assume that with fewer impacts we are getting the most analytics possible.

To explore this mapping of deliveries from left to right, and what potential impacts are on the 'supply chain' that delivers these insights, let’s take a fairly simple but typical scenario: loading a large flat file to run ad-hoc queries against, and look at that from the perspectives of the Dev\DBA and the Analyst and how they might spend their time or ‘cycles’. This is a fresh data load, we don't yet know the value of the data, so we're just loading the data to develop some analytic queries over it. I've laid out the steps as you might have to consider them, rather than the steps you might execute them. We'll assume the target platform is SQL 2014, so clustered column store is available to us from a platform choice, and there's some reference or CRM data available to join on.

Task |

Dev\DBA |

Analyst |

Load Data |

File appears large enough to require some optimised loading process

|

|

Develop analytic queries |

|

|

I've deliberately made the example activities here to highlight where each role is spending their cycles. The Dev/DBA is spending their cycles developing using the features of the platform getting ready for the analytics. They're taking into account the physical configuration of the platform and how best to utilise it, and, as this is a shared platform, they're also considering the impact on other users. The Analyst focus their cycles on the analytics, and, with limited knowledge about the physical platform are using the fewest cycles to get to the point they can start analysing. They don't have the same responsibility to the platform, they just have a responsibility to get answers. Now obviously, there are analysts and dev/dba's who cover both sides of this, the point is; cycles spent on either side of this relationship are not spent on the other, which will reduce the total output, or increase the time to get to the same output.

This highlights potential friction in our 'supply chain' between data and business insights: getting hold of, and processing lots of data requires physical storage and compute power. In many organisations that responsibility lies with the IT Organisation, who have in turn optimised their own supply chain to efficiently purchase and manage and present these resources to the Dev\DBA, who then configures the software as we have above. However, this supply chain must fit in with purchasing and deployment cycles and be shared across the whole organisation. Ultimately it is this friction combined with tailoring to the specifics of the platform that has led to the statistic that '50% of an Analysts time is spent loading and preparing data'. In addition to this the IT Organisation also has to ensure the business is meeting the right levels of governance, by ensuring data is appropriately protected and secure. Alan Grogan articulates his experience of this friction on zdnet, explaining how he has enabled his analytics team using Parallel Data Warehouse 2012.

On April 15th Microsoft announced the 'Analytics Platform System' (APS) as the new name for SQL Server 2012 Parallel Data Warehouse. The new name is no coincidence and is more than just a name change from its former 'Parallel Data Warehouse'.

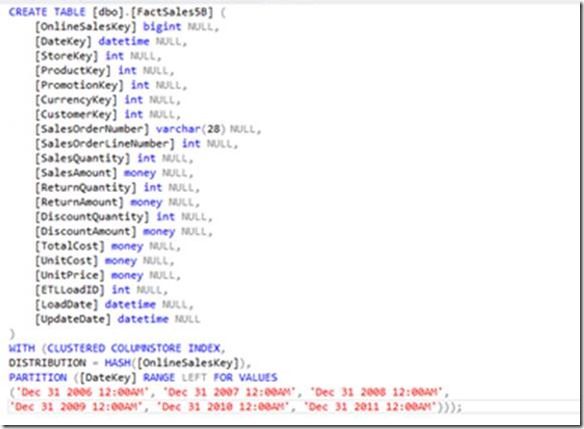

APS accelerates time to insights by automating the delivery key area, removing the friction between the platform and the analytics. It is purchased as an appliance, with hardware built and configured by HP or Dell to an exact architecture developed jointly between Microsoft and each of these vendors. The Software is developed, installed, and configured by Microsoft, with a single line of support for both hardware and software also led by Microsoft. The Massively Parallel Processing (MPP) engine which runs over SQL Server automatically takes care of the platform optimisation cycles detailed in our task table above. There are no hardware specific details surfaced to the Analyst or Developer so the following DDL is all that is required to create a column store table that is partitioned by date and correctly laid out on its own file groups and aligned to the optimised storage arrays in the appliance:

When your DDL is this concise, any changes to the data model become significantly easier as there is no dependency on the physical configuration. If we need to scale the solution out, APS has a 'add node' function - a scale unit, which consists of additional SQL Servers and Storage is added to the appliance, and the 'add node' command run to redistribute the data across the new hardware to allow us to take advantage of the additional power. That's simply it, no other changes to the solution. As an MPP appliance, doubling the size of the appliance will double the performance, and as hardware and its configuration is managed the requirements on the IT organisation can be clearly defined and consistent even though the analytic demands will be constantly changing.

APS has a parallel loading architecture, so our analyst can construct a simple SSIS load package to get the data in, and APS will automatically load the data in an optimised fashion ready for ad-hoc analytic style queries, and it will automatically manage the resources across the appliance to ensure that other queries and data loads can be satisfied whilst this data load is running.

There is recognition across many vendors that data in Hadoop can be hugely valuable, however for many organisations the skills required to access the data and manage a cluster presents a barrier to adoption. APS makes interaction with data stored in Hadoop seamless to analysts in two ways: The first is via Polybase. Polybase allows us to express external tables that map to data in a HDFS cluster so that it can be queried directly from, and be joined to data in APS using T-SQL, so no combination of HiveQL and Jscript functions, just T-SQL. APS collects statistics on this external data, and will generate a Map reduce job if it decides this provides more efficient access to the data. The second way is the options available to an organisation for hosting a Hadoop cluster. APS can have a dedicated, in-appliance HDInsight region, again, installed and support by Microsoft. APS can also be configured to talk to an external Hadoop cluster. That external cluster could also be a HDFS store in Windows Azure. As with the DDL, to enable connectivity it's simply a case of running two commands: sp_configure 'hadoop connectivity', and 'CREATE EXTERNAL DATA SOURCE'. Hadoop connectivity is also by-directional, analysts can query from and push data out to Hadoop with just T-SQL and without having to learn new languages.

APS makes BigData valuable data by allowing a business to focus their time on developing insights from that data, without introducing limits to scale or new skills to learn, on a platform that fits into many existing organisational structures. RBS have detailed their use of Parallel Data Warehouse in their Microsoft case study.

Additional links: