Server Market in Decline - 6 potential reasons why.

I saw an article a few weeks ago in Information Week discussing the slowdown in server purchasing. It went on to decry the lack of innovation, but actually this trend could be down to any of these factors:

Consolidation is up – the number of VMs running on a given server is higher than it's ever been, typically over 10 VMs per host. That is down to two things: the servers can handle the workload because they have more memory, cores and connectivity than they did before, and the IT industry has embraced virtualization wholesale. Both of those represent innovation, be that higher spec CPUs with things like NUMA and advanced virtualization support, or hypervisors that support that technology and pass that power through to VMs with little overhead. This all means one of those higher spec servers could well replace two or three older units, even if the older servers were virtualized already. So hopefully those horror stories about floor loading being exceeded by the sheer weight of servers, or having to wait for more power from the local utility company, are less common, though we could still all use some more connectivity!
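To put rough numbers on that consolidation point, here is a minimal sketch of the arithmetic. The VM count and the older density of 4 VMs per host are made-up figures for illustration only; the newer density of 12 simply stands in for "over 10 VMs per host".

```python
import math


def hosts_needed(total_vms: int, vms_per_host: int) -> int:
    """Number of hosts required to run total_vms at a given density."""
    return math.ceil(total_vms / vms_per_host)


# Hypothetical estate: 60 VMs to place.
total_vms = 60
old_hosts = hosts_needed(total_vms, vms_per_host=4)   # assumed older density
new_hosts = hosts_needed(total_vms, vms_per_host=12)  # "over 10 VMs per host"

print(f"older servers: {old_hosts}, newer servers: {new_hosts}")
print(f"each newer server replaces {old_hosts / new_hosts:.0f} older ones")
```

With these assumed figures each new host stands in for three old ones, which is the sort of ratio that takes purchases out of the market without any drop in workload.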

There’s no Mid-Life Crisis. What will often stop additional workloads, say an eleventh or twelfth VM, running on a server is not the CPU or the hypervisor; it will be memory and connectivity. Most servers are not purchased fully loaded with network cards (NICs) or memory but do have the latest CPUs, so it makes perfect sense to upgrade these servers later, rather than replace them, to get a better balance of resources. The initial purchase rightly targets the CPU as the component that is harder to swap out, with money spent on peripherals like memory and NICs later as their prices drop. One thing I like about NIC teaming in Windows Server here is that disparate NICs can be teamed even if they are from different vendors (though it’s not a good idea to team NICs of different speeds!).
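A quick way to see which resource blocks that eleventh VM is to work out how many identical VMs each resource can carry and take the smallest. This is only a sketch: the host and VM sizes are hypothetical, the names `Resources` and `vm_capacity` are mine, and it ignores hypervisor overhead and CPU overcommit.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Resources:
    """Capacity of a host, or demand of one VM (all figures hypothetical)."""
    cpu_cores: int
    memory_gb: int
    network_mbps: int


def vm_capacity(host: Resources, vm: Resources) -> Tuple[int, str]:
    """Return how many identical VMs fit and which resource runs out first."""
    fits = {
        "cpu": host.cpu_cores // vm.cpu_cores,
        "memory": host.memory_gb // vm.memory_gb,
        "network": host.network_mbps // vm.network_mbps,
    }
    bottleneck = min(fits, key=fits.get)
    return fits[bottleneck], bottleneck


# Assumed per-VM demand and a host bought with plenty of CPU
# but only part-populated memory and NICs.
vm = Resources(cpu_cores=2, memory_gb=6, network_mbps=150)
host = Resources(cpu_cores=32, memory_gb=64, network_mbps=2000)
print(vm_capacity(host, vm))

# Mid-life upgrade: double the memory, leave everything else alone.
upgraded = Resources(cpu_cores=32, memory_gb=128, network_mbps=2000)
print(vm_capacity(upgraded, vm))
```

In this made-up case memory is the first limit, and once it is doubled the network becomes the next one, while the CPUs still have headroom. That is the pattern that makes a memory and NIC upgrade cheaper than a new box.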

Storage? Many critical workloads rely on shared storage like SANs, so as storage needs expand more storage will be put into these, and there will also be investment in better connectivity from the storage to the servers, but again no new servers. Deduplication, whether that’s built into the OS as in Windows Server 2012/R2 or part of the storage solution, has helped to slow demand for storage, but data volumes are only growing, so it’s good to know that with most storage solutions it’s possible to swap out drives for larger ones without throwing away the whole solution. Innovation in storage is also evident in the numerous SSD and hybrid solutions available, and that technology is being embraced in all sorts of imaginative ways.

Cloud. At least one workload, e-mail, has been steadily moving to the cloud if the take-off of Office 365 is anything to go by. The result is that servers and storage are not being acquired for extra e-mail capacity, that existing servers are being repurposed for other uses, or a combination of the two. Other workloads are off to the cloud too, like remote desktops and internet facing solutions, even if an organisation doesn’t move everything out of its own datacentres.

Windows Server OS. Back when I was young, every new server OS or application had higher and higher base specifications to run it. However, the memory and CPU requirements for Windows haven’t really changed for a decade, and in some cases have, if anything, decreased. I am thinking here about the wider use of Server Core as an installation option for Windows Server.

I can’t use the new technology. If you have applications or hardware that can’t use the new technology in a new server, that could also block new server purchases. Not every application is multi-threaded or runs on x64, and even if it does it might not be any faster, and speed is why you would want to upgrade in the first place. Virtualisation should help here, but not every environment can use it; for example, there are often questions about licensing and support in a virtual world.

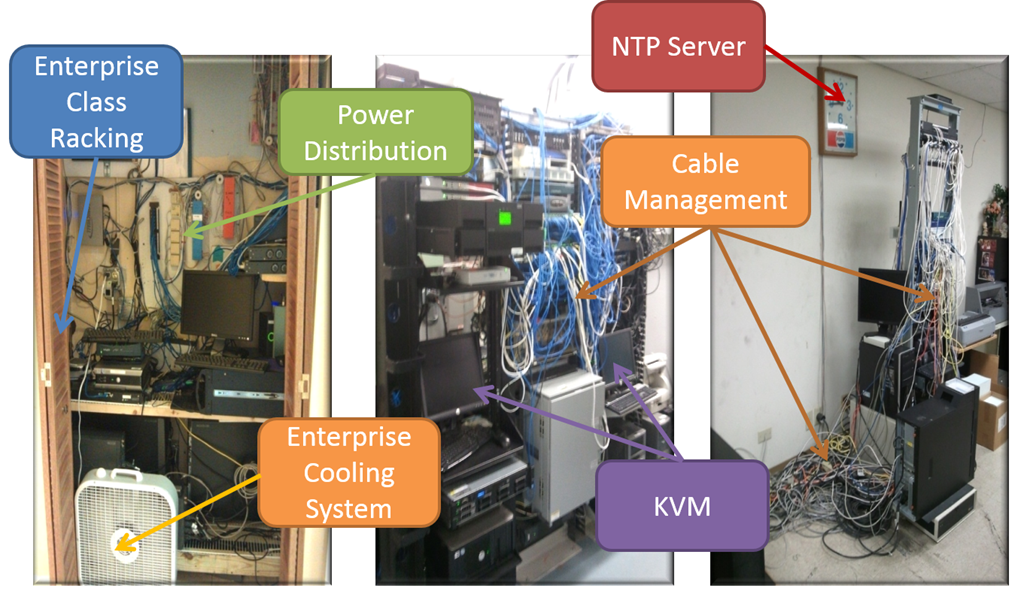

However, server innovation still continues, so when a new server is needed it will have the latest hardware to support an even better virtualisation story, such as network cards that support virtualization (SR-IOV) and storage communications (SMB Direct using RDMA on the latest NICs). New server designs also allow for new ways of doing things; for example, in a small business you might have had this..

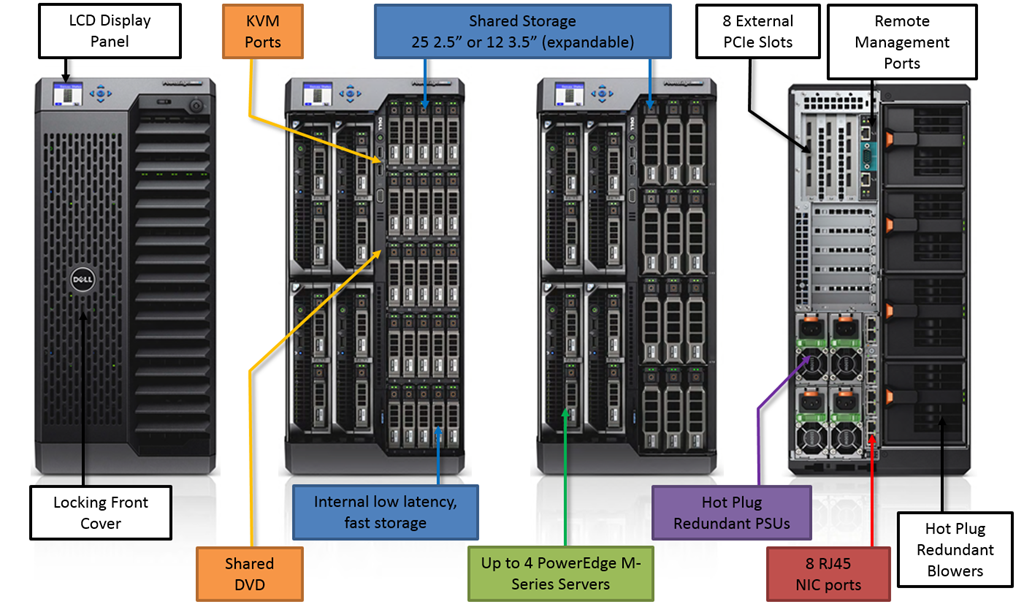

where you could do the same thing with this, the Dell VRTX tower..

I say tower because on first inspection it looks a bit like my gaming rig, but as you can see this monster has got up to four separate servers in there, plus shared storage with RAID controllers, multiple NICs and redundant power supplies. This means we can deliver a departmental cluster in a box which can sit under a desk in a school, store or any branch office, and that didn’t really exist before.

So yes, the server slowdown is happening for a variety of reasons, but the workloads and useful data volumes in data centres are still on the increase. This means we as IT Professionals still have more and more stuff to manage; it’s just that there might be a few fewer boxes with flashing lights in darkened rooms as part of the mix.