Lab Ops part 9 - an Introduction to Failover Clustering

For many of us Failover Clustering in Windows still seems to be a black art, so I thought it might be good to show how to do some of the basics in a lab and show off a few of the new clustering features of Windows Server 2012 R2 in the process.

Firstly, what is a cluster? It’s simply a way of getting two or more servers in a group to provide some sort of service that will continue to work in some form if one of the servers in that group fails. For this to work, the cluster gets its own entry in Active Directory and in DNS so the service it’s running can be discovered and managed. Just to be clear, all the individual servers in a cluster (known as cluster nodes) must be in the same domain.
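Because every node must be in the same domain, it can be worth checking that before building anything. Here is a minimal sketch, assuming you can reach each server over CIM/WinRM from your desktop; `Test-SameDomain` is a hypothetical helper name, not a built-in cmdlet.

```powershell
# Sketch: check that every prospective cluster node is joined to the same AD domain.
# Assumes CIM/WinRM access to each node; Test-SameDomain is a hypothetical helper.
function Test-SameDomain {
    param([Parameter(Mandatory)][string[]]$ComputerName)

    # Win32_ComputerSystem reports each machine's domain and whether it is domain joined
    $systems = foreach ($node in $ComputerName) {
        Get-CimInstance -ClassName Win32_ComputerSystem -ComputerName $node
    }

    $notJoined = @($systems | Where-Object { -not $_.PartOfDomain })
    if ($notJoined.Count -gt 0) {
        Write-Warning ("Not joined to a domain: " + ($notJoined.Name -join ", "))
        return $false
    }

    # True only when every node reported the same domain name
    return @($systems.Domain | Sort-Object -Unique).Count -eq 1
}
```

For the lab later in this post, `Test-SameDomain -ComputerName FileServer2, FileServer3` should return True once both VMs are domain joined.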

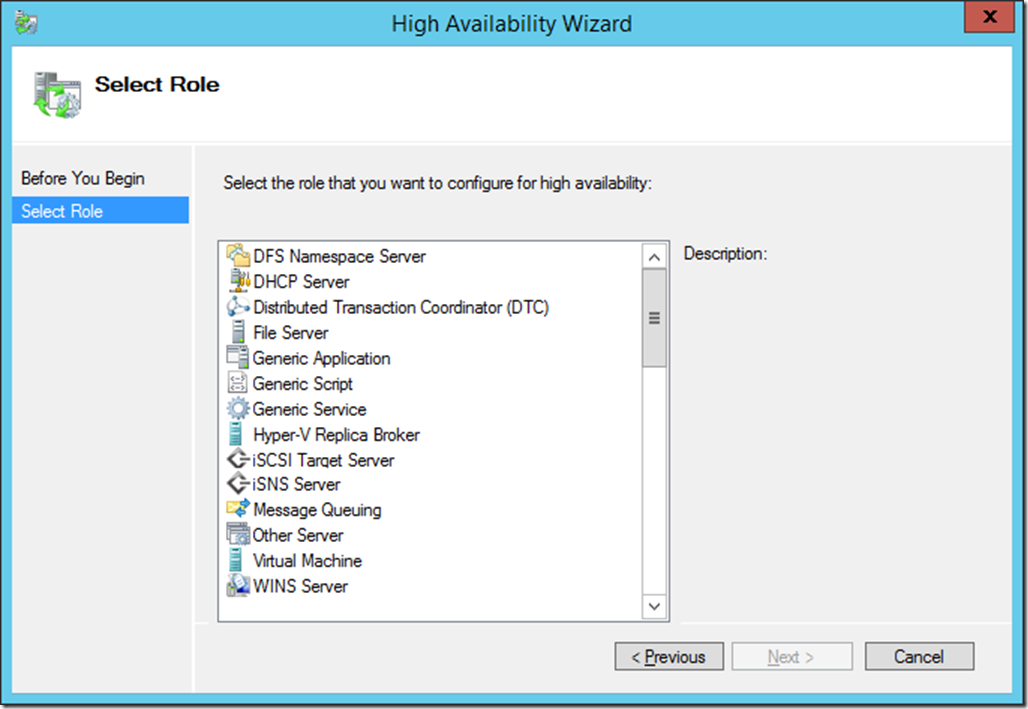

So what sort of services can you run on a cluster? In Windows Server 2012 R2 that list looks like this:

Note: Other roles from other products like SQL Server can also be added in. Notice too that virtual machines (VMs) are listed here and running them in a cluster is how you make them highly available.

All of these roles but one can be run from guest clusters, that is, clusters built from VMs rather than physical servers. It is also possible to combine physical hosts and VMs in the same cluster; it’s all Windows Server after all. The exception is making VMs highly available: for that the cluster must contain only physical hosts, and this is known as a host or physical cluster.

Making a simple cluster is easy: it’s just a question of installing the Failover Clustering feature on each node and then joining them to a cluster. When you add the feature you can also add Failover Cluster Manager, but if you have been following this series you’ll already have that on your desktop, as the point of Lab Ops is to manage remotely where possible and to make use of PowerShell. So in my example I am going to create 2 x VMs (FileServer2 & 3) from my HA FileServerCluster Setup local.ps1 script, which adds in the clustering feature (from this xml file).
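Installing the feature can also be done remotely from your desktop rather than through an answer file. A minimal sketch, assuming the nodes are already running and reachable over WinRM; `Install-ClusterFeature` is a hypothetical wrapper name, while `Install-WindowsFeature` and the `Failover-Clustering` feature name are the standard ones.

```powershell
# Sketch: install Failover Clustering plus its management tools (which include the
# FailoverClusters PowerShell module) on each node, run from a management machine.
# Install-ClusterFeature is a hypothetical wrapper name.
function Install-ClusterFeature {
    param([Parameter(Mandatory)][string[]]$ComputerName)

    foreach ($node in $ComputerName) {
        Install-WindowsFeature -Name Failover-Clustering -IncludeManagementTools -ComputerName $node
    }
}
```

For this lab that would be `Install-ClusterFeature -ComputerName FileServer2, FileServer3`.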

Note: my SSD drive where I store my VMs is E:, so you will need to edit my script if you want to follow along.

Having run that, I could then simply run a single line of PowerShell on one of those servers to create a cluster:

New-Cluster -Name HACluster -Node FileServer2,FileServer3 -NoStorage -StaticAddress 192.168.10.30
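It can help to validate the nodes before running that line and to confirm afterwards what the cluster registered. This sketch wraps the same `New-Cluster` call in a hypothetical `New-LabCluster` function, with the names and address from this post as defaults.

```powershell
# Sketch: validate, create, then check the new cluster. New-LabCluster is a hypothetical name.
function New-LabCluster {
    param(
        [string]$Name = "HACluster",
        [string[]]$Node = @("FileServer2", "FileServer3"),
        [string]$StaticAddress = "192.168.10.30"
    )

    # Run the cluster validation tests first; they write an HTML validation report
    Test-Cluster -Node $Node

    # The same command as above: a two-node cluster with no storage added yet
    New-Cluster -Name $Name -Node $Node -NoStorage -StaticAddress $StaticAddress

    # The cluster name should now resolve in DNS ...
    Resolve-DnsName -Name $Name

    # ... and both nodes should report a State of Up
    Get-ClusterNode -Cluster $Name | Select-Object Name, State
}
```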

Note the -NoStorage switch. This cluster has just got my two nodes in it and that’s it. For some clustering roles, such as SQL Server 2012 AlwaysOn, this is OK, but most roles that you put into a cluster will need access to shared storage, and historically this has meant connecting the nodes to a SAN via iSCSI or Fibre Channel. This applies to both host and guest clusters, but for the latter the guest VMs need direct access to that shared storage. That can cause problems in a multi-tenancy setup like a hoster, as the physical storage has to be opened up to the VMs for this to work, and even if you don’t work for a hoster this will at least cut across the separation of duties in many modern IT departments.

There’s another problem with this cluster: it has an even number of objects in it. If one of the nodes fails, then when it restarts it may think it “owns” the cluster while the other node already has that ownership, and problems will occur. So for most situations we want an odd number of objects in our cluster, and the “side” of the cluster that still has the majority after a node failure will own the cluster. This democratic approach to clustering means that no single node is in charge of the cluster, and this enables Windows Server to support bigger clusters than VMware, which uses the older style of clustering that Microsoft abandoned after Windows Server 2003.
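The majority rule itself fits in a few lines. This is a simplified model, assuming every node and witness carries exactly one vote; `Test-HasQuorum` is a hypothetical helper for illustration, not part of the FailoverClusters module.

```powershell
# Sketch: simplified majority (quorum) rule, assuming one vote per node or witness.
# Test-HasQuorum is a hypothetical helper, not a FailoverClusters cmdlet.
function Test-HasQuorum {
    param(
        [Parameter(Mandatory)][int]$TotalVotes,       # every vote configured in the cluster
        [Parameter(Mandatory)][int]$VotesInPartition  # votes this side of a failure can still see
    )

    # A side keeps the cluster only with a strict majority, i.e. more than half of all votes
    return $VotesInPartition -gt [math]::Floor($TotalVotes / 2)
}
```

With two votes split 1/1, neither side has a strict majority. Add a third object, such as the quorum disk below, and whichever side holds two of the three votes keeps the cluster.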

So, having built a simple cluster, I need to add in more objects and work with some shared storage. If you look at the script I used to create FileServer2 & 3, there are a couple of things to note:

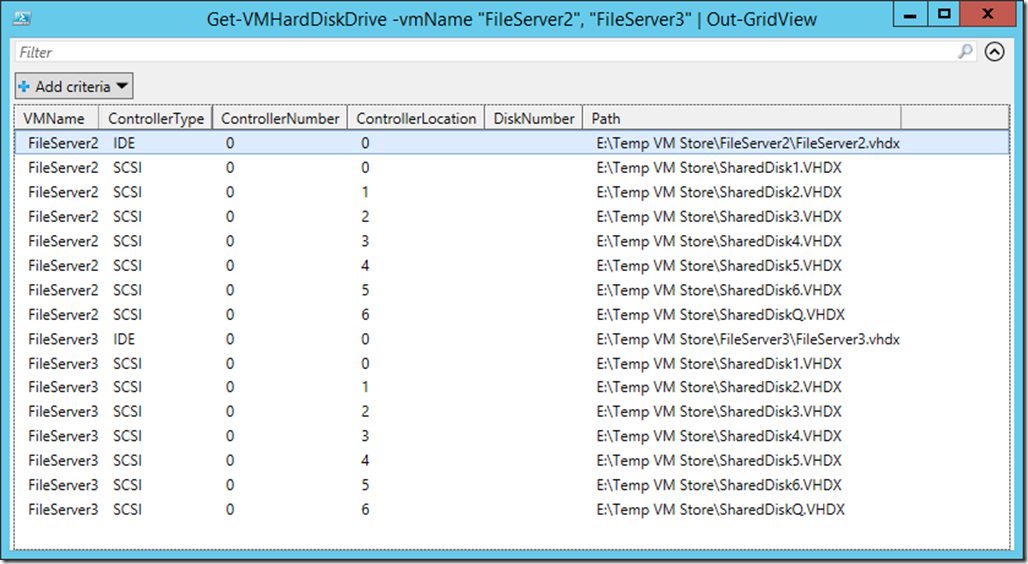

I have created 7 shared virtual hard disks, and these are attached to both FileServer2 and FileServer3. If I run this PowerShell

Get-VMHardDiskDrive -VMName "FileServer2","FileServer3" | Out-GridView

you can see that:

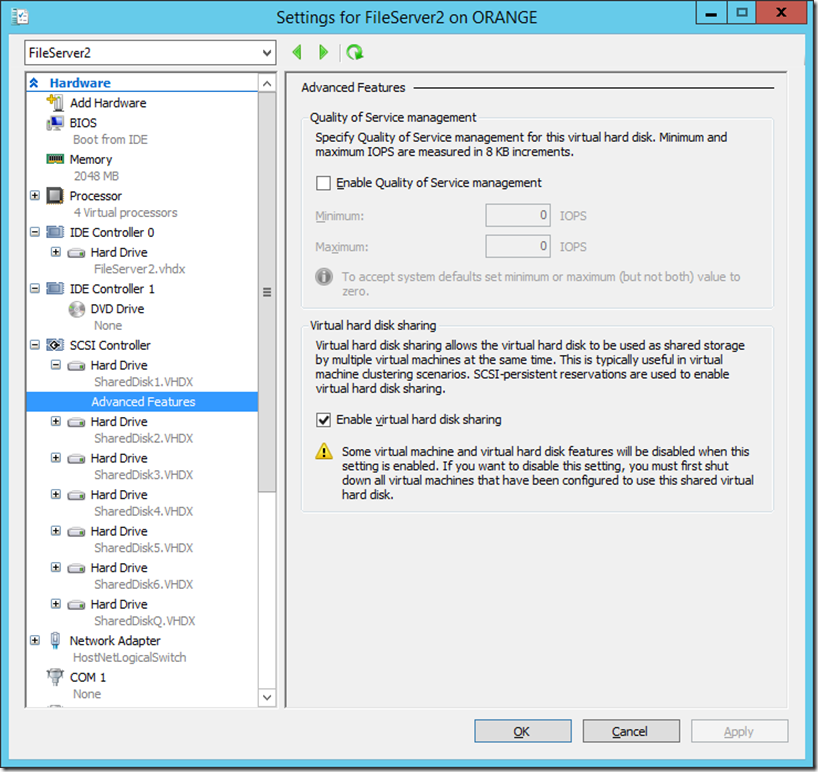
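The script itself isn’t reproduced here, but attaching one shared disk to both VMs from PowerShell might look like this minimal sketch. The path is a placeholder, `Add-LabSharedDisk` is a hypothetical name, and the disk goes on the SCSI controller because that is where shared VHDX disks have to be attached.

```powershell
# Sketch: create one VHDX and attach it as a shared disk to both VMs.
# Add-LabSharedDisk is a hypothetical name; the path you pass in is up to you.
function Add-LabSharedDisk {
    param(
        [Parameter(Mandatory)][string]$Path,   # hypothetical, e.g. "E:\VMs\Shared\Disk1.vhdx"
        [UInt64]$SizeBytes = 1GB,
        [string[]]$VMName = @("FileServer2", "FileServer3")
    )

    # Create the virtual disk once, as a fixed-size VHDX (only VHDX can be shared)
    New-VHD -Path $Path -SizeBytes $SizeBytes -Fixed | Out-Null

    foreach ($vm in $VMName) {
        # -SupportPersistentReservations is the setting that marks the disk as shared
        Add-VMHardDiskDrive -VMName $vm -ControllerType SCSI -Path $Path -SupportPersistentReservations
    }
}
```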

Also, if I look at the settings of FileServer2 in Hyper-V Manager, there’s a switch to confirm these disks are shared:
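The same setting can be checked from PowerShell, as each disk object returned by `Get-VMHardDiskDrive` has a `SupportPersistentReservations` property. A small sketch, with `Get-LabSharedDisk` as a hypothetical name:

```powershell
# Sketch: list only the disks on these VMs that are flagged as shared.
# Get-LabSharedDisk is a hypothetical name.
function Get-LabSharedDisk {
    param([string[]]$VMName = @("FileServer2", "FileServer3"))

    # Keep only disks with persistent reservations (i.e. sharing) enabled
    Get-VMHardDiskDrive -VMName $VMName |
        Where-Object SupportPersistentReservations |
        Select-Object VMName, ControllerType, ControllerNumber, ControllerLocation, Path
}
```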

Shared VHDX is new for Windows Server 2012 R2, and for production use the shared disks (only VHDX is supported) must sit on real shared storage, such as a Cluster Shared Volume or a Scale-Out File Server share. However, there is also a spoofing mechanism in R2 that allows this feature to be evaluated and demoed, and this is in line 272 of my script:

start-process "C:\windows\system32\FLTMC.EXE" -argumentlist "attach svhdxflt e:"

Note you’ll need to rerun this after every reboot of your lab setup.
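Rather than rerunning it by hand, one option is a startup task on the Hyper-V host. This is a sketch that assumes E: is mounted by the time the task fires; the task and function names are hypothetical.

```powershell
# Sketch: re-attach the svhdxflt filter to the VM volume at every host startup.
# Register-SvhdxFilterTask and the task name are hypothetical.
function Register-SvhdxFilterTask {
    param([string]$Volume = "e:")

    # The same FLTMC command as above, run by Task Scheduler instead of by hand
    $action  = New-ScheduledTaskAction -Execute "C:\Windows\System32\fltmc.exe" -Argument "attach svhdxflt $Volume"
    $trigger = New-ScheduledTaskTrigger -AtStartup

    # Run as SYSTEM with highest privileges so FLTMC is allowed to attach the filter
    Register-ScheduledTask -TaskName "LabOps - attach svhdxflt" -Action $action -Trigger $trigger -User "NT AUTHORITY\SYSTEM" -RunLevel Highest
}
```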

Now that my two FileServer VMs are joined in a cluster (HACluster), I can use one of these shared disks as a third object in the cluster. To do that I need to format it, add it in as a cluster resource, and then declare its use as a quorum disk. I’ll be running this script from one of the cluster nodes:

#Setup (bring online, initialize, partition and format) the 1GB quorum disk

$ClusterName = "HACluster"

$QuorumDisk = Get-Disk | Where-Object Size -EQ 1GB

Set-Disk -Number $QuorumDisk.Number -IsOffline $false

Initialize-Disk -Number $QuorumDisk.Number -PartitionStyle GPT

New-Partition -DiskNumber $QuorumDisk.Number -DriveLetter "Q" -UseMaximumSize

Format-Volume -DriveLetter "Q" -FileSystem NTFS -NewFileSystemLabel "Quorum" -Confirm:$false

#Add the quorum disk to the cluster, and then set the quorum mode on the cluster

Start-Sleep -Seconds 20

Get-ClusterAvailableDisk -Cluster $ClusterName | Where-Object Size -EQ 1GB | Add-ClusterDisk -Cluster $ClusterName

$Quorum = Get-ClusterResource -Cluster $ClusterName | Where-Object { $_.ResourceType.Name -eq "Physical Disk" } | Select-Object -First 1

Set-ClusterQuorum -Cluster $ClusterName -NodeAndDiskMajority $Quorum.Name
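Once the script has run, it’s worth confirming the quorum configuration took effect. A minimal sketch, with `Show-LabQuorum` as a hypothetical name that defaults to the HACluster name used above:

```powershell
# Sketch: show the cluster's current quorum settings. Show-LabQuorum is a hypothetical name.
function Show-LabQuorum {
    param([string]$ClusterName = "HACluster")

    # QuorumResource should name the cluster disk added by the script above
    Get-ClusterQuorum -Cluster $ClusterName | Select-Object Cluster, QuorumResource, QuorumType
}
```

Running `Get-ClusterResource -Cluster HACluster` alongside it shows whether that disk resource is online and which node owns it.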

Using a disk like this as a third object (a disk witness) is only one way to get that odd number of votes, and the majority that results is called quorum. You could just have three physical nodes, or use a file share as the witness instead. In my case, if a node fails, the node that has ownership of this disk will form the cluster, and when the failed node is recovered it will re-join the cluster rather than try to rebuild an identical cluster.
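If you’d rather use a file share as the witness, `Set-ClusterQuorum` has a parameter for that mode too. A sketch, assuming a share hosted on a server outside the cluster that the cluster’s computer account can write to; the share path and function name are hypothetical.

```powershell
# Sketch: switch the quorum to node and file share majority.
# Set-LabFileShareWitness is a hypothetical name; the share path is an assumption.
function Set-LabFileShareWitness {
    param(
        [string]$ClusterName = "HACluster",
        [Parameter(Mandatory)][string]$SharePath   # hypothetical, e.g. "\\DC1\ClusterWitness"
    )

    # The share should live outside the cluster, and the cluster's computer
    # account needs permission to write to it
    Set-ClusterQuorum -Cluster $ClusterName -NodeAndFileShareMajority $SharePath
}
```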

That’s where I want to stop for now, as there are several ways I can use a basic cluster like this, and I’ll be covering those in individual posts.

If you want to build the cluster I have described so far, all you need is an evaluation edition of Windows Server 2012 R2 and to go through Part 1 & Part 2 of this series.