Exchange 2010: Collapsing DAG Networks

As a post-configuration step in an Exchange 2010 Database Availability Group (DAG) installation, the administrator may need to collapse the DAG networks. Unfortunately, this step is commonly missed, and the result is that log files replicate in an unexpected manner.

Let’s take a look at the following Exchange installation.

In this case we are dealing with a total of four subnets: two assigned to hosts in the primary data center and two assigned to hosts in the secondary data center. Each of the MAPI networks is routable via default gateway settings. Each of the replication networks is routable by using appropriately established static routes.

When the Database Availability Group is established, the Failover Clustering services are leveraged for certain functions. One of the functions of the Failover Cluster Service is the enumeration of networks on nodes. When the cluster service starts, the IP address bindings of each network card are reviewed and the subnet is determined. Failover Clustering then creates a cluster network for each subnet. Each node that has an IP address in a subnet has that network interface placed in the corresponding cluster network. In this example there are four subnets, so Failover Clustering will enumerate four cluster networks. Each cluster network will contain two network interfaces, since each subnet is shared by two nodes.
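The enumeration described above can be sketched as a small model. This is only an illustration of grouping interfaces by subnet, not the Failover Clustering implementation. The /24 masks, the pairing of addresses to node names, and the node name `MBX-5` are assumptions made for the example; only the IP addresses themselves come from this article.

```python
import ipaddress
from collections import defaultdict

# Hypothetical inventory: (node, address/mask). The /24 masks, the
# node-to-address pairing, and the name "MBX-5" are assumptions.
INTERFACES = [
    ("MBX-2", "192.168.0.3/24"), ("MBX-2", "10.0.0.1/24"),
    ("MBX-3", "192.168.0.4/24"), ("MBX-3", "10.0.0.2/24"),
    ("MBX-4", "192.168.1.3/24"), ("MBX-4", "10.0.1.1/24"),
    ("MBX-5", "192.168.1.4/24"), ("MBX-5", "10.0.1.2/24"),
]


def enumerate_cluster_networks(interfaces):
    """Group interfaces by the subnet derived from each address and mask."""
    networks = defaultdict(list)
    for node, cidr in interfaces:
        iface = ipaddress.ip_interface(cidr)
        # iface.network is the subnet the address belongs to.
        networks[iface.network].append((node, str(iface.ip)))
    return dict(networks)


if __name__ == "__main__":
    for subnet, members in enumerate_cluster_networks(INTERFACES).items():
        print(subnet, members)
```

Under these assumptions the model yields one network per subnet, each holding one interface from each of two nodes, which matches the four cluster networks described here.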

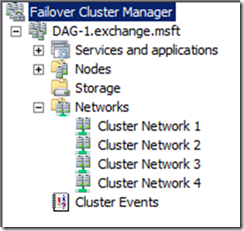

Here is an example of the cluster network enumeration as seen in failover cluster manager.

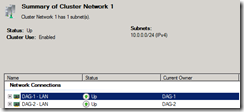

Here is an example of the network ports placed into a cluster network.

The Exchange Replication Service enumerates the cluster networks as reported by the cluster service and establishes an initial set of Database Availability Group networks. You can view the default DAG networks in the Exchange Management Console. Since Failover Clustering reports four cluster networks, there are four default DAG networks. Here is an example:

In this example you can see the four default DAG networks. Each DAG network, like each cluster network, is assigned a network port from each host on that subnet. DAG networks are how the replication service determines what connectivity is available for log shipping. Based on this DAG network topology, the replication service knows the following about DAG node communications:

192.168.0.3 <-> 192.168.0.4

10.0.0.1 <-> 10.0.0.2

10.0.1.1 <-> 10.0.1.2

192.168.1.3 <-> 192.168.1.4

What is missing here is any relationship between the 192.168.0.X and 192.168.1.X subnets, or between the 10.0.0.X and 10.0.1.X subnets. As of now the replication service has no idea how a node in 192.168.0.X can communicate with a remote node: can it do so on 192.168.1.X or on 10.0.1.X? In this situation we do not want DAG communications to fail, so we resort to DNS name resolution. For example, when the server MBX-4 wants to replicate log files that are hosted on MBX-2, it looks at the DAG networks and determines that no network contains both MBX-4 and MBX-2; therefore the replication service cannot make a direct TCP connection to a known IP address for MBX-2. Rather than fail replication, we issue a DNS query. The DNS query should always return an IP address that corresponds to a MAPI network (replication networks should not be registered in DNS). Therefore, the final connection from MBX-4 to MBX-2 is made to IP address 192.168.0.3. The replication network IS NEVER USED.

This behavior is different, though, for communications between MBX-2 and MBX-3. If MBX-3 needs to pull log files from MBX-2, the replication service knows that 10.0.0.X can be used, since DAGNetwork02 contains a network port from both nodes. Therefore, the replication service can bypass DNS name resolution and make a direct IP connection from 10.0.0.2 to 10.0.0.1 to pull logs from MBX-2 to MBX-3.
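The difference between the two cases can be sketched with a simplified model of the decision: use a DAG network that contains both nodes if one exists, otherwise fall back to DNS. This is not the replication service's actual code. The /24 masks, the addresses assigned to MBX-3 and MBX-4, the DNS table, and the names `RemoteMapi` and `RemoteRepl` for the secondary data center networks are assumptions made for illustration.

```python
import ipaddress

# Default DAG networks as name -> subnet (assumed /24 masks).
# "RemoteMapi" and "RemoteRepl" are placeholder names, not Exchange defaults.
DAG_NETWORKS = {
    "DAGNetwork01": "192.168.0.0/24",
    "DAGNetwork02": "10.0.0.0/24",
    "RemoteMapi": "192.168.1.0/24",
    "RemoteRepl": "10.0.1.0/24",
}

# Assumed node addresses; only MBX-2's addresses are stated in the text.
NODE_ADDRESSES = {
    "MBX-2": ["192.168.0.3", "10.0.0.1"],
    "MBX-3": ["192.168.0.4", "10.0.0.2"],
    "MBX-4": ["192.168.1.3", "10.0.1.1"],
}

# DNS holds MAPI addresses only (replication networks are not registered).
DNS = {"MBX-2": "192.168.0.3", "MBX-3": "192.168.0.4", "MBX-4": "192.168.1.3"}


def address_on(node, subnet):
    """Return the node's address inside the subnet, or None."""
    net = ipaddress.ip_network(subnet)
    for addr in NODE_ADDRESSES[node]:
        if ipaddress.ip_address(addr) in net:
            return addr
    return None


def choose_targets(source, target):
    """Return ("direct", {network: target_ip}) or ("dns", target_ip)."""
    shared = {}
    for name, subnet in DAG_NETWORKS.items():
        if address_on(source, subnet) and address_on(target, subnet):
            shared[name] = address_on(target, subnet)
    if shared:
        return "direct", shared
    return "dns", DNS[target]
```

In this model `choose_targets("MBX-4", "MBX-2")` falls back to DNS and lands on the MAPI address, while `choose_targets("MBX-3", "MBX-2")` finds shared networks and can connect directly. Note that the model also reports the MAPI network as shared by MBX-2 and MBX-3, since both nodes have a port on it.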

The administrator can correct this condition by appropriately collapsing the DAG networks. In this example we know that the underlying routing topology allows for the following:

192.168.0.X <-> 192.168.1.X

10.0.0.X <-> 10.0.1.X

At this point we need to re-assign subnets to the appropriate DAG networks. In this example we will take the 10.0.1.X subnet from DAGNetwork05 and move it to DAGNetwork02. This will leave an empty DAGNetwork05, which can be deleted. We will also take the 192.168.1.X subnet from its default DAG network and move it to DAGNetwork01. This will leave that network empty as well, so it can also be deleted. The following example shows the desired final DAG network layout.

Once this is done we will disable replication on the MAPI network, so that only the replication network initially services log shipping. Why disable the MAPI network for log shipping? Remember that if no other network is available in the DAG to replicate log files, the replication service will still use the MAPI network. If the MAPI network is replication enabled, the replication service gives it the same weight as the designated replication networks when choosing a network for log shipping. With replication disabled on the MAPI network, it is no longer considered at the same weight, so all initial log shipping is balanced across the enumerated replication networks.
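The effect of collapsing the networks and disabling replication on the MAPI network can be sketched with another simplified model. It is an illustration of the behavior described above, not the replication service's real selection logic. The /24 masks, the addresses assigned to MBX-4, and the `replication_enabled` field name are assumptions.

```python
import ipaddress

# Collapsed layout: each DAG network spans one subnet per data center.
# Masks (/24) and the "replication_enabled" key are assumptions.
COLLAPSED = {
    "DAGNetwork01": {
        "subnets": ["192.168.0.0/24", "192.168.1.0/24"],
        "replication_enabled": False,  # MAPI network
    },
    "DAGNetwork02": {
        "subnets": ["10.0.0.0/24", "10.0.1.0/24"],
        "replication_enabled": True,  # replication network
    },
}

# Assumed node addresses; MBX-4's addresses are not stated in the text.
NODE_ADDRESSES = {
    "MBX-2": ["192.168.0.3", "10.0.0.1"],
    "MBX-4": ["192.168.1.3", "10.0.1.1"],
}


def member_address(node, network):
    """Return the node's address on any subnet of the network, or None."""
    for subnet in network["subnets"]:
        net = ipaddress.ip_network(subnet)
        for addr in NODE_ADDRESSES[node]:
            if ipaddress.ip_address(addr) in net:
                return addr
    return None


def log_shipping_networks(source, target, dag_networks):
    """Shared networks, preferring replication-enabled ones.

    If no replication-enabled network is shared, fall back to the
    remaining shared networks (the MAPI network in this example).
    """
    shared = [
        name for name, net in dag_networks.items()
        if member_address(source, net) and member_address(target, net)
    ]
    enabled = [n for n in shared if dag_networks[n]["replication_enabled"]]
    return enabled or shared
```

With this layout `log_shipping_networks("MBX-4", "MBX-2", COLLAPSED)` returns only `DAGNetwork02`. If replication were left enabled on DAGNetwork01, both networks would be returned as equal candidates, which is the situation the paragraph above warns about.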

You can use `Get-MailboxDatabaseCopyStatus * -ConnectionStatus | fl Name,OutgoingConnections,IncomingLogCopyingNetwork` to view the networks that are being utilized for inbound and outbound operations.

In this example you can see that all incoming and outgoing connections are occurring on DAGNetwork02.

You can also review the output of `netstat -an` and see that log copying is occurring on the 10.0.0.X network over port 64327 (the default DAG replication port).

By collapsing DAG networks you can ensure that the replication service functions in an optimized fashion.

![clip_image002[4] clip_image002[4]](https://msdntnarchive.blob.core.windows.net/media/TNBlogsFS/prod.evol.blogs.technet.com/CommunityServer.Blogs.Components.WeblogFiles/00/00/00/70/98/metablogapi/5315.clip_image0024_thumb_3504DABE.jpg)