Device Management in Microsoft

AlwaysOn

Application Management

Automation

availability group

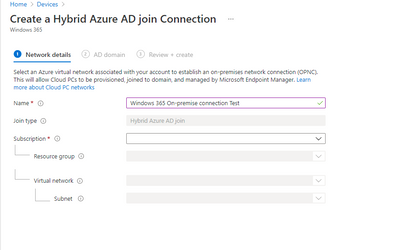

Azure

azure availability groups

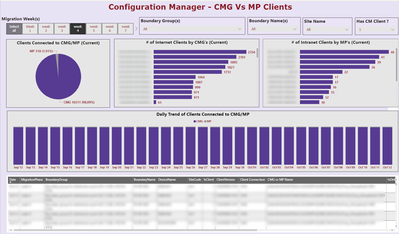

CMG SCCM ConfigMgr MEM MEMCM

ConfigMgr

configmgr 2012

configmgr in azure

Creators Update

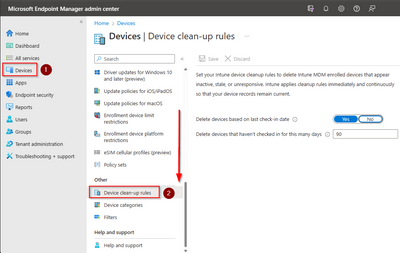

device cleanup

Device management

E-HTTP SCCM Enhanced HTTP ConfigMgr MEMCM

Engineering

GitHub

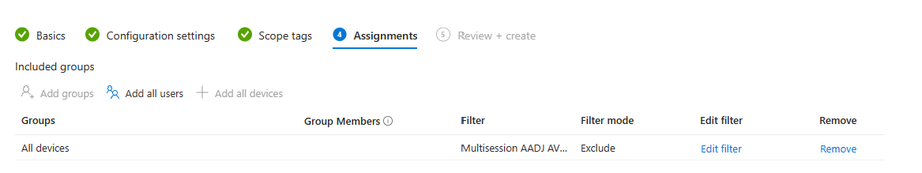

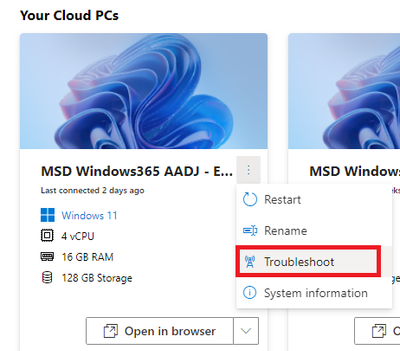

Intune

MEMCM

Microsoft Graph

microsoft it

msit

Operations

os upgrade

pre download

Reporting

SCCM

sccm lift and shift

software update point

sql

susdb

system center 2012

system center configuratio manager

System Center Configuration Manager

task sequence

VPN

windows intune

WSUS

Latest Comments