Backups: Doing it right so you can recover quickly!– Don’t keep your eggs in one basket

In my prior post I looked at confirming that backups were happening, and if they were the right type. The next issue is to verify that a recovery is possible. Two major events may force you to do a recovery:

- Hard Drive failure

- DBCC CheckDb reporting problems that cannot be repaired without data loss.

A hard disk failure can be a major ouch because you may discover (too late!) that part of the backups are sitting on the same hard drive (that is now dead) as the database files. Rather than take you through a myriad of steps to check that your backups and database files are on independent drives, I will give a script to do this checking for you. If there is an issue, you can start your learning curve well motivated; if there are no issues, you have one less item to learn.

If you are running on a drive array or SANS with data redundancy (Mirroring, RAID-5 etc) , then you can skip this post because a single drive failure should not impact you. Your actions are simple:

- Replace the failed hard drive

- Allow the hardware to rebuild the failed hard drive from the good hard drive(s).

The script below checks for independence of backups and data files on the logical drives ( C:, D:, etc). Just copy the script and execute it. I will give a second script in a later post that requires a little data entry because we will check the physical drives hosting the logical drives (including mount points) , and you will have to input this information.

Cavaet: If you have a professional SQL Server DBA available, discuss the material below with them. This is focused on simple backup and restore environments not involving tapes, mount points, and other advanced features such as FileStream Databases.If your ISV application stores files in the operating system, this code cannot determine if those files have been backed up. |

Checking Backup Logical Drives against Database Logical Drives

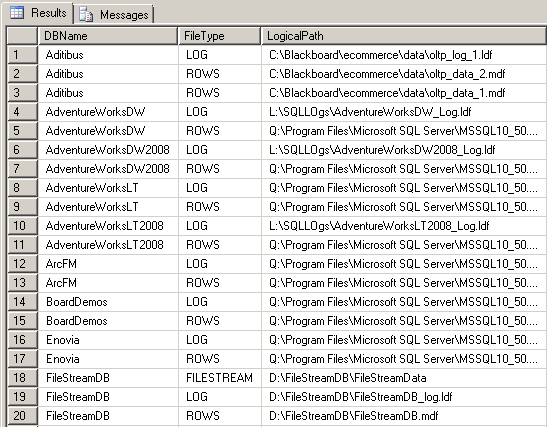

The first part or our code builds up a table that collects the location of all of the files used by the user databases in your SQL Server instance. This information is then listed so you may review it, or copy it elsewhere for reference.

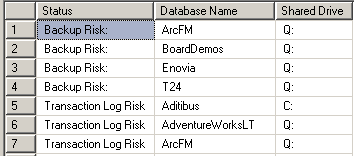

The second result set identifies backups sharing drives with the database files and databases where the Log file and the database files are located on the same drive. You do not want any databases listed.

The transaction log is used to bring the database from the last backup image to the state immediately before failure. Typically, a transaction log would be the current day's work or current week's work. If the transaction log is on the failed drive, this work is lost.

The Code

This stored procedure should be located in a utitity database, such as [SQLTools].

IF NOT EXISTS(Select 1 FROM sys.databases where Name=N'SQLTools')

Create Database [SQLTools]

GO

USE [SQLTools] -- See Below

go

CREATE PROC SQLISV_CheckDbAndBackupLocations

@SinceDate DateTime='01/01/1990'

AS

SET NOCOUNT ON;

DECLARE @CR NVARCHAR(1)= CHAR(13),

@DBName sysname,

@SQLCmd nvarchar(max) =N'',

@RowID tinyint = 1

-- Next table must be TEMP because of @SQLCmd

CREATE TABLE #DBFileLocation (

DBName nvarchar(128) NOT NULL,

FileType nvarchar(60) NOT NULL,

LogicalPath nvarchar(260) NOT NULL)

SELECT @SQLCmd=@SQLCmd

+ N'INSERT INTO #DBFileLocation (DBName, FileType, LogicalPath) SELECT '''

+ Name

+ N''', [type_desc], [physical_name] FROM ['

+ Name + N'].sys.database_files'

+ @CR

FROM sys.databases

WHERE Name Not In (N'tempdb',N'msdb',N'model',N'master')

EXEC(@SQLCmd)

IF @@ERROR <> 0

BEGIN

RAISERROR(N'Failed to get data', 16, 1)

RETURN

END

-- List the results

SELECT * FROM #DBFileLocation ORDER BY DBName, FileType

-- List when there is the same logical drive

SELECT DISTINCT

N'Backup Risk:' as [Status],

DBName as [Database Name],

Left(LogicalPath,2) as [Shared Drive]

FROM #DBFileLocation

INNER JOIN msdb.dbo.backupset

ON database_name = Dbname

INNER JOIN msdb.dbo.backupmediafamily

ON backupset.media_set_id = backupmediafamily.media_set_id

AND backupset.is_copy_only = 0

WHERE Left(physical_device_Name,2)=Left(LogicalPath,2)

AND backupset.backup_finish_date > @SinceDate

UNION

SELECT Distinct

N'Transaction Log Risk',

RowsSite.DBName,

Left(RowsSite.LogicalPath,2)

FROM #DBFileLocation RowsSite

INNER JOIN #DBFileLocation LogSite

ON RowsSite.DBName=LogSite.DBName

AND Left(RowsSite.LogicalPath,2)=Left(LogSite.LogicalPath,2)

AND RowsSite.FileType IN (N'ROWS',N'FILESTREAM')

AND LogSite.FileType=N'LOG'

GO

EXEC SQLISV_CheckDbAndBackupLocations

Bottom Line

Backups are often moved to network shares or other locations. This moving complicates analysis since it is not visible to SQL Server. This moving also complicates the recovery process and increases the risk of being unable to recover. Physical copying of files has a very small risk of resulting in corruption, unfortunately corruption seems to occur whenever a perfect copy is needed. I recommend locating the backup at an appropriate location (i.e. not co-located) and making copies (not moving) to network shares and other locations as additional insurance.

I have included an optional parameter on the above stored procedure. The @SinceDate parameter allows you to exclude old backups (to poor locations) from being reported; just update the default value in the code above.

SQLTools: Where to Save these Utilities?

I had a discussion with Shaun Tinline-Jones of the SQL Server Customer Advisory Team - SQL Server Best Practices team(SQLCAT) about where to locate this procedure and other like it. Should it be in [msdb], [model],[master], an existing ISV related database or a new database. Our conclusion was a new database named [SQLTools] was best (instead of [SQLISV] or [SQLCAT]).

To create this database, just execute:

?

Create Database [SQLTools]

Go