Implementing a Custom Security Pre-Trimmer with Claims in SharePoint 2013

This post is a contribution from Adam Burns, an engineer with the SharePoint Developer Support team.

This post repackages examples that are already available on MSDN, but it brings two of them together and provides some implementation details that may not be obvious in the existing articles. I also tried to leave out confusing details that don’t bear directly on the subject of using custom claims to pre-trim search results. In general, reading the description of the different parts and then looking at the included sample code should be enough to understand the concepts.

What you will learn:

- We will review the basic concept of a custom Indexing Connector, because we will have to implement a connector in order to demonstrate the capabilities of the new Security Trimmer Framework.

- We’ll cover how a connector can provide Claims to the Indexer and how the Security Trimmer can use those Claims to provide a very flexible way to trim and customize search results.

- We’ll quickly cover the difference between a pre-trimmer and a post-trimmer, but we’ll only implement the pre-trimmer.

Sample Code:

The sample code includes two projects, the Custom Indexing Connector (XmlFileConnector) which sends claims to BCS, and the SearchPreTrimmer project which actually implements the trimming. In addition you’ll find:

- Products.xml - a sample external data file for crawling.

- Xmldoc.reg - to set the proper registry value so that the custom protocol is handled by the connector.

- Datafile.txt – a sample membership data file. This is where the claims for each user are specified.

The Connector

We’ll use the XmlFileConnector. One of the good things about this kind of connector is that you can use it in place of a database: the XML file can hold, for instance, a product catalog or a navigation source.

To install the connector, follow the steps below (all paths are examples and can easily be replaced with the applicable paths):

1. Build the included XmlFileConnector project.

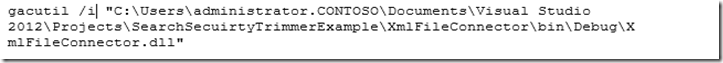

2. Open the “Developer Command Prompt for Visual Studio 2012” and type:

3. Merge the registry entries for the protocol handler by double-clicking the registry file located at “C:\Users\administrator.CONTOSO\Documents\Visual Studio 2012\Projects\SearchSecuirtyTrimmerExample\XmlFileConnector\xmldoc.reg”. This is the protocol handler part of the setup: unless SharePoint knows about the protocol you are registering, the connector won’t work. Registering the custom protocol is a requirement for custom indexing connectors in SharePoint.

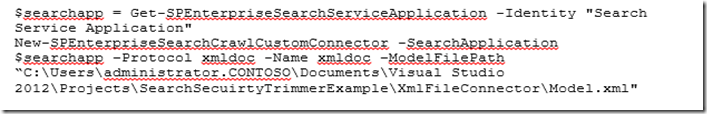

4. Open “SharePoint 2013 Management Shell” and type:

5. You can check the success like this:

Get-SPEnterpriseSearchCrawlCustomConnector -SearchApplication $searchapp | ft -Wrap

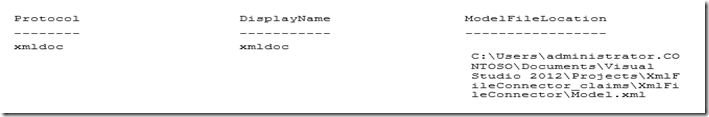

You should get:

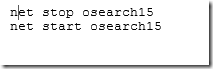

6. Stop and Start the Search Service

7. Create a Crawled Property Category.

When the custom indexing connector crawls content, the properties discovered and indexed have to be assigned to a Crawled Property Category. You will associate the category with the Connector.

Later on, if you want to have Navigation by Search or Content By Search, you will want to create a managed property and map it to one of the custom properties in this Crawled Property Category. This will allow you to present content using Managed Navigation and the Content Search Web Part (note that the Content Search Web Part is not present in SharePoint Online yet, but it is coming in the future).

To create a new crawled property category, open SharePoint 2013 Management Shell, then type and run the following commands:

a. $searchapp = Get-SPEnterpriseSearchServiceApplication -Identity "<YourSearchServiceName>"

b. New-SPEnterpriseSearchMetadataCategory -Name "XmlFileConnector" -Propset "BCC9619B-BFBD-4BD6-8E51-466F9241A27A" -searchApplication $searchapp

NOTE: The Propset GUID, BCC9619B-BFBD-4BD6-8E51-466F9241A27A, is hardcoded in the file XmlDocumentNamingContainer.cs and should not be changed.

c. To specify that unknown properties in the newly created crawled property category should be discovered during the crawl, type and run the following at the command prompt:

i. $c = Get-SPEnterpriseSearchMetadataCategory -SearchApplication $searchapp -Identity "<ConnectorName>"

ii. $c.DiscoverNewProperties = $true

iii. $c.Update()

8. Place a copy of the sample data from C:\Users\administrator.CONTOSO\Documents\Visual Studio 2012\Projects\SearchSecuirtyTrimmerExample\Product.xml in some other directory, such as c:\XMLCATALOG. Make sure the Search Service account has read permissions to this folder.

9. Create a Content Source for your Custom Connector:

a. On the home page of the SharePoint Central Administration website, in the Application Management section, choose Manage service applications.

b. On the Manage Service Applications page, choose Search service application.

c. On the Search Service Administration Page, in the Crawling section, choose Content Sources.

d. On the Manage Content Sources page, choose New Content Source.

e. On the Add Content Source page, in the Name section, in the Name box, type a name for the new content source, for example XML Connector.

f. In the Content Source Type section, select Custom repository. In the Type of Repository section, select xmldoc.

g. In the Start Address section, in the Type start addresses below (one per line) box, type the address from which the crawler should begin crawling the XML content. The start address syntax differs depending on where the XML content is located. Following the example so far, you would enter this value:

xmldoc://localhost/C$/xmlcatalog/#x=Product:ID;;titleelm=Title;;urlelm=Url#

The syntax is different if you have the data source directory on the local machine versus on a network share. If the data source directory is local, use the following syntax:

xmldoc://localhost/C$/<contentfolder>/#x=doc:id;;urielm=url;;titleelm=title#

If the data source directory is on a network drive, use the following syntax:

xmldoc://<SharedNetworkPath>/#x=doc:id;;urielm=url;;titleelm=title#

· "xmldoc" is the name of the protocol of this connector as registered in the Registry when installing this connector. See step 3 above.

· "//<localhost>/c$/<contentfolder>/" or “//<ShareName>/<contentfolder>/” is the full path to the directory holding the XML files that are to be crawled.

· "#x=:doc:url;;urielm=url;;titleelm=title#" is a special way to encode the configuration parameters used by the connector:

o "x=:doc:url" is the path of the element in the XML document that specifies the ID of a single item.

o "urielm=url" is the name of the element in the XML document that will be set as the URI of the crawled item.

o "titleelm=title" is the name of the element in the XML document that will be set as the title of the crawled item.

· Note that the separator is “;;” and that the set of properties is delimited by “#” at the beginning and “#” at the end.
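To make the start address format concrete, here is a minimal C# sketch that splits the “#...#” configuration block into name/value pairs. StartAddressConfig and its Parse method are hypothetical names made up for this illustration; the connector in the sample code has its own parsing logic, which may differ.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper, not part of the sample code: splits the
// "#name=value;;name=value#" block of a start address into a dictionary.
public static class StartAddressConfig
{
    public static Dictionary<string, string> Parse(string startAddress)
    {
        var settings = new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase);

        // The configuration block sits between the first and the last '#'.
        int first = startAddress.IndexOf('#');
        int last = startAddress.LastIndexOf('#');
        if (first < 0 || last <= first)
        {
            return settings; // no configuration block present
        }

        string block = startAddress.Substring(first + 1, last - first - 1);

        // Settings are separated by ";;" and each one has the form "name=value".
        foreach (string pair in block.Split(new[] { ";;" }, StringSplitOptions.RemoveEmptyEntries))
        {
            int eq = pair.IndexOf('=');
            if (eq > 0)
            {
                settings[pair.Substring(0, eq).Trim()] = pair.Substring(eq + 1).Trim();
            }
        }

        return settings;
    }
}
```

Calling StartAddressConfig.Parse on the example start address from step g yields three entries: x, titleelm and urlelm.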

Parts of the Connector Having to do with Claims-based Security

If you’ve looked at the BCS model schema before you know that in the Methods collection of the Entity element you usually specify a Finder and a “SpecificFinder” stereotyped method. In this example the Model is not used for External Lists or a Business Data List Web Part. It does not create or update items. Therefore, it specifies an AssociationNavigator method instead, which is easier to implement and is good in this example because it lets us focus more on the Security Trimming part.

Also, this connector doesn’t provide for much functionality at the folder level, so we’ll concentrate primarily on the XmlDocument Entity and ignore the Folder entity.

The Model

In both the AssociationNavigator (or Finder) method definition and the SpecificFinder method definition we must specify a security descriptor, and in this case we also need to specify two other Type Descriptors.

<TypeDescriptor Name="UsesPluggableAuth" TypeName="System.Boolean" />

<TypeDescriptor Name="SecurityDescriptor" TypeName="System.Byte[]" IsCollection="true">

<TypeDescriptors>

<TypeDescriptor Name="Item" TypeName="System.Byte" />

</TypeDescriptors>

</TypeDescriptor>

<TypeDescriptor Name="docaclmeta" TypeName="System.String, mscorlib, Version=2.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089" />

In the Method Instance definition we tell BCS what the names of these fields will be:

<Property Name="UsesPluggableAuthentication" Type="System.String">UsesPluggableAuth</Property>

<Property Name="WindowsSecurityDescriptorField" Type="System.String">SecurityDescriptor</Property>

<Property Name="DocaclmetaField" Type="System.String">docaclmeta</Property>

· UsesPluggableAuth as a boolean field. If the value of this field is true, then we’re telling BCS to use custom security claims instead of the Windows Security descriptors.

· SecurityDescriptor as a byte array for the actual encoded claims data

· docaclmeta as an optional string field, which will only be displayed in the search results if populated. This field is not queryable in the index.

XmlFileLoader Class

In the connector code the XmlFileLoader utility class populates these values into the document that is returned to the index.

In some cases we use logic to determine the value, as here:

UsesPluggableAuth = security != null,

The XmlFileLoader class also encodes the claim. Claims are encoded as a binary byte stream. The claim data type in this example is always string, but this is not a requirement of the SharePoint backend.

The claims are encoded in the AddClaimAcl method according to these rules:

· The first byte signals an allow or deny claim

· The second byte is always 1 to indicate that this is a non-NT security ACL (i.e. it is a claim ACL type)

· The next four bytes give the size of the claim value array that follows

· The claim value string follows as a Unicode byte array

· The next four bytes, following the claim value array, give the length of the claim type

· The claim type string follows as a Unicode byte array

· The next four bytes, following the claim type array, give the length of the claim data type

· The claim data type string follows as a Unicode byte array

· The next four bytes, following the claim data type array, give the length of the claim original issuer

· The claim issuer string finally follows as a Unicode byte array.
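The encoding rules above can be sketched in C# roughly as follows. This is an illustration, not the sample's AddClaimAcl method: the class and method names are hypothetical, and three details are assumptions the rules above don't spell out: that 0 means allow and 1 means deny, that each four-byte length is the character count of the string, and that the claim data type is the XML Schema string URI. Check the included sample code for the exact conventions.

```csharp
using System;
using System.IO;
using System.Text;

// Hypothetical sketch of the claim ACL layout described above.
public static class ClaimAclSketch
{
    // Assumption: string claims use the XML Schema string data type URI.
    private const string StringDataType = "http://www.w3.org/2001/XMLSchema#string";

    public static byte[] Encode(bool isDeny, string claimType, string claimValue, string claimIssuer)
    {
        using (var stream = new MemoryStream())
        using (var writer = new BinaryWriter(stream))
        {
            writer.Write(isDeny ? (byte)1 : (byte)0); // assumption: 0 = allow, 1 = deny
            writer.Write((byte)1);                    // 1 = claim (non-NT) ACL type
            WriteField(writer, claimValue);           // claim value
            WriteField(writer, claimType);            // claim type
            WriteField(writer, StringDataType);       // claim data type
            WriteField(writer, claimIssuer);          // claim original issuer
            writer.Flush();
            return stream.ToArray();
        }
    }

    private static void WriteField(BinaryWriter writer, string text)
    {
        // Assumption: the four-byte length holds the character count of the string.
        writer.Write(text.Length);
        // The string itself follows as a Unicode (UTF-16LE) byte array.
        writer.Write(Encoding.Unicode.GetBytes(text));
    }
}
```

For the product shown later in Products.xml, the call would be ClaimAclSketch.Encode(false, "https://surface.microsoft.com/security/acl", "user1", "customtrimmer"), and the resulting byte array is what would go into the SecurityDescriptor field.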

Entity Class

Naturally, we have to include the three security-related fields in the Entity that is returned by the connector:

public class Document

{

private DateTime lastModifiedTime = DateTime.Now;

public string Title { get; set; }

public string DocumentID { get; set; }

public string Url { get; set; }

public DateTime LastModifiedTime { get { return this.lastModifiedTime; } set { this.lastModifiedTime = value; } }

public DocumentProperty[] DocumentProperties { get; set; }

// Security Begin

public Byte[] SecurityDescriptor { get; set; }

public Boolean UsesPluggableAuth { get; set; }

public string docaclmeta { get; set; }

// Security End

}

Products.xml

In the example all the documents are returned from one XML file, but here is one Product from that file:

<Product>

<ID>1</ID>

<Url>https://wfe/site/catalog/item1</Url>

<Title>Adventure Works Laptop15.4W M1548 White</Title>

<Item_x0020_Number>1010101</Item_x0020_Number>

<Group_x0020_Number>10101</Group_x0020_Number>

<ItemCategoryNumber>101</ItemCategoryNumber>

<ItemCategoryText>Laptops</ItemCategoryText>

<About>Laptop with 640 GB hard drive and 4 GB RAM. 15.4-inch widescreen TFT LED display. 3 high-speed USB ports. Built-in webcam and DVD/CD-RW drive. </About>

<UnitPrice>$758,00</UnitPrice>

<Brand>Adventure Works</Brand>

<Color>White</Color>

<Weight>3.2</Weight>

<ScreenSize>15.4</ScreenSize>

<Memory>1000</Memory>

<HardDrive>160</HardDrive>

<Campaign>0</Campaign>

<OnSale>1</OnSale>

<Discount>-0.2</Discount>

<Language_x0020_Tag>en-US</Language_x0020_Tag>

<!-- Security Begin -->

<claimtype>https://surface.microsoft.com/security/acl</claimtype>

<claimvalue>user1</claimvalue>

<claimissuer>customtrimmer</claimissuer>

<claimaclmeta>access</claimaclmeta>

<!-- Security End -->

</Product>

Note that the last four elements specify the claim that will be encoded into the SecurityDescriptor member (and the docaclmeta value) returned in the Entity.

Custom Security Pre-Trimmer Implementation

With the new pre-trimmer type, the trimmer logic is invoked before the query is evaluated. The search server backend rewrites the query, adding security information before the lookup in the search index.

Because the data source contains information about the claims that are accepted, the lookup can return only the appropriate results that satisfy the claim demand.

Benefits of Pre-Trimming

Pre-trimming performs better than post-trimming, so it is the recommended method. In addition, the new framework makes it easy to specify simple claims (in the form of strings) instead of having to encode ACLs as byte arrays in the data source (something which can be quite time consuming and difficult).

To create a custom security pre-trimmer we must implement the two methods of ISecurityTrimmerPre.

IEnumerable<Tuple<Microsoft.IdentityModel.Claims.Claim, bool>> AddAccess(IDictionary<string, object> sessionProperties, IIdentity userIdentity);

void Initialize(NameValueCollection staticProperties, SearchServiceApplication searchApplication);

The Membership Data (DataFile.txt)

At the beginning of the SearchPreTrimmer class I set the path to the datafile.txt membership file to @"c:\membership\datafile.txt". You’ll need to either change that or put your membership file there. The included example is basically blank, but here is what mine looks like:

domain\username:user1;user3;user2;

contoso\aaronp:user1;

contoso\adamb:user2;

contoso\adambu:user1;user2;user3

contoso\alanb:user3;

The identity of the user is the first thing on every line, followed by a colon. After that comes a list of claims, separated by semicolons. I left the user1, user2, user3 claims, but you could make them “editor”, “approver”, “majordomo” or whatever group claims make sense to you. You don’t have to think of them as groups; each one is just a string claim. The data that is crawled by the connector specifies the list of claims that can view each item in the search results.
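As an illustration of this file format, here is a minimal C# sketch that parses membership lines into a dictionary of claims per user. MembershipParser is a hypothetical name for this example; the sample code uses its own Lookup wrapper class for this job, and its behavior may differ (for instance in how it treats casing or malformed lines).

```csharp
using System;
using System.Collections.Generic;

// Hypothetical parser for lines of the form "domain\user:claim1;claim2;".
public static class MembershipParser
{
    public static Dictionary<string, List<string>> Parse(IEnumerable<string> lines)
    {
        // Assumption: user names are compared case-insensitively.
        var membership = new Dictionary<string, List<string>>(StringComparer.OrdinalIgnoreCase);

        foreach (string line in lines)
        {
            // The user identity is everything before the first colon.
            int colon = line.IndexOf(':');
            if (colon <= 0)
            {
                continue; // skip blank or malformed lines
            }

            string user = line.Substring(0, colon).Trim();
            var claims = new List<string>();

            // Claims are separated by semicolons; a trailing semicolon is optional.
            foreach (string claim in line.Substring(colon + 1)
                         .Split(new[] { ';' }, StringSplitOptions.RemoveEmptyEntries))
            {
                string trimmed = claim.Trim();
                if (trimmed.Length > 0)
                {
                    claims.Add(trimmed);
                }
            }

            membership[user] = claims;
        }

        return membership;
    }
}
```

Passing the result of File.ReadAllLines for the membership file to MembershipParser.Parse would give, for the data above, three claims for contoso\adambu and one claim for contoso\aaronp.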

Initialize

The main thing you’ll be doing in Initialize is setting the values of the claim type, the claim issuer and the path to the datafile. The claim type and claim issuer are very important because the trimmer will use this information to determine whether and how to apply the demands for claims. The path to the data file is important because that is where the membership is specified. You can think of it as a membership provider just for the specific claims that you want to inject for the purposes of your custom trimming. The framework passes in a NameValueCollection, and it’s possible that values could be sent in from there. You will probably want to set default values as we do in the example.
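A minimal sketch of that “read a static property, otherwise fall back to a default” pattern might look like the following. The TrimmerSettings class and the property keys "claimtype", "claimissuer" and "datafile" are assumptions made for this illustration; the defaults are the values used elsewhere in this post. The real Initialize method also receives a SearchServiceApplication, which is left out here to keep the example self-contained.

```csharp
using System.Collections.Specialized;

// Hypothetical settings holder illustrating defaults for Initialize.
public class TrimmerSettings
{
    public string ClaimType { get; private set; }
    public string ClaimIssuer { get; private set; }
    public string DataFile { get; private set; }

    public static TrimmerSettings FromStaticProperties(NameValueCollection staticProperties)
    {
        return new TrimmerSettings
        {
            // The key names below are assumptions, not documented property names.
            ClaimType = ValueOrDefault(staticProperties, "claimtype", "https://surface.microsoft.com/security/acl"),
            ClaimIssuer = ValueOrDefault(staticProperties, "claimissuer", "customtrimmer"),
            DataFile = ValueOrDefault(staticProperties, "datafile", @"c:\membership\datafile.txt")
        };
    }

    private static string ValueOrDefault(NameValueCollection properties, string key, string fallback)
    {
        // NameValueCollection returns null for a missing key.
        string value = properties == null ? null : properties[key];
        return string.IsNullOrEmpty(value) ? fallback : value;
    }
}
```

With an empty collection every setting falls back to its default, so the trimmer still works when no properties are supplied at registration time.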

AddAccess

The AddAccess method of the trimmer is responsible for returning claims to be added to the query tree. The method has to return an IEnumerable<Tuple<Claim, bool>>, but a LinkedList is appropriate because it keeps the claims in the same order in which they appear in the datafile.

The group membership data is refreshed every 30 seconds, as specified in the RefreshDataFile method. RefreshDataFile uses a utility wrapper class (Lookup) to load the membership data into a simple-to-use collection.

The framework calls AddAccess passing in the identity with the claims we attached in the datafile specified in Initialize. Then we loop through those claims and add them to the IEnumerable of claims that AddAccess returns.
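The loop at the heart of AddAccess can be sketched as below. To keep the example self-contained it uses System.Security.Claims.Claim as a stand-in for Microsoft.IdentityModel.Claims.Claim, which is the type the real interface requires, and the class and method names are hypothetical. The second tuple item is simply passed as false here as a placeholder; check the ISecurityTrimmerPre documentation for its exact meaning.

```csharp
using System;
using System.Collections.Generic;
using System.Security.Claims;

// Hypothetical sketch of the claim-building loop inside AddAccess.
// System.Security.Claims.Claim stands in for Microsoft.IdentityModel.Claims.Claim.
public static class AddAccessSketch
{
    public static IEnumerable<Tuple<Claim, bool>> BuildClaims(
        IEnumerable<string> userClaims, string claimType, string claimIssuer)
    {
        // A LinkedList keeps the claims in the order they were added.
        var claims = new LinkedList<Tuple<Claim, bool>>();

        foreach (string value in userClaims)
        {
            var claim = new Claim(claimType, value, ClaimValueTypes.String, claimIssuer);
            claims.AddLast(Tuple.Create(claim, false)); // placeholder flag, see note above
        }

        return claims;
    }
}
```

In the trimmer itself, userClaims would be the list looked up in the membership data for the user identity that the framework passes in, and claimType and claimIssuer would be the values set in Initialize.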

Installing and Debugging the Pre-Trimmer

To register the custom trimmer:

New-SPEnterpriseSearchSecurityTrimmer -SearchApplication "Search Service Application" -typeName "CstSearchPreTrimmer.SearchPreTrimmer, CstSearchPreTrimmer,Version=1.0.0.0, Culture=neutral, PublicKeyToken=token" -id 1

net stop "SharePoint Search Host Controller"

net start "SharePoint Search Host Controller"

The -id parameter is just an index in the collection. I think it’s arbitrary, but I had to supply a value.

To debug the pre-trimmer code:

You may not need to do this and you won’t want to do it too often, but if you have complex logic in any of the code that retrieves or sets the static properties of the trimmer, you may want to debug the code.

I had to do the following things. I’m really not sure if all of them are necessary, but I could not break in without doing all of them:

1) Build and GAC the debug version of the SearchPreTrimmer to make sure you have the same version installed.

2) Remove the Security Trimmer with:

$searchapp = Get-SPEnterpriseSearchServiceApplication -Identity "Search Service Application"

Remove-SPEnterpriseSearchSecurityTrimmer -SearchApplication $searchapp -Identity 1

3) Restart the Search Service Host.

a. net stop "SharePoint Search Host Controller"

b. net start "SharePoint Search Host Controller"

4) IIS Reset.

5) Re-register the security trimmer using the registration command above.

6) Restart the Search Service Host.

a. net stop "SharePoint Search Host Controller"

b. net start "SharePoint Search Host Controller"

7) Use appcmd list wp to get the correct Worker Process ID

8) Attach to the correct w3wp.exe and all noderunner.exe instances (not sure if there’s a way to get the correct instance or not).

9) Start a search using an Enterprise Search Center site collection.

I got frequent problems like time outs and I always had to start the search on the same login account as Visual Studio was running in. Nevertheless it was possible and I did it more than once with the same steps as above.

Conclusion

We’ve covered the basics of how claims are sent to SharePoint in the custom connector. We also covered the basics of the custom Security Pre-Trimmer in SharePoint 2013 and how it can use the claims sent by the custom connector to let the Query Engine specify claims-based ACLs, so that only relevant results are returned to the results page.