More on Continuous Replication

In my last blog entry, I talked about the internals of the continuous replication feature in Exchange 2007. We went into a lot of technical details about the Replication service, its DLL companion files, its object model, etc. Deep stuff.

For this blog, I thought it might be useful to step back a bit and cover some more of the basics of continuous replication.

Why Continuous Replication?

You may be wondering, why do we have continuous replication at all? The problem we’re trying to solve is one of data outages. We’re trying to provide data availability; the observation being that if you lose your data, recovery is very expensive. Restoring from backup takes a long time, there might be significant data loss, and you’re going to be offline for a long period of time before you get your data back.

What is Continuous Replication?

A simple way to describe the solution is that we keep a second copy of your data. If you have a copy of your data, you can use that copy should you lose your original. The thing that makes this hard is that this copy of your data has to be up-to-date.

The theory of continuous replication is actually quite simple. The idea is that we make a copy of your data, and then as the original is modified, we make the exact same modifications to the copy. This is going to be far less expensive than copying all of the data each time it is modified. And, this gives you an up-to-date copy of the data which you can then use, should you lose your original.

How does Continuous Replication Work?

The way that we keep this data up-to-date is through Extensible Storage Engine (ESE) Logging. ESE is the database engine for Exchange Server. As ESE modifies the database, it generates a log stream (a stream of 1 MB log files) containing a list of physical modifications of the database. The log stream is normally used for crash recovery. If the server blue screens, if a process dies, etc., the database can be made consistent by using the changes described in these log files. The basic technology for this is industry standard. For example, SQL Server and other database engines all use write-ahead logging. Now in Exchange, though, there are a lot of complexities and subtleties, which I won’t go into in this blog.

Log files contain a list of physical modifications to database pages. When an update is made to the database, an in-memory copy of the page is modified. Then, the log record describing that modification is written to the log file. Once that is done, the page can then be written to the database.

To implement continuous replication, we make a copy of the database, and then as log files are created that describe modifications to the original, we copy the log files and then replay them into the database copy.
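To make the ordering concrete, here is a minimal Python sketch of write-ahead logging plus replay into a copy. It is a toy model, not how ESE is implemented: the class names (`ActiveStore`, `Database`, `LogRecord`) and the idea of a page holding a single string are assumptions made purely for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class LogRecord:
    page_no: int  # which database page was modified
    data: str     # the new contents of that page (toy stand-in)


@dataclass
class Database:
    pages: Dict[int, str] = field(default_factory=dict)  # page_no -> contents

    def apply(self, record: LogRecord) -> None:
        self.pages[record.page_no] = record.data


class ActiveStore:
    """Hypothetical stand-in for the store on the active side."""

    def __init__(self) -> None:
        self.database = Database()         # what is "on disk"
        self.cache: Dict[int, str] = {}    # in-memory copies of pages
        self.log: List[LogRecord] = []     # the log stream

    def update(self, page_no: int, data: str) -> None:
        self.cache[page_no] = data                 # 1. modify the in-memory page
        self.log.append(LogRecord(page_no, data))  # 2. write the log record

    def flush(self) -> None:
        # 3. only after the log record exists may the page reach the database
        self.database.pages.update(self.cache)


def replay(log: List[LogRecord], copy: Database) -> None:
    """Apply the same modifications, in the same order, to the copy."""
    for record in log:
        copy.apply(record)
```

Replaying the active side's log into an empty `Database` leaves the copy with the same pages as the original, which is the whole idea behind continuous replication.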

Continuous Replication Behavior

This leads us to the basic architecture of continuous replication. A new service, called the Microsoft Exchange Replication service, is responsible for keeping the copy of the database up-to-date. It does this by copying the log files that the store generates, inspecting them, and then replaying them into the copy of the database.

Having a copy of the data is only useful if you have some way to use it; preferably, accessing it in a way that is transparent to the user. For CCR, the cluster service provides that. It moves the network address and identity to the passive node and starts the services. For LCR, activation is manual, but it is generally a very quick operation, since its copy of the data is already available to the server.

Replication Pipeline

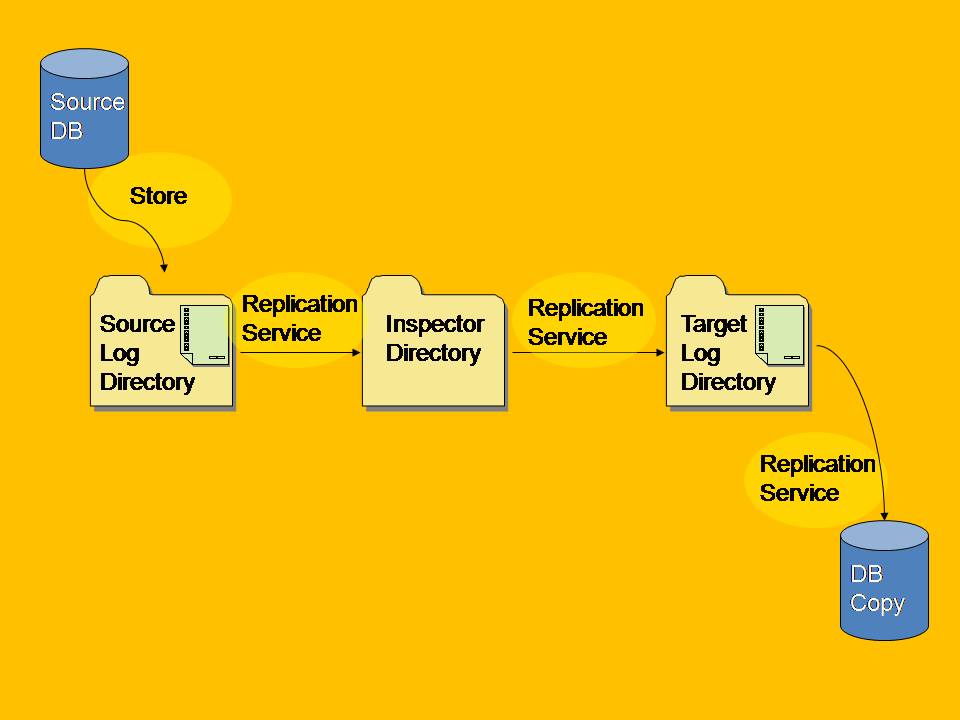

The replication pipeline is illustrated in the following figure.

To briefly recap what happens in the replication pipeline - the Store modifies the source database and generates log files in its log directory (the log directory for the storage group containing the database). The Microsoft Exchange Replication service, which "listens" for new logs by using Windows File System Notification events, is responsible for first copying the log files, inspecting them, and then applying them to the copy of the database.

ESE Logging and Log Files

To go deeper on this subject, we need to talk about ESE log files. Each storage group is assigned a prefix number, starting with 00 for the first storage group. Each log file in each storage group is assigned a generation number, starting with generation 1. Log files are a fixed size; 1 MB in Exchange 2007. The current log file is always Exx.log, where xx is the storage group prefix number. Exx is the only log file which is modified, and it is the only log file to which log records can be added. Once it fills up, it is renamed to a filename that incorporates its generation number. In Exchange 2007, the generation number is an 8-digit hexadecimal number.
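The naming scheme can be sketched in a few lines of Python. The function names are hypothetical, the prefix is passed in as a string such as "E00", and the use of uppercase hexadecimal digits is an assumption on my part.

```python
def current_log_name(prefix: str = "E00") -> str:
    """Name of the log file that is currently being written to (Exx.log)."""
    return f"{prefix}.log"


def closed_log_name(generation: int, prefix: str = "E00") -> str:
    """Name given to a log file once it fills up and is renamed.

    The generation number is rendered as 8 hexadecimal digits.
    Uppercase digits are an assumption for this sketch.
    """
    if generation < 1:
        raise ValueError("generation numbers start at 1")
    return f"{prefix}{generation:08X}.log"
```

For example, `closed_log_name(1)` gives `E0000000001.log`, and generation 26 (hexadecimal 1A) gives `E000000001A.log`.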

Log Copying

Log copying is a pull model. The Exchange store on the active copy (sometimes referred to as the source) creates log files normally. Exx.log is always in use, and log records are being added to it, so that log file cannot be copied. However, as soon as it fills up and is renamed to the next generation sequence number, the Replication service on the passive side (sometimes referred to as the target) will be notified through WFSN and it will copy the log file.

On a move (scheduled outage) or failover (unscheduled outage), once the store is stopped, Exx.log becomes available for copying and the Replication service will try to copy it. If the file is unavailable (perhaps because, in the case of CCR, the active node blue-screened) then you have what we call a "lossy failover." It's called "lossy" because not all of the data (e.g., Exx.log, and any other log files in the copy queue) could be copied. In this case, the administrator-configured loss setting for the storage group is consulted to see if the amount of data loss is in the acceptable range for mounting the database.

Log Verification

Log files are copied by the Replication service to an Inspector directory. The idea is that we want to look at the log files and make sure that they are correct. There are physical checksums to be verified, as well as the logical properties of the log file (for example, its signature is checked to make sure it matches the database). The intention is, that once a log file is inspected, we have a high degree of confidence that replay will succeed.

If there is an inspection failure, the log file is recopied. This is to try to deal with any network issues that might have resulted in a non-valid log file. If the log file can't be copied successfully, then a re-seed is going to be required.

After a log file is successfully inspected, it is moved to the proper log directory where it becomes available for replay.
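The copy, inspect, recopy flow described above might be sketched as follows. This is only an illustration: CRC32 stands in for whatever physical checksum ESE really uses, and `CopiedLog`, `fetch`, and `max_attempts` are all hypothetical names (the post does not say how many times a recopy is attempted).

```python
import zlib
from dataclasses import dataclass
from typing import Callable


@dataclass
class CopiedLog:
    generation: int
    signature: str  # ties the log file to a particular database
    payload: bytes  # the log records (toy stand-in)
    checksum: int   # checksum recorded when the log was written


def passes_inspection(log: CopiedLog, database_signature: str) -> bool:
    # Physical check: CRC32 is an assumption standing in for the real checksum.
    physical_ok = zlib.crc32(log.payload) == log.checksum
    # Logical check: the log's signature must match the database.
    logical_ok = log.signature == database_signature
    return physical_ok and logical_ok


def copy_with_inspection(
    fetch: Callable[[int], CopiedLog],
    generation: int,
    database_signature: str,
    max_attempts: int = 2,  # hypothetical retry budget
) -> str:
    """Copy a log into the Inspector directory and validate it, recopying on failure."""
    for _ in range(max_attempts):
        log = fetch(generation)  # copy from the active side
        if passes_inspection(log, database_signature):
            return "moved-to-log-directory"  # now available for replay
    return "re-seed-required"
```

A transient corruption on the first copy is absorbed by the recopy; only a log that never passes inspection ends in a re-seed.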

Log Replay

As log files are copied and inspected, a log re-player applies the changes to the database. This is actually a special recovery mode, which is different from the replay performed by Eseutil /r. Among other differences, the undo phase of recovery is skipped. There's a little more to this, but I won't go into it in this blog.

If possible, log files are replayed in batches. We'll wait a little bit of time for more log files to appear, and that's because replaying several log files together improves performance.

Monitoring the Replication Pipeline

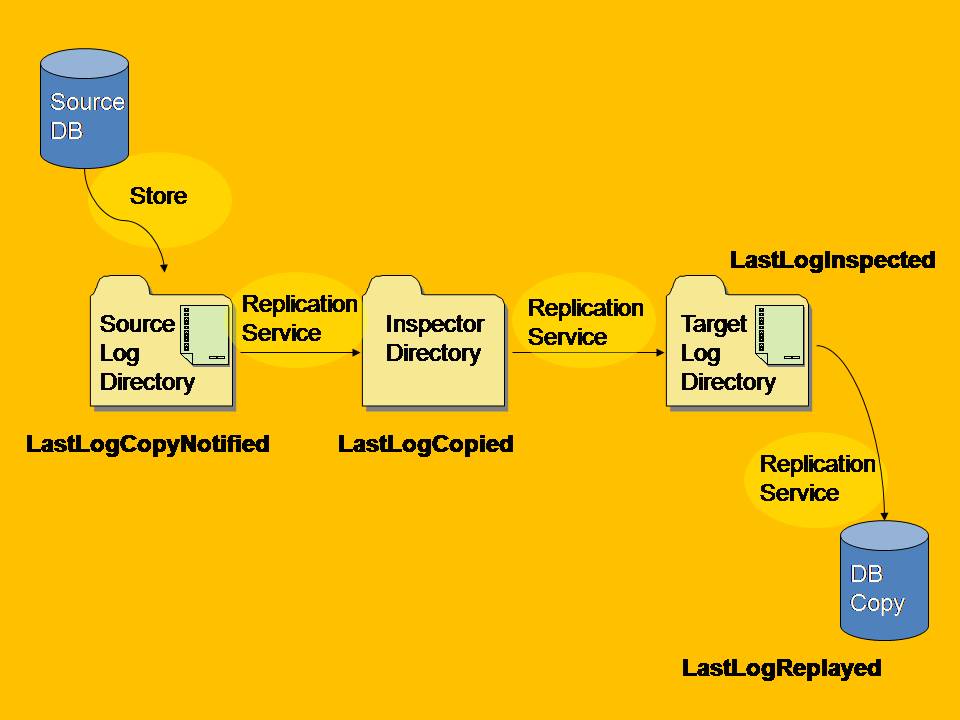

Now let's look at how the Get-StorageGroupCopyStatus cmdlet reflects the status of the different phases in the pipeline. If you run this cmdlet, some of the information that is returned can be used to track the status of the replication pipeline:

- LastLogCopyNotified is the last generation that was seen in the source directory. This file has not even been copied yet, but it's the last file that the Replication service saw appear in this directory that the store created.

- LastLogCopied is the last log file that was successfully copied into the Inspector directory by the Replication service.

- As a log file is validated and moved from the Inspector directory to its target log file directory, LastLogInspected is updated.

- Finally, as the changes are applied to the database, LastLogReplayed is updated.

The following figure illustrates the replication pipeline with these values shown:

These numbers are also available in Performance Monitor.

Looking at the Replication Pipeline figure once more, we have our database which is modified by the store. That generates a log file. The Replication service sees the log file created, and updates LastLogCopyNotified. It copies the log file to the Inspector directory and updates LastLogCopied. After the log file is inspected, it is moved to the log directory used by the Replication service for the storage group copy, and then LastLogInspected is updated. Finally, the changes are applied to the copy of the database and LastLogReplayed is updated. And these two databases now have these changes in common.
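Since each of the four values is a log generation number, the backlog at each stage of the pipeline is just the difference between neighboring values. Here is a small Python sketch of that arithmetic. The `CopyStatus` class and the key names are hypothetical; this is plain subtraction on the values above, not a description of how the cmdlet computes anything it reports.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class CopyStatus:
    last_log_copy_notified: int  # LastLogCopyNotified
    last_log_copied: int         # LastLogCopied
    last_log_inspected: int      # LastLogInspected
    last_log_replayed: int       # LastLogReplayed


def pipeline_backlog(status: CopyStatus) -> Dict[str, int]:
    """Number of log files sitting between each pair of pipeline stages."""
    return {
        "waiting_to_copy": status.last_log_copy_notified - status.last_log_copied,
        "waiting_for_inspection": status.last_log_copied - status.last_log_inspected,
        "waiting_for_replay": status.last_log_inspected - status.last_log_replayed,
    }
```

If, say, the values were 120, 118, 118, and 110, there would be 2 logs waiting to be copied, none waiting for inspection, and 8 waiting for replay.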

Cluster Continuous Replication and Failovers

Let's talk about failover in a CCR environment. The Cluster service's resource monitor keeps tabs on the resources in the cluster. Keep in mind that failure detection is not instantaneous. Depending on the type of failure, it could be a fraction of a second to several seconds before the failure is noticed.

Failover behavior is dependent on which resource(s) failed:

- In the case of the failure of an IP address or network name resource, the behavior is to assume that a machine, or network access to a machine, has failed, and the services are moved over from the active node to the passive node.

- If Exchange services fail or timeout, they are restarted on the same node, and failover does not occur.

- Should a database fail, or should a database disk go offline, it will not trigger failover. The reason for this is that you now have the ability to have as many as 50 databases on a single mailbox server, including a clustered mailbox server. Moving all of the databases because one database failed would result in a lot of downtime for the storage groups/databases that are still running.
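The three rules above boil down to a small decision table. Here it is as a Python sketch; the enum names are made up for illustration and do not correspond to real cluster resource type names.

```python
from enum import Enum


class Resource(Enum):
    IP_ADDRESS = "ip address"
    NETWORK_NAME = "network name"
    EXCHANGE_SERVICE = "exchange service"
    DATABASE = "database"
    DATABASE_DISK = "database disk"


class Action(Enum):
    FAIL_OVER = "move services to the passive node"
    RESTART_ON_SAME_NODE = "restart on the same node"
    NO_FAILOVER = "no failover"


def action_for(failed: Resource) -> Action:
    """Map a failed resource to the behavior described in the list above."""
    if failed in (Resource.IP_ADDRESS, Resource.NETWORK_NAME):
        # Treated as a machine or network access failure.
        return Action.FAIL_OVER
    if failed is Resource.EXCHANGE_SERVICE:
        return Action.RESTART_ON_SAME_NODE
    # A single database or database disk failure does not move everything.
    return Action.NO_FAILOVER
```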

Lossy Failovers

A Move-ClusteredMailboxServer operation is called a "handoff," or a scheduled outage. This is something an administrator does when they need to move the clustered mailbox server from one node to the other. A failover, often referred to as a lossy failover, is an unscheduled outage.

Consider the example of a CCR cluster. The active and passive are running along normally, and then suddenly the active node dies and goes offline. Because it is offline, the passive node cannot copy log files from it. Once the passive is the active, it starts making modifications to the database. The problem that occurs here is that without knowledge of the log files that were on Node 1, Node 2 starts generating log files with the same generation number. But of course, these files have different content.

So what happens when Node 1 comes back online? Node 1 will come online as the passive, and it will want to copy log files from Node 2. But you've now got two different log files with the same generation number, and potentially conflicting modifications. It literally could be the case that the modifications made on Node 1 before it died are the complete opposite of the modifications made on Node 2 after Node 1 died.

In this case, the log files have different content, the databases are different, and the storage group copies are in a state of divergence.

Divergence

Divergence is a case where the copy of the data has information that is not in the original. We expect the copy will run behind the original a little bit in time. So the original will have more data than the copy. If the copy has more data, or different data from the original, then we are in a state of divergence; the diverged data may be in the database, or it may be in the log files.

A lossy failover is always going to produce divergence. You can also get into a diverged state if "split-brain" syndrome happens in the cluster. Split brain is the condition where all network connectivity between the nodes is lost, and both nodes believe they are the active node. In this case, the Store is running on both nodes, and both nodes are making changes to their copies of the database. This means that, even though clients might only be able to connect to the Store on one of the nodes, or even if clients cannot connect to either of the Stores/nodes, background maintenance will still be occurring, and that is a logged operation. In other words, even if the Store is isolated from the network, logged physical changes to the database can and do occur.

Divergence can also be caused by administrator action. Remember that the recovery logic used by the Replication service is different from Eseutil /r. So if an administrator goes to the passive node and runs Eseutil /r, they will end up in a diverged state. The same is true if an administrator performs an offline defragmentation of the active or the passive copy.

Detecting Divergence

To deal with divergence, we first need to know how to always detect it, so we can then correct it. Detecting divergence is the job of the Replication service. Divergence checking runs when the first log file is copied by the Replication service. It compares the last log file on the passive that was copied by the Replication service with its equivalent on the active node. If the files are the same then we can continue copying log files.

Every log file has a header, and the header contains not only the creation time of the log file, but also the creation time of the previous log file in the sequence. This means that all log files are linked together by a chain of creation times, which allows us to know that we have the correct set of log files.

The last thing that we do is, before replacing Exx.log, make sure that the log file replacing it contains a superset of its data.
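The chain of creation times in the log headers lends itself to a simple check. Below is a minimal Python sketch, assuming a header that carries just a generation number, its own creation time, and the previous log's creation time. The `LogHeader` class is a hypothetical simplification of the real ESE log header.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class LogHeader:
    generation: int
    created: str                     # creation time of this log file
    previous_created: Optional[str]  # creation time of the previous log file


def chain_is_intact(headers: List[LogHeader]) -> bool:
    """True if every log points back at the creation time of its predecessor."""
    ordered = sorted(headers, key=lambda h: h.generation)
    for previous, current in zip(ordered, ordered[1:]):
        if current.generation != previous.generation + 1:
            return False  # a generation is missing from the set
        if current.previous_created != previous.created:
            return False  # this log was not written after that predecessor
    return True
```

Two log files with the same generation number but written on different nodes will carry different creation times, so swapping one into the other node's set breaks the chain.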

Correcting Divergence

The first thing to note about divergence is that a re-seed will always correct it. You can always re-seed a storage group copy to correct everything. But the problem is that this is a very expensive operation when dealing with large databases and/or constrained networks. So we tried to come up with some solutions. Looking at the common case, we expect to have a lossy failover where only a few log files are lost; that is, a lossy failover in which the passive node was, for example, 1, 2, or 3 log files behind the active (only a few log files failed to copy). The solutions we implemented include decreasing the log file size, so that the amount of data loss was smaller, and implementing a new feature called Lost Log Resilience.

Lost Log Resilience

Lost Log Resilience (LLR) is a new ESE feature in Exchange 2007. Remember, with write-ahead logging, the log record is written to disk before the modified database page is written to disk. Normally, as soon as the log record is written, it becomes possible that the page can be written to the database file. LLR introduces the ability to force the database modification to be held in memory until some more log generations have been created.

LLR only runs on the active copy of a database; if you analyze a passive copy's database header, you'll see that its database is always up-to-date.

As an example, if a database page is modified, and the log record describing the modification is written in log generation 10, we might require that the page not be written to the database file until log generation 12 has been created. Essentially, we're forcing the database on disk to remain a few generations behind the log files we created.
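The rule in that example can be written as a one-line check. This is a sketch only: `llr_depth` is a hypothetical name for "how many generations the database is held back", and the value 2 below is simply what the generation 10 / generation 12 example implies.

```python
def can_flush_page(record_generation: int, newest_generation: int, llr_depth: int) -> bool:
    """May a modified page be written to the database file yet?

    record_generation: log generation holding the record for the modification.
    newest_generation: highest log generation created so far.
    llr_depth: hypothetical number of generations to hold the page back.
    """
    return newest_generation >= record_generation + llr_depth
```

With a depth of 2, a page whose log record is in generation 10 stays in memory while the newest log is generation 10 or 11, and becomes writable once generation 12 exists.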

Log Stream Landmarks

For readers familiar with ESE logging recovery, LLR introduces a new marker within the log stream. You have the Checkpoint (the minimum generation that is required - the first log file required for recovery). And now at the other end of the log stream, there are two markers:

- Waypoint, or the maximum log required. This is the log file that is required for recovery. Without it, even with all of the log files up to this point, you cannot successfully recover.

- Committed log, which is further out. This is created data which is not technically needed for recovery of your database. However, if you lose the logs, you have lost some modifications.

Recovering from Divergence

It's through LLR that we can recover from divergence. The divergence correction code that uses this runs inside the Replication service on the passive. After realizing there is a divergence, the first thing it does is find the first diverged log file. It starts with the highest number and works backwards until it finds a log file that is exactly the same on the active. The log file above the one that is exactly the same is the first diverged log file.

The nice thing is, if the diverged log file is not required by the database, then we can just throw it away. We'll throw it away and copy the new data from the active. If the diverged file is required by the database, then a re-seed will be required to recover from divergence.
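Here is a rough Python sketch of that search and the decision that follows. It assumes each side's logs can be summarized as a mapping from generation number to some content fingerprint, and that "required by the database" means "at or below the maximum log required". Both are simplifications for illustration, and all of the names are hypothetical.

```python
from typing import Dict, Optional


def first_diverged_generation(
    passive_logs: Dict[int, str], active_logs: Dict[int, str]
) -> Optional[int]:
    """Walk down from the passive's highest generation to the first identical log.

    Both arguments map generation -> content fingerprint (an assumption).
    Returns None when the passive's newest log already matches the active.
    """
    for generation in sorted(passive_logs, reverse=True):
        if active_logs.get(generation) == passive_logs[generation]:
            diverged = generation + 1  # the log above the identical one
            return diverged if diverged in passive_logs else None
    # Nothing matched at all: everything the passive holds has diverged.
    return min(passive_logs) if passive_logs else None


def correction(first_diverged: Optional[int], max_log_required: int) -> str:
    """Decide how to recover, given the highest log the passive database requires."""
    if first_diverged is None:
        return "no-divergence"
    if first_diverged > max_log_required:
        # Not needed by the database: discard it and copy from the active.
        return "discard-and-recopy"
    return "re-seed"
```

This is where LLR pays off: because the database on disk is held a few generations behind, the first diverged log after a small lossy failover tends to be one the database does not yet require.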

Loss Calculations

When you fail over in a CCR environment, there is a loss calculation that occurs. For example, you just failed over in your CCR cluster, and you know Exx.log was not copied, so there was some loss. Now you want to quantify the loss. There are two numbers that you use for this.

Remember, the Replication service keeps track of the last log that the store generated. But the store, just in case the Replication service is down, also updates, in the cluster database, the last log generation that it created. When you run the Get-StorageGroupCopyStatus cmdlet, LastLogGenerated represents the maximum of those two numbers.

So when you do a failover, we compare the last log generation with the last log that was copied. The gap between them is how many log files you just lost. The lossy-ness setting (AutoDatabaseMountDial) on your storage group is compared to that number to determine whether it can mount automatically.

If you cannot mount a specific storage group, the Replication service will run on the active (which was the old passive). It will "wake up" every once in a while, try to contact the passive (which was the old active), and copy the missing log files. If it can copy enough log files to reduce the "lossy-ness" to an acceptable amount, then the storage group will come online.

There are three settings for AutoDatabaseMountDial: Lossless (0 logs lost); GoodAvailability (3 logs lost) and BestAvailability (default; 6 logs lost).
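Putting the loss calculation and the dial together, a minimal Python sketch looks like this. The function and parameter names are hypothetical, and treating each setting as "up to N logs lost is acceptable" (an inclusive comparison) is my reading of the values above, not something taken from the product code.

```python
# Maximum number of lost logs tolerated by each AutoDatabaseMountDial setting.
DIAL_LIMITS = {
    "Lossless": 0,
    "GoodAvailability": 3,
    "BestAvailability": 6,  # the default
}


def logs_lost(
    last_generated_seen_by_replication: int,
    last_generated_in_cluster_db: int,
    last_copied: int,
) -> int:
    """Gap between the last log generated and the last log copied."""
    # LastLogGenerated is the maximum of the two recorded values.
    last_generated = max(last_generated_seen_by_replication, last_generated_in_cluster_db)
    return max(0, last_generated - last_copied)


def can_mount_automatically(lost: int, dial: str = "BestAvailability") -> bool:
    # Inclusive comparison is an assumption in this sketch.
    return lost <= DIAL_LIMITS[dial]
```

For example, if the Replication service last saw generation 118, the cluster database says 120, and generation 116 was the last one copied, then 4 logs were lost. That is within BestAvailability but not GoodAvailability or Lossless.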

Say, for example, you have the dial set to Lossless, and then for some reason, the active node dies. The passive node will become the active node, but the database won't come online. Should the original active appear, its log files will be copied, and one-by-one, the storage groups will start coming online.

Transport Dumpster

Finally, there is also the Transport Dumpster. After a lossy failover, the Replication service can look at the time stamp on the last log file it copied. And then it can ask Transport Dumpster to redeliver all email since that time stamp. So, although you might lose data representing some actions (for example, marking messages read/unread, moving messages, accepting meeting requests), all of the incoming mail can be re-delivered to the clustered mailbox server.
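Conceptually, the redelivery request is a filter on delivery time. The Python sketch below models the dumpster as a list of (delivery time, message id) pairs, which is purely an assumption for illustration; the real Transport Dumpster is not exposed this way.

```python
from datetime import datetime
from typing import List, Tuple


def messages_to_redeliver(
    dumpster: List[Tuple[datetime, str]],
    last_copied_log_time: datetime,
) -> List[str]:
    """Ids of messages delivered at or after the time stamp of the last copied log."""
    return [
        message_id
        for delivered_at, message_id in dumpster
        if delivered_at >= last_copied_log_time
    ]
```

Some of the messages selected this way may already be in the database copy, since the cutoff is the time stamp of the last log that was copied rather than an exact record of what was lost.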