Exchange 2007 - Continuous Replication Architecture and Behavior

I've previously blogged about the two forms of continuous replication that are built into Exchange 2007: Local Continuous Replication (LCR) and Cluster Continuous Replication (CCR). In those blogcasts, you can see replication at work, but we really don't get into the architecture under the covers. So in this blog, I'm going to describe exactly how replication works, what the various components are, and what the replication pipeline looks like.

As you may have heard or read, continuous replication is also known as "log shipping." In Exchange 2007, log shipping is the process of automating the replication of closed transaction log files from a production storage group (called the "active" storage group) to a copy of that storage group (called the "passive" storage group) that is located on a second set of disks (LCR) or on another server altogether (CCR). Once copied to the second location, the log files are then replayed into the passive copy of the database, thereby keeping the storage groups in sync with a slight time lag.

In simple terms, log shipping follows these steps:

- Seed the source database in the destination to create a target database.

- Monitor the source log directory for new logs to copy by subscribing to Windows file system notification events for that directory.

- Copy any new log files to the destination log directory.

- Inspect the copied log files.

- After the log files pass inspection, move them to the destination log directory and replay them into the copy of the database.
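The steps above can be sketched in a few lines of Python. This is only an illustration of the ordering (copy, inspect, move, replay), not the Replication service's actual code: the function names, the directory arguments, and the "non-empty file" inspection rule are all assumptions made for the example, and replay is reduced to returning the names of the logs that would be replayed.

```python
import shutil
from pathlib import Path


def inspect_log(path: Path) -> bool:
    """Hypothetical stand-in for real inspection: accept any non-empty file."""
    return path.stat().st_size > 0


def ship_logs(source_dir: Path, inspector_dir: Path, target_dir: Path) -> list[str]:
    """Copy closed logs to the inspector directory, inspect them, and move
    the ones that pass into the target log directory.

    Returns the names of the logs that are now ready for replay, in order.
    """
    ready = []
    for log in sorted(source_dir.glob("E00*.log")):
        if log.name == "E00.log":
            continue  # E00.log is the open log; only closed logs are shipped
        if (target_dir / log.name).exists():
            continue  # already shipped on an earlier pass
        staged = inspector_dir / log.name
        shutil.copy2(log, staged)  # copy step
        if inspect_log(staged):    # inspect step
            shutil.move(str(staged), str(target_dir / log.name))  # move step
            ready.append(log.name)  # a real replayer would replay it here
    return ready
```

A log that fails the check simply stays in the inspector directory in this sketch; the real service handles that case differently, as described later in this post.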

Microsoft Exchange Replication Service

Exchange 2007 implements log shipping using the Microsoft Exchange Replication Service (the "Replication service"). This service is installed by default on the Mailbox server role. The executable behind the Replication service is called Microsoft.Exchange.Cluster.ReplayService.exe, and it's located at <install path>\bin. The Replication service depends on the Microsoft Exchange Active Directory Topology Service. The Replication service can be stopped and started using the Services snap-in or from the command line. It is also configured to restart automatically after a failure or exception.

Running Replication Service in Console Mode

The Replication service can be started as a service or as a console application. Note, however, that running the service as a console application is strictly for troubleshooting and debugging purposes. This is not something that would be done as a regular administrative task. In console mode, the replication process checks for two parameters: -console and -noprompt.

-Console

If the -console switch is specified, or if no parameter is provided, the process checks whether it was started as a service or as a console application. This is done by looking at the SIDs in the process token. If the process has a service SID, or no interactive SID, the process is considered to be running as a service.
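The decision rule in that last sentence is small enough to write down. Here is a minimal sketch, assuming the "service SID" and "interactive SID" are the well-known Windows SIDs S-1-5-6 and S-1-5-4; the function name is made up, and the real check reads the SIDs from the process token rather than taking them as an argument.

```python
SERVICE_SID = "S-1-5-6"      # well-known SID for NT AUTHORITY\SERVICE (assumed to be the one meant)
INTERACTIVE_SID = "S-1-5-4"  # well-known SID for NT AUTHORITY\INTERACTIVE (assumed to be the one meant)


def runs_as_service(token_sids: set[str]) -> bool:
    """Apply the rule: a service SID, or no interactive SID, means 'service'."""
    return SERVICE_SID in token_sids or INTERACTIVE_SID not in token_sids
```

Note that a token with neither SID is treated as a service, which is what "or no interactive SID" implies.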

-NoPrompt

By default, the shutdown prompt is enabled. You use the -noprompt switch to disable it.

The Replication Service Internals

The Replication service is a managed code application that runs in the Microsoft.Exchange.Cluster.ReplayService.exe process.

Replication Service Registry Values

The Replication service keeps track of each storage group that is enabled for replication by keeping that information in the registry. The storage group replica information is stored in the registry under the object GUID of the storage group.

State

The replay state of a storage group that has continuous replication enabled is stored at HKLM\Software\Microsoft\Exchange\Replay\State\GUID.

StateLock

Each replica state is controlled via a StateLock to make sure that access to the state information is gated. As its name implies, StateLock is used to manipulate a state lock from inside the Replication service. There are two StateLocks created per storage group: one for the database file and one for the log files. These lock states are stored at HKLM\Software\Microsoft\Exchange\Replay\StateLock\GUID.
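To make the layout concrete, here is a small sketch that builds both key paths for a given storage group. The helper name is hypothetical, and whether the GUID is written with or without braces is not something this post specifies, so the caller passes it in whatever form the registry actually uses.

```python
REPLAY_ROOT = "HKLM\\Software\\Microsoft\\Exchange\\Replay"


def replay_registry_keys(storage_group_guid: str) -> dict[str, str]:
    """Return the State and StateLock key paths for one storage group."""
    return {
        "State": "\\".join((REPLAY_ROOT, "State", storage_group_guid)),
        "StateLock": "\\".join((REPLAY_ROOT, "StateLock", storage_group_guid)),
    }
```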

Replication Service Diagnostics Key

The Replication service stores its diagnostics configuration at HKLM\System\CurrentControlSet\Services\MSExchange Repl\Diagnostics.

You can query the current diagnostic level for the Replication service using an Exchange Management Shell command: Get-EventLogLevel -Identity "MSExchange Repl". This will also return the diagnostic level for the Replication service's Exchange VSS Writer, which is another subject altogether (maybe something for a future blog).

Replication Service Configuration Information in Active Directory

The Replication service uses the msExchhasLocalCopy attribute to identify which storage groups are enabled for replication in an LCR environment. msExchhasLocalCopy will be set at the database level, as well.

In a CCR environment, the Replication service uses the cluster database to store this information.

The Replication service uses an algorithm to search Active Directory for replica information:

- Find the Exchange Server object in Active Directory using the computer name. If there is no server object, return.

- Enumerate all storage groups that are on this Exchange server.

- For each storage group with msExchhasLocalCopy set to true:

a. Read the msExchESEParamCopySystemPath and msExchESEParamCopyLogFilePath attributes of the storage group.

b. Read the msExchCopyEdbFile attribute for each database in the storage group.
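The search above can be sketched against an in-memory stand-in for the directory. Nothing here talks to Active Directory: the dataclasses, the dictionary keyed by computer name, and the function name are all assumptions made for the example, with each field commented with the attribute it represents.

```python
from dataclasses import dataclass, field


@dataclass
class Database:
    name: str
    copy_edb_file: str  # stands in for msExchCopyEdbFile


@dataclass
class StorageGroup:
    name: str
    has_local_copy: bool          # stands in for msExchhasLocalCopy
    copy_system_path: str = ""    # stands in for msExchESEParamCopySystemPath
    copy_log_file_path: str = ""  # stands in for msExchESEParamCopyLogFilePath
    databases: list[Database] = field(default_factory=list)


def find_replica_configs(directory: dict[str, list[StorageGroup]],
                         computer_name: str) -> list[dict]:
    """Return replica settings for every LCR-enabled storage group on a server."""
    storage_groups = directory.get(computer_name)
    if storage_groups is None:
        return []  # no server object for this computer name
    configs = []
    for sg in storage_groups:
        if not sg.has_local_copy:
            continue  # storage group is not enabled for replication
        configs.append({
            "storage_group": sg.name,
            "system_path": sg.copy_system_path,
            "log_path": sg.copy_log_file_path,
            "edb_files": {db.name: db.copy_edb_file for db in sg.databases},
        })
    return configs
```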

Replication Components

The Replication service implements log shipping by using several components to provide replication between the active and passive storage groups.

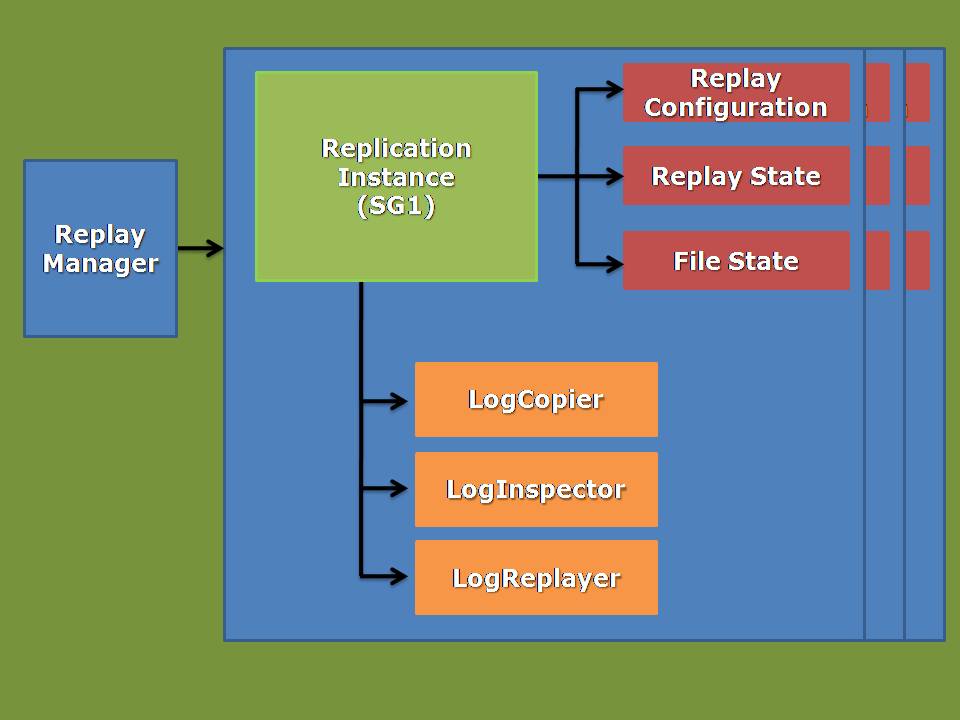

Replication Service Object Model

The Replication service is responsible for creating an instance of the replica associated with a storage group. The object model below shows the different objects that are created for each storage group copy.

In a CCR environment, the Replication service runs on both the active node and the passive node. As a result, both an active and a passive replica instance will be created.

Copier

The copier is responsible for copying closed log files from the source to the destination. This is an asynchronous operation in which the Replication service continuously monitors the source. As soon as a new log file is closed on the source, the copier will copy the log file to the inspector location on the target.

Inspector

The inspector is responsible for verifying that the log files are valid. It checks the destination inspector directory on a regular basis. When a new log file is available, it will be checked (checksummed for validity) and then copied to the database subdirectory. If a log file is found to be corrupt, the Replication service will request a re-copy of the file.

LogReplayer

The logreplayer is responsible for replaying log files into the passive database. It also has the ability to batch multiple log files into a single batch replay. In LCR, replay is performed on the local machine, whereas with CCR, replay is performed on the passive node. This means that the performance impact of replay is higher for LCR than for CCR, because in LCR the replay work runs on the same machine as the active storage group.

Truncate Deletor

The truncate deletor is responsible for deleting log files that have been successfully replayed into the passive database. This is especially important after an online backup is performed on the active copy, since online backups delete log files that are not required for recovery of the active database. The truncate deletor makes sure that any log files that have not been replicated and replayed into the passive copy are not deleted by an online backup of the active copy.
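The rule the truncate deletor enforces boils down to "a log may go only when both sides are done with it." Here is a minimal sketch of that rule, assuming logs are identified by generation number and that two watermarks are available: the highest generation covered by the backup and the highest generation replayed into the passive copy. Those parameter names are invented for the example and are not the service's own.

```python
def logs_safe_to_truncate(generations: list[int],
                          backed_up_through: int,
                          replayed_through: int) -> list[int]:
    """Return the log generations that both the backup and the passive copy
    no longer need, in ascending order."""
    limit = min(backed_up_through, replayed_through)
    return [g for g in sorted(generations) if g <= limit]
```

For example, with logs 1 through 5 on disk, a backup covering generation 5, and replay caught up only to generation 3, logs 4 and 5 are kept.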

Incremental Reseeder

The incremental reseeder is responsible for ensuring that the active and passive database copies have not diverged after a database restore has been performed, and after a failover in a CCR environment.

Seeder

The seeder is responsible for creating the baseline content of a storage group used to start replay processing. The Replication service performs automatic seeding for new storage groups.

Replay Manager

The replay manager is responsible for keeping track of all replica instances. It will create and destroy the replica on demand based on the online status of the storage group. The configuration of a replica instance is intended to be static; therefore, when a replica instance configuration is changed, the replica will be restarted with the updated configuration. In addition, during shutdown of the Replication service, the configuration is not saved. As a result, each time the Replication service starts it has an empty replica instance list. When the Replication service starts, the replay manager discovers the storage groups that are currently online to create a "running instance" list.

The replay manager periodically runs a "configupdater" thread to scan for newly configured replica instances. The configupdater thread runs in the Replication service process every 30 seconds. It will create and destroy a replica instance based on the current database state (e.g., whether the database is online or offline). The configupdater thread uses the following algorithm:

- Read instance configuration from Active Directory

- Compare list of configurations found in Active Directory against running storage groups/databases

- Produce a list of running instances to stop and a list of configurations to start

- Stop running instances on the stop list

- Start instances on the start list

Effectively, therefore, the replay manager always has a dynamic list of the replica instances.
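The compare-and-plan part of the configupdater algorithm is essentially a diff between two dictionaries. Here is a minimal sketch, assuming each instance is keyed by its storage group GUID and that a configuration is a plain dictionary; the function name and data shapes are made up for the example. An instance whose configuration has changed appears on both lists, which matches the restart behavior described above.

```python
def plan_instance_changes(configured: dict[str, dict],
                          running: dict[str, dict],
                          online: set[str]) -> tuple[list[str], list[str]]:
    """Return (instances to stop, instances to start), each sorted by key."""
    # Only storage groups that are both configured and online should run.
    wanted = {guid: cfg for guid, cfg in configured.items() if guid in online}
    # Stop anything no longer wanted, or running with a stale configuration.
    to_stop = sorted(g for g, cfg in running.items() if wanted.get(g) != cfg)
    # Start anything wanted that is missing or has a stale configuration.
    to_start = sorted(g for g, cfg in wanted.items() if running.get(g) != cfg)
    return to_stop, to_start
```

A caller would stop everything on the first list before starting everything on the second, in the same order as the last two steps of the algorithm.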

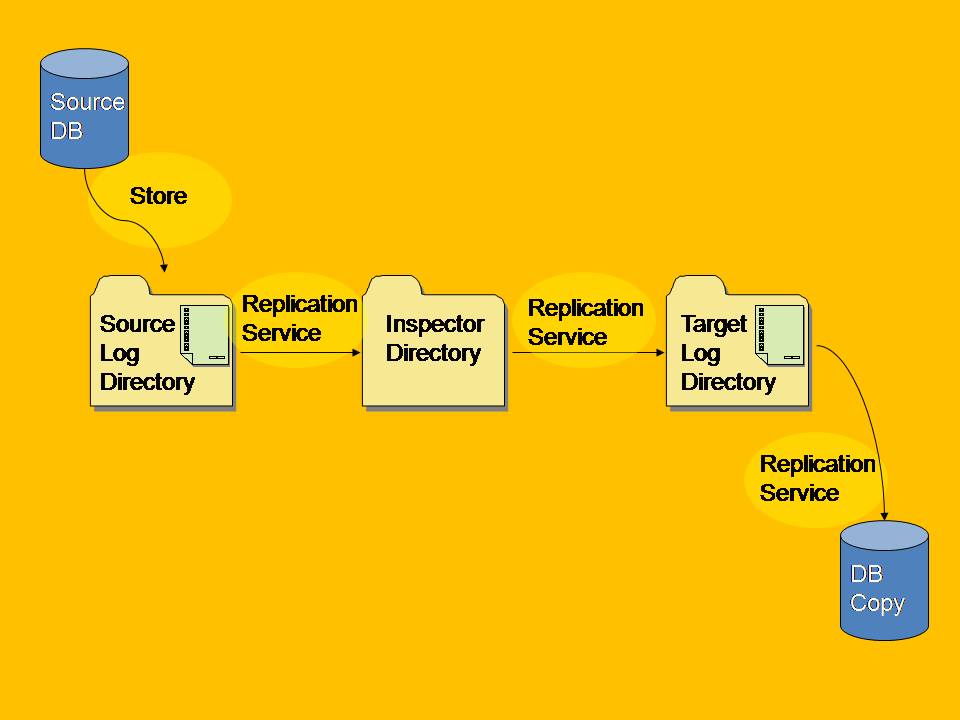

Replication Pipeline

The replication pipeline implemented by the Replication service is shown below. In an LCR environment, the source database and target database are on the same machine. In a CCR environment, the source and target databases are on different machines (different nodes in the same failover cluster).

Log Shipping and Log File Management

The Replication service uses an Extensible Storage Engine (ESE) API to inspect and replay log files that are copied over from the active storage group to the passive storage group. Once the log files are successfully copied to the inspector directory, the log inspector object associated with the replica instance verifies the log file header. If the header is correct, the log file will be moved to the target log directory and then replayed into the passive copy of the database.

Log Shipping Directory Structure

The Replication service creates a directory structure for each storage group copy. This per-storage group directory structure is identical in both LCR and CCR environments, with one exception: in a CCR environment, a content index catalog directory is also created.

Inspector Directory

The Inspector directory contains log files copied by the Copier component. Once the log inspector has verified that a log file is not corrupt, the log file will be moved to the storage group copy directory and replayed into the passive copy of the database.

IgnoredLogs Directory

The IgnoredLogs directory is used to keep valid files that cannot be replayed for any reason (e.g., the log file is too old or the log file is corrupt). The IgnoredLogs directory might also have the following subdirectories:

E00OutofDate

This is the subdirectory that holds any old E00.log file that was present on the passive copy at the time of failover. An E00.log file is present on the passive copy if that copy was previously running as the active copy. Event 2013 is logged in the Application event log to indicate the failure.

InspectionFailed

This is the subdirectory that holds log files that have failed inspection. Event 2013 is logged when a log file fails inspection. The log file is then moved to the InspectionFailed directory. The log inspector uses Eseutil and other methods to verify that a log file is physically valid. Any exception returned by these checks is treated as a failure, and the log file is deemed to be corrupt.
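Moving a failed log out of the way can be sketched as follows. The assumption here, which this post does not spell out, is that IgnoredLogs sits directly under the storage group copy directory; the function name is hypothetical, and the event logging is left out.

```python
import shutil
from pathlib import Path


def quarantine_failed_log(log_path: Path, storage_group_copy_dir: Path) -> Path:
    """Move a log that failed inspection into IgnoredLogs\\InspectionFailed
    and return its new location."""
    failed_dir = storage_group_copy_dir / "IgnoredLogs" / "InspectionFailed"
    failed_dir.mkdir(parents=True, exist_ok=True)  # create on first failure
    destination = failed_dir / log_path.name
    shutil.move(str(log_path), str(destination))
    return destination
```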

Well, there you have it. I hope you found this useful and informative.