The Case in the Corner Series: Session Pool Exhaustion

[Today’s post comes to us courtesy of Wayne Gordon McIntyre from Commercial Technical Support]

Troubleshooting resource exhaustion is something you have no choice but to get good at in support, and not to worry, we get plenty of practice given the number of performance-based cases that come in on a regular basis. A potential resource exhaustion condition is generally pretty easy to spot: the symptoms are resolved (perhaps the better word is relieved) by a reboot, only to resurface a few days to weeks later, depending on how fast the particular resource is exhausted. So, if you encounter a server that has to be rebooted every few days to work properly, you probably have a resource exhaustion issue, which is usually caused by a memory leak. This case discusses such a condition; however, the exhaustion occurred in an area of memory where we had never previously encountered it, and have not encountered it since, which puts it into the corner case bucket.

The case involved an SBS 2003 server, which, being 32-bit, has many memory resource limitations, especially for kernel mode. The main ones are 2GB of virtual address space for the kernel (assuming no /3gb switch), 530MB for paged pool (which can be paged out), and 256MB for nonpaged pool (which can’t be paged out to a pagefile) on server SKUs. For a complete list of memory limits, see:

https://msdn.microsoft.com/en-us/library/aa366778(VS.85).aspx#memory_limits

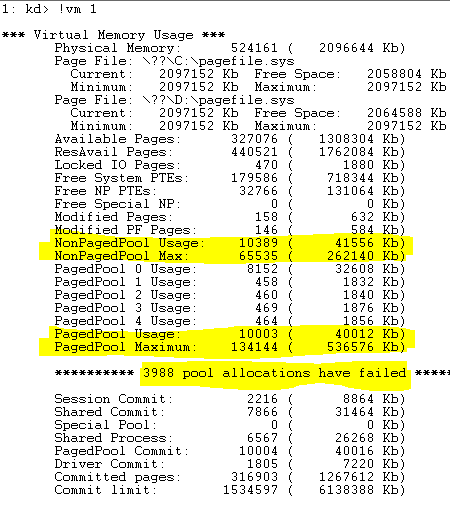

The symptoms in this case were that MMC snap-ins such as Active Directory Users and Computers, the SBS MMC, and so on were not loading correctly. Of course, the customer would reboot and everything would work again until one or two days later, when the symptoms would re-emerge. Going through the case notes, it seemed they had checked all of the usual suspects for resource consumption issues but were not making any progress. Since I had the dump file, I decided to double-check everything. I started by inspecting virtual memory usage with !vm 1 (!vm displays summary information about virtual memory use statistics; the 1 just causes the display to omit process-specific statistics, which I don’t care about at the moment).

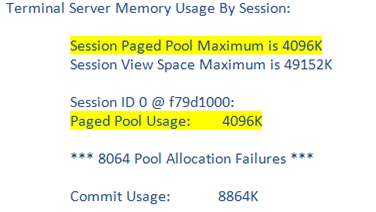

The output immediately stood out as interesting to me: NonPagedPool Usage is roughly 41MB and PagedPool Usage is roughly 39MB (we can also see that the potential maximums are 256MB and 530MB, which means there is no /3gb switch at play). The interesting part is that there have been 3988 pool allocation failures, yet there is plenty of pool memory available. Luckily, the OS keeps counters for all pool allocation failures and their reasons in “MmPoolFailures” and “MmPoolFailureReasons”. Next, I dumped those addresses in memory using dd nt!MmPoolFailures and dd nt!MmPoolFailureReasons, which showed me that the failures were in session pool and that there were actually a total of 8064 pool allocation failures. After consulting the debugger’s help on how to view session paged pool statistics, I discovered a better method: enabling the bit 2 flag (0x4) with the !vm command. !vm 4 includes session memory in the output, and this is where it became clear where the resource exhaustion was occurring. The bottom portion of the output is shown below.

Ahh, so we are out of session paged pool, but who uses session paged pool? It turns out that SessionPoolSize is used for video card driver allocations when Terminal Services is enabled, and SessionViewSize (desktop heap when TS is enabled) is used for GUI objects such as fonts and menus. The default value of SessionPoolSize on an SBS 2003 server is 4MB; however, this value is controllable through the SessionPoolSize DWORD in “HKLM\System\CurrentControlSet\Control\Session Manager\Memory Management”. In this case, 4MB was not enough session paged pool for the customer’s video card driver allocations, so we increased it to 16MB, which resolved the problem. The KB article below covers the sizes you can configure for SessionPoolSize and SessionViewSize, as well as their default values.

https://support.microsoft.com/default.aspx?scid=kb;EN-US;840342
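
For anyone who wants to script the registry change described above, here is a minimal sketch in Python that renders a .reg file for it. It is an illustration, not the exact procedure used in this case: the helper name render_reg_dword is hypothetical, the key path reads the CCS shorthand as CurrentControlSet, and the value 16 assumes SessionPoolSize is expressed in megabytes (check the KB article before relying on that).

```python
# Hypothetical helper for illustration; not part of any Microsoft tool.
MEMORY_MANAGEMENT_KEY = (
    r"HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet"
    r"\Control\Session Manager\Memory Management"
)


def render_reg_dword(key_path: str, value_name: str, value: int) -> str:
    """Return .reg file text that sets a single REG_DWORD value."""
    if not 0 <= value <= 0xFFFFFFFF:
        raise ValueError("a DWORD must fit in 32 bits")
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        f"[{key_path}]",
        # .reg files store DWORDs as eight hexadecimal digits.
        f'"{value_name}"=dword:{value:08x}',
        "",
    ]
    return "\n".join(lines)


# Assumption: the value is in megabytes, so 16 requests a 16MB session pool.
reg_text = render_reg_dword(MEMORY_MANAGEMENT_KEY, "SessionPoolSize", 16)
print(reg_text)
```

The script only produces text; nothing is written to the registry until the output is saved to a .reg file, reviewed, and imported on the server. Generating the file rather than editing the registry directly keeps the change easy to inspect and to hand to a customer.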