Software Defined Networking – Hybrid Clouds using Hyper-V Network Virtualization (Part 1)

Hello Readers and Viewers!

By now, you have likely seen Brad Anderson’s Blog Post (Networking Without Limits: SDN) where he talks about Software Defined Networking, and how it applies to the larger topic of Transform the Datacenter.

So what the heck is SDN, you ask? Well, as Brad mentioned in his blog, at its core SDN is all about using software to make your network a pooled, automated resource that can seamlessly extend across cloud boundaries. SDN begins with abstracting your applications and workloads from your underlying physical network through network virtualization. It then provides a consistent platform to express and enforce policy across all clouds, with built-in services such as gateways seamlessly extending your datacenters across these clouds. Finally, SDN provides a standards-based mechanism to automate deployment of your networks.

In that context, this series of blog posts details how Windows Server 2012 R2 and System Center 2012 R2 deliver a built-in, hybrid cloud-enabled SDN solution. Hyper-V Network Virtualization and System Center 2012 R2 Virtual Machine Manager provide multi-tenant isolation and network policy deployment, while a multi-tenant software gateway that supports site-to-site VPN, forwarding and NAT enables new hybrid cloud scenarios. Here is a table of contents for the full series:

Table of Contents

- Part 1: Hyper-V Network Virtualization: Architecture and Key concepts.

- Part 2: Implementing Hyper-V Network Virtualization: Conceptual “Simple” Setup.

- Part 3: Bring it all Together: Cloud Based Disaster Recovery (DR) using Windows Server 2012 R2.

Hyper-V Network Virtualization: Architecture and Key Concepts

Hyper-V Network Virtualization provides a complete SDN end-to-end solution for network virtualization that uses a network overlay technology paired with a control plane and gateway to round out the solution. These three pieces are embodied in:

- Hyper-V Virtual Switch (with a virtual network adapter attached to a virtual network),

- System Center Virtual Machine Manager (SCVMM) as the control plane

- The inbox Windows HNV Gateways

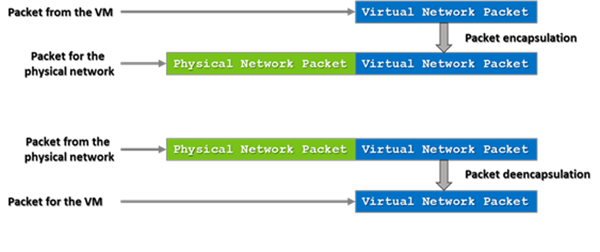

At the core of HNV is a network overlay technology that allows separation between the virtual network and the underlying physical network. Network overlays are a well-known technique that layers a new network on top of an existing network. This is often done using a network tunnel. Typically, this tunnel is provided by packet encapsulation, essentially putting the packet for the virtual network inside a packet that the physical infrastructure can route.

Network overlays are widely used today in a number of scenarios, including VPN connections over wide area network (WAN) links and MPLS connections over a variety of telecommunications networks. A key property of an overlay network is that its endpoints have the intelligence needed to begin or terminate the tunnel by encapsulating or deencapsulating the packet. As mentioned above, HNV implements the overlay network in the Hyper-V Virtual Switch through the HNV filter, which encapsulates and deencapsulates packets as they enter and exit the virtual machines.

On top of an overlay network, HNV also provides a control plane that manages the overlay network independently from the physical network. There are two main types of control planes, centralized and distributed, each with its own strengths. HNV uses a centralized control plane to distribute to the endpoints the policies they need to properly encapsulate and deencapsulate packets. This allows for a centralized policy that has a global view of the virtual network, while the actual encapsulation and deencapsulation happens at each end host based on this policy. This makes for a very scalable solution, because policy updates are relatively infrequent while encapsulation and deencapsulation are very frequent (every packet). Windows provides PowerShell APIs to program the policies down to the Hyper-V Virtual Switch, which means anyone could build the central policy store. System Center Virtual Machine Manager has implemented the necessary functionality to be the central policy store and is the recommended solution.
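To make the split between a central policy store and per-host enforcement more concrete, here is a minimal sketch in Python. It does not use the HNV PowerShell APIs, and every class, field and address in it is hypothetical. It only illustrates the idea that the store pushes records when policy changes, while each host resolves addresses from its own local copy.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class LookupRecord:
    """One policy entry: where a customer address lives on the physical network."""
    vsid: int          # Virtual Subnet ID
    customer_ip: str   # address inside the VM network
    provider_ip: str   # address of the hosting endpoint on the physical network


@dataclass
class Host:
    """An end host that only consults its local copy of the policy."""
    name: str
    policy: Dict[Tuple[int, str], str] = field(default_factory=dict)

    def apply(self, record: LookupRecord) -> None:
        self.policy[(record.vsid, record.customer_ip)] = record.provider_ip

    def resolve(self, vsid: int, customer_ip: str) -> Optional[str]:
        # Per-packet path: a local dictionary lookup, no call to the central store.
        return self.policy.get((vsid, customer_ip))


class CentralPolicyStore:
    """Holds the global view and pushes changes to every registered host."""

    def __init__(self) -> None:
        self.hosts: List[Host] = []
        self.records: List[LookupRecord] = []

    def register(self, host: Host) -> None:
        self.hosts.append(host)
        for record in self.records:   # bring a newly added host up to date
            host.apply(record)

    def publish(self, record: LookupRecord) -> None:
        self.records.append(record)
        for host in self.hosts:       # infrequent path: only when policy changes
            host.apply(record)


store = CentralPolicyStore()
host_a, host_b = Host("host-a"), Host("host-b")
store.register(host_a)
store.publish(LookupRecord(vsid=5001, customer_ip="10.0.0.5", provider_ip="192.168.1.10"))
store.register(host_b)  # registered late, still receives the existing record

print(host_a.resolve(5001, "10.0.0.5"))
print(host_b.resolve(5001, "10.0.0.5"))
```

The point of the design is that `resolve` never leaves the host, so the frequent operation stays cheap, and only `publish` touches every host.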

Finally, because having a virtual network that cannot communicate with the outside world is of little value, gateways are provided that bridge between the virtual network and either the physical network or other virtual networks. Windows Server 2012 R2 provides an inbox gateway and several third parties including F5, Iron Networks and Huawei have gateways that can provide the bridge needed for virtual networks.

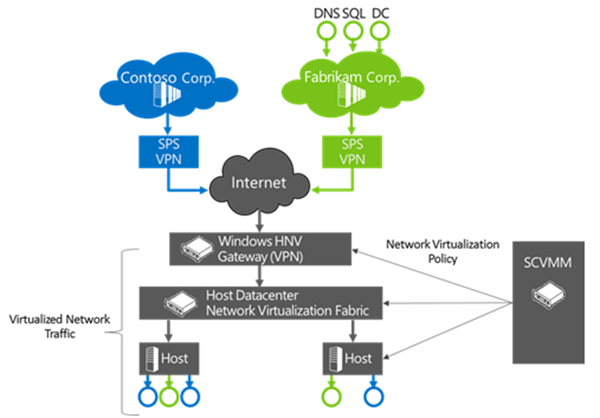

The following Figure shows how the three pieces (SCVMM, the Windows Gateway and the Hyper-V virtual switch) combine to provide a complete SDN solution. In this example the inbox Windows HNV gateway is providing VPN capabilities to connect customers over the Internet to datacenter resources being hosted at a service provider.

Now that we have covered the general concept of how HNV works using a combination of overlay network technologies, a control plane and gateways, let’s look at specific concepts in HNV.

Virtual Machine Network

The virtual machine network is a core concept in network virtualization. Much like a virtual server is a representation of a physical server including physical resources and OS services, a virtual network is a representation of a physical network including IP, routing policies, etc. Just like a physical network forms an isolation boundary where there needs to be explicit access to go outside the physical network, the VM network also forms an isolation boundary for the virtual network.

In addition to being an isolation boundary, a VM network has most of the characteristics of a physical network but several are unique to VM Networks.

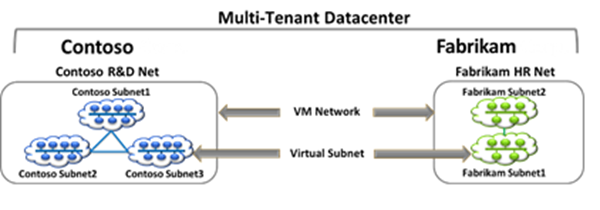

First, there can be many VM networks on a single physical network. This is a major advantage for virtual networks, particularly in datacenters that contain multiple tenants, such as those run by a service provider or cloud provider. These VM networks are isolated from each other even though their traffic flows across the same physical network and even through the same hosts. Specifically, the Hyper-V Virtual Switch is responsible for this isolation.

Second, it is good to understand how IP and MAC addresses work in VM networks. There are two important cases. Within a single VM network, there cannot be any overlapping IP or MAC addresses, just like in a physical network. On the other hand, different VM networks can contain the same IP and MAC addresses, even when those VM networks are on the same physical network. HNV supports both IPv4 and IPv6 addresses. Currently, HNV does not support a mixture of IPv4 and IPv6 customer addresses in a particular VM network: each VM network must be configured to use either IPv6 or IPv4 for its customer addresses. On a single host there can be a mixture of IPv4 and IPv6 customer addresses if they are in different VM networks.
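The addressing rules above can be sketched as a small check. This is an illustration only, not how SCVMM stores addresses: the `AddressRegistry` class and the tenant names are hypothetical, and it covers IP addresses but not MAC addresses.

```python
import ipaddress
from collections import defaultdict


class AddressRegistry:
    """Tracks customer IP addresses per VM network (illustrative only)."""

    def __init__(self):
        self._addresses = defaultdict(set)  # VM network name -> customer addresses
        self._family = {}                   # VM network name -> 4 or 6

    def add(self, vm_network, customer_ip):
        ip = ipaddress.ip_address(customer_ip)
        # The first address added fixes the address family for the VM network.
        family = self._family.setdefault(vm_network, ip.version)
        if ip.version != family:
            raise ValueError(f"{vm_network} uses IPv{family}; cannot add {ip}")
        if ip in self._addresses[vm_network]:
            raise ValueError(f"{ip} is already in use in {vm_network}")
        self._addresses[vm_network].add(ip)


registry = AddressRegistry()
registry.add("tenant-a", "10.0.0.5")
registry.add("tenant-b", "10.0.0.5")      # same address, different VM network: allowed
registry.add("tenant-c", "2001:db8::5")   # an IPv6-only VM network alongside IPv4 ones

for vm_network, address in [("tenant-a", "10.0.0.5"), ("tenant-a", "2001:db8::6")]:
    try:
        registry.add(vm_network, address)
    except ValueError as error:
        print("rejected:", error)
```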

Third, only VMs can be joined to a virtual network. Windows does allow the host operating system to send traffic through the Hyper-V Virtual Switch and to be attached to a VM network, but SCVMM in System Center 2012 R2 won’t configure the host operating system to be attached to a virtual network.

Fourth, currently a single instance of SCVMM manages a particular VM network. This limits the size of the VM network to the number of VMs supported by a single instance of SCVMM.

In SCVMM, the Virtual Machine Network is called “VM Network” and has a workflow that allows for the creation and deletion of VM Networks and managing the properties associated with a VM Network. In the HNV Windows PowerShell APIs, the VM Network is identified by a Routing Domain ID (RDID) property. This RDID must be unique within the physical network and is set automatically by SCVMM.

Virtual Subnet

Within a VM Network, there must be at least one Virtual Subnet. The concept of a Virtual Subnet is identical to a subnet in a physical network in that it provides a broadcast domain and is a single LAN segment. In HNV, the Virtual Subnet is encoded in each virtualized packet in the Virtual Subnet ID (VSID) property, a 24-bit field discoverable on the wire. Because of the close approximation to VLANs, valid VSIDs range from 4096 to 16,777,214, beginning after the valid VLAN range and leaving the last value in the 24-bit range (16,777,215) reserved for private use. The Virtual Subnet ID must also be unique within a particular physical network, typically defined as the network being managed by SCVMM.
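As a quick illustration of the VSID range, the following sketch validates a VSID and hands out unused ones. The constant and class names are hypothetical, and the simple counter is an assumption for illustration; it is not how SCVMM allocates VSIDs.

```python
MIN_VSID = 4096          # first value above the VLAN ID range
MAX_VSID = 2**24 - 2     # 16,777,214; the top value of the 24-bit range is reserved


def is_valid_vsid(vsid: int) -> bool:
    """Return True if the value falls in the usable VSID range."""
    return MIN_VSID <= vsid <= MAX_VSID


class VsidAllocator:
    """Hands out VSIDs that are unique within one managed physical network."""

    def __init__(self) -> None:
        self._next = MIN_VSID

    def allocate(self) -> int:
        if self._next > MAX_VSID:
            raise RuntimeError("no VSIDs left in the 24-bit range")
        vsid = self._next
        self._next += 1
        return vsid


allocator = VsidAllocator()
first, second = allocator.allocate(), allocator.allocate()
print(first, second, is_valid_vsid(100), is_valid_vsid(MAX_VSID))
```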

To understand how VM networks and Virtual Subnets relate to each other, the previous figure shows an example of a multi-tenant datacenter where network virtualization is turned on. In this example, there are two tenants, representing different companies, potentially competitors. They want their traffic to be securely isolated from each other, so they form two VM Networks. Inside each of these VM Networks they are free to create one or more Virtual Subnets and attach VMs to particular subnets, creating the network topology that suits their needs.

VM Network Routing

After VM Networks and Virtual Subnets, the next concept to understand is how routing is handled in VM Networks. Specifically, there are two main cases to look at: routing between Virtual Subnets and routing beyond the VM Network.

- Routing between Virtual Subnets

In a physical network, a subnet is the L2 domain where machines (virtual and physical) can directly communicate with each other without having to be routed. In Windows, if you statically configure a network adapter you must set a “default gateway”, which is the IP address to which all traffic leaving the particular subnet is sent so that it can be routed appropriately. This is typically the router for your physical network. HNV uses a built-in router that is part of every host to form a distributed router for the virtual network. This means that every host, in particular the Hyper-V Virtual Switch, acts as the default gateway for all traffic that is going between Virtual Subnets that are part of the same VM network. In Windows Server 2012 and Windows Server 2012 R2 the address used as the default gateway is the “.1” address for the subnet. This .1 address is reserved in each Virtual Subnet for the default gateway and cannot be used by VMs in the Virtual Subnet.
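The reserved “.1” default gateway can be illustrated with Python’s standard `ipaddress` module. This is a sketch under one assumption: that “.1” means the first address after the subnet’s network address, which is the case for a /24. The function names are hypothetical.

```python
import ipaddress


def default_gateway(subnet_cidr):
    """Return the reserved gateway address: the first address after the network address."""
    network = ipaddress.ip_network(subnet_cidr)
    return network.network_address + 1


def assignable_addresses(subnet_cidr):
    """List the host addresses VMs may use, which excludes the reserved gateway."""
    network = ipaddress.ip_network(subnet_cidr)
    gateway = default_gateway(subnet_cidr)
    return [host for host in network.hosts() if host != gateway]


def next_hop(source_subnet_cidr, destination_ip):
    """Deliver directly inside the Virtual Subnet, otherwise hand off to the gateway."""
    network = ipaddress.ip_network(source_subnet_cidr)
    destination = ipaddress.ip_address(destination_ip)
    if destination in network:
        return destination
    return default_gateway(source_subnet_cidr)


print(default_gateway("10.0.1.0/24"))
print(next_hop("10.0.1.0/24", "10.0.1.7"))   # same Virtual Subnet
print(next_hop("10.0.1.0/24", "10.0.2.7"))   # different Virtual Subnet
```

In HNV the gateway in this sketch is not a separate device: the Hyper-V Virtual Switch on each host answers for that address.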

- Routing beyond a VM Network

The second main routing function is needed when a packet has to go beyond the VM Network. As explained above, the VM Network is an isolation boundary, but that does not mean that no traffic should go outside of the VM Network. In fact, you could easily argue that if there was no way to communicate outside the VM network then network virtualization wouldn’t be of much use. So much like physical networks have a network edge that controls what traffic can come in and out of the physical network, virtual networks also have a network edge in the form of an HNV gateway. The role of the HNV gateway is to provide a bridge between a particular VM network and either the physical network or other VM networks. There are several different capabilities that an HNV gateway can provide, including:

- Forwarding:

The most basic function of the gateway, which simply encapsulates or deencapsulates packets between the VM network and the physical network that the forwarding gateway is bridging to. This type of gateway would typically be used to connect a VM in a VM Network to a shared resource like storage or a backup service that is on the physical network. It can also be used to connect a VM Network to the edge of the physical network so that the VM Network can use the same edge services (firewall, intrusion detection) that the physical network provides.

- VPN:

  - Site-to-Site

The site-to-site function of the gateway allows direct bridging between a VM Network and a network (physical or another VM network) in a different datacenter. This is typically used in hybrid scenarios where part of a tenant’s datacenter network is on premises and part of the tenant’s network is hosted virtually in the cloud. The requirement when using the site-to-site function is that the VM network is routable in the network at the other site and the other site’s network is routable in the VM network. A site-to-site gateway is also required on each side of the connection (for example, one gateway on premises in the enterprise and one gateway at the service provider).

  - Remote Access (Point-to-Site)

The remote access function of the gateway allows a user on a single computer to bridge into the virtual network. This is similar to the site-to-site function but requires a gateway on only one side rather than on each side. An example is an administrator connecting to the virtual network from a laptop on the corporate network rather than from the on-premises datacenter network.

- NAT/Load balancing

The final function that the gateway can provide is NAT and/or load balancing. As expected, NAT and load balancing allow connectivity to an external network like the Internet without making the internal Virtual Subnets and IP addresses of the VM Network routable outside the VM Network. The NAT capability can present a single externally routable IP address for all connections leaving the VM Network, or it can provide a 1-1 mapping for a VM that needs to be accessed from the outside, where the address internal to the virtual network is mapped to an address that is accessible from the physical network. A load balancer provides the standard load balancing capabilities, the primary difference being that the Virtual IP (VIP) is on the physical network while the Dedicated IPs (DIPs) are in the VM Network.

In Windows Server 2012 R2 the in-box gateway provides Forwarding, Site-to-Site and NAT functionality. The gateway is designed to be run in a Virtual Machine and takes advantage of the host and guest clustering capabilities in Windows and Hyper-V to be highly available. A second major feature of the gateway is that a single gateway VM can be the gateway for multiple VM Networks. This is enabled by the Windows networking stack becoming multi-tenant aware with the ability to compartmentalize multiple routing domains from each other. This allows multiple VM Networks to terminate in the same gateway even if there are overlapping IP addresses.

In addition to the inbox Windows gateways, there are a growing number of third-party gateways that provide one or more of these functions. These gateways integrate with SCVMM just as the inbox Windows gateway does and act as the bridge between the VM network and the physical network.

A few other characteristics of SCVMM’s gateway support should be noted:

- There can only be one gateway IP Address per VM network.

- The gateway has to be put in its own Virtual Subnet.

- There can be multiple gateway VMs on the same host, but no other VMs attached to a VM Network can run on the same host as the gateway VMs.

For more details on how routing works and the packet flow related to routing in a VM network, see the PowerPoint slide deck that describes Hyper-V Network Virtualization packet flow.

Packet Encapsulation

The next major concept in network virtualization is packet encapsulation. For many, packet encapsulation is the core of network virtualization. In particular, in overlay networking technologies like HNV, packet encapsulation is the way in which the virtual network is separated from the physical network. The basic idea is that the packet for the virtual network is put inside (encapsulated in) a packet that is understood by the physical network. Before the packet is delivered to the VM, the packet that is understood by the physical network is stripped off (deencapsulated), leaving only the packet for the virtual network. As mentioned above, in HNV the VM switch provides the packet encapsulation functionality.

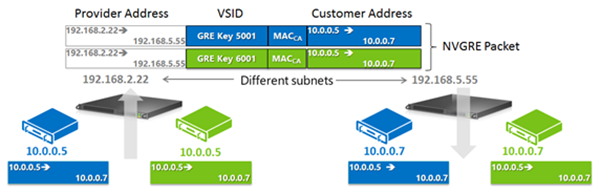

There are many different encapsulation formats including recent ones like Virtual eXtensible Local Area Network (VXLAN), Stateless Transport Tunneling Protocol for Network Virtualization (STT) and Generic Routing Encapsulation (GRE). HNV uses a particular format of GRE, called Network Virtualization using Generic Routing Encapsulation (NVGRE), for the encapsulation protocol.

GRE was chosen as the encapsulation protocol for HNV because it is an industry-standard mechanism for packet encapsulation. NVGRE is a specific format of GRE that was submitted jointly by Microsoft, Arista, Intel, Dell, HP, Broadcom, Emulex, and Mellanox as an Internet draft at the IETF. A full version of the specification can be found at: https://tools.ietf.org/html/draft-sridharan-virtualization-nvgre-03.

The NVGRE wire format has an outer header with source and destination MAC and IP addresses and an inner header with source and destination MAC and IP addresses. In addition, there is the standard GRE header between the outer and inner headers. In the GRE header, the Key field holds the 24-bit Virtual Subnet ID (VSID). As mentioned above, this allows the VSID to be explicitly set in each packet going across the virtual network.
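To show where the VSID sits on the wire, here is a small sketch that packs and unpacks just the 8-byte GRE header. The layout is an assumption taken from the published NVGRE specification (RFC 7637): the Key Present bit is set, the protocol type is 0x6558 (Transparent Ethernet Bridging), and the 32-bit Key field carries the 24-bit VSID followed by an 8-bit FlowID. The draft revision linked above may differ in detail, and the function names are hypothetical.

```python
import struct

GRE_KEY_PRESENT = 0x2000   # K bit set; checksum and sequence bits clear; version 0
ETHERTYPE_TEB = 0x6558     # Transparent Ethernet Bridging: the payload is an Ethernet frame


def pack_nvgre_header(vsid: int, flow_id: int = 0) -> bytes:
    """Build the GRE header that sits between the outer and inner headers."""
    if not 0 <= vsid < 2**24:
        raise ValueError("VSID must fit in 24 bits")
    if not 0 <= flow_id < 2**8:
        raise ValueError("FlowID must fit in 8 bits")
    key = (vsid << 8) | flow_id   # upper 24 bits: VSID, lower 8 bits: FlowID
    return struct.pack("!HHI", GRE_KEY_PRESENT, ETHERTYPE_TEB, key)


def unpack_vsid(header: bytes) -> int:
    """Read the VSID back out of a GRE header produced by pack_nvgre_header."""
    flags, protocol, key = struct.unpack("!HHI", header[:8])
    if not flags & GRE_KEY_PRESENT or protocol != ETHERTYPE_TEB:
        raise ValueError("not an NVGRE header")
    return key >> 8


header = pack_nvgre_header(5001)
print(header.hex())           # 2000655800138900
print(unpack_vsid(header))    # 5001
```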

- Customer Address (CA)

When looking at the NVGRE format, it is important to understand where the address space for the inner packet comes from. It is called the Customer Address (CA). The CA is the IP address of a network adapter that is attached to the VM Network. This address is only routable in the VM Network and is not necessarily routable anywhere else. In VMM, this CA comes from the IP Pool assigned to a particular Virtual Subnet in a VM Network.

- Provider Address (PA)

The outer packet is similar in that the IP address is called the Provider Address (PA). The PA must be routable on the physical network but should not be the IP address of the physical network adaptor or a network team. In VMM, the PA comes from the IP pool of the Logical Network.
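The relationship between CAs and PAs can be sketched as a wrap and unwrap step. This is a simplified model, not the Hyper-V Virtual Switch implementation: MAC addresses and the GRE header are left out, and all names and addresses are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InnerPacket:
    source_ca: str        # Customer Address of the sending VM
    destination_ca: str   # Customer Address of the receiving VM
    payload: bytes


@dataclass(frozen=True)
class OuterPacket:
    source_pa: str        # Provider Address of the sending host
    destination_pa: str   # Provider Address of the receiving host
    vsid: int             # Virtual Subnet ID carried with the packet
    inner: InnerPacket


def encapsulate(packet, vsid, local_pa, lookup):
    """Wrap a VM's packet in an outer packet the physical network can route."""
    destination_pa = lookup[(vsid, packet.destination_ca)]
    return OuterPacket(local_pa, destination_pa, vsid, packet)


def decapsulate(outer):
    """Strip the outer packet, returning the VSID and the original packet."""
    return outer.vsid, outer.inner


# Two tenants reuse the same CA; the VSID keeps the lookups apart.
lookup = {
    (5001, "10.0.0.7"): "192.168.4.22",
    (6001, "10.0.0.7"): "192.168.4.35",
}
packet = InnerPacket("10.0.0.5", "10.0.0.7", b"hello")
outer_a = encapsulate(packet, 5001, "192.168.4.11", lookup)
outer_b = encapsulate(packet, 6001, "192.168.4.11", lookup)
print(outer_a.destination_pa, outer_b.destination_pa)
print(decapsulate(outer_a) == (5001, packet))
```

The physical network only ever routes on the PAs, which is why the same CA can appear in more than one VM Network.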

The following shows how NVGRE, CAs and PAs relate to each other and the VMs on the VM Networks.

That’s it for Now, What’s Next!

In this blog post, we covered all of the major concepts related to Hyper-V Network Virtualization. From here, the next area to look at is how Windows Server 2012 R2 and System Center 2012 R2 deliver a hybrid cloud-enabled, built-in, end-to-end SDN solution through a simple conceptual setup.