Hyper-V Virtual Fibre Channel Design Guide

Virtual Fibre Channel for Hyper-V provides the guest operating system with unmediated access to a SAN by using a standard World Wide Name (WWN) associated with a virtual machine. Hyper-V users can now use Fibre Channel SANs to virtualize workloads that require direct access to SAN logical unit numbers (LUNs).

Fibre Channel SANs also allow you to operate in new scenarios, such as running the Failover Clustering feature inside the guest operating system of a virtual machine connected to shared Fibre Channel storage[1].

1 Virtual Fibre Channel Prerequisites

- One or more installations of Windows Server 2012 with the Hyper-V role installed.

- A computer with one or more Fibre Channel host bus adapters (HBAs) that have an updated HBA driver that supports virtual Fibre Channel. Updated HBA drivers are included with the in-box HBA drivers for some models. The HBA ports to be used with virtual Fibre Channel should be set up in a Fibre Channel topology that supports NPIV. To determine whether your hardware supports virtual Fibre Channel, contact your hardware vendor or OEM.

- An NPIV-enabled SAN.

- Virtual machines configured to use a virtual Fibre Channel adapter, which must use Windows Server 2008, Windows Server 2008 R2, or Windows Server 2012 as the guest operating system.

- Storage accessed through a virtual Fibre Channel supports devices that present logical units. Virtual Fibre Channel logical units cannot be used as boot media.

2 Terms and definitions

NPIV – Virtual Fibre Channel for Hyper-V guests uses the existing N_Port ID Virtualization (NPIV) T11 standard to map multiple virtual N_Port IDs to a single physical Fibre Channel N_port. A new NPIV port is created on the host each time you start a virtual machine that is configured with a virtual HBA. When the virtual machine stops running on the host, the NPIV port is removed.

vSAN – A virtual SAN (vSAN) defines a named group of physical Fibre Channel ports that are connected to the same physical SAN.

vHBA – A virtual HBA (vHBA) is the virtual hardware component assigned to a guest virtual machine. Each vHBA is assigned to a specific vSAN.

Fabric – A fabric is a collection of switches that have been linked together through a Fibre Channel cable, also known as an ISL (interswitch link). The term fabric is generally used to indicate a group of switches, although it is technically correct to refer to a single switch that is not connected to other switches as a single switch fabric.

MPIO – Multipathing software used to provide load balancing and/or fault tolerance for multiple Fibre Channel connections in the same host. Native MPIO is available in Windows Server 2012, and vendor-specific MPIO device-specific modules (DSMs) are available from your storage vendor.

WWPN – A World Wide Port Name (WWPN) is a unique identifier assigned to a Fibre Channel HBA port, similar to a MAC address. The storage fabric uses this identifier to recognize a specific HBA port.

Zoning – The process of mapping HBA WWPNs in the storage fabric and assigning them to storage ports to provide a path of communication with allocated storage.
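Because zoning depends on matching WWPNs exactly, it can help to normalize them to one notation before comparing host and switch records. The following is a minimal Python sketch, assuming a WWPN is written as 16 hexadecimal digits (64 bits) with optional colon, hyphen, or space separators. The function name `normalize_wwpn` and the sample value are illustrative only and do not come from any Hyper-V or switch tooling.

```python
import re


def normalize_wwpn(value):
    """Return a WWPN as eight colon-separated lowercase hex bytes."""
    # Drop the separators that different tools insert between bytes.
    digits = re.sub(r"[:\-\s]", "", value).lower()
    if not re.fullmatch(r"[0-9a-f]{16}", digits):
        raise ValueError(f"not a 64-bit WWPN: {value!r}")
    return ":".join(digits[i:i + 2] for i in range(0, 16, 2))


# Placeholder value for illustration; substitute the WWPNs of your own vHBAs.
example = normalize_wwpn("C003FF0000FFFF00")
```

Normalizing both sides first makes it easier to spot a WWPN that was configured on the vHBA but never zoned.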

3 Configuration Options

With virtual Fibre Channel, there are a few common scenarios that should be reviewed and understood prior to implementation. Each scenario has pros and cons, and technical and business requirements will drive the design decision.

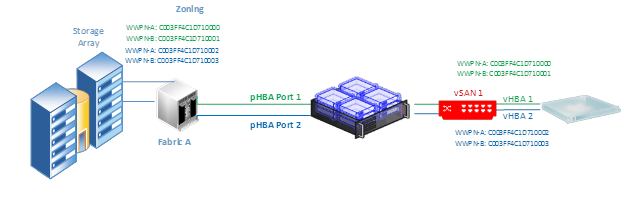

3.1 Single Fabric

With the single fabric design, there are one or more switches interconnected to provide the storage fabric. This is the simplest design and makes it easier for storage admins when connecting hosts and zoning storage.

In the single fabric design, administrators can connect multiple physical HBAs from the host server to the fabric to provide redundancy and load balancing. When configuring the vSAN, the administrator can assign one or more of the physical HBA ports to it. In turn, one or more vHBAs can be assigned to a guest virtual machine.

In the diagram above, two physical ports have been attached to the storage fabric, and both ports have been assigned to vSAN 1. Two vHBAs are then added to the guest, which requires MPIO to be installed within the guest for load balancing and fault tolerance.

Under normal operations, and in the best-case scenario, each vHBA creates its N_Port on a separate physical HBA (pHBA). This provides redundancy in the event of a bad physical connection, as well as true load balancing for higher bandwidth. However, the creation of the N_Ports on separate pHBAs cannot be guaranteed, so those situations need to be considered. A round robin algorithm is used for N_Port creation, so in theory the virtual ports should be balanced across the physical ports.
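The placement behavior described above can be sketched as a small simulation. This is a simplified Python model, not Hyper-V's actual implementation: the `VSan` class, the `start_vm` helper, and the port names are all hypothetical. The only behavior taken from this guide is that virtual ports are created round robin across the physical ports in a vSAN.

```python
class VSan:
    """Simplified model of a vSAN: a named group of physical HBA ports."""

    def __init__(self, name, ports):
        self.name = name
        self.ports = list(ports)  # physical port names, in rotation order
        self.down = set()         # ports that are offline or at their NPIV limit
        self._next = 0            # index of the next port to try

    def create_n_port(self, vhba):
        """Place one virtual port, trying each physical port at most once."""
        for _ in range(len(self.ports)):
            port = self.ports[self._next]
            self._next = (self._next + 1) % len(self.ports)
            if port not in self.down:
                return port
        raise RuntimeError(f"no physical port available for {vhba}")


def start_vm(vsan, vhbas):
    """Return {vhba: physical port} for every vHBA of a starting VM."""
    return {vhba: vsan.create_n_port(vhba) for vhba in vhbas}


vsan1 = VSan("vSAN 1", ["pHBA-1", "pHBA-2"])
healthy = start_vm(vsan1, ["vHBA-A", "vHBA-B"])

# Simulate a failed physical port, then start the same guest again.
vsan1.down.add("pHBA-2")
degraded = start_vm(vsan1, ["vHBA-A", "vHBA-B"])
```

With both ports healthy, `healthy` maps the two vHBAs to different physical ports. With `pHBA-2` marked down, `degraded` places both vHBAs on `pHBA-1`: the guest still starts in this model, but it no longer has physical path redundancy.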

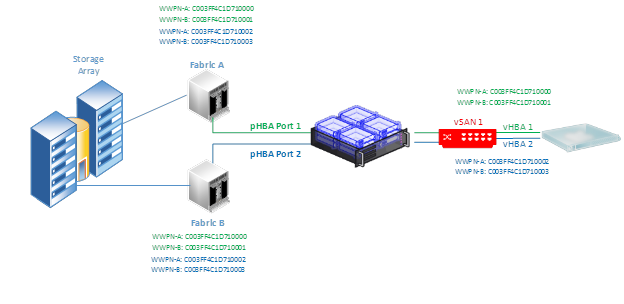

3.2 Multi-Fabric Single vSAN

In the multi-fabric scenario, multiple Fibre Channel switches that are not interconnected provide access to the storage array(s), just like the scenario described in 4.2. Take into consideration the number of available physical HBA ports, as well as the technical and business requirements around availability and redundancy.

Diagram 3 Multi-Fabric Single vSAN

Diagram 3 shows a multi-fabric configuration with a single vSAN. In our scenario, each fabric is made up of a single switch, and both fabrics connect to the same storage array(s). The physical HBA connectivity is the same as in the multi-fabric multi-vSAN scenario (Section 4.2). The key difference is that there is a single vSAN with both physical HBA ports assigned to it. The guest VM is provided multiple vHBAs for redundancy. This scenario provides some protection against the key issue raised in the previous discussion. If a physical HBA port is down, or has reached its virtual port limit, the VM has a significantly better chance of starting, because the round robin algorithm will attempt to create a virtual port for both vHBAs on whichever physical ports are available.

Storage admins have a little more work to do in this scenario, because the WWPNs for all vHBAs need to be zoned on all fabrics (as shown in the diagram).
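The zoning requirement can be worked out mechanically from which fabrics each vSAN reaches. The following Python sketch is illustrative only: `zoning_plan`, the fabric names, and the WWPN labels are hypothetical placeholders, and the two WWPNs per vHBA stand for the A and B addresses mentioned in the design guidance.

```python
def zoning_plan(vsan_fabrics, vhbas):
    """Return {fabric: sorted list of WWPNs that must be zoned on it}.

    vsan_fabrics: {vSAN name: list of fabrics its physical ports reach}
    vhbas: list of {"vsan": vSAN name, "wwpns": [WWPN A, WWPN B]}
    """
    plan = {}
    for vhba in vhbas:
        # A vHBA's N_Port can appear on any fabric its vSAN is connected to.
        for fabric in vsan_fabrics[vhba["vsan"]]:
            plan.setdefault(fabric, set()).update(vhba["wwpns"])
    return {fabric: sorted(wwpns) for fabric, wwpns in plan.items()}


# Single vSAN spanning both fabrics, as in this scenario.
single_vsan = zoning_plan(
    {"vSAN-1": ["Fabric-A", "Fabric-B"]},
    [
        {"vsan": "vSAN-1", "wwpns": ["vHBA1-A", "vHBA1-B"]},
        {"vsan": "vSAN-1", "wwpns": ["vHBA2-A", "vHBA2-B"]},
    ],
)

# For comparison: one vSAN per fabric, one vHBA per vSAN.
per_fabric = zoning_plan(
    {"vSAN-A": ["Fabric-A"], "vSAN-B": ["Fabric-B"]},
    [
        {"vsan": "vSAN-A", "wwpns": ["vHBA1-A", "vHBA1-B"]},
        {"vsan": "vSAN-B", "wwpns": ["vHBA2-A", "vHBA2-B"]},
    ],
)
```

In `single_vsan`, every fabric lists all four WWPNs, which is the extra zoning work this scenario requires. In `per_fabric`, each fabric lists only the WWPNs of the vHBA attached to its vSAN.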

This design mitigates the key issue raised in Section 4.2 (the VM cannot start because of a failed physical port), provides redundancy for physical path loss, and under normal operating conditions provides true load balancing. However, the issue raised in Section 3.1 (N_Port creation on multiple pHBAs is not guaranteed) still applies here. For VMs that require very high levels of bandwidth or load balancing, this is an important consideration.

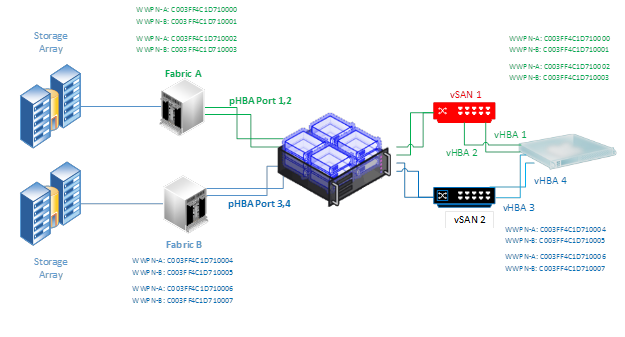

3.3 Multi-Fabric Multi-vSAN Multi-Port

The concerns presented by virtual Fibre Channel, while minimal, can cause problems if they are not fully understood. The best way to mitigate these risks is costly, but in some situations it may be required. That is where the Multi-Fabric Multi-vSAN Multi-Port scenario comes in.

Diagram 4 Multi-Fabric Multi-vSAN Multi-Port

This scenario provides the highest level of redundancy, availability, and load balancing for virtual Fibre Channel in a guest. It is also the most expensive and most complex option. By providing multiple vSANs, each backed by multiple physical ports and connected to separate fabrics, we can be much more certain that each vHBA will create its N_Port on a separate physical port, even if a physical port or a fabric switch is down. Increasing the likelihood that each N_Port is created on a separate physical port increases redundancy, availability, and load balancing.
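One way to reason about this layout is to enumerate failures and check where each vHBA would land. The Python sketch below makes several assumptions that are not stated in this guide: the layout has two vSANs with two physical ports each, each vHBA is attached to a different vSAN, and a vHBA simply uses the first surviving port of its vSAN. All names are hypothetical, and only single-port failures are modeled.

```python
from itertools import chain

# Hypothetical layout: two vSANs, each backed by two physical ports.
VSANS = {
    "vSAN-A": ["pHBA-1", "pHBA-2"],
    "vSAN-B": ["pHBA-3", "pHBA-4"],
}
# Each vHBA of the guest is attached to a different vSAN.
VHBAS = {"vHBA-1": "vSAN-A", "vHBA-2": "vSAN-B"}


def start_vm(failed_ports):
    """Map each vHBA to the first surviving port of its vSAN.

    Returns None when any vHBA has no usable port, because the VM
    cannot start in that case.
    """
    placement = {}
    for vhba, vsan in VHBAS.items():
        usable = [p for p in VSANS[vsan] if p not in failed_ports]
        if not usable:
            return None
        placement[vhba] = usable[0]
    return placement


# Try every single-port failure and record the resulting placement.
all_ports = list(chain.from_iterable(VSANS.values()))
results = {port: start_vm({port}) for port in all_ports}
still_separate = all(
    placement is not None and len(set(placement.values())) == len(VHBAS)
    for placement in results.values()
)
```

Under these assumptions, `still_separate` is true: for every single-port failure the guest starts and its two vHBAs sit on different physical ports, which is the property this scenario pays for.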

3.4 Multi-Fabric Multi-vSAN Multi-Array

In some cases, guests may require storage provisioned from multiple arrays that are connected through separate fabrics. This can complicate the design for virtual Fibre Channel, but the decision points are the same, just duplicated. When designing for connectivity in this scenario, keep in mind the number of physical ports, as well as the redundancy and throughput requirements of the guest.

Diagram 5 Multi Fabric Multi vSAN Multi Array

In this scenario we have two storage arrays, each connected through its own fabric, which can be made up of one or more switches. The Hyper-V host provides four (4) physical Fibre Channel ports, with two connected to each fabric. In Hyper-V, two vSANs are created, one for each fabric, and the appropriate physical HBA ports are bound. The guest is assigned four vHBAs, two assigned to each vSAN.

Note:

Guest virtual machines are limited to a maximum of four (4) vHBAs.

4 Design Guidance

- NPIV must be enabled on the physical HBAs and the Fibre Channel switch port(s), and the SAN must be NPIV capable.

- As a rule of thumb, map vSANs to the physical fabrics: one fabric equals one vSAN, two fabrics equal two vSANs.

This is not always the case, and it should be considered on a case-by-case basis, with the redundancy caveats explained in the examples above.

- Zone the WWPNs (A and B) for each vHBA on the appropriate fabric(s). You only need to zone the WWPNs that will appear on a given fabric.

- Guest machines are limited to a maximum of four (4) vHBAs.

- Virtual port creation is performed in a round robin fashion, and if a virtual port cannot be created for a vHBA for any reason, the virtual machine will not be able to start.

Make sure there are sufficient physical ports available, taking into consideration hardware failure and NPIV port limits, to avoid a failed VM start.

- Multiple vHBAs in a guest are necessary for redundancy and load balancing, when the physical topology supports it.

Adding multiple vHBAs to a guest that is backed by a single physical port doesn’t provide any benefit. However, adding multiple vHBAs backed by multiple physical ports is recommended for redundancy and improved I/O throughput.

- When more than one vHBA is assigned to a guest, MPIO is necessary to handle load balancing and/or fault tolerance of the Fibre Channel connections. This can be provided by native Microsoft MPIO and its DSM, or by adding a vendor-specific DSM (for example, PowerPath for EMC).

- For high bandwidth, make sure each vHBA in a guest is attached to a separate vSAN. This guarantees that the load is balanced across different physical ports.

- For higher redundancy, be sure to have multiple vSANs, with each vHBA attached to a separate vSAN. This guarantees that different physical ports are used when creating the N_Ports.

- For highest availability, make sure each vSAN is backed by multiple physical ports. This provides more physical ports on which each vHBA can create its N_Port.
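The guidance above can be turned into a simple pre-deployment checklist. The following Python sketch is one possible way to encode it. The function `review_design`, its parameters, and the warning texts are hypothetical, and the checks are a simplified reading of the bullets in this section rather than rules enforced by Hyper-V (apart from the four-vHBA limit stated in this guide).

```python
MAX_VHBAS_PER_GUEST = 4  # limit stated in this guide


def review_design(vsans, guest_vhbas, mpio_installed):
    """Return a list of warnings for one guest.

    vsans: {vSAN name: list of physical port names backing it}
    guest_vhbas: list of vSAN names, one entry per vHBA in the guest
    mpio_installed: True if MPIO is configured inside the guest
    """
    warnings = []
    used_vsans = sorted(set(guest_vhbas))
    if len(guest_vhbas) > MAX_VHBAS_PER_GUEST:
        warnings.append("guest exceeds the four-vHBA limit")
    if len(guest_vhbas) > 1 and not mpio_installed:
        warnings.append("multiple vHBAs require MPIO in the guest")
    if len(used_vsans) < len(guest_vhbas):
        warnings.append("some vHBAs share a vSAN; separate physical ports are not guaranteed")
    for name in used_vsans:
        if len(vsans.get(name, [])) < 2:
            warnings.append(f"{name} is backed by fewer than two physical ports")
    backing_ports = {port for name in used_vsans for port in vsans.get(name, [])}
    if len(guest_vhbas) > 1 and len(backing_ports) < 2:
        warnings.append("multiple vHBAs on a single physical port add no benefit")
    return warnings


# Two vSANs with two ports each, one vHBA per vSAN, MPIO installed.
strong = review_design(
    {"vSAN-A": ["pHBA-1", "pHBA-2"], "vSAN-B": ["pHBA-3", "pHBA-4"]},
    ["vSAN-A", "vSAN-B"],
    mpio_installed=True,
)

# Two vHBAs on one vSAN that has a single physical port, no MPIO.
weak = review_design({"vSAN-1": ["pHBA-1"]}, ["vSAN-1", "vSAN-1"], mpio_installed=False)
```

Here `strong` comes back empty, while `weak` collects one warning for each guideline it violates. Treat the output as a prompt for review, since some designs in this guide (such as two vHBAs per vSAN in the multi-array scenario) deliberately accept one of these trade-offs.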

[1] https://technet.microsoft.com/en-us/library/hh831413.aspx

Jason Dinwiddie

Jason is an eight-year veteran at Microsoft as a Senior Consultant for State and Local Government. With 16 years of overall IT experience, Jason is focused on virtualization, management, and private cloud, specializing in Hyper-V.