SCOM Manager of Managers

Caution: Test the script(s), processes and/or data file(s) thoroughly in a test environment, and customize them to meet the requirements of your organization before attempting to use them in a production capacity. (See the legal notice here.)

Note: The workflow sample mentioned in this article can be downloaded from the Opalis project on CodePlex: https://opalis.codeplex.com

Overview

Multiple event monitoring solutions commonly coexist in a typical IT environment. Each of these tools has its own agents, policies and captured events. However, it is increasingly rare for operations teams to work in a distributed fashion across those tools. Hence event data is often consolidated into a “single pane of glass” to provide a unified view of the health and status of the IT infrastructure.

Because Opalis provides Integration Packs for SC Operations Manager as well as for many common monitoring solutions, it serves well as an “event broker” in a Manager of Managers approach to this challenge. In such a solution, events from various tools are normalized into a single event structure in SC Operations Manager. This involves the following aspects of event integration:

Each solution has its own record structure. For example, one solution might have event fields such as “Priority”, “Severity” and “Message Text”, while another might have only “Severity” and “Description”, with no “Priority” field. Moreover, the expected values of these fields may differ between solutions: one might use “Critical”, “Major” and “Minor” for severity levels, while another uses “Fatal”, “Severe”, “Normal” and “Information”. Normalizing an event therefore involves mapping fields and values from the source system (HP, BMC, CA, etc.) into the target system (SCOM).
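To make the field-mapping half of normalization concrete, here is a minimal Python sketch (not the Opalis implementation) that copies differently named source fields into one common structure. The `NormalizedEvent` class, the `FIELD_MAPS` table and the source field names are hypothetical, chosen to mirror the example above; mapping the *values* (for instance “Major” to a SCOM severity) is treated as a separate step.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class NormalizedEvent:
    """Hypothetical common event structure used as the normalization target."""
    source: str
    severity: Optional[str]
    description: Optional[str]
    priority: Optional[str] = None  # not every source system has a Priority field


# Hypothetical per-source field maps: target field -> source field name.
FIELD_MAPS: Dict[str, Dict[str, str]] = {
    "HP": {"severity": "Severity", "description": "Message Text", "priority": "Priority"},
    "CA": {"severity": "Severity", "description": "Description"},
}


def normalize(source: str, record: Dict[str, str]) -> NormalizedEvent:
    """Copy the fields a source provides into the common structure.

    Fields the source does not map are left as None; value mapping
    (e.g. "Major" -> a SCOM severity) is a separate step.
    """
    field_map = FIELD_MAPS[source]
    values = {target: record.get(name) for target, name in field_map.items()}
    return NormalizedEvent(
        source=source,
        severity=values.get("severity"),
        description=values.get("description"),
        priority=values.get("priority"),
    )
```

For example, `normalize("CA", {"Severity": "Fatal", "Description": "Service stopped"})` yields an event whose `priority` is `None`, because the CA record in this sketch has no Priority field.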

Generally, not all events from the source systems are forwarded to the top-level manager (SCOM). Filtering each source therefore acts as a “floodgate” that controls which events bubble up into the operators’ view. Opalis provides a rich feature set for workflow-driven logic that can de-duplicate, filter, augment and otherwise manipulate events before they are forwarded to SCOM. The sample provides a few typical examples of how these techniques might be applied.
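The “floodgate” idea can be pictured as a chain of stages, each of which may drop, pass or enrich an event. The Python sketch below is only an analogy for how such workflow logic composes; it is not how Opalis executes workflows. The stage names, the `host`/`text`/`severity` keys and the `site` enrichment are hypothetical.

```python
from typing import Callable, Dict, Iterable, List, Optional

Event = Dict[str, str]
# A stage returns the (possibly modified) event, or None to drop it.
Stage = Callable[[Event], Optional[Event]]


def run_floodgate(events: Iterable[Event], stages: List[Stage]) -> List[Event]:
    """Pass each event through the stages in order; keep those no stage dropped."""
    forwarded: List[Event] = []
    for event in events:
        current: Optional[Event] = event
        for stage in stages:
            current = stage(current)
            if current is None:
                break  # dropped: skip the remaining stages
        if current is not None:
            forwarded.append(current)
    return forwarded


def drop_informational(event: Event) -> Optional[Event]:
    """Filter: informational events never reach the top-level manager."""
    return None if event.get("severity") == "Information" else event


def make_deduplicator() -> Stage:
    """De-duplicate on (host, text); the seen-set lives as long as the stage."""
    seen = set()

    def dedupe(event: Event) -> Optional[Event]:
        key = (event.get("host"), event.get("text"))
        if key in seen:
            return None
        seen.add(key)
        return event

    return dedupe


def tag_site(event: Event) -> Optional[Event]:
    """Augment: add a hypothetical site field without mutating the input."""
    return {**event, "site": "HQ"}
```

Calling `run_floodgate(events, [drop_informational, make_deduplicator(), tag_site])` filters first, so the de-duplication stage only ever sees events that could actually be forwarded.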

Workflow Walk-Through

“1. Master SCOM Event Handler”

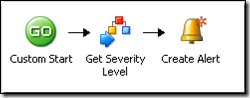

This is the main workflow for integrating with SCOM. It is very simple because it is meant to be called from the source-specific workflows described below, where most of the event integration and filtering actually occurs. Note that it calls a child workflow to map severity levels.

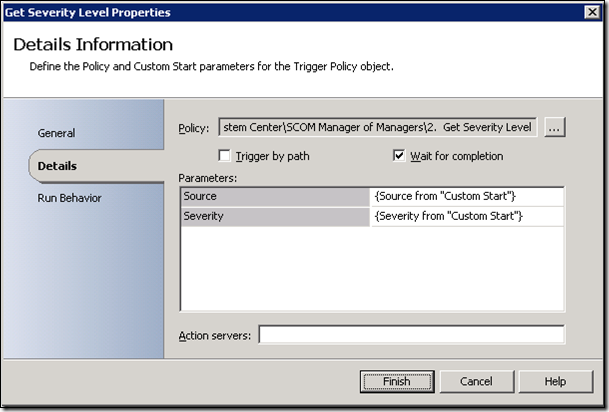

The “Get Severity Level” child workflow (described later in this sample) accepts as input the name of the source solution (CA, HP, BMC, etc.) and a severity level (Critical, Major, Minor, etc.), and returns the corresponding SCOM severity level. In effect, this activity normalizes the status fields of a variety of tools into SCOM severity levels.

Finally, the workflow accepts the remote event ID (a unique string associated with the event on the source system) and maps it into a custom field in SCOM. This allows an operator to cross-reference the event in both systems. It also allows Opalis to determine whether a given event from a source system has already been forwarded to SCOM.
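A minimal Python sketch of the remote event ID handling, assuming an in-memory list stands in for SCOM and that `CustomField1` is the custom field chosen to hold the remote ID (an assumption; the sample does not say which one). The function names are hypothetical, and the severity passed in is assumed to have already been mapped to a SCOM level.

```python
from typing import Dict, List

Alert = Dict[str, str]


def already_forwarded(source: str, remote_id: str, alerts: List[Alert]) -> bool:
    """True if an alert from this source already carries the remote event ID."""
    return any(
        a.get("Source") == source and a.get("CustomField1") == remote_id
        for a in alerts
    )


def send_alert_to_scom(source: str, remote_id: str, host: str, text: str,
                       scom_severity: str, alerts: List[Alert]) -> bool:
    """Create an alert unless it was forwarded before; return True if created.

    `alerts` is an in-memory stand-in for SCOM; CustomField1 is an assumed
    choice of custom field for the remote event ID.
    """
    if already_forwarded(source, remote_id, alerts):
        return False
    alerts.append({
        "Source": source,
        "Host": host,
        "Description": text,
        "Severity": scom_severity,  # assumed already mapped to a SCOM level
        "CustomField1": remote_id,  # cross-reference to the source system
    })
    return True
```

Checking the source name together with the ID avoids treating two unrelated events as duplicates when different tools happen to issue the same ID string.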

“2. Get Severity Level”

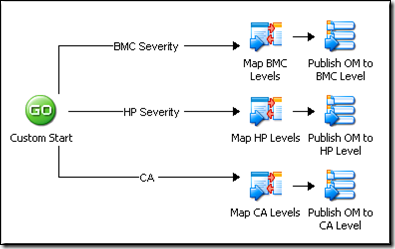

This child workflow maps severity levels from three different monitoring solutions into SCOM severity levels. It is a conceptual example and will have to be edited for actual use. The workflow accepts as input the name of a monitoring solution (CA, BMC, HP, etc.) and routes the request to a solution-specific “Map Published Data” activity, which contains the SCOM severity level to associate with each severity level in the source event.
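The routing-then-mapping logic of this child workflow can be sketched in Python as one lookup table per source, each playing the role of one “Map Published Data” activity. The table contents below are hypothetical (they reuse severity names mentioned earlier in this article), as is the choice to fall back to “Information” for unmapped values.

```python
from typing import Dict

# Hypothetical mapping tables, one per source solution (the equivalent of
# one "Map Published Data" activity each). Edit these for real use.
SEVERITY_MAPS: Dict[str, Dict[str, str]] = {
    "CA": {"Fatal": "Critical", "Error": "Critical", "Warning": "Warning"},
    "HP": {"Critical": "Critical", "Major": "Critical", "Minor": "Warning"},
    "BMC": {"Fatal": "Critical", "Severe": "Warning", "Normal": "Information",
            "Information": "Information"},
}
DEFAULT_SCOM_SEVERITY = "Information"  # assumed fallback for unmapped values


def get_severity_level(source: str, severity: str) -> str:
    """Route by source name, then map the source severity to a SCOM severity."""
    table = SEVERITY_MAPS.get(source)
    if table is None:
        raise ValueError(f"no severity map for source {source!r}")
    return table.get(severity, DEFAULT_SCOM_SEVERITY)
```

An unknown source raises an error rather than silently defaulting, so a misconfigured caller is noticed; an unknown severity value from a known source falls back to the default.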

Again, the example is illustrative. A similar approach could be taken for other fields (Class, Object, etc.). Using this approach, one can normalize data between two systems according to a set of rules. Additional child workflows could be built for other fields, although each one requires additional workflow executions in Opalis.

“3. Accept CA Alerts”

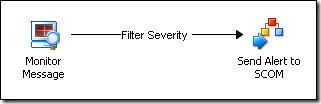

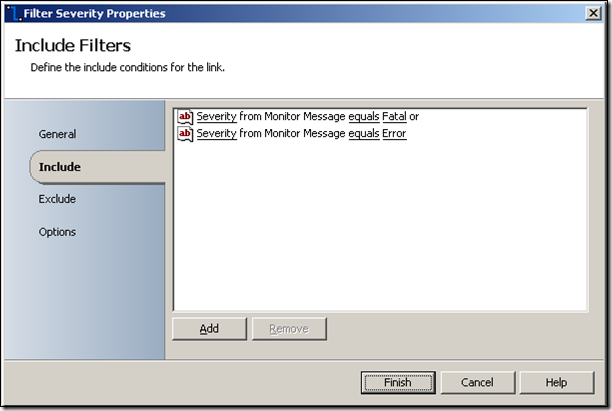

This workflow feeds events from CA into SCOM, but not all events are processed: a link condition selects only events with a Severity of “Fatal” or “Error”.

Opening the link condition (labeled “Filter Severity”) shows that only the “Fatal” and “Error” Severity values trigger the “Send Alert to SCOM” activity. In effect, this filters out all events that don’t meet our acceptance criteria for being forwarded to SCOM.
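Expressed as Python, the “Filter Severity” link condition is just a membership test on the Severity field. This is a hedged sketch: the function names are hypothetical, events are plain dictionaries, and the comparison is exact and case-sensitive, which may not match how an Opalis link condition compares strings.

```python
from typing import Dict, Iterable, List

# Severity values the "Filter Severity" link condition lets through.
ACCEPTED_CA_SEVERITIES = frozenset({"Fatal", "Error"})


def passes_filter_severity(event: Dict[str, str]) -> bool:
    """Equivalent of the link condition: Severity equals Fatal or Error.

    Exact, case-sensitive match (an assumption of this sketch).
    """
    return event.get("Severity") in ACCEPTED_CA_SEVERITIES


def events_to_forward(events: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Only these events would trigger "Send Alert to SCOM"."""
    return [e for e in events if passes_filter_severity(e)]
```

Events with no Severity field at all are dropped, since a missing value is not in the accepted set.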

Note: Filtering is best done by configuring the Monitor carefully rather than by relying on workflow logic. Both techniques are available to the workflow author; however, precise input filtering on a Monitor reduces both the number of events returned by a Monitor query and the time the overall forwarding workflow takes to complete.

“4. Accept HP Alerts”

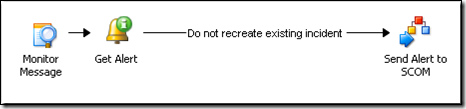

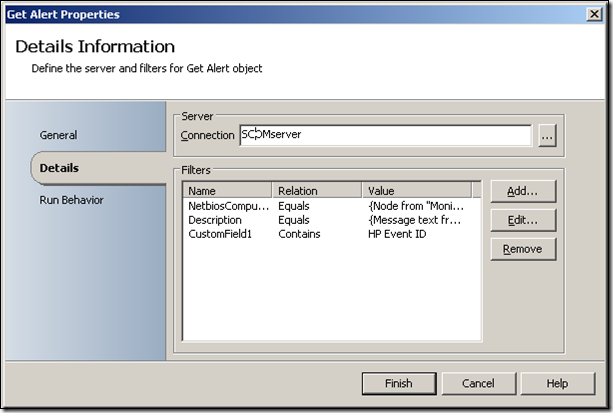

This workflow illustrates another option for managing source event streams. Again, this sample is meant to be illustrative. One can perform a “lookup” in an external system to decide whether or not an event should be forwarded. In this sample, a lookup in Operations Manager determines whether an identical event is already open in SCOM; events that have already been forwarded are dropped. A variation on this workflow could increment a counter (stored in a custom field) to record how many times the HP solution reported the event.

The filter applied to the query is up to the workflow author. In this example, an event in SCOM is considered to match one in HP if the system name and the message text match. In addition, the HP Event ID must be present in the Custom Field (indicating that this event was already forwarded).
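The lookup-and-drop logic, including the counter variation, might be sketched in Python as follows. Assumptions, all hypothetical: open SCOM alerts are an in-memory list of dictionaries, “HP Event ID present in the Custom Field” is read as “`CustomField1` holds an ID on an alert whose Source is HP”, and the repeat counter lives in `CustomField2`. Creating the alert directly in the list stands in for calling the master handler.

```python
from typing import Dict, List, Optional

Alert = Dict[str, str]


def find_open_match(hp_event: Dict[str, str], open_alerts: List[Alert]) -> Optional[Alert]:
    """Return an open HP alert matching on system name and message text whose
    CustomField1 holds an HP event ID (i.e. it was forwarded), else None."""
    for alert in open_alerts:
        if (alert.get("Source") == "HP"
                and alert.get("Host") == hp_event["Host"]
                and alert.get("Description") == hp_event["Message"]
                and alert.get("CustomField1")):
            return alert
    return None


def handle_hp_event(hp_event: Dict[str, str], open_alerts: List[Alert],
                    count_repeats: bool = False) -> bool:
    """Forward the event unless an identical alert is open; True if forwarded.

    With count_repeats, a duplicate bumps a counter kept in CustomField2
    (an assumed field choice) instead of being dropped silently.
    """
    match = find_open_match(hp_event, open_alerts)
    if match is None:
        # Stand-in for calling the master handler to create the SCOM alert.
        open_alerts.append({
            "Source": "HP",
            "Host": hp_event["Host"],
            "Description": hp_event["Message"],
            "CustomField1": hp_event["EventId"],
            "CustomField2": "1",
        })
        return True
    if count_repeats:
        match["CustomField2"] = str(int(match.get("CustomField2", "1")) + 1)
    return False
```

Matching on host and message text rather than on the HP Event ID alone means a repeat report carrying a new ID is still recognized as the same open problem.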

“5. Accept BMC Alerts”

The BMC example is trivial: it forwards all events to SCOM, with no filtering, enrichment or external lookup. This is the most basic configuration.
