Use The All Management Servers Resource Pool To Run Scheduled Tasks

A major improvement from SCOM 2007 to SCOM 2012 was the federation of the Root Management Server (RMS) role across the management servers. To preserve backwards compatibility, the Microsoft.SystemCenter.RootManagementServer class still exists. By default, the first management server in a management group holds the RMS Emulator (RMSE) role. The role can be moved, but it is static: it stays on that server until you explicitly move it to another management server.

It is not uncommon for SCOM administrators to need to run scheduled tasks. A few scripts that I have scheduled include:

- Assign failover management servers to agents

- Enable Agent Proxy

- Resolve rule-based alerts that haven't incremented their repeat count in a few days

- Set the agent action accounts

I have multiple management servers in the management group. I wanted these scripts to run routinely, but I was unsure of the risk if multiple management servers ran them at the same time. So how do I ensure that only one management server runs them? The easy answer was the RMS Emulator (RMSE). Only one machine can hold that role, so I targeted all of my maintenance rules at the RMSE. But this created two problems. First, the SDK service on the RMSE ends up using 5x more RAM than the SDK service on the other management servers, which bogs down its performance. Second, I have no fault tolerance. If the RMSE is down for maintenance or patching, no other management server takes over the workflows unless I explicitly move the role.

So how can we distribute the load and make it fault tolerant? I ended up stealing the answer from the data warehouse. The Microsoft.SystemCenter.DataWarehouse.Internal MP has a class called Microsoft.SystemCenter.DataWarehouseSynchronizationService. This class is non-hosted, meaning it doesn't explicitly belong to any health service. As such, it is managed by the All Management Servers Resource Pool. (If you want to confirm this, look up the BaseManagedEntityId of the only instance of the class in MTV_Microsoft$SystemCenter$DataWarehouseSynchronizationService in the Ops DB. Then find the row in the Relationship table where that BMEID is the target of a relationship. The SourceEntityId should be the BMEID of the resource pool.)
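The two-step lookup described in the parenthetical above can be expressed as a single join. The following is only a sketch: it runs against an in-memory SQLite stand-in, not a real Ops DB, and the BMEID values are made-up placeholders. The view name and the SourceEntityId and BaseManagedEntityId columns come from the text above; the TargetEntityId column name and the helper `managing_entities` are my assumptions for illustration.

```python
import sqlite3

# View name taken from the article; everything else here is a stand-in.
CLASS_VIEW = "MTV_Microsoft$SystemCenter$DataWarehouseSynchronizationService"


def managing_entities(conn, class_view=CLASS_VIEW):
    """Return the SourceEntityId of every relationship whose target is an
    instance listed in the given class view (hypothetical helper)."""
    query = f"""
        SELECT r.SourceEntityId
        FROM [{class_view}] AS dw
        JOIN Relationship AS r
          ON r.TargetEntityId = dw.BaseManagedEntityId  -- column name assumed
    """
    return [row[0] for row in conn.execute(query)]


# Build a tiny stand-in database with placeholder IDs (not real BMEIDs).
conn = sqlite3.connect(":memory:")
conn.execute(f"CREATE TABLE [{CLASS_VIEW}] (BaseManagedEntityId TEXT PRIMARY KEY)")
conn.execute("CREATE TABLE Relationship (SourceEntityId TEXT, TargetEntityId TEXT)")
conn.execute(f"INSERT INTO [{CLASS_VIEW}] VALUES ('dw-sync-bmeid')")
conn.execute("INSERT INTO Relationship VALUES ('pool-bmeid', 'dw-sync-bmeid')")
conn.execute("INSERT INTO Relationship VALUES ('other-source', 'other-target')")

sources = managing_entities(conn)
print(sources)
```

In a real environment you would run the equivalent SELECT in your SQL tool of choice and then compare the returned SourceEntityId with the BMEID of the All Management Servers Resource Pool.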

By using non-hosted entities to run workflows, we gain automatic fault tolerance, and if we have multiple instances, we gain some load distribution, as the non-hosted entities are roughly evenly distributed among the management servers. Please note that we cannot guarantee exactly which management server will pick up any particular instance. We also cannot guarantee that the instances will be perfectly evenly distributed.
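To make the idea of "roughly even distribution with automatic failover" concrete, here is a toy model. It is not how SCOM resource pools assign instances internally (I don't know the actual algorithm). It uses rendezvous hashing as a stand-in technique, and the server and target names are hypothetical.

```python
import hashlib


def owner(instance, servers):
    """Pick the available server with the highest hash score for this
    instance (rendezvous hashing, used here only as a toy model)."""
    if not servers:
        raise ValueError("no management servers available")

    def score(server):
        digest = hashlib.sha256(f"{server}|{instance}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return max(servers, key=score)


def assign(instances, servers):
    """Map every instance to exactly one available server."""
    return {inst: owner(inst, servers) for inst in instances}


# Hypothetical names: five management servers and five non-hosted targets.
servers = [f"MS{i}" for i in range(1, 6)]
targets = [f"ScheduledTaskTarget{i}" for i in range(1, 6)]

before = assign(targets, servers)

# Simulate losing whichever server owns the first target.
failed = before[targets[0]]
after = assign(targets, [s for s in servers if s != failed])

for target in targets:
    print(target, before[target], "->", after[target])
```

In this model each target always has exactly one owner, a failed server's targets move to a surviving server, and the other targets stay where they were. As in the real pool, nothing forces the spread to be perfectly even, so one server can end up with two targets while another has none.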

Here is my Management Pack Design:

NOTE: I actually authored this in the SCOM 2007 Authoring Console, so the MP will work in SCOM 2007. However, in SCOM 2007 the RMS will pick up all of the non-hosted instances, so you don't gain the fault tolerance and load distribution benefits.

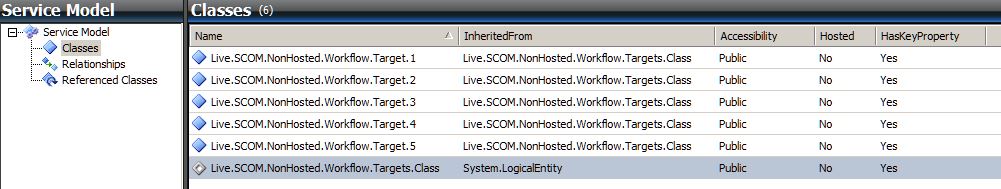

I created an abstract class that inherits from System.LogicalEntity and gave it a single key property of type integer. I then created multiple non-abstract classes that inherit from it. I made multiple, separate classes so that I could spread my scheduled script rules across them.

In the interest of keeping the MP short and simple, I have unfiltered registry discoveries running from the management servers. I realize that making a custom discovery with a Class Snapshot in it would also be perfectly adequate (and probably more elegant) but for example purposes, I wanted to keep it readable and not add custom write action modules. Also, by targeting the management servers, each instance should have multiple discovery references, again reducing the risk associated with the loss of any single management server.

If you really wanted to get clever, you could discover several different integer values for the key property of a single class, giving you multiple instances of that class if you had the need.

I am including a sealed and unsealed version of the management pack that contains the class definition and discoveries of five targets. I am also including a sample unsealed management pack with a rule for each target that writes a warning event to the Application event log every five minutes, to show which management servers are serving each target.

I tested these management packs in my environment where I have five management servers. In my test, one management server picked up two targets, three picked up one each, and one picked up no targets.