Experience from the Field: Resolving the OMPM issue “Type of column ‘WarningInfo’ in table ‘osWarning’ is too small to hold data”

My name is Anthony Cafarelli and I’m a Consultant with Microsoft Consulting Services who recently compiled a list of useful advice that I gained while performing OMPM scans at client sites. In this blog post I would like to share some of that knowledge and I hope that anyone reading it finds it useful!

The issue I am going to focus on in this blog post is an import error. This error is caused when the scanned files have very long file names, in excess of 250 characters. Thus it isn’t an extremely common error but these steps will help you address the import if you do find this situation. When importing data into the OMPM database you may receive the following error:

Type of column ‘WarningInfo’ in table ‘osWarning’ is too small to hold data

What this error indicates is that the file name and path in an XML file you are trying to import is too long for the “Warninginfo” field in osWarning table. Because of this length issue, the information is not imported into the database and the XML file is skipped. Typically this shows up with a Last Accessed or Last Modified date warning, so the files usually wouldn’t be a concern to not have in the database. However, if it was part of a .cab file containing multiple XML files (and most likely it is, and most likely that CAB contains 10,000 files unless you modified the offscan.ini file to change those settings). And, this is important to note, if any XML file contained in a CAB file cannot import, then none of those files make it into the database. At this point, you have a few options:

1) Ignore that the CAB file did not import and base your results on the other CAB/XML files that did import correctly.

2) Extract the CAB file and import each XML.

3) Modify the database.

If option 1 is acceptable, there is nothing more for me to say here. That is the easiest option, but you do lose a significant amount of data that may be useful.

Option 2 is an interesting one. Out of the 2 options I listed that address the issue, this is one that has a lot of work required for the technician, but significantly increases the import time into the database.

Just to give a little background in a simplified way: The reason why the entire CAB doesn’t import when a single XML file has an error is because of the way OMPM does the import. The CAB is extracted by the import process and every XML file is parsed all at once. This significantly (VERY significantly) speeds up the import of a CAB file but also reduces the ability to address errors.

When you extract the XML files you will be able to get the (on average) 9,999 other XML files imported into your database. I haven’t actually timed it and compared it, but I would say the import of the individual XML files is at least 10x slower, if not more. There is another way to increase the import speed, but it involves more work from the technician (and this is my preferred method because I hate modifying the database due to the supportability concerns, and I will get into that a little more below). Here is the modified option 2:

1) Extract the CAB file.

2) Use the findstr command to locate the extracted XML file that is the one with the error.

3) Delete that XML file.

4) Re-package the CAB file with the remaining files.

With this method, you maintain the high import speed and address the file with the long name. Using findstr and deleting the XML file are pretty straight-forward, so I’m not going to get into them. But, finding a good way to re-package the CAB can be tricky. My best advice is to go to this page (yep, another TechNet page) and implement the PowerShell scripts listed:

https://technet.microsoft.com/en-us/magazine/2009.04.heyscriptingguy.aspx?pr=blog

Once you’ve re-compressed into another CAB file, you can import it and still maintain your high speed import! Pretty good trick, right?

Now let’s talk about Option 3. I have very mixed emotions about this one. It’s easy and it’s effective but it definitely pushes supportability. The simple explanation of this approach is this: The oswarning field in the database isn’t big enough to hold the data we are trying to put into it, so let’s just make the bucket larger. I found 2 methods for doing this. And apparently, based on what I have written so far, I love numbered lists, so here’s another one:

1) Use SQL Management Studio to modify the field size.

2) Modify the files that OMPM uses to create the database so that every database you create has the larger field size to start.

Using SQL Management Studio is fairly straight-forward, but depending on your version of SQL it can be slightly different. I’m not going to get in depth into that solution, so if you are unsure of it, either find your favorite SQL resource (friend, colleague, book, blog, etc.) and research it or use the second method.

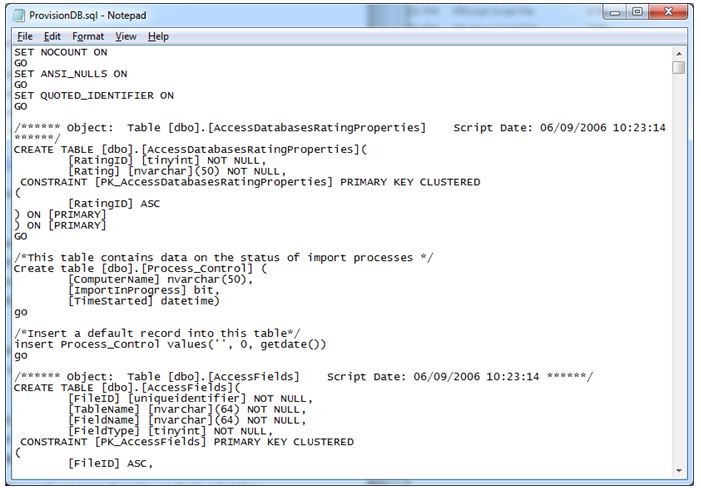

The second method requires you fire up a text editor. Notepad.exe is my favorite, so that’s what I’m going to use as an example. Start notepad and open ProvisionDB.sql in the OMPM/Database/Include folder.

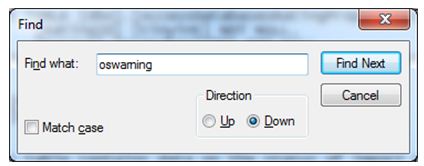

Once you have the file opened search for “oswarning” and click Find Next.

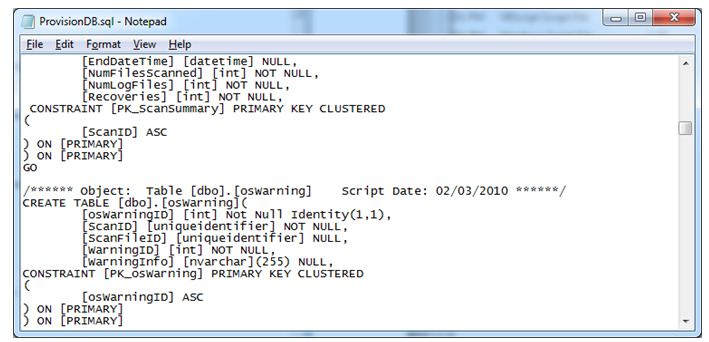

You will see the following:

Here you will see the WarningInfo field (at 255 characters). Simply change the number to something higher (let’s say 285) and save the file. Now every time you run the createdb command, the new database will have a larger field. NOTE: This will not modify your existing databases, so make sure you create a new database and run your imports there. You’ll want to pull any files out of the OMPMimported folder that you imported into the old database so you can re-import then into the new one.

The Office Compatibility team is aware of this limitation and is reviewing this challenge for future updates.

Hope this was helpful for anyone reading it! I plan on writing a few more blog posts about other issues and “creative” resolutions I found in the field.

Anthony