Troubleshooting Windows Performance Issues: Lots of RAM but no Available Memory

Summary: Craig Forster, a Senior Microsoft Premier Field Engineer, runs us through how he dealt with a performance issue on a file server that had plenty of RAM but, for some reason, no available memory.

Hi, this is Craig Forster here. I’m hoping to shed a little light on an issue which we’re seeing more often on our customers’ file servers. The first sign of trouble was that user-mode activity (like interactive logons and launching processes) was extremely slow, while kernel-mode activity (like serving files over the network) was unaffected.

1. Check the Cache

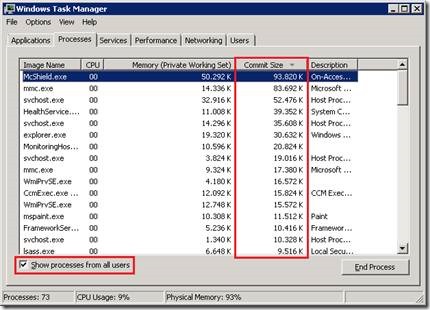

Once our (very slow) login was done, we went to our first and simplest tool to get a hint of where the problem could be: Task Manager! The physical memory (4GB) was nearly all used, with only 234 MB available. Normally on a large file server we’d expect the main user of physical memory to be Cache. It’s the Cache’s job to keep a copy of recently-accessed files in physical memory (so they don’t have to be re-read from disk).

But here, Task Manager showed that the Cache was using only 122MB.

2. Check which processes are using physical memory

So the next logical thing to check is: “which running process is using the physical memory?” The physical memory a process is using is called its working set, and typically the largest part of a process’s working set is memory that is private to that process.

The opposite of private memory is shareable memory: read-only data that is not specific to the running process and can be used by other processes as well. The most common example is executable code loaded from disk, such as .exe and .dll files. (As an aside, private memory used by a process that is not currently in RAM lives in the page file. The sum of a process’s private memory in RAM and in the page file is called its committed memory: each committed page is backed either by RAM or by the page file on disk.)
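To make these terms concrete, here is a toy model in Python. It is not how Windows actually tracks pages; the `Page` class, the `Location` values and the 4 KB page size are assumptions for illustration. It computes a process’s working set, private working set and committed memory from a list of pages. Shareable pages are modelled as backed by their .exe/.dll image on disk rather than by the page file, so only private pages count toward commit.

```python
from dataclasses import dataclass
from enum import Enum

PAGE_SIZE = 4096  # assumed page size in bytes (4 KB)


class Location(Enum):
    RAM = "ram"                # resident in physical memory
    PAGEFILE = "pagefile"      # private page written out to the page file
    IMAGE_FILE = "image_file"  # shareable page not resident; re-read from the .exe/.dll


@dataclass(frozen=True)
class Page:
    private: bool
    location: Location


def summarize(pages):
    """Return (working_set, private_working_set, committed) in bytes for a toy process."""
    resident = [p for p in pages if p.location is Location.RAM]
    working_set = len(resident) * PAGE_SIZE
    private_working_set = sum(1 for p in resident if p.private) * PAGE_SIZE
    # Committed memory: private pages, whether they currently sit in RAM or in the page file.
    committed = sum(1 for p in pages if p.private) * PAGE_SIZE
    return working_set, private_working_set, committed


# Hypothetical process: 3 private pages in RAM, 2 paged out, 4 shared DLL pages in RAM,
# and 5 shared DLL pages that are not resident.
pages = (
    [Page(True, Location.RAM)] * 3
    + [Page(True, Location.PAGEFILE)] * 2
    + [Page(False, Location.RAM)] * 4
    + [Page(False, Location.IMAGE_FILE)] * 5
)
print(summarize(pages))  # (28672, 12288, 20480)
```

Note how the shared DLL pages inflate the working set but not the commit figure, which is why private memory is the better measure of what a process itself is consuming.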

So, looking at all the running processes, we could see that no process was using large amounts of memory (physical or committed), and certainly nothing that would bring the server close to 4GB of RAM usage.
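The reasoning in this step is a subtraction: memory in use minus what the processes can account for. A minimal Python sketch, using the Task Manager figures above (4GB total, 234 MB available) together with *hypothetical* per-process private working sets (these are not measurements from the server). Summing private rather than full working sets avoids double-counting shared DLL pages.

```python
def unaccounted_mb(total_mb, available_mb, private_ws_mb):
    """Memory in use (MB) that no process's private working set explains."""
    in_use = total_mb - available_mb
    return in_use - sum(private_ws_mb)


# Hypothetical per-process private working sets in MB.
processes = {"process_a": 180, "process_b": 95, "process_c": 60, "others": 175}

gap = unaccounted_mb(4096, 234, processes.values())
print(f"{gap} MB in use but not attributable to any process")  # 3352 MB ...
```

A remainder this large points away from user-mode processes and towards kernel-mode consumers such as the system cache, which is where the investigation goes next.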

Task Manager had reached the limit of what it could do to help us find the consumer of this memory.

So the next tool to move to is Perfmon.

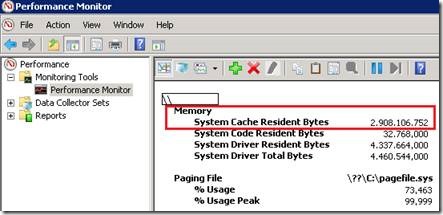

In Perfmon, the Memory\System Cache Resident Bytes counter showed that the system cache was using ~3GB.

Note that the System Driver Resident Bytes and System Driver Total Bytes values are displayed with a comma as the decimal separator, meaning they amount to only about 4MB.

This meant that some form of system “cache” was using almost all the memory. But Perfmon doesn’t let us see any further into kernel-mode memory.

To find the culprit, we need to advance to the next tool.

3. Zero in on Root Cause, starting with RAMMap

What we really need to do is look at the RAM use in its most raw, yet usable, format (using a debugger would be the next most advanced tool, but that’s much more complicated).

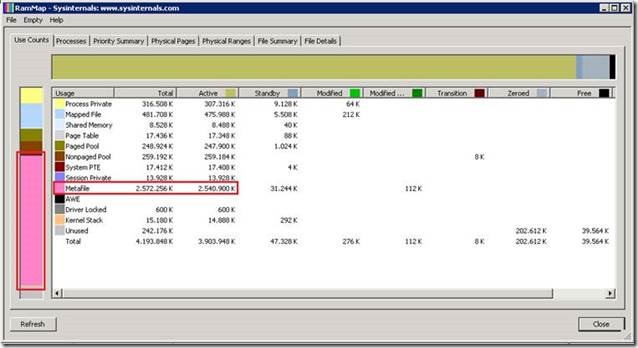

To do that we can run RAMMap from Sysinternals. In RAMMap we can see that Mapped File is not using much memory at all. Mapped files are files in the file system that have been mapped into memory while they are open.

The big user of RAM is something called Metafile. This is not typically a big user of memory on a server or workstation. So what exactly is Metafile?

“Metafile is part of the system cache and consists of NTFS metadata. NTFS metadata includes the MFT as well as the other various NTFS metadata files… In the MFT each file attribute record takes 1KB and each file has at least one attribute record. Add to this the other NTFS metadata files and you can see why the Metafile category can grow quite large on servers with lots of files”.
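The quote implies a simple lower bound: at least one 1KB record per file (and per folder). Here is a back-of-the-envelope Python sketch. The 3 million file count is a hypothetical figure, chosen only to show how quickly a busy file server’s MFT can approach the ~3GB seen in Perfmon. The sketch ignores extra attribute records and the other NTFS metadata files the quote mentions, so real sizes would be larger.

```python
MFT_RECORD_BYTES = 1024  # each MFT file record is 1KB, per the quote above


def min_mft_bytes(entries, records_per_entry=1):
    """Lower bound on MFT size: every file or folder needs at least one record."""
    return entries * records_per_entry * MFT_RECORD_BYTES


def to_gib(n_bytes):
    """Convert bytes to GiB (1024**3 bytes)."""
    return n_bytes / 1024 ** 3


size = min_mft_bytes(3_000_000)  # hypothetical: 3 million files and folders
print(f"{size:,} bytes = {to_gib(size):.2f} GiB")  # 3,072,000,000 bytes = 2.86 GiB
```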

NTFS metadata is our culprit! What counts as NTFS metadata? Attributes of the files and folders in a volume, like:

- names

- paths

- attributes (like read-only, hidden, archived flags)

- the Access Control List (ACL) for permissions and audit settings

- the offset addresses on the disk where the file is physically located

And this last bit is rather important: a file which is contiguous on the disk would have 2 entries here: the start offset address and the end offset address. A file which has 2 fragments would have 4 entries, and a file with 1000 fragments would have 2000 entries.
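Under this simplified model (a start and an end offset per fragment), the number of location entries grows linearly with fragmentation. A minimal Python sketch; `location_entries` and `volume_entries` are hypothetical helper names used only for illustration. (On disk, NTFS records each fragment as a “data run” made up of a length and a starting-cluster offset, which is likewise two values per fragment.)

```python
def location_entries(fragments):
    """Offset entries for one file: a start and an end offset per fragment."""
    if fragments < 1:
        raise ValueError("a file with data has at least one fragment")
    return 2 * fragments


def volume_entries(fragment_counts):
    """Total offset entries across all files on a volume."""
    return sum(location_entries(f) for f in fragment_counts)


for frags in (1, 2, 1000):
    print(frags, "fragment(s) ->", location_entries(frags), "entries")
```

Multiplied across every file on a large data volume, heavy fragmentation makes the metadata that has to be read (and cached) noticeably bigger.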

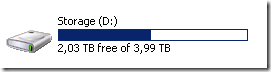

And the more files on the disk, the larger the Master File Table (MFT, the structure that holds the NTFS metadata) will be. This file server stores user and department data, so it holds a lot of files.

But why is the MFT being cached in memory at all? This is user data, so most of these files are likely very old, and not all of the data is being accessed all the time.


Here’s what we discovered with this file server:

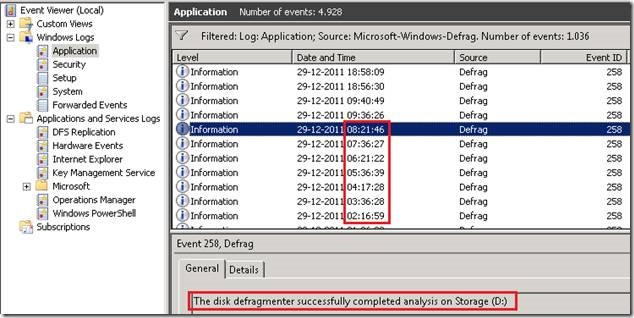

Looking in Event Viewer, we could see (via a filtered view) that something was asking the defragmentation service to analyse the fragmentation status of the disks approximately every hour. The files on the disks were never actually being consolidated, just analysed!

Only C: and D: were being scanned, not E:, which is the other fixed disk. Both C: and E: are fairly empty; D: stores the user data.
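Spotting a pattern like “approximately every hour” in a filtered event list can also be checked programmatically. A minimal Python sketch, assuming you have exported the timestamps of the defragmentation analysis events from the filtered view. `looks_periodic`, the one-hour period and the 10-minute tolerance are illustrative assumptions, and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median


def looks_periodic(timestamps, period=timedelta(hours=1), tolerance=timedelta(minutes=10)):
    """True if the median gap between events is within `tolerance` of `period`."""
    ts = sorted(timestamps)
    if len(ts) < 3:
        return False  # too few events to call it a schedule
    gaps = [(later - earlier).total_seconds() for earlier, later in zip(ts, ts[1:])]
    return abs(median(gaps) - period.total_seconds()) <= tolerance.total_seconds()


# Hypothetical event times: roughly hourly, with a few minutes of jitter.
start = datetime(2000, 1, 1, 9, 2)
events = [start + timedelta(minutes=m) for m in (0, 61, 119, 181, 240)]
print(looks_periodic(events))  # True
```

Using the median gap rather than the mean keeps one missed or extra run from hiding an otherwise regular schedule.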


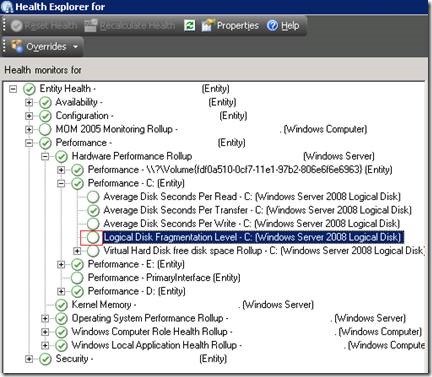

So starting at the top of the list, I looked in SCOM (System Center Operations Manager) to see what was configured in the Base Server OS management pack relating to disks. However, SCOM is not set to run defragmentation analysis:

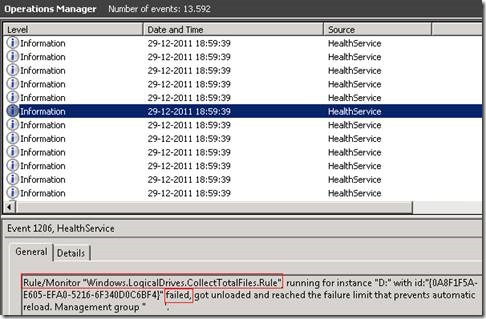

The SCOM event log on the file server showed that the agent was trying to process the rule or monitor but failing:

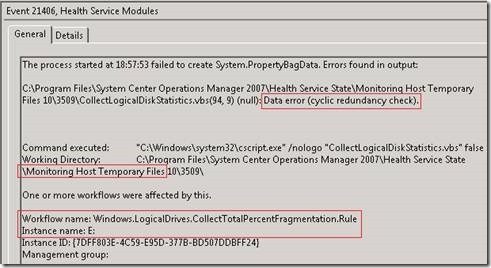

It looks like some files in the SCOM temp folder relating to the E: drive, the one drive the defragmenter was not analysing, were corrupt. The log shows that defragmentation analysis was never attempted on E:.

Deleting these SCOM temp files and restarting the Health service caused the following chain of events:

- The SCOM agent found out that the defrag rule had been disabled and stopped running the script.

- This caused the defrag analysis job to stop running

- This caused the MFT to stop being cached in RAM

- After some time (in this case, hours rather than minutes), this caused the server to become responsive to user-mode processes (like logon, Task Manager, etc.) again. Note that kernel-mode activity, like file sharing, was never affected.

4. Wrap-Up

I’ll repeat what I mentioned above: the root cause in your environment could be something completely different. In this case it was corrupt temporary data in the monitoring software preventing the new management pack rules from being downloaded. If you see similar symptoms, I’d look at:

- Monitoring services

- Asset management applications

- Auditing agents

- Disk optimisation software

Finally, there is a service which can artificially limit the cache usage to prevent these kinds of problems from impacting the overall system. It’s called the “Dynamic Cache Service” and is discussed further in the support article “You experience performance issues in applications and services when the system file cache consumes most of the physical RAM”.

If your root cause is not something which can be fixed or worked around, as we did with the file server above, then you can contact Microsoft Support to obtain this service for Windows 7 and Windows Server 2008 R2. Windows Server 2003, Vista and Windows Server 2008 can access a version of the service from here.
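The idea behind capping the cache can be sketched as arithmetic: pick a maximum cache size that always leaves a reserve of RAM for everything else. The Python below is a hypothetical policy for illustration only, not the Dynamic Cache Service’s actual algorithm or configuration; `cache_cap_mb`, `available_after_cache`, `reserve_mb`, `floor_mb` and all the numbers are assumptions.

```python
def cache_cap_mb(total_ram_mb, reserve_mb, floor_mb=128):
    """Hypothetical cap: leave `reserve_mb` free, but never cap the cache below `floor_mb`."""
    if reserve_mb < 0 or floor_mb < 0:
        raise ValueError("sizes must be non-negative")
    return max(total_ram_mb - reserve_mb, floor_mb)


def available_after_cache(total_ram_mb, other_use_mb, cache_demand_mb, cap_mb):
    """RAM left over (MB) once the cache has grown as far as the cap allows."""
    cache = min(cache_demand_mb, cap_mb)
    return total_ram_mb - other_use_mb - cache


# Hypothetical 4GB server: 500 MB used elsewhere, metadata caching wants 3400 MB.
print(available_after_cache(4096, 500, 3400, cap_mb=4096))                      # 196
print(available_after_cache(4096, 500, 3400, cap_mb=cache_cap_mb(4096, 1536)))  # 1036
```

The trade-off is that a capped cache holds less metadata, so some file operations go back to disk; the benefit is that user-mode work keeps enough memory to stay responsive.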

And if you’re reading this and think it’s super interesting (as I do), then have a read of this article on the Microsoft Windows Dynamic Cache Service.

Posted by Frank Battiston, MSPFE Editor