Monitoring a Busy Azure Automation Infrastructure (Part 2)

Keeping Operations and our customers happy with telemetry

OK, so as we established in part one of this subject we’ve got a ton of Azure Automation (AA) runbook job history stored in Azure, and we need to begin collecting it and storing somewhere that is quick and easy to access and can serve as a feed for an AA jobs dashboard. Here in part two, I'll provide an overview of how I went about getting this done.

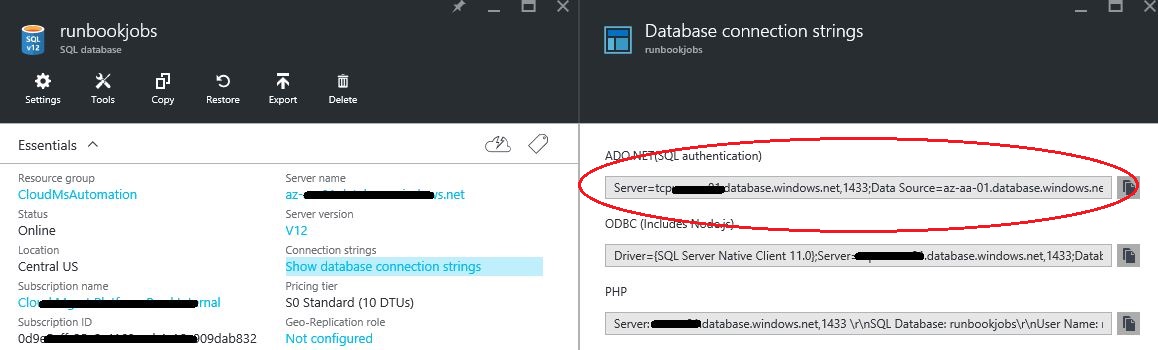

The first order of business for me was to provision a Azure SQL DB instance. This was a bit of a no-brainer decision. It is super simple to setup and aside from managing logins, there is little to no maintenance overhead to bother us in the future. If you aren’t familiar with the process of setting up Azure SQL, there is a ton of helpful information available online including a video hosted here to guide you through the process to ‘create DBs in seconds.’ One detail I will mention here is that once your DB is provisioned, you can access the connection string from the portal which will be needed in just a bit.

[caption id="attachment_45" align="alignnone" width="1158"] Connection String[/caption]

Connection String[/caption]

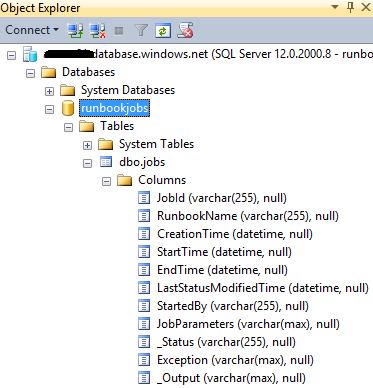

OK, great! So now we’ve got an Azure SQL DB stood up named runbookjobs. We’ll connect to it through the SQL Server Management Studio using the login specified when we provisioned it and create a single table called jobs with columns for each of the data points we want to store about runbook job history for future reference. I’m a huge fan of simplicity so (for now at least) this simple schema will suffice.

[caption id="attachment_55" align="alignnone" width="373"] Jobs Table[/caption]

Jobs Table[/caption]

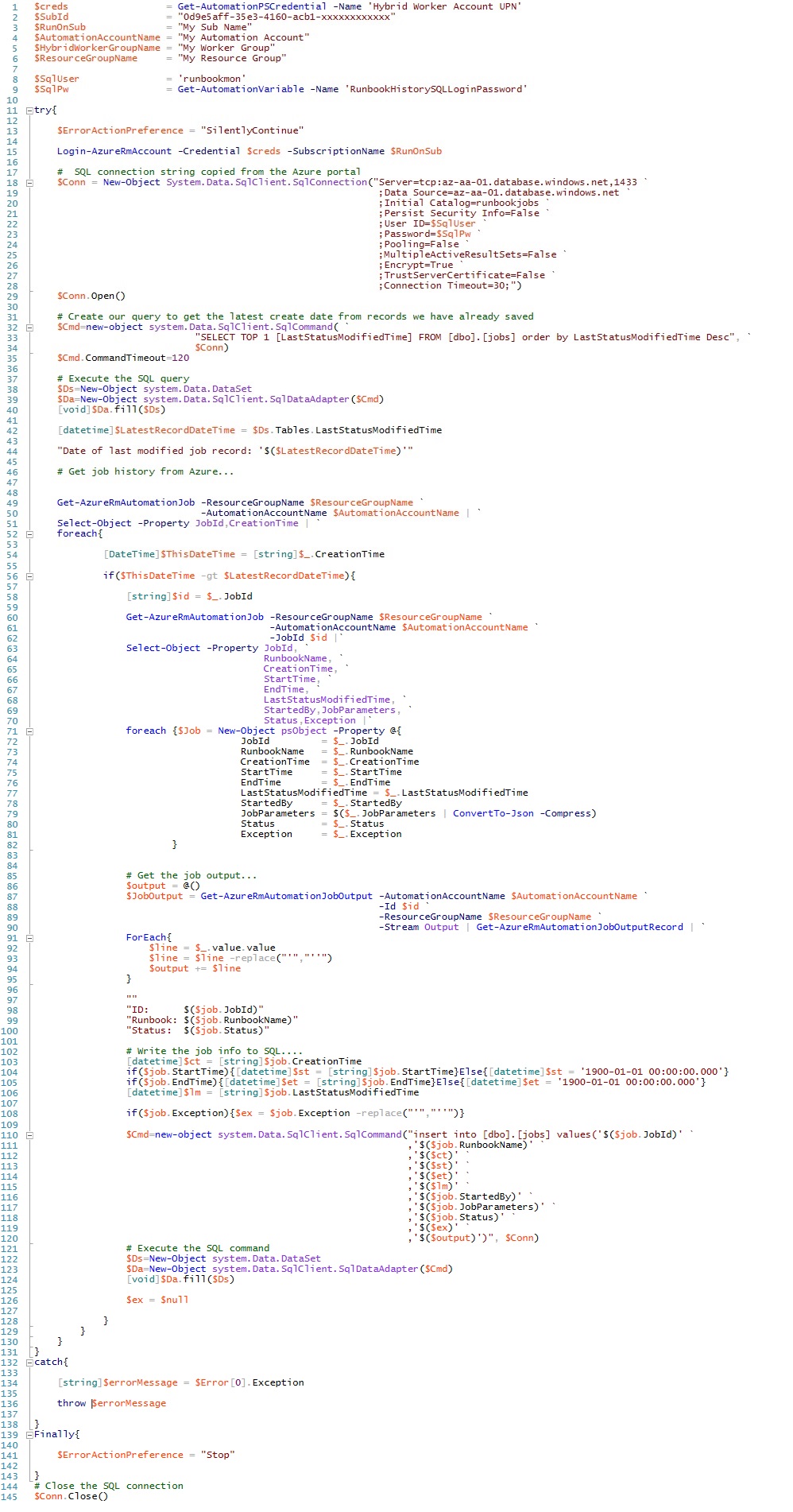

Right on, now we’ve got a home for our job history data. Next we’ve got to get it populated. What better way to do that then to create a PowerShell runbook in AA to do the work? What I did was to create a runbook with the hugely imaginative name of Get-RunbookJobHistory and started writing the script to pull runbook job history stored in Azure and stuff it into our shiny, new runbookjobs DB.

Getting a the runbook job history is not too difficult, especially if you’ve previously done it in SMA to monitor child runbook status from a parent runbook or other process. The concept is pretty much the same, except that only a summary of the job output is available in the output stream of you are not referencing them directly with a JobId. To get the complete job output, you need find your job using the Get-AzureRmAutomationJob and then reference the job by JobId with the Get-AzureRmAutomationJobOutput cmdlet.

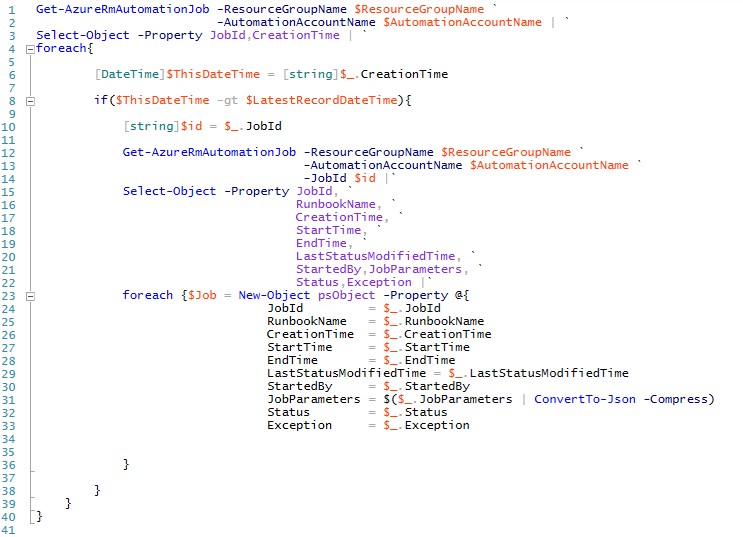

So, let’s go ahead and get the Jobs we care about along with the properties of each we can get now for storing in SQL. After logging into Azure using a credential asset stored in AA we can iterate through our list of jobs. Here, I’m going to collect all of the jobs that have been created since the last time my history collection ran:

[caption id="attachment_75" align="alignnone" width="743"] Get Jobs[/caption]

Get Jobs[/caption]

You can see above that we are simply walking the list of jobs run in the Azure Automation Account we are interested in and then for each, if it is newer than the last one we collected during the last cycle, we use the JobId we found for it to reference it again directly so we will have access to the full job output stream. For each job then, getting 10 of the 11 values we want for each job is readily available and we pluck them right out and stuff them into a custom object.

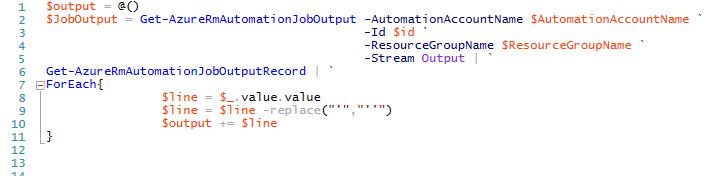

For the all important output stream, there is one more step we need to take. We'll stuff the following snippet into the foreach loop to get the output details:

[caption id="attachment_85" align="alignnone" width="709"] Get Job Output[/caption]

Get Job Output[/caption]

Here we have to make a 3rd call to Azure using the previously mentioned Get-AzureRmAutomationJobOutput cmdlet to get the complete job output. Then, we pipe that to the Get-AzureRmAutomationJobOutputRecord cmdlet which finally gets us access to the full output stream. You may also notice above that as I’m grabbing the output, I am replacing single quotes with double quotes so that when we insert the data into SQL, we don’t goof up our query string.

Having to make so many calls out to Azure slows down the how fast we can walk through all of our jobs, but hey, that’s one of the points of using AA in the first place - let it handle those tedious, long running tasks for you silently in the background while you are getting real work done, right? I have this runbook running on a 4 hour schedule with a Webhook enabled to kick it off with a couple of lines of PoSh anytime the Operations team needs closer to real time data.

So this is pretty much it – all we need to do now is store the data in SQL and we are off to the races!

Below is the entire contents of the PowerShell runbook as it exists today, it isn’t terribly complex, but I hope it helps someone out.

FWIW, I desperately want to be able to place code here that you could copy and paste, but it is near impossible with the WordPress tools available for me here. If you want the actual PS1 file, hit me up at mattraff@msn.com and I'll get it to you.

[caption id="attachment_95" align="alignnone" width="998"] Full Runbook[/caption]

Full Runbook[/caption]