Holistic Approach to Energy Efficient Datacenters

By Dileep Bhandarkar, Ph.D.

Distinguished Engineer

Global Foundation Services, Microsoft

A little over three years ago, I joined Microsoft to lead the hardware engineering team that helped decide which servers Microsoft would purchase to run its online services. We had just brought our first Microsoft-designed datacenter online in Quincy and were planning to use its innovations as the foundation for our future datacenters. Before the launch of our Chicago datacenter, we had two separate engineering teams: one that designed our datacenters and another that designed our servers. The deployment of containers at the Chicago datacenter marked the first collaboration between the two design teams and set the tone for future design innovation in Microsoft datacenters.

As the teams began working together, our different perspectives on design brought a variety of questions to light. Datacenter design and server design are approached very differently, and each group had its own priorities. We found ourselves asking about container size, and whether the server-filled containers should be considered megaservers or individual datacenters in their own right. Or was a micro colocation approach a better alternative? Should we evaluate the server vendors based on their PUE claims? How prescriptive should we be about the power distribution and cooling approaches to be used?

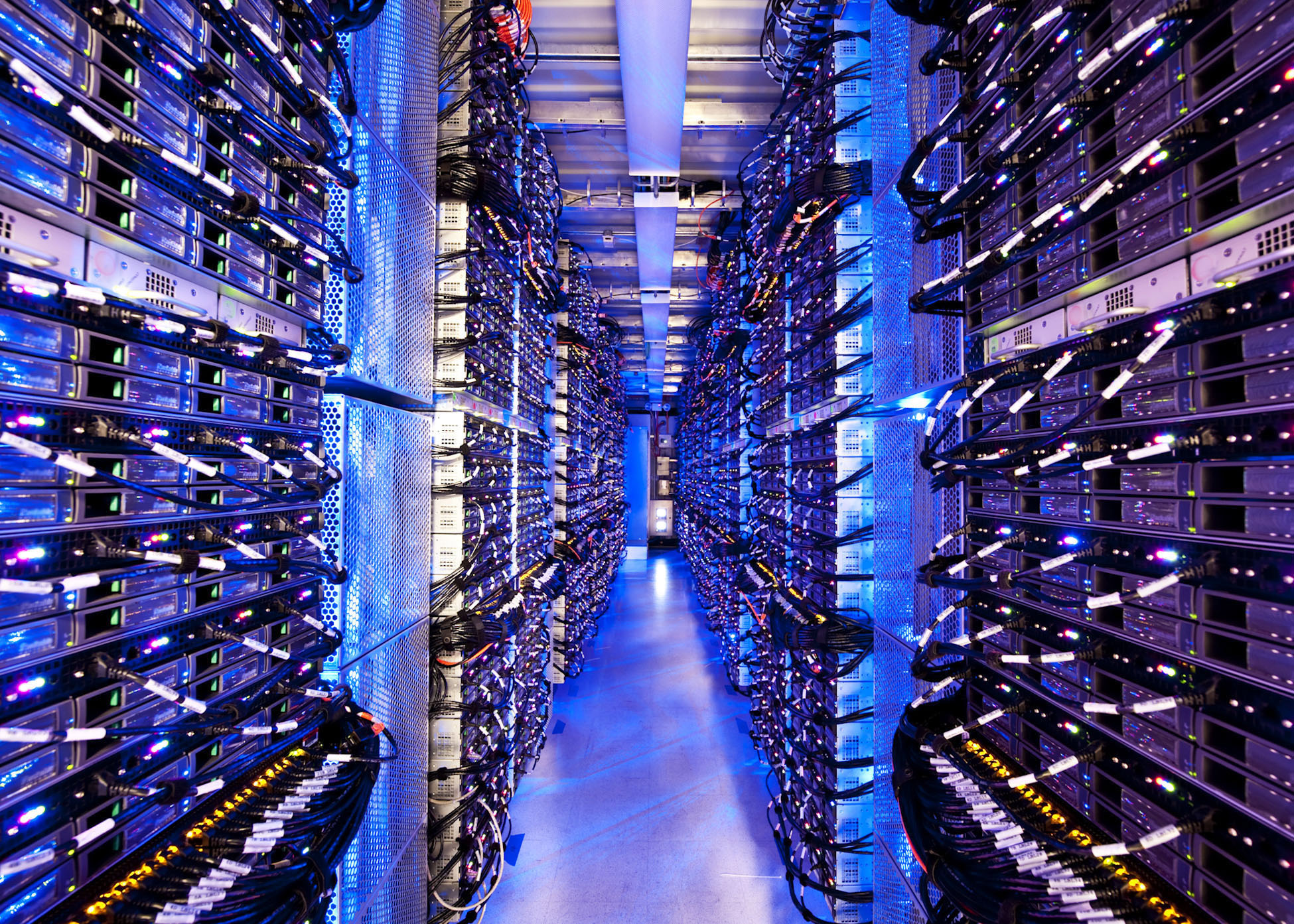

View inside a Chicago datacenter container

After much discussion, we decided to take a functional approach to answering these questions. We specified only the external interface: the physical dimensions, the amount and type of power connection, the temperature and flow rate of the water to be used for cooling, and the type of network connection. Instead of specifying exactly what we needed in the various datacenter components we were purchasing, we let the vendors design the products for us, and we were surprised at how different their proposals were. Our goal was to maximize the amount of compute capability per container at the lowest cost. We had already started to optimize the servers and specify their exact configurations with respect to processor type, memory size, and storage.

After tackling the external interface, we worked on optimizing the server and datacenter designs to operate more holistically. If the servers can operate reliably at higher temperatures, why not relax the cooling requirements for the datacenter? To test this, we ran a small experiment operating servers under a tent outside one of our datacenters, and the servers in the tent ran reliably. This experiment was followed by the development of the ITPAC, the next big step and the focus of our Generation 4 modular datacenter work.

Our latest whitepaper, “A Holistic Approach to Energy Efficiency in Datacenters,” details the background and strategy behind our efforts to design servers and datacenters as a holistic, integrated system. As we like to say here at Microsoft, “the datacenter is the server!” We hope that by sharing our approach to energy efficiency in our datacenters, we can contribute to the global effort to improve energy efficiency across the datacenter industry.

\db

The paper can be found on the Infrastructure page of the GFS web site at www.globalfoundationservices.com.