Search Folder Performance problem detection

One of the problems we see in support from time to time is High CPU or server not responding scenarios causing 623 events on Exchange servers. This has been talked about in great detail in the following links:

Troubleshooting Version Store issues - JET_errVersionStoreOutOfMemory

https://msexchangeteam.com/archive/2006/04/19/425722.aspx

Version Store issues revisited - Event ID 623 at Information Store service startup

https://msexchangeteam.com/archive/2008/05/23/448923.aspx

Dave did a good job of explaining what they are on what happens on the Exchange side when search folders are created in the following blog

Microsoft Exchange and Search Folders

https://blogs.msdn.com/dgoldman/archive/2008/07/01/microsoft-exchange-and-search-folders.aspx

For a simple look at how to create a visible Search Folder in outlook, see https://office.microsoft.com/en-us/outlook/HA100389111033.aspx

Most of the times, this problem occurs because of hidden search folders that Dave mentions.

To break this down in to simple terms, you can think of Version Store of a predetermined amount of memory to build a queue for transactions in the store which can be tracked via the Version Buckets allocated counter in Performance monitor. What happens in this case, is that the amount of Version Buckets goes up due to a queued transaction taking a long time to finish. Since this queue is serialized, any transaction queued that takes a long time to complete will back up any other request behind it. During Store startup, there are a lot of transactions that are occurring, so you essentially get a snowball effect causing the store to go unresponsive.

This could also happen during runtime as well, but the bad part is that if you have hidden search folders causing this problem, this queued transaction will survive a restart of the information store and that is when things get really bad.

To resolve this problem, you will normally have to dump the store.exe process and have someone from CSS look at the dump to confirm what the underlying problem is and who is causing it.

After looking at this from the performance side as this fits in to the performance category, you can actually see this by adding just a few counters in perfmon to detect this type situation.

As I mentioned before, The Version buckets allocated counter is what you use to track transactions in the store and is also how we trigger getting the store dump for analysis.

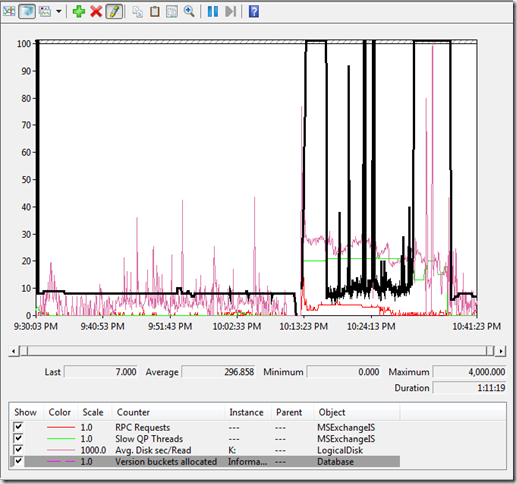

Here is what this looks like in Performance monitor. This blg file was taken at 5 second intervals.

As you can see about in the middle of the perfmon, is when the Store process was restarted denoted by the dip in version buckets. As soon as the store started, Version buckets shot all the way up to a maximum of 4,000. That is extremely high and bad for Store health.

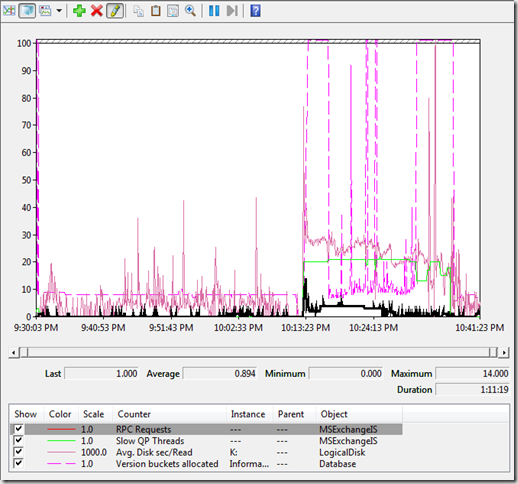

One of the characteristics of this phenomenon, is during the time of these queued transactions, you will see RPC requests flat line as shown below. What this means is that during the time that the search folder is being updated, we are performing a deep search traversal. Remember that I mentioned earlier that this was serialized? Well, while we are processing that transaction, that is the only thing we are doing, so we are not creating or removing any RPC threads currently running, thus the flat line for this counter.

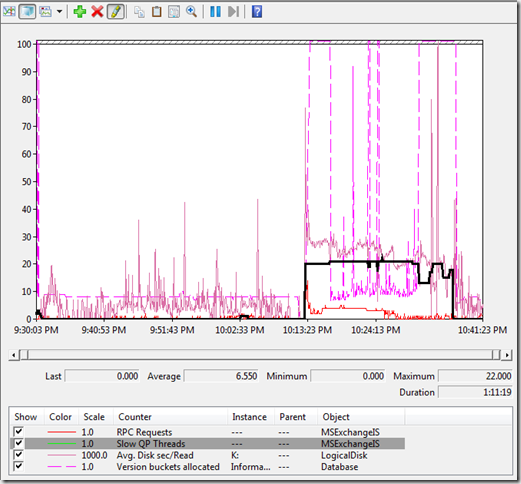

OK, so now you have this pattern, but another counter to add is Slow QP threads. This counter is essentially incremented when an un-optimized search for a string in the text context or subject occurred. This is what a Search folder really is basing it on some text or some property of a message. This confirms what is happening here. During normal operations, this counter should remain below 10 at all times, ideally under 5 on average.

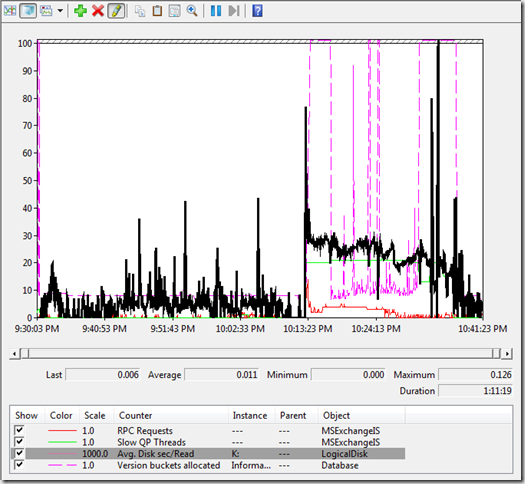

One of the side effects of these searches is a high rate of Disk Read activity due to the amount of data we are requesting.

The unfortunate problem with finding who the culprit is that you have to take that mailbox store down and run an isinteg -dump against that database. Once you have this file, you can use Dave's method of searching through the file to determine who has a high amount of Search folders in their mailbox using his SearchFolderFinder utility at https://blogs.msdn.com/dgoldman/archive/2008/08/26/searchfolderfinder-has-now-been-posted.aspx

Maybe this will give you some fighting power in tackling these problems if not now, but in the future as well.

cya!!