The Case of the Slow Project File Opens

If you’ve seen one of my Case of the Unexplained presentations (like the one I delivered at TechEd Europe last month that’s posted for on-demand viewing), you know that I emphasize how thread stacks are a powerful troubleshooting tool for diagnosing the root cause of performance problems, buggy behavior, crashes and hangs (I provide a brief explanation of what a stack is in the TechEd presentation). That’s because often times the explanation for a process’s behavior lies in the code it loads, either explicitly like in the case of DLLs it depends on, or implicitly like for processes that host extensions. This case is another demonstration of successful stack troubleshooting. It also shows how a little time troubleshooting to get a couple of clues can quickly lead to a solution.

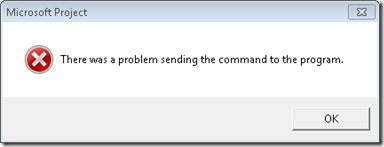

The case opened when the customer, a network administrator, contacted Microsoft support because a user reported that Microsoft Project files located on a network share were taking up to a minute to open and about 1 in 10 times the open resulted in an error:

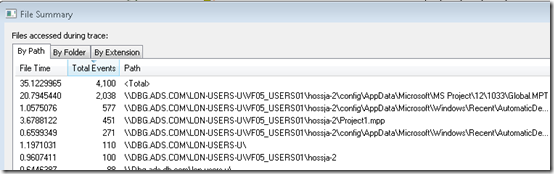

The administrator verified the issue, checked networking settings and latency to the file server, but could not find anything that would explain the problem. The Microsoft support engineer assigned to the case asked the administrator to capture a Process Monitor and Network Monitor traces of a slow file open. After receiving the log a short time later, he opened the log and set a filter to include only operations issued by the Project process and then another filter to include paths that referenced the target file share, \\DBG.ADS.COM\LON-USERS-U. The File Summary dialog, which he opened from Process Monitor’s Tools menu, showed significant time spent in file operations accessing files on the share, shown in the File Time column:

The paths in the trace revealed that the user profiles were stored on the file server and that the launch of Project caused heavy access of the profile’s AppData subdirectory. If many users had their profiles stored on the same server via folder redirection and were running similar applications that used stored data in AppData, that would surely account for at least some of the delays the user was experiencing. It’s well known that redirecting the AppData directory can result in performance problems, so based on this, the support engineer arrived at his first recommendation: for the company to configure their roaming user profiles to not redirect AppData and to sync the AppData directory only at logon and logoff as per the guidance found in this Microsoft blog post:

Special considerations for AppData\Roaming folder:

If the AppData folder is redirected, some applications may experience performance issues because they will be accessing this folder over the network. If that is the case, it is recommended that you configure the following Group Policy setting to sync the AppData\Roaming folder only at logon and logoff and use the local cache while the user is logged on. While this may have an impact on logon/logoff speeds, the user experience may be better since applications will not freeze due to network latency.User configuration>Administrative Templates>System>User Profiles>Network directories to sync at Logon/Logoff.

If applications continue to experience issues, you should consider excluding AppData from Folder Redirection – the downside of doing so is that it may increase your logon/logoff time.

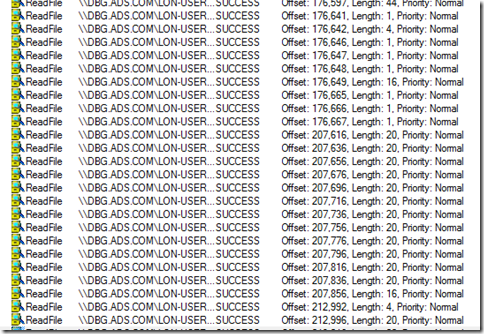

Next, the engineer examined the trace to see if Project was responsible for all the traffic to files like Global.MPT, or if an add-in was responsible. This is where the stack trace was indispensible. After setting a filter to show just accesses to Global.MPT, the file that accounted for most of the I/O time as shown by the summary dialog, he noticed that it was opened and read multiple times. First, he saw 5 or 6 long runs of small random reads:

The stacks for these operations showed that Project itself was responsible, however:

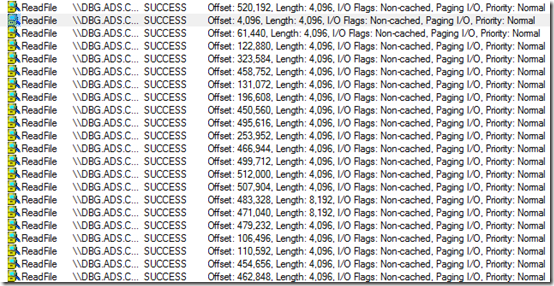

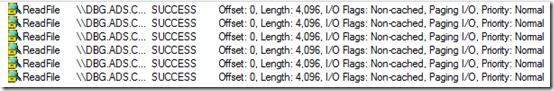

He also saw sequences of large, non-cached reads. The small reads he looked at first were cached, so there would be no network access after the first read caused the data to cache locally, but non-cached reads would go to the server every time, making them much more likely to impact performance:

To make matters worse, he saw this sequence six times in the trace, which you can see with a filter set to just show the initial read of each sequence:

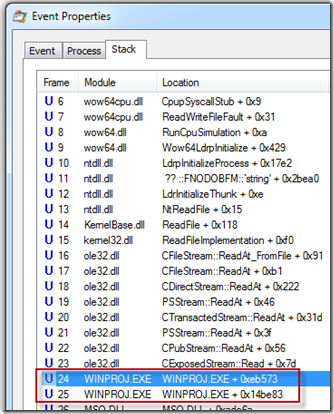

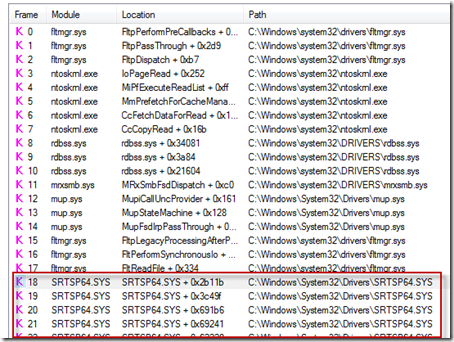

The stacks for these reads revealed them to be the result of a third-party driver, which was visible by the fact that the stack trace dialog, which he’d configured to obtain symbols from Microsoft’s public symbol servers, showed no symbol information:

Further, the stack frames higher up the same stack showed that the sequence of reads were being performed within the context of Project opening the file, which is a behavior common to on-access virus scanners:

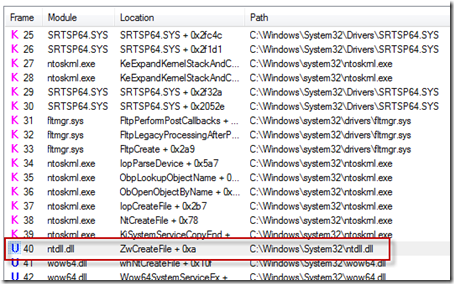

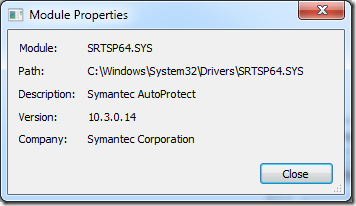

Sure enough, double-clicking on one of the SRTSP64.SYS lines in the stack dialog confirmed that it was Symantec AutoProtect that was repeatedly performing on-access virus detection each time Project opened the file with certain parameters:

Typically, administrators configure antivirus on file servers, so there’s no need for clients to scan files they reference on servers since client-side scanning simply results in duplicative scans. This lead to the support engineer’s second recommendation, which was for the administrator to set an exclusion filter on their client antivirus deployment for the file share hosting user profiles.

In less than fifteen minutes the engineer had written up his analysis and recommendations and sent them back to the customer. The network monitor trace merely served as confirmation of what he observed in the Process Monitor trace. The administrator proceeded to implement the suggestions and a few days later confirmed that the user was no longer experiencing long file loads or the errors they had reported. Another case closed with Process Monitor and thread stacks.