Loading a Trained Model Dynamically in an Azure ML Web Service

This post is authored by Ahmet Gyger, Program Manager at Microsoft.

We are very excited to announce the availability of a new module named "Load Trained Model". As its name indicates, this module allows you to load a trained model (also referred to as an iLearner file) in Azure ML into an experiment at runtime. When used on a published predictive web service, in combination with web service parameters, it allows you to dynamically select a trained model for scoring.

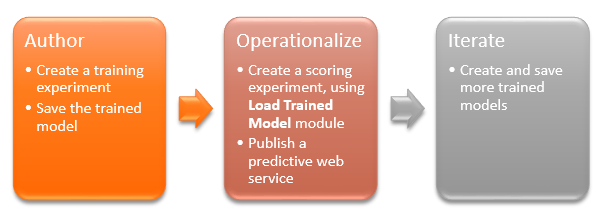

Training multiple models on different datasets that share an identical schema and an identical training workflow is a very common scenario. (This article explains how this can be done using automation.) After you have obtained the models, you want to put them into operation. But you might not want to create an individual web service for each trained model, particularly when the number of models is high and the scoring workflows are identical apart from the model used. You could accomplish that using PowerShell automation and web service endpoints, as suggested in the aforementioned article, but it can still be cumbersome and difficult to manage.

With the new Load Trained Model module, you can create a single predictive web service and dynamically load a trained model based on your client logic. This greatly simplifies the process and improves manageability.

For example, one of our customers uses Azure ML for predictive building maintenance. They created hundreds of trained models, one for each building they maintain. The training experiment workflow (algorithms, data format, etc.) is exactly the same for each model; only the training data is unique to each building. The predictive experiment workflow is also identical, except that a different model is used for each building. Instead of deploying one web service per building, they can now create one predictive experiment with a Load Trained Model module, generate just one web service, and use a web service parameter to select which trained model to use for each batch of predictions. This significantly reduces their cost of maintaining the web service, and the scoring model can also be updated much more easily.
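To make the per-building selection concrete, here is a minimal client-side sketch in Python. It assumes a naming convention that is not part of the original scenario: one iLearner file per building, stored in a single container. The container name, the file naming pattern, and both helper functions are hypothetical.

```python
from urllib.parse import quote

# Assumed layout (hypothetical): one iLearner file per building in one container.
MODEL_CONTAINER = "trained-models"


def model_path_for_building(building_id: str) -> str:
    """Return the assumed blob path of the trained model for one building."""
    if not building_id:
        raise ValueError("building_id must be non-empty")
    # Percent-encode the ID so unusual characters cannot break the path.
    return f"{MODEL_CONTAINER}/building-{quote(building_id, safe='')}.ilearner"


def group_by_model(readings):
    """Group readings by model path so that each batch uses exactly one model."""
    batches = {}
    for reading in readings:
        path = model_path_for_building(reading["building_id"])
        batches.setdefault(path, []).append(reading)
    return batches
```

Each key of the dictionary returned by `group_by_model` could then be supplied as the web service parameter value for the batch of rows stored under it.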

Load a Trained Model: The End-to-End Process

How to Use the Load Trained Model Module

To start using the new Load Trained Model module, you must first obtain the iLearner file, which is a binary representation of a trained model in Azure ML. The easiest way to obtain the iLearner file is to follow the typical process of invoking the retraining API. Essentially, you create a training experiment, attach a web service output module to the Train Model module in that experiment, generate a training web service from it, and invoke the training web service, which then produces the trained model in iLearner format in a designated Azure blob storage account. Please follow this article for more details on the retraining workflow in Azure ML.

Once you have the iLearner file, you can upload it to an Azure blob storage account (or host it at a public HTTP endpoint) and then configure the Load Trained Model module to point to that location. When the experiment is run, the iLearner file is loaded and deserialized into a model, which can then be used for scoring. If you mark the parameters of the Load Trained Model module as web service parameters, then after you generate a predictive web service from the predictive experiment you can, at runtime, pass the path to the iLearner file as the web service parameter value and so load models dynamically, on demand, for making predictions.
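As a rough illustration of passing the model path at runtime, the Python sketch below builds a request for a predictive web service without sending it. It assumes the JSON request shape commonly used by Azure ML request-response services, with an `Inputs` section and a `GlobalParameters` section. The input name `input1`, the parameter name `Trained model path`, and the helper `build_scoring_request` are assumptions for illustration; use the names shown on your own web service's API help page.

```python
import json
import urllib.request


def build_scoring_request(endpoint_url, api_key, column_names, rows, model_path):
    """Build (but do not send) a scoring request that selects a model at runtime."""
    body = {
        # "input1" is an assumed web service input name.
        "Inputs": {"input1": {"ColumnNames": column_names, "Values": rows}},
        # Assumed web service parameter name exposed by the Load Trained Model module.
        "GlobalParameters": {"Trained model path": model_path},
    }
    headers = {
        "Content-Type": "application/json",
        "Authorization": "Bearer " + api_key,
    }
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send the request. Changing only the `model_path` argument between calls is what lets a single web service score with different trained models.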

More Information

For more information, be sure to check out these additional articles:

- Load Trained Model: Detailed technical information about the module.

- Retrain a Machine Learning Model: Overview of the standard retraining process.

- Deploy the Training Experiment as a Training Web Service: Description of how to set up a training web service to output a trained model in .iLearner format.

- Load a Trained Deep Learning Model: Gallery example illustrating the use of the Load Trained Model module.

Ahmet

@metah_ch