Announcing Microsoft Machine Learning Library for Apache Spark

This post is authored by Roope Astala, Senior Program Manager, and Sudarshan Raghunathan, Principal Software Engineering Manager, at Microsoft.

We're excited to announce the Microsoft Machine Learning library for Apache Spark – a library designed to make data scientists more productive on Spark, increase the rate of experimentation, and leverage cutting-edge machine learning techniques – including deep learning – on very large datasets.

Simplifying Data Science for Apache Spark

We've learned a lot by working with customers using SparkML, both internal and external to Microsoft. Customers have found Spark to be a powerful platform for building scalable ML models. However, they struggle with low-level APIs, for example when indexing strings, assembling feature vectors, and coercing data into the layout expected by machine learning algorithms. Microsoft Machine Learning for Apache Spark (MMLSpark) simplifies many of these common tasks for building models in PySpark, making you more productive and letting you focus on the data science.

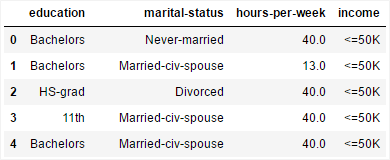

The library provides simplified, consistent APIs for handling different types of data, such as text or categoricals. Consider, for example, a DataFrame that contains strings and numeric values from the Adult Census Income dataset, where "income" is the prediction target.

To featurize and train a model for this data using vanilla SparkML, you would have to tokenize strings, convert them into numerical vectors, assemble those vectors into a single feature vector, and index the label column. These operations result in a substantial amount of code that is not modular, because it depends on the data layout and the chosen ML algorithm. In MMLSpark, by contrast, you can simply pass the data to the model, and the library takes care of the rest. Furthermore, you can easily change the feature space and algorithm without having to re-code your pipeline.

from pyspark.ml.classification import LogisticRegression

from mmlspark import TrainClassifier

model = TrainClassifier(model=LogisticRegression(), labelCol=" income").fit(trainData)

predictions = model.transform(testData)
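For contrast with the MMLSpark call above, here is a plain-Python sketch (no Spark required) of the bookkeeping that string indexing, feature assembly and label indexing involve. It is only an illustration under stated assumptions: the rows, the column names and the helpers `fit_string_index` and `assemble_row` are hypothetical, and the most-frequent-first index order is modeled on the default behavior of SparkML's `StringIndexer`, not taken from MMLSpark itself.

```python
from collections import Counter

# Hypothetical rows loosely shaped like census data; all values are made up.
rows = [
    {"education": "Bachelors", "hours_per_week": 40, "income": "<=50K"},
    {"education": "HS-grad", "hours_per_week": 50, "income": ">50K"},
    {"education": "Bachelors", "hours_per_week": 35, "income": "<=50K"},
]


def fit_string_index(values):
    """Map each distinct string to an integer, most frequent first.

    Ties are broken alphabetically so the mapping is deterministic.
    """
    counts = Counter(values)
    ordered = sorted(counts, key=lambda v: (-counts[v], v))
    return {value: index for index, value in enumerate(ordered)}


def assemble_row(row, string_indexes, numeric_cols):
    """Build one flat feature vector: indexed strings first, then numerics."""
    indexed = [float(string_indexes[col][row[col]]) for col in string_indexes]
    numeric = [float(row[col]) for col in numeric_cols]
    return indexed + numeric


# Step 1: learn an index for every string feature column.
string_indexes = {"education": fit_string_index(r["education"] for r in rows)}
# Step 2: index the label column separately.
label_index = fit_string_index(r["income"] for r in rows)
# Step 3: assemble the feature vectors and the numeric labels.
features = [assemble_row(r, string_indexes, ["hours_per_week"]) for r in rows]
labels = [label_index[r["income"]] for r in rows]
```

Every step here depends on knowing which columns are strings and which are numeric, which is why this kind of code tends to be rewritten whenever the data layout changes.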

MMLSpark uses DataFrames as its core datatype and integrates with SparkML pipelines for composability and modularity. It is implemented as Python bindings over Scala APIs, ensuring native JVM performance.

Deep Learning and Computer Vision at Scale

Deep Neural Networks (DNNs) are a powerful technique and can yield near-human accuracy for tasks such as image classification, speech recognition and more. But building and training DNN models from scratch often requires special expertise, expensive compute resources and access to very large datasets. An additional challenge is that DNN libraries are not easy to integrate with SparkML pipelines. The data types and APIs are not readily compatible, so integration requires custom UDFs, which introduce additional code complexity and data marshalling overhead.

With MMLSpark, we provide easy-to-use Python APIs that operate on Spark DataFrames and are integrated into the SparkML pipeline model. By using these APIs, you can rapidly build image analysis and computer vision pipelines that use cutting-edge DNN algorithms. The capabilities include:

- DNN featurization: Using a pre-trained model is a great approach when you're constrained by time or the amount of labeled data. You can use pre-trained state-of-the-art neural networks such as ResNet to extract high-order features from images in a scalable manner, and then pass these features to traditional ML models, such as logistic regression or decision forests.

- Training on a GPU node: Sometimes, your problem is so domain specific that a pre-trained model is not suitable, and you need to train your own DNN model. You can use Spark worker nodes to pre-process and condense large datasets prior to DNN training, then feed the data to a GPU VM for accelerated DNN training, and finally broadcast the model to worker nodes for scalable scoring.

- Scalable image processing pipelines: For a complete end-to-end workflow for image processing, DNN integration is not enough. Typically, you have to pre-process your images so they have the correct shape and normalization, before passing them to DNN models. In MMLSpark, you can use OpenCV-based image transformations to read in and prepare your data.
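As a rough illustration of the "correct shape and normalization" step in the last item above, the sketch below resizes a tiny grayscale image with nearest-neighbor sampling and scales its pixels to the range [0, 1]. It is plain Python, not MMLSpark's OpenCV-based transformations; the function names, the 2x2 target size and the sample pixel values are assumptions chosen only for this example.

```python
def resize_nearest(image, out_height, out_width):
    """Resize a 2-D list of pixels by nearest-neighbor sampling."""
    in_height, in_width = len(image), len(image[0])
    return [
        [
            image[row * in_height // out_height][col * in_width // out_width]
            for col in range(out_width)
        ]
        for row in range(out_height)
    ]


def normalize(image, max_value=255.0):
    """Scale pixel intensities into the range [0, 1]."""
    return [[pixel / max_value for pixel in row] for row in image]


# A made-up 4x4 grayscale image with 8-bit intensities.
raw = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [51, 51, 102, 102],
    [51, 51, 102, 102],
]

# Shrink to a hypothetical 2x2 model input shape, then normalize.
prepared = normalize(resize_nearest(raw, 2, 2))
```

In a real pipeline the same two steps would run per image across the cluster, so that every image reaches the DNN with the same shape and value range.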

Consider, for example, using a neural network to classify a collection of images. With MMLSpark, you can simply initialize a pre-trained model from Microsoft Cognitive Toolkit (CNTK) and use it to featurize images with just a few lines of code. We perform transfer learning by using a DNN to extract features from images, and then pass them to traditional ML algorithms such as logistic regression.

from mmlspark import CNTKModel

cntkModel = CNTKModel().setInputCol("images").setOutputCol("features").setModelLocation(resnetModel).setOutputNode("z.x")

featurizedImages = cntkModel.transform(imagesWithLabels).select(['labels','features'])

model = TrainClassifier(model=LogisticRegression(),labelCol="labels").fit(featurizedImages)

Note that the CNTKModel is a SparkML PipelineStage, so you can compose it with any SparkML transformations and estimators in a scalable manner. For more examples, see our Jupyter notebooks for image classification.

Open Source

To make Spark better for everyone, we've released MMLSpark as an Open Source project on GitHub – and we would welcome your contributions. For example, you can:

- Provide feedback as GitHub issues, to request features and report bugs.

- Contribute documentation and examples.

- Contribute new features and bug fixes, and participate in code reviews.

Getting Started

You can quickly get started by installing the library on your local computer as a container from Docker Hub using this one-line command:

docker run -it -p 8888:8888 -e ACCEPT_EULA=yes microsoft/mmlspark

Then, when you're ready to take your model to scale, you can install the library on your cluster as a Spark package. The library can be installed on any Spark 2.1 cluster, including on-premises, Azure HDInsight, and Databricks clusters.

Take a look at our GitHub repository for installation instructions, links to documentation, and example Jupyter Notebooks.

Roope & Sudarshan

Apache®, Apache Spark, and Spark® are either registered trademarks or trademarks of the Apache Software Foundation in the United States and/or other countries.