Microsoft expands availability of Project Oxford intelligent services

Posted by Ryan Galgon, Senior Program Manager in the Technology & Research group at Microsoft.

Earlier this year, we announced Project Oxford, a set of APIs, SDKs and services that developers can use to make their applications more intelligent, engaging and discoverable. Project Oxford expands upon Microsoft’s portfolio of machine learning APIs and enables developers to easily add intelligent features – such as vision, speech, facial recognition and language understanding – into their applications. Project Oxford face, speech and computer vision APIs have also been included as part of the Cortana Analytics Suite.

Starting today, we’ve further expanded the reach of Project Oxford’s Language Understanding Intelligent Service (LUIS) with public beta availability, Chinese language support and more pre-built models. In addition, beta versions of the Project Oxford face, computer vision and speech SDKs are now available on GitHub.

Chinese Language Support and New Features for LUIS

LUIS allows developers to build a model that understands natural language and commands tailored to their application.

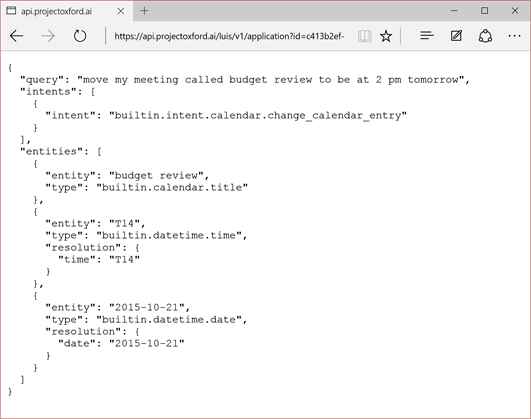

Example LUIS analysis of the utterance “move my meeting called budget review to be at 2 pm tomorrow”.

LUIS determines both the intent of the utterance (change_calendar_entry), and entities like times, dates and the event title.
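
To make the shape of such a result concrete, here is a minimal Python sketch of how a client app might act on it. The dictionary layout, the field names (`intents`, `entities`, `score`, `type`), the entity type names and the scores are all assumptions made for illustration, not the documented LUIS response format.

```python
# Hypothetical result for the utterance above; the field names and values
# are illustrative assumptions, not the actual LUIS response schema.
sample_result = {
    "query": "move my meeting called budget review to be at 2 pm tomorrow",
    "intents": [
        {"intent": "change_calendar_entry", "score": 0.92},
        {"intent": "none", "score": 0.05},
    ],
    "entities": [
        {"entity": "budget review", "type": "event_title"},
        {"entity": "2 pm", "type": "time"},
        {"entity": "tomorrow", "type": "date"},
    ],
}


def top_intent(result):
    """Return the name of the highest-scoring intent, or None if there are none."""
    intents = result.get("intents", [])
    if not intents:
        return None
    return max(intents, key=lambda item: item["score"])["intent"]


def entities_by_type(result):
    """Group entity strings by their type, preserving the order they appear in."""
    grouped = {}
    for item in result.get("entities", []):
        grouped.setdefault(item["type"], []).append(item["entity"])
    return grouped
```

A calendar app could then dispatch on `top_intent(sample_result)` and read the new time and date from `entities_by_type(sample_result)`.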

Formerly available by invitation only, LUIS is now accessible to all developers as a public beta. New features available today include:

- Chinese language support: Chinese support has been one of our most commonly requested features, and now you can create LUIS applications in English or Chinese. In addition, LUIS correctly processes Chinese utterances that include fragments of English.

- Application import and export: Now, all of the data you've entered into a LUIS application can be downloaded to a JSON object and new applications can be created by importing that JSON object. This allows developers to copy applications, share applications with others and check their applications into source control – for example, to be versioned alongside the code for the client app that calls LUIS.

- Increased coverage of pre-built models: LUIS provides access to many of the same models that power Microsoft products, and we’ve more than tripled the number of intents and entities available, to 196 from 56.
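
As a rough illustration of the source-control workflow that import and export make possible, the following Python sketch rewrites an exported application file with sorted keys and fixed indentation so that diffs between versions stay small. The sample contents and field names are hypothetical; the actual layout of a LUIS export may differ.

```python
import json


def normalize_export(raw_json):
    """Re-serialize exported JSON with sorted keys and stable indentation."""
    data = json.loads(raw_json)
    # ensure_ascii=False keeps non-English utterances readable in diffs.
    return json.dumps(data, sort_keys=True, indent=2, ensure_ascii=False) + "\n"


# Hypothetical export contents, used only to show the round trip.
exported = '{"name": "LightControl", "intents": ["turn_off", "turn_on"], "culture": "zh-cn"}'
normalized = normalize_export(exported)
```

Because the output depends only on the data and not on the original key order, normalizing an already normalized file leaves it unchanged.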

Since launch, we’ve worked closely with early adopters on our MSDN forum, and we’ve added bulk import of unlabeled utterances, regular expression machine-learning features, a super-charged date-time entity and a lot of UI polish.

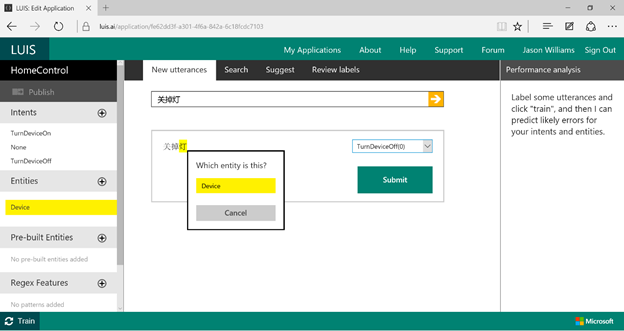

The LUIS labeling UI makes it easy to label utterances in English or Chinese, and quickly build custom models. English translation: “Turn off the light”.

So what applications have developers been building with LUIS? We've been amazed! For example, Meekan is a company that develops textual robots for multiple platforms (Slack, HipChat) that help busy people make more of their schedule. What sets the robot apart is its ability to recognize complex time ranges and actions ("schedule meeting after Wednesday around noon", or "move tomorrow's meeting to Friday afternoon"). Meekan uses LUIS for all intent identification and entity tagging, both to differentiate between actions the company’s textual robot can perform and actions it cannot, and to find the important entities in user sentences.

“LUIS saved us tremendous time while going from a prototype to production,” said Co-Founder & CTO of Meekan.com Eyal Yavor. “We focused on user interaction, and LUIS was working in the background, helping us understand users' usage. Starting with just a few sample sentences, LUIS's feedback loop helped improve the robot quickly.”

Microsoft GigJam is also using LUIS for its language understanding. Currently in private preview, GigJam is an unprecedented new way for teams to accomplish tasks and transform business processes by breaking down the barriers between devices, apps and people. When GigJam users say "Bring up my mail," "Filter these emails by orders" or (after circling an invoice on the screen) "share this with John", it is LUIS that processes the varied and fluid utterances.

Developers have also told us about using LUIS to build dialog systems for ordering pizza, controlling devices in the home, booking a taxi and playing music. By pairing LUIS with other Project Oxford services, developers have built some truly unexpected applications, with more than 4,000 models and counting. For example, using Project Oxford's Speech API, developers built a system for scoring how well people say tongue twisters. By pairing LUIS with Project Oxford's vision API for optical character recognition, developers have even used LUIS to identify the text of "social memes" in images.

Project Oxford SDKs now available on GitHub

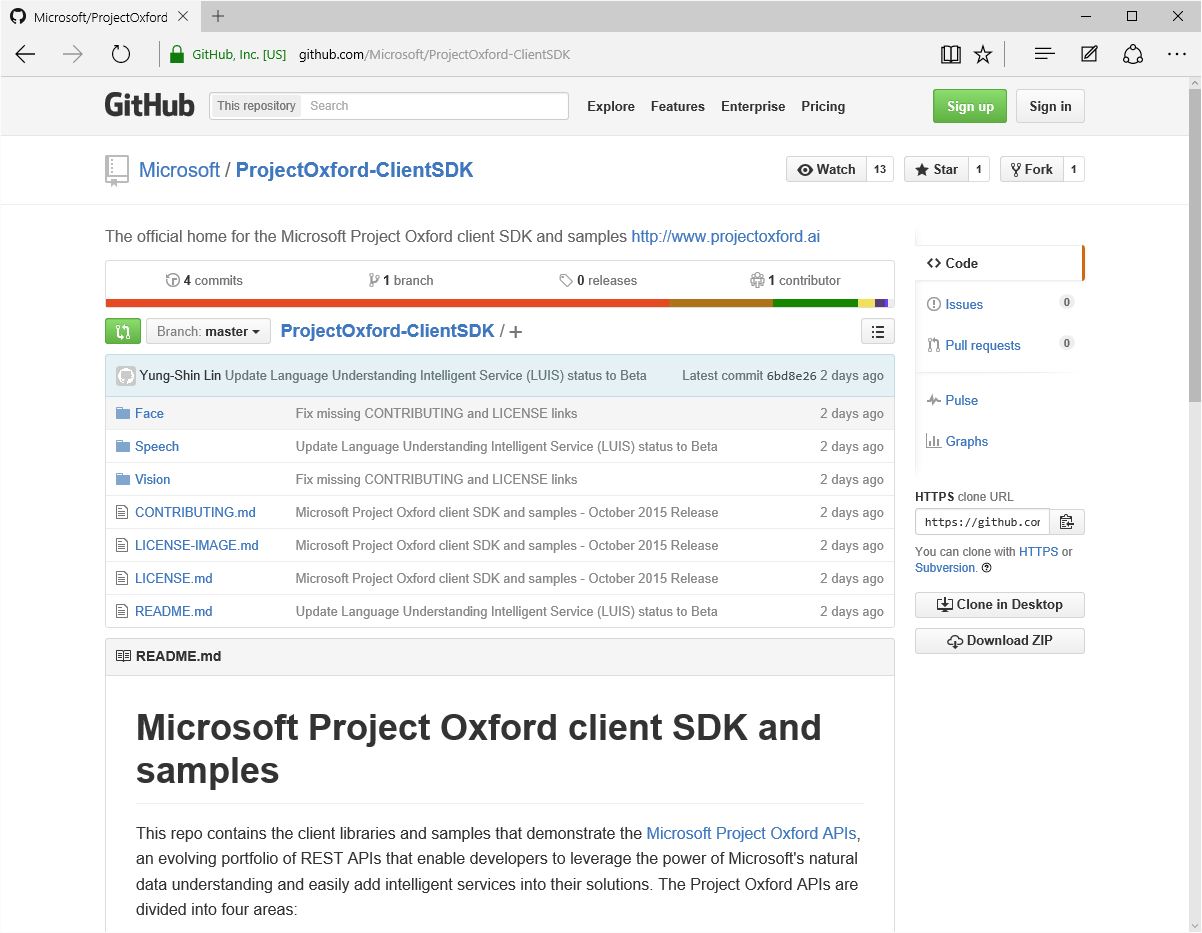

Since we launched Project Oxford, Microsoft developers have used the APIs to create popular sites like How-Old.net and TwinsOrNot.net. Today we’re making it easier for developers to create their own applications, by adding Project Oxford face, computer vision and speech SDKs to GitHub.

The official Project Oxford repo for client SDKs enables all developers to view the source code, access the history, file issues or even contribute to the code.

This will enable us to better work with the community and build a more inclusive, robust platform. The repo contains Windows and Android support for face, computer vision and speech APIs, and we are working to onboard the remaining packages as well as increase our SDK offerings in iOS and Node.js, both top requests from developers. Follow, fork or contribute at https://github.com/Microsoft/ProjectOxford-ClientSDK. We welcome your comments, issues and pull requests. And GitHub isn’t the only place you will find us – in order to improve workflow you can also access our Windows and Android packages on NuGet and Maven, respectively.

Try Project Oxford SDKs today by visiting https://www.projectoxford.ai/sdk or GitHub. We’re looking forward to seeing what you can build.

Ryan