Deploy an S2D Lab with a Script on a Single Machine

Recently, people in a local discussion group were talking about using VMs to validate Storage Spaces Direct (S2D) functionality. That's not something new: Claus posted a blog two months ago introducing how to deploy Storage Spaces Direct in Azure (https://blogs.technet.microsoft.com/filecab/2016/05/05/s2dazuretp5/).

In this blog I will show you how I deploy an S2D environment on a single machine with a script.

Why?

- Some people don't have a 10Gb physical network in their lab, but internal and private virtual switches can provide a 10Gb link (although, due to hardware limitations, they might not always achieve 10Gb/s throughput).

- If you want to demo S2D at an event or a customer meeting, you probably don't want to waste your time troubleshooting network connectivity issues.

How?

I wrote a script to help me deploy an end-to-end S2D environment in an automated way. You can download the script from https://github.com/ostrich75/s2d-lab . You can also modify it. For example, if your machine doesn't have 64GB of memory, you may want to decrease the memory size of each VM.
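If you need to pick a smaller per-VM memory size, a rough budget calculation can help. The sketch below is only an illustration and is not part of the script: the function name `per_vm_memory_gb`, the 4GB host reserve, and the 2GB for the DC VM are all assumptions, so adjust them to your own setup.

```python
def per_vm_memory_gb(host_gb, node_count=3, host_reserve_gb=4, dc_gb=2):
    """Estimate whole GB of memory for each S2D node VM.

    host_reserve_gb and dc_gb are assumed values for illustration,
    not numbers taken from the s2d-lab script.
    """
    if node_count < 1:
        raise ValueError("node_count must be at least 1")
    # Memory left after the host OS and the DC VM take their share.
    available = host_gb - host_reserve_gb - dc_gb
    # Split evenly across the node VMs, rounding down to whole GB.
    per_vm = available // node_count
    if per_vm < 1:
        raise ValueError("not enough host memory for this many nodes")
    return per_vm


if __name__ == "__main__":
    for host_gb in (64, 32, 16):
        print(f"{host_gb}GB host: {per_vm_memory_gb(host_gb)}GB per node VM")
```

Whatever number you get, treat it as an upper bound and check it against what the VMs actually need for your demo.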

If you just want to use it directly, please make sure to read the README.MD file. Basically, you need a DC and a DHCP server in the (virtual) network. I also zipped and uploaded my DC and a Sysprepped Windows Server 2016 image. You can download them and import them to a Windows Server 2016 TP5 Hyper-V host.

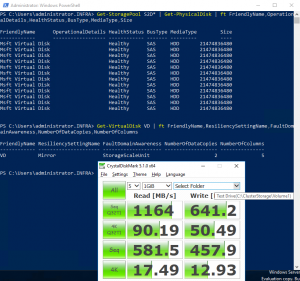

I used the script this morning to deploy a 3-node S2D hyper-converged environment on my laptop (ThinkPad P50, i7-6820HQ, 64GB memory, SM951a NVMe 512GB x2).

Here are some quick test results (for fun only).