Build an All-Flash, Shared-Nothing, Highly Available Scale-Out Storage System for Private Cloud

An NVMe drive can deliver up to 10x the performance of a traditional SAS/SATA SSD. However, because it plugs into a PCIe slot rather than into a RAID controller, there is no way to create a hardware RAID array across multiple NVMe drives. You can still use the software RAID capability of the operating system (e.g. Storage Spaces in Windows Server 2012/2012 R2) to build a software RAID set. But for an enterprise that needs high availability, the server itself remains a single point of failure.

Unlike a SAS SSD, which supports multipath and can be attached to multiple HBAs, an NVMe drive can only be plugged into one PCIe slot in one server. The question, then, is how to get the performance benefit of NVMe drives while keeping the high availability that enterprises require.

At Ignite China, I demonstrated a 3-node hyper-converged Hyper-V cluster based on Storage Spaces Direct (S2D). With S2D, you can build a high-performance, highly available storage system from NVMe drives directly attached to individual servers.

Hardware:

- WOSS-H2-10 (Dell 730xd, 2 x E5-2630 v3, 128GB memory, 2 x 2TB SATA HDD in RAID 1, 1 x Intel P3700 400GB NVMe drive, 1 x Mellanox ConnectX-3 10Gb NIC + 1 x 1Gb NIC)

- WOSS-H2-12 (Dell 730xd, 2 x E5-2630 v3, 128GB memory, 2 x 2TB SATA HDD in RAID 1, 1 x Intel P3700 800GB NVMe drive, 1 x Mellanox ConnectX-3 10Gb NIC + 1 x 1Gb NIC)

- WOSS-H2-14 (Dell 730xd, 2 x E5-2630 v3, 128GB memory, 2 x 2TB SATA HDD in RAID 1, 1 x Intel P3700 400GB NVMe drive, 1 x Mellanox ConnectX-3 10Gb NIC + 1 x 1Gb NIC)
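The three P3700 drives differ in size (400GB, 800GB, 400GB), so it is worth estimating how much mirrored capacity the pool can offer. The small Python sketch below is my own illustration, not an S2D tool. It makes three assumptions:

- Each node is one fault domain.
- Every slab of data keeps `copies` copies on distinct nodes.
- Pool metadata, reserve space and slab rounding are ignored.

Under those assumptions a node can hold at most one copy of each slab. A usable size U is therefore achievable exactly when the per-node capacities, each capped at U, add up to at least copies x U.

```python
def mirror_usable(capacities, copies=2):
    """Largest logical size U that can be stored with `copies` copies per slab,
    each copy on a different node. Assumes fractional placement and ignores
    pool metadata, reserve space and slab rounding."""
    if copies > len(capacities):
        return 0.0  # not enough distinct nodes for the requested copies

    def feasible(u):
        # A node stores at most one copy of each slab, so it contributes min(c, u).
        return sum(min(c, u) for c in capacities) >= copies * u

    hi = sum(capacities) / copies  # can never store more than raw / copies
    if feasible(hi):
        return hi
    lo = 0.0
    for _ in range(200):  # bisection: feasible sizes form an interval [0, U*]
        mid = (lo + hi) / 2
        if feasible(mid):
            lo = mid
        else:
            hi = mid
    return lo


print(mirror_usable([400, 800, 400]))  # 800.0 (GB, two-way mirror)
```

For this hardware the bound is 800GB out of 1,600GB raw. The 800GB node can store at most one copy of everything, so the two 400GB nodes together must hold the other copy.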

Software:

- Windows Server 2016 Technical Preview 3/4 Datacenter Edition

Deployment Steps:

Refer to the guide below to understand what S2D is and how to set up an S2D cluster. Most of the steps here are the same as in that guide; I will highlight the differences.

https://technet.microsoft.com/en-us/library/mt126109.aspx

Install Windows Server 2016 Technical Preview 3/4 Datacenter Edition on the above three host machines.

Join the above three host machines to the domain (bluecloud.microsoft.com in my case).

Rename the 1Gb NIC's network connection to "VM" on each of the host machines.

Run the cmdlets below on each of the host machines.

- Install-WindowsFeature -Name Hyper-V -IncludeManagementTools -Restart

- New-VMSwitch -Name Lab-Switch -NetAdapterName "VM"

On one of the three machines, run the following cmdlets.

New-Cluster -Name WOSS-H2-C1 -Node WOSS-H2-10,WOSS-H2-12,WOSS-H2-14 -NoStorage

(Get-ClusterNetwork "Cluster Network 1").Role = "ClusterAndClient"

(Get-ClusterNetwork "Cluster Network 2").Role = "ClusterAndClient"

Enable-ClusterS2D -S2DCacheMode Disabled

New-StoragePool -StorageSubSystemName WOSS-H2-C1.bluecloud.microsoft.com -FriendlyName Pool -WriteCacheSizeDefault 0 -FaultDomainAwarenessDefault StorageScaleUnit -ProvisioningTypeDefault Fixed -ResiliencySettingNameDefault Mirror -PhysicalDisk (Get-StorageSubSystem -Name WOSS-H2-C1.bluecloud.microsoft.com | Get-PhysicalDisk | ? CanPool -eq $true)
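The -FaultDomainAwarenessDefault StorageScaleUnit setting tells the pool to treat each server as a fault domain, so the two copies of a mirrored slab never land on the same node. The Python sketch below is a hypothetical greedy allocator that illustrates that rule: each slab goes to the nodes with the most free space, never twice to the same node. It is not the actual Storage Spaces placement algorithm, and the 1GB slab size is an arbitrary choice for the example.

```python
def place_slabs(free_gb, slab_gb, n_slabs, copies=2):
    """Hypothetical greedy placement: put each slab's copies on the `copies`
    nodes with the most free space, never two copies on the same node.
    Illustrates node-level fault-domain awareness only; NOT the real allocator."""
    free = dict(free_gb)
    placement = []
    for _ in range(n_slabs):
        # Nodes that can still take one more copy, most free space first.
        candidates = sorted((n for n in free if free[n] >= slab_gb),
                            key=lambda n: free[n], reverse=True)
        if len(candidates) < copies:
            raise RuntimeError("not enough distinct nodes with free space")
        chosen = candidates[:copies]  # distinct nodes by construction
        for node in chosen:
            free[node] -= slab_gb
        placement.append(tuple(chosen))
    return placement, free


nodes = {"WOSS-H2-10": 400, "WOSS-H2-12": 800, "WOSS-H2-14": 400}
placement, remaining = place_slabs(nodes, slab_gb=1, n_slabs=800)
print(placement[:3], remaining)
```

With this example pool, the allocator places exactly 800 one-GB slabs before every node is full. Asking for one more raises an error, because two distinct nodes with free space no longer exist.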

New-Volume -StoragePoolFriendlyName Pool -FriendlyName VD10 -PhysicalDiskRedundancy 1 -FileSystem CSVFS_REFS -Size 230GB

New-Volume -StoragePoolFriendlyName Pool -FriendlyName VD12 -PhysicalDiskRedundancy 1 -FileSystem CSVFS_REFS -Size 230GB

New-Volume -StoragePoolFriendlyName Pool -FriendlyName VD14 -PhysicalDiskRedundancy 1 -FileSystem CSVFS_REFS -Size 230GB

Set-FileIntegrity C:\ClusterStorage\Volume1 -Enable $false

Set-FileIntegrity C:\ClusterStorage\Volume2 -Enable $false

Set-FileIntegrity C:\ClusterStorage\Volume3 -Enable $false

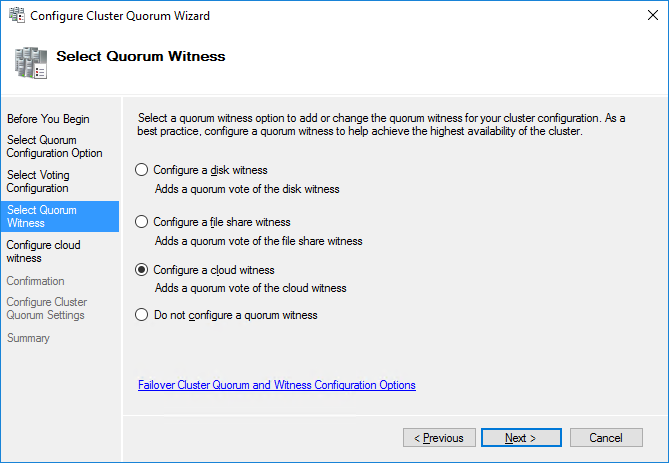
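One detail is worth checking when sizing the three volumes. PowerShell's GB suffix is binary, so -Size 230GB means 230 x 1024^3 bytes, while drive capacities are usually quoted in decimal GB. The Python sketch below assumes the P3700s expose exactly 400/800/400 decimal GB; real formatted capacity differs slightly. It also ignores pool metadata and reserve space. It checks that two copies of the three volumes (-PhysicalDiskRedundancy 1 on a mirror) fit with at most one copy per node.

```python
GIB = 1024 ** 3  # PowerShell's "230GB" literal means 230 * 1024**3 bytes
GB = 1000 ** 3   # assumption: drive sizes are decimal (vendor) GB

node_raw = {"WOSS-H2-10": 400 * GB, "WOSS-H2-12": 800 * GB, "WOSS-H2-14": 400 * GB}
volumes = {"VD10": 230 * GIB, "VD12": 230 * GIB, "VD14": 230 * GIB}
COPIES = 2  # two-way mirror keeps two copies of every slab

logical = sum(volumes.values())
footprint = COPIES * logical
raw = sum(node_raw.values())
# With one copy per node, all nodes except the largest must together
# be able to hold one full copy of the data.
others = raw - max(node_raw.values())

fits = footprint <= raw and logical <= others
print(f"logical {logical / GIB:.0f} GiB, footprint {footprint / GIB:.0f} GiB, "
      f"raw {raw / GIB:.1f} GiB, fits: {fits}")
```

The three volumes need 1,380 GiB of mirrored footprint against roughly 1,490 GiB raw. That leaves some room for pool metadata.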

Configure the quorum. I configured a cloud witness, which is a new feature in Windows Server 2016.

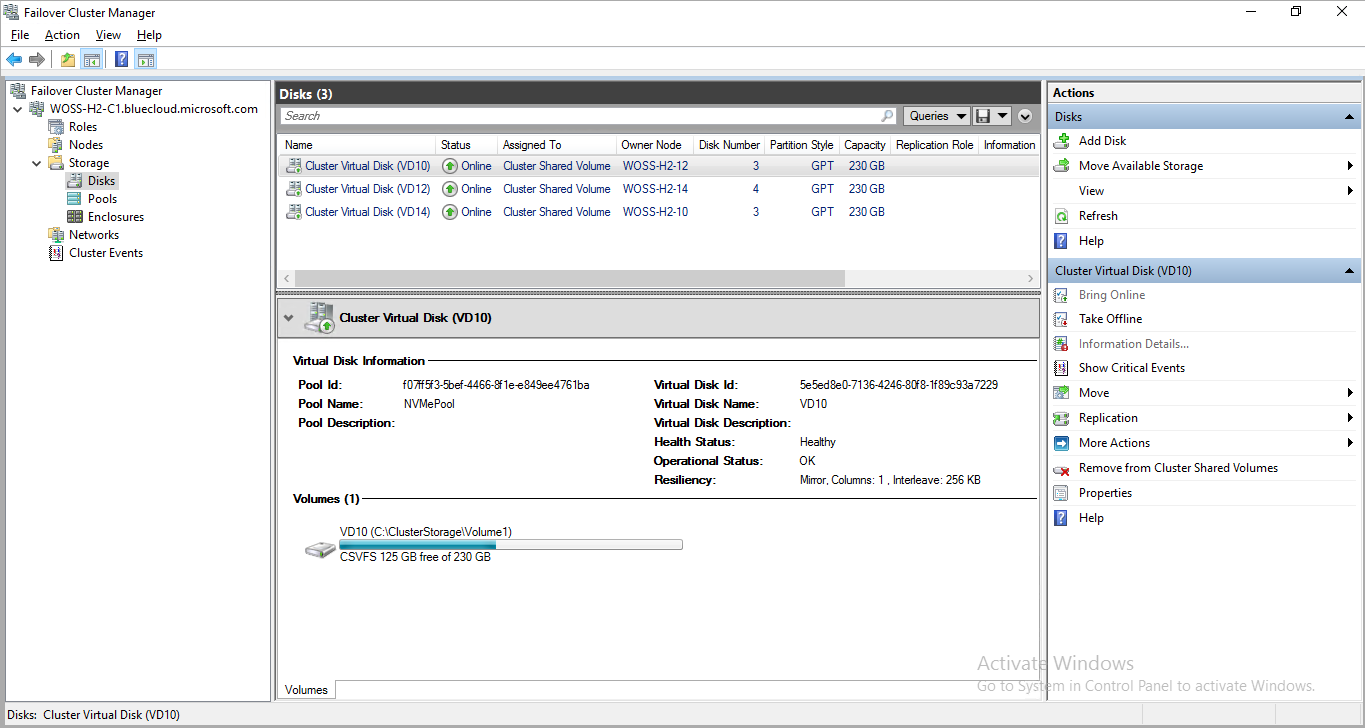

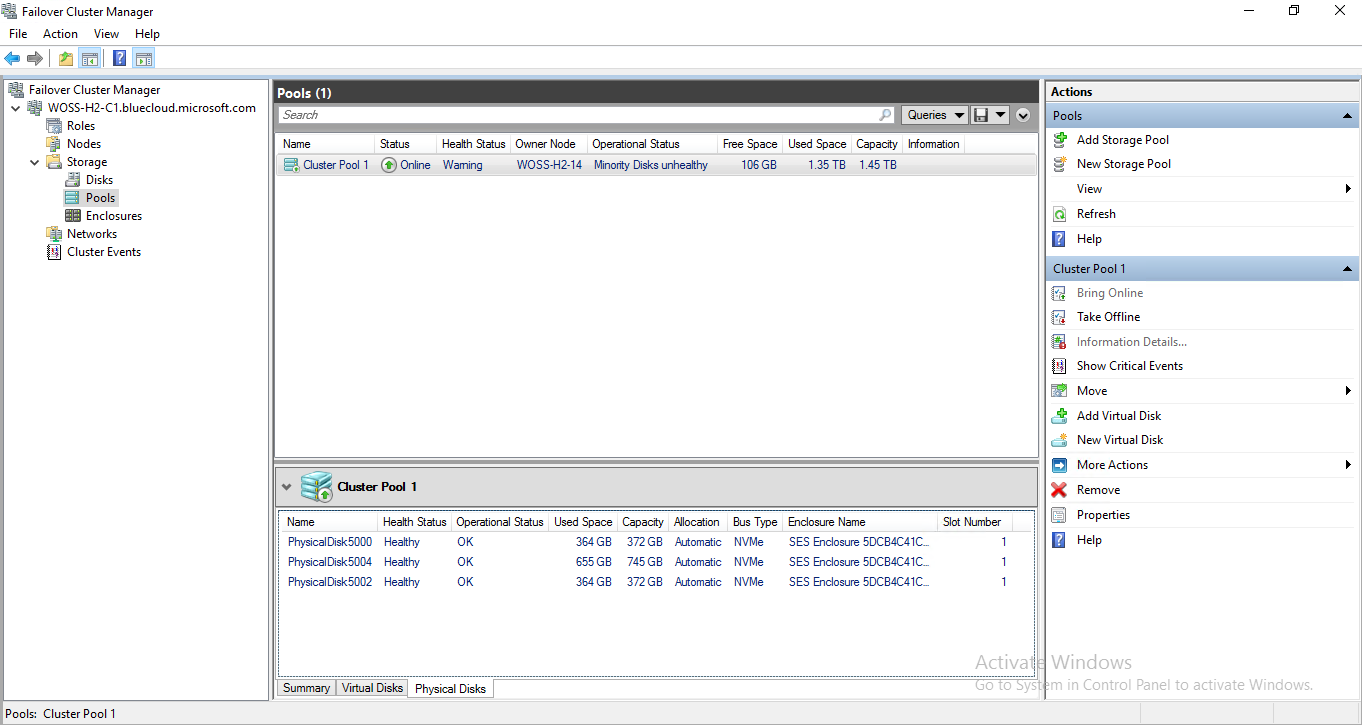
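With three nodes plus a cloud witness, the cluster stays up as long as a majority of votes is online. The Python sketch below is a simplified static-vote model: one vote per node, plus optionally one for the witness. It ignores Windows Server's dynamic quorum, which adjusts node votes as nodes leave. It models dynamic witness only through the hypothetical `witness_has_vote` flag. Since Windows Server 2012 R2, the witness vote counts only when the node votes would otherwise add up to an even number.

```python
def has_quorum(nodes_up, total_nodes, witness_up, witness_has_vote=True):
    """Static majority-vote model: each node has one vote and the witness has
    one vote if witness_has_vote is True. Ignores dynamic quorum adjustments."""
    total_votes = total_nodes + (1 if witness_has_vote else 0)
    votes = nodes_up + (1 if witness_has_vote and witness_up else 0)
    return votes > total_votes // 2  # strict majority of all configured votes


# Three nodes + witness, one node down, witness reachable: 3 of 4 votes.
print(has_quorum(2, 3, witness_up=True))
# Same failure with the witness also unreachable: a 2-of-4 tie, no majority...
print(has_quorum(2, 3, witness_up=False))
# ...unless the witness carries no vote (odd node count), giving 2 of 3.
print(has_quorum(2, 3, witness_up=False, witness_has_vote=False))
```

The last two calls show why the witness vote is adjusted dynamically. An odd total vote count avoids ties.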

You can now check the status of the storage pool and disks in Failover Cluster Manager.

As you can see, the only difference between my steps and the official guide is the "-S2DCacheMode" switch I used to disable the cache when enabling S2D. By default, S2D claims all SSDs as cache for HDDs. For all-flash configurations (NVMe or SAS/SATA SSD), you need to enable S2D with the cache disabled. For multi-tier flash (NVMe plus SSD), you can specify the bus type to use as cache. For example:

For all-flash:

Enable-ClusterS2D -S2DCacheMode Disabled

For multi-tier flash (NVMe for cache, SSD for storage):

Enable-ClusterS2D -S2DCacheDevice NVMe
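The three cases above amount to a small decision table. The hypothetical Python helper below encodes only those cases. The argument strings are copied from the Technical Preview 3/4 commands in this post, and parameter names may differ in later Windows Server 2016 builds.

```python
def s2d_cache_args(media_types):
    """Hypothetical helper: return the extra Enable-ClusterS2D arguments for a
    media mix, covering only the three cases described in this post."""
    media = set(media_types)
    if media == {"SSD", "HDD"}:
        # Default behaviour: S2D claims the SSDs as cache for the HDDs.
        return ""
    if media in ({"NVMe"}, {"SSD"}):
        # All-flash with a single media type: run without a cache tier.
        return "-S2DCacheMode Disabled"
    if media == {"NVMe", "SSD"}:
        # Multi-tier flash: NVMe acts as cache, SSD holds the data.
        return "-S2DCacheDevice NVMe"
    raise ValueError(f"media mix not covered by this sketch: {sorted(media)}")


print(s2d_cache_args(["NVMe", "NVMe", "NVMe"]))  # this post's 3-node setup
```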

Performance Test

Test case:

- Test file size: 100GB

- Test duration: 30 min

- I/O size: 8KB

- Read/write ratio: 70/30

- Access pattern: random

- All hardware and software caching disabled

- Threads: 16

- Queue depth: 16 outstanding I/Os per thread (256 in total)

.\diskspd.exe -c100G -d1800 -w30 -t16 -o16 -b8K -r -h -L -D C:\ClusterStorage\Volume1\IO.dat
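To make the test parameters explicit, the Python sketch below rebuilds the same DiskSpd command line from the test-case values. The helper names are hypothetical. Each switch is annotated based on the DiskSpd help text. The sketch only builds the argument list; running the test still requires diskspd.exe on a Windows host.

```python
def diskspd_args(target, file_size="100G", duration_s=1800, write_pct=30,
                 threads=16, outstanding=16, block="8K"):
    """Hypothetical helper that rebuilds this post's DiskSpd command line."""
    return [
        "diskspd.exe",
        f"-c{file_size}",    # create the test file with this size
        f"-d{duration_s}",   # test duration in seconds (1800 s = 30 min)
        f"-w{write_pct}",    # percentage of writes (30% writes = 70/30 R/W)
        f"-t{threads}",      # threads per target
        f"-o{outstanding}",  # outstanding I/Os per thread per target
        f"-b{block}",        # I/O block size
        "-r",                # random I/O, aligned to the block size
        "-h",                # disable software caching and hardware write caching
        "-L",                # collect latency statistics
        "-D",                # per-interval IOPS sampling (gives IOPS std dev)
        target,
    ]


def total_outstanding(threads=16, outstanding=16):
    """I/Os kept in flight against a single target."""
    return threads * outstanding


print(" ".join(diskspd_args(r"C:\ClusterStorage\Volume1\IO.dat")))
print(total_outstanding())
```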

Benchmark:

Local test of a single Intel P3700 800GB NVMe drive on the same platform:

- IOPS = 215,135.66

- IOPS StdDev = 10,862.92

- Avg Latency = 1.188 ms

- Latency StdDev = 1.163 ms

CSV1 (C:\ClusterStorage\Volume1) with RDMA:

- IOPS = 185,964.06

- IOPS StdDev = 17,182.95

- Avg Latency = 1.375 ms

- Latency StdDev = 1.504 ms
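Here is a quick sanity check on these numbers, assuming the latencies are in milliseconds (DiskSpd's unit). By Little's law, IOPS should be roughly the number of outstanding I/Os divided by the average latency. The test keeps 16 threads x 16 outstanding I/Os = 256 in flight. With that figure, the Python sketch below shows both runs agree with the prediction to within about 0.2%. It also shows the CSV over RDMA delivers roughly 86% of the local drive's IOPS at about 16% higher average latency.

```python
IN_FLIGHT = 16 * 16  # -t16 threads x -o16 outstanding I/Os per thread

results = {
    "local P3700": {"iops": 215_135.66, "lat_ms": 1.188},
    "CSV1 w/ RDMA": {"iops": 185_964.06, "lat_ms": 1.375},
}


def littles_law_iops(in_flight, lat_ms):
    """Little's law: throughput = concurrency / latency (latency in ms)."""
    return in_flight / (lat_ms / 1000.0)


for name, r in results.items():
    predicted = littles_law_iops(IN_FLIGHT, r["lat_ms"])
    print(f"{name}: measured {r['iops']:,.0f} IOPS, Little's law predicts {predicted:,.0f}")

ratio = results["CSV1 w/ RDMA"]["iops"] / results["local P3700"]["iops"]
latency_increase = results["CSV1 w/ RDMA"]["lat_ms"] / results["local P3700"]["lat_ms"] - 1
print(f"CSV delivers {ratio:.1%} of local IOPS with {latency_increase:.1%} higher latency")
```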