Everything you wanted to know about SR-IOV in Hyper-V Part 8

This part of the series is all about determining why SR-IOV may not be operational. As you will discover, there are several reasons, some of them obvious if you’ve followed all the parts so far, some more subtle. By the end of this part, you will be an expert!

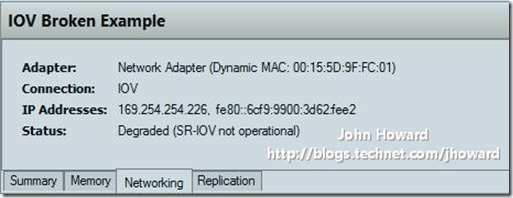

Assuming you have a switch in SR-IOV mode, and have enabled SR-IOV on a virtual network adapter, the most obvious place you will notice that SR-IOV isn’t working is in Hyper-V Manager after selecting the networking tab for a running virtual machine. (I love this panel – my favourite bit of Hyper-V Manager that I worked on for the Windows “8” release!)

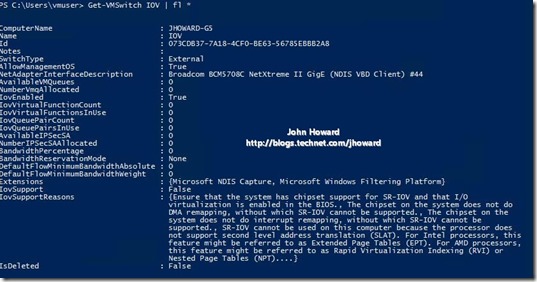

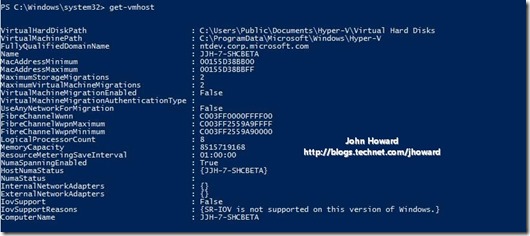

I’ve already outlined the dependencies in past posts. But let’s assume you haven’t heeded them and have done this on an older machine which isn’t SLAT capable, doesn’t have BIOS support for SR-IOV and doesn’t even have an SR-IOV capable network adapter, as in the following screenshot. The first clues will come from the Get-VMHost PowerShell cmdlet. In this case, the IovSupportReasons property returned from the cmdlet is pretty verbose in outlining a number of issues.
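If you’d rather pull out just the relevant properties than scan the full output, something like the following should do it. This is a minimal sketch: it assumes the Hyper-V PowerShell module is installed and that you are in an elevated session on the host. IovSupport isn’t discussed above, but it sits alongside IovSupportReasons on the object returned.

```powershell
# Show whether the host can support SR-IOV and, if it cannot, the reasons why.
Get-VMHost | Format-List IovSupport, IovSupportReasons
```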

Essentially you’re never going to get SR-IOV working on the above machine. So let’s move on…

The following example is a machine which has chipset support, but where the BIOS doesn’t have support for SR-IOV. This is probably the most common error you will find on servers currently shipping, or if you were to install Windows Server “8” beta on a desktop class machine. The error specifically is the first entry, which says “To use SR-IOV on this system, the system BIOS must be updated to allow Windows to control PCI Express. Contact your system manufacturer for an update.”

Next, let’s assume that the machine has chipset support, the BIOS has SR-IOV support, and you’re using a NIC which is capable of SR-IOV, but it still isn’t working. In this case, Get-VMHost may return the following:

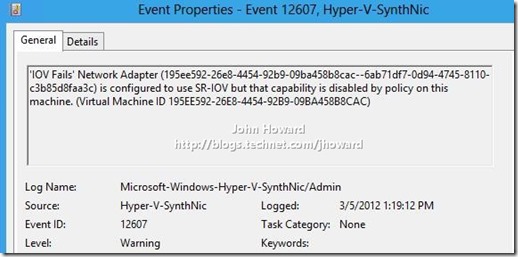

In addition, after a virtual network adapter is started (by changing the state of a virtual machine to running, or by toggling the IOVWeight property on a running virtual network adapter to a positive value in the range 1..100), the following may be logged in the event log, indicating that the use of SR-IOV has been disabled by policy on this system.

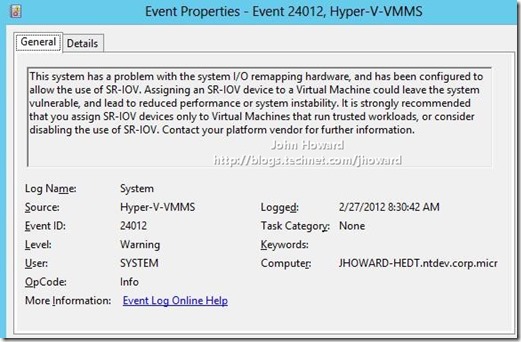

The reason for this takes a little explaining. Even if the system manufacturer has made the necessary changes in their BIOS for the base functionality Windows requires to support SR-IOV, some chipset implementations have flaws in them. In some cases, system manufacturers may be able to work around the problem with a fix in firmware. This is not universally true, and it may be that a revision to silicon is required which cannot be delivered by firmware alone (in other words, a revised motherboard). The result of these chipset flaws is that a guest operating system which has a VF assigned can cause the physical system to operate with reduced performance or, in the worst case, cause it to crash.

If you are prepared to assign VFs only to “trusted” workloads in lieu of an updated BIOS with a workaround (assuming one is possible on your hardware), you can add the following registry value on the parent partition: under HKLM\Software\Microsoft\Windows NT\CurrentVersion\Virtualization, create a DWORD value named IOVEnableOverride and set it to 1. The system should be restarted after setting this value. (Technically, you could instead restart the VMMS service and save/restore each running VM which has an IOVWeight set.)
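For those who prefer to script it, here is a sketch of setting that value from PowerShell. The path, name, type and value are exactly those given above; the only assumptions are that you are in an elevated session on the parent partition and that you are happy for the machine to restart straight away.

```powershell
# Assumption: elevated PowerShell session on the parent partition.
$path = 'HKLM:\SOFTWARE\Microsoft\Windows NT\CurrentVersion\Virtualization'

# Create (or overwrite) the DWORD value IOVEnableOverride = 1.
New-ItemProperty -Path $path -Name 'IOVEnableOverride' -PropertyType DWord -Value 1 -Force

# Restart the system so the override takes effect.
Restart-Computer
```

Only do this if you understand and accept the risk described above.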

Once the key is set, the following event will be logged on each startup. As long as you are comfortable with and understand the potential risk involved, SR-IOV should now work on a system with this registry key set.

If your system manufacturer can work around the chipset flaw, and has provided a BIOS which incorporates a workaround, the registry key is not required, the event above will not be logged, and VFs can be securely assigned to virtual machines. In these cases, if a virtual machine with a virtual function assigned triggers the conditions which would otherwise cause the symptoms previously described, Hyper-V will automatically remove the VF from the VM and let it continue running using software based networking. It should be noted, though, that if a VM is able to trigger one of these conditions, there is an extremely high probability that the guest operating system is compromised and likely to crash very soon after. The remainder of the system, including other running VMs, will not be affected.

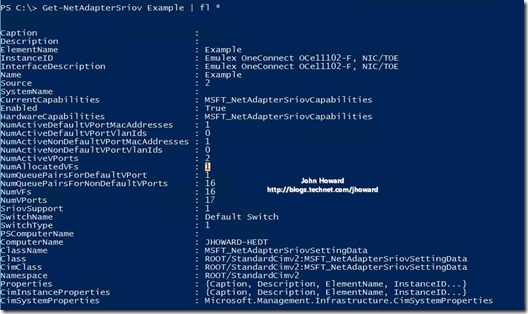

The next useful cmdlet is Get-NetAdapterSriov. This cmdlet gives a lot of useful information about the physical network adapter, assuming it supports SR-IOV.

It’s pretty telling that nothing was returned. A clear indication that there are no SR-IOV capable network adapters. Let’s instead run this on a machine which does have an SR-IOV capable network adapter.

The fact that something was returned indicates the network adapter is SR-IOV capable. Furthermore, looking at NumVFs, we can see that this adapter is working correctly and has available resources.
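To narrow the output down to the interesting bits, a sketch along these lines may help. NumVFs is the property discussed above; Name and SriovSupport are extra properties I’m assuming you’ll find useful for telling adapters apart, and the output will vary with your hardware.

```powershell
# Returns nothing at all if no physical adapter is SR-IOV capable.
Get-NetAdapterSriov | Format-List Name, SriovSupport, NumVFs
```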

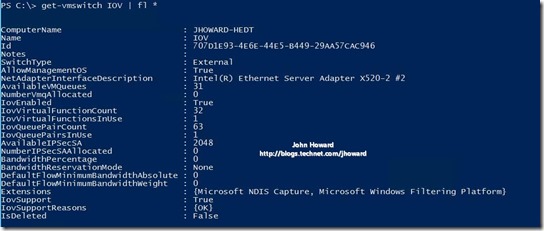

If you’ve created a virtual switch, the third useful cmdlet is Get-VMSwitch. Remember that to enable SR-IOV, the switch must be created in SR-IOV mode to start with. When SR-IOV is not available on the physical NIC, there are a number of properties which indicate why. IovVirtualFunctionCount and IovQueuePairCount will be zero. IovSupport will be false, and IovSupportReasons will list the reasons why.

First an example where the machine itself does not support SR-IOV, and the switch is bound to a network adapter which doesn’t support SR-IOV either.

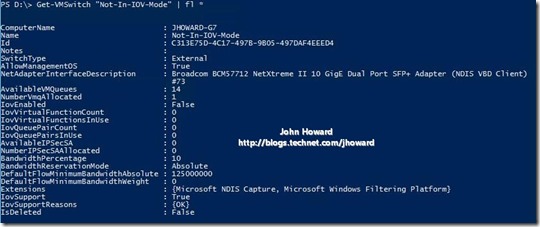

Here’s an example where the machine does support SR-IOV, but the physical network adapter does not. IovSupportReasons is clear as to the cause of the problem, regardless of whether the virtual switch is created with SR-IOV enabled or not.

And another example where the machine supports SR-IOV, as does the physical network adapter, but the switch was not created in SR-IOV mode. This one is a bit more subtle to spot as IovSupport and IovSupportReasons indicate everything is OK. The property IovEnabled is False, hence IovVirtualFunctionCount is zero even though the physical NIC has resources potentially available.

On a “good” (well configured) machine, you will get very different results in these properties. Notice how there is a positive integer in IovVirtualFunctionCount, IovSupport is True, and IovSupportReasons has a single value in the array, “OK”.
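To compare these properties across all of your switches in one go, something like the following should work. It is a minimal sketch using only the properties named above, plus Name to identify each switch.

```powershell
# List the SR-IOV related properties for every virtual switch on the host.
Get-VMSwitch | Format-List Name, IovEnabled, IovSupport, IovSupportReasons,
    IovVirtualFunctionCount, IovQueuePairCount
```

If IovSupport is True but IovEnabled is False, the switch was not created in SR-IOV mode.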

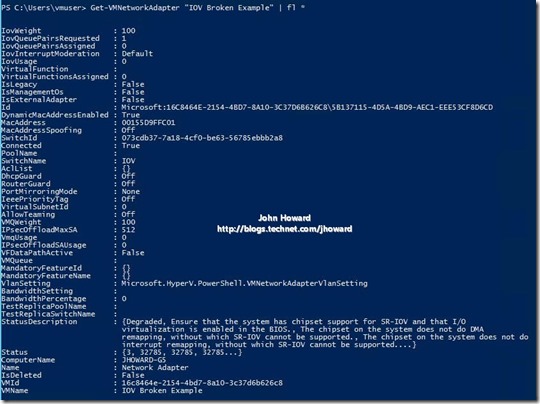

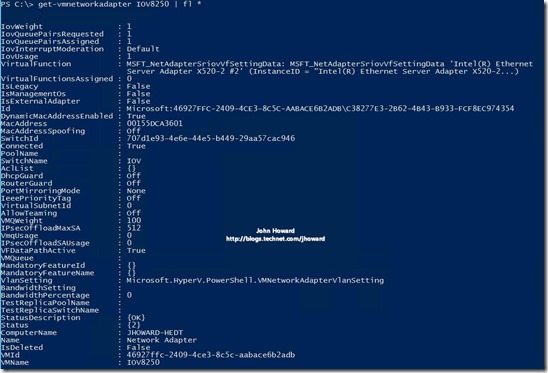

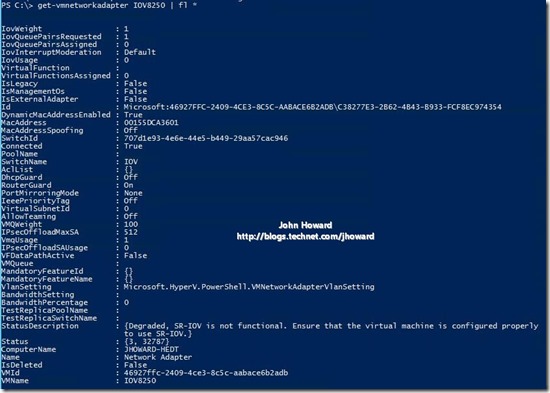

The last cmdlet is Get-VMNetworkAdapter. This should be run against a running VM’s network adapter. Here again is an example from a physical machine which does not support SR-IOV, and does not have an SR-IOV capable network adapter. Even though the IovWeight property is non-zero, note that IovQueuePairsAssigned and IovUsage are zero, and Status and StatusDescription contain a slew of reasons why the network adapter is degraded.

Here’s the same on a “good” machine for comparison. Notice that IovUsage is 1.
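A sketch of pulling just those properties for a single VM follows. The VM name 'MyVM' is purely a placeholder; substitute one of your own running VMs.

```powershell
# 'MyVM' is a hypothetical VM name used for illustration only.
Get-VMNetworkAdapter -VMName 'MyVM' |
    Format-List Name, IovWeight, IovQueuePairsAssigned, IovUsage, Status, StatusDescription
```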

The above has covered the common cases, but there are slightly more subtle ones, those around when port policies have been applied. See if you can spot what’s wrong in the following output. In this case, the machine is fully capable of SR-IOV, the virtual switch is in SR-IOV mode, and the IovWeight has been set on the network adapter correctly. It’s none of the reasons described so far.

Unfortunately, the StatusDescription isn’t overly helpful in indicating the precise reason. In fact, for several technical reasons, this is something which is incredibly difficult to accurately provide, so is unlikely to change before final release. Instead, we need to look at the policies which have been applied. In this particular case, I enabled RouterGuard on the VM. When we apply policy which can only be enforced by the virtual switch, and not the physical NIC, we automatically disable the use of SR-IOV on the VM so that the policy can be applied. Turning off any such policies (assuming they are compatible with the networking configuration requirements of the VM) will enable SR-IOV to start operating again.
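As a sketch of how you might check for and remove that particular policy from PowerShell: the VM name below is again a placeholder, and RouterGuard is only the policy from my example, so your own culprit may be a different setting.

```powershell
# 'MyVM' is a hypothetical VM name used for illustration only.
$vm = 'MyVM'

# See whether RouterGuard is on, and whether SR-IOV is currently in use.
Get-VMNetworkAdapter -VMName $vm | Format-List Name, RouterGuard, IovUsage

# Turn RouterGuard off so the virtual switch no longer has to enforce it.
Set-VMNetworkAdapter -VMName $vm -RouterGuard Off
```

Only turn a policy off if the VM’s networking requirements genuinely allow it.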

Now I did mention it in an earlier post, but if you are still struggling to get SR-IOV enabled and you believe you have everything you should need (chipset, latest BIOS, BIOS settings, NIC, virtual switch in SR-IOV mode), there is one other thing that is definitely worth checking. Some BIOSes have more than one firmware setting to enable SR-IOV. If in doubt, always go back to your system manufacturer’s documentation to make sure you have the settings configured correctly. And remember, if you do change BIOS settings, you may need to hard power cycle the machine, not just do a soft restart.

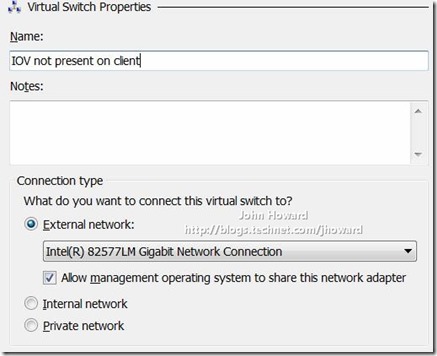

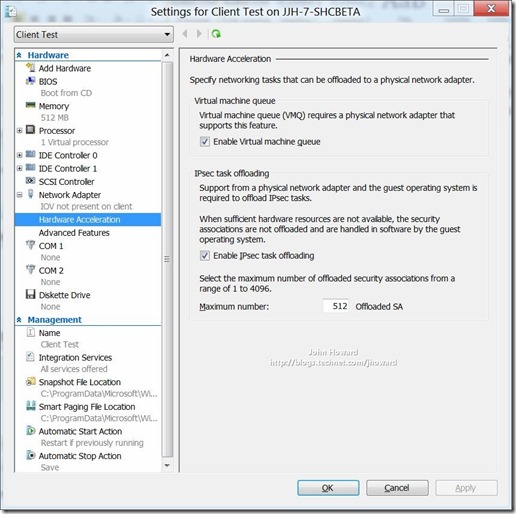

There are two other reasons worth mentioning. One is if you are using client Hyper-V. As SR-IOV is a server-only feature, the user interface for SR-IOV does not exist in Hyper-V Manager on client. (Note that the SR-IOV options will appear, though, if you are using Hyper-V Manager on a client connecting to a remote Windows “8” server.)

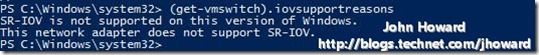

If you run Get-VMHost on a client, it will indicate that SR-IOV is not supported.

And similarly for a virtual switch (sadly my laptop doesn’t have a 10G network adapter that supports SR-IOV either – next upgrade).

So that’s pretty much it in terms of diagnosing why SR-IOV may not be operating. If you understood all the above, you are now a fully-fledged superhero and have earned your cape with honours!

Probably one more part to come in this series, the “kitchen sink” part, as in everything not already mentioned. That will hopefully be early next week after I find time to write it.

Cheers,

John.