Using System Center Orchestrator to Gather Client Logs at Microsoft

Hello again, readers. I wanted to share with you a solution we are leveraging within Microsoft for log collection using System Center Orchestrator. This will take a few posts, as the Log Copy solution has a number of moving parts, even though its architecture and implementation are simple. This initial post merely introduces the solution. In future posts I will fully explain the design and moving parts, and provide code you can use to implement this Orchestrator Runbook in your own environment (at your own discretion, testing, and risk, and under your own support, though I am happy to respond to questions as you have them, of course).

Some Background

To give you a little background on the group I currently work in at Microsoft: MPSD (Managed Platforms and Service Delivery) supports ~300,000 client systems within Microsoft. On occasion we need to pull logs from client systems to troubleshoot various issues related to the System Center products we dogfood and support. Previously, we had several go-to mechanisms for collecting logs, all called “Log Copy” but each using a different approach:

- ConfigMgr Software Distribution – static log collection script targeted by advertisement

- PSEXEC scripts to run remotely against machines and gather these logs

- VBSCRIPTS, PowerShell scripts, batch files

You get the idea…lots of ways, but all somewhat limited in one way or another.

Negatives to the Above Solutions

- Log collection not possible if ConfigMgr client is broken (if using the Software Distribution method)

- File compression wasn’t always possible, and was likely left out to keep complexity down across different OSs

- Multiple solutions and multiple folks supporting

- Difficult to maintain and keep up to date

- Not scalable – single-threaded, grabbing one log from one machine at a time

- Manually driven and cumbersome in most cases

- Restricted to few users due to rights required

Introducing the System Center Orchestrator Log Copy Workflow

The Log Copy Workflow, in its simplest form, is a front-end interface built on PowerShell and rolled into an EXE that instructs Orchestrator on the back end to gather logs on behalf of the requestor. The UI accepts requests for scenario-based logs (predefined logs) or custom logs (logs you choose, with restrictions) and runs these requests against the machines provided. When the requestor hits the “Submit” button, the UI generates an XML request file that is copied to a file share on our System Center Orchestrator Runbook (Action) Server. Once the file is there, our Runbook automatically picks it up and processes its contents as a request submitted into the system. The request is inserted into a back-end database table, which Orchestrator uses to know what “work” it has to do. The same table serves as a retry queue, which another Runbook processes on a timed interval every 2 hours.

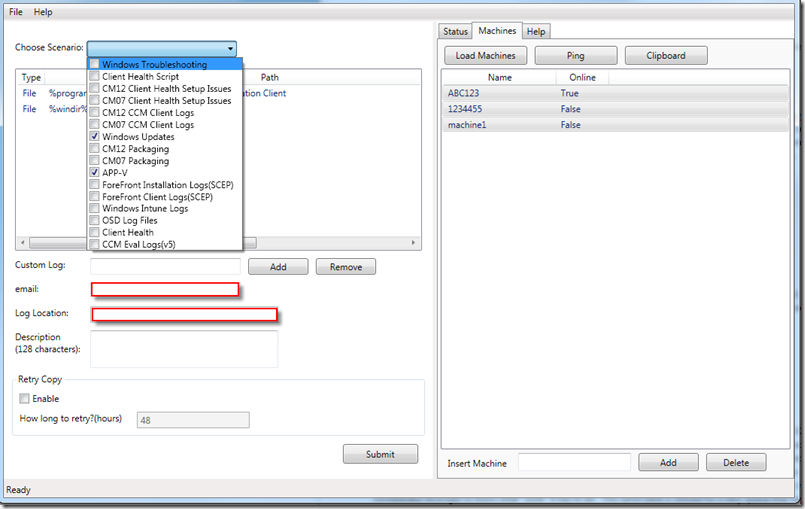
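To make the request flow more concrete, here is a minimal sketch in Python. It is not our production code: the real front end is PowerShell and the work is done by Orchestrator Runbooks. The XML element names, the `work_queue` table, its columns, and all function names are hypothetical and chosen purely for illustration, and SQLite stands in for the back-end database.

```python
import sqlite3
import xml.etree.ElementTree as ET


def build_request_xml(requestor, description, machines, scenarios, retry_hours):
    """Serialize one log copy request to XML (hypothetical schema)."""
    root = ET.Element("LogCopyRequest")
    ET.SubElement(root, "Requestor").text = requestor
    ET.SubElement(root, "Description").text = description
    ET.SubElement(root, "RetryHours").text = str(retry_hours)
    machines_el = ET.SubElement(root, "Machines")
    for name in machines:
        ET.SubElement(machines_el, "Machine").text = name
    scenarios_el = ET.SubElement(root, "Scenarios")
    for name in scenarios:
        ET.SubElement(scenarios_el, "Scenario").text = name
    return ET.tostring(root, encoding="unicode")


def create_queue(conn):
    """Create the work table (hypothetical layout) if it does not exist."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS work_queue (
               id INTEGER PRIMARY KEY,
               requestor TEXT NOT NULL,
               description TEXT NOT NULL,
               machine TEXT NOT NULL,
               scenario TEXT NOT NULL,
               retry_hours INTEGER NOT NULL,
               status TEXT NOT NULL DEFAULT 'pending'
           )"""
    )


def enqueue_request(conn, xml_text):
    """Parse a request file and insert one row per machine/scenario pair."""
    root = ET.fromstring(xml_text)
    requestor = root.findtext("Requestor")
    description = root.findtext("Description")
    retry_hours = int(root.findtext("RetryHours"))
    machines = [m.text for m in root.iter("Machine")]
    scenarios = [s.text for s in root.iter("Scenario")]
    rows = [
        (requestor, description, machine, scenario, retry_hours)
        for machine in machines
        for scenario in scenarios
    ]
    conn.executemany(
        "INSERT INTO work_queue "
        "(requestor, description, machine, scenario, retry_hours) "
        "VALUES (?, ?, ?, ?, ?)",
        rows,
    )
    conn.commit()
    return len(rows)


def pending_work(conn):
    """Return the rows a retry pass would still have to process."""
    cur = conn.execute(
        "SELECT machine, scenario FROM work_queue "
        "WHERE status = 'pending' ORDER BY id"
    )
    return cur.fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_queue(conn)
    xml_text = build_request_xml(
        "someone@example.com", "Client health check", ["PC01", "PC02"],
        ["ScenarioA"], 8,
    )
    print(enqueue_request(conn, xml_text), "rows queued")
    print(pending_work(conn))
```

Using a single table for both new work and retries keeps the sketch simple: a retry pass only has to re-read the rows still marked `pending`.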

Front End

The front end shown below is essentially the portal for requesting logs. You add machines from an input file or from the clipboard. You choose scenarios from the drop-down by selecting check boxes as shown, and the selected scenarios show up in the list. A custom log request is also available. When launched, the front end loads most of its look and feel from a XAML file stored on our Runbook (Action) server. The email address, pulled from the logged-on user ID, identifies who is requesting the logs. The email field can also hold a semicolon-separated list, so that multiple recipients are notified each time a status email is sent for the log collection. A description field is provided and required, to note what the log request was for (it shows up in the subject line of the email you receive). Finally, you can set a retry interval (maximum of 99), which requests a retry, by default every 2 hours, for a maximum of 99 hours, to capture machines that are offline at the time of your initial request.
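The input rules described above can be sketched roughly as follows. This is an illustrative Python sketch, not the actual PowerShell front end. The names `LogRequest`, `parse_recipients`, and `validate_request` are hypothetical, and two of the rules are assumptions on my part for the example: that at least one email address must be present, and that a retry value of 0 means "no retry".

```python
from dataclasses import dataclass

MAX_RETRY = 99  # upper bound on the retry value, as stated in the post


@dataclass
class LogRequest:
    recipients: list
    description: str
    retry: int


def parse_recipients(field):
    """Split a semicolon-separated email field, dropping blank entries."""
    return [part.strip() for part in field.split(";") if part.strip()]


def validate_request(email_field, description, retry):
    """Apply the front-end rules and return a cleaned-up request."""
    recipients = parse_recipients(email_field)
    if not recipients:
        # Assumption: the form always carries at least one address.
        raise ValueError("at least one email address is required")
    if not description.strip():
        raise ValueError("a description is required")
    if not 0 <= retry <= MAX_RETRY:
        # Assumption: 0 is accepted and means no retry was requested.
        raise ValueError(f"retry must be between 0 and {MAX_RETRY}")
    return LogRequest(recipients, description.strip(), retry)


if __name__ == "__main__":
    request = validate_request(
        "me@example.com; teammate@example.com", "Client install failures", 24
    )
    print(request)
```

Validating in the front end means a malformed request never reaches the file share, so the Runbook only has to deal with requests it can act on.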

Notifications

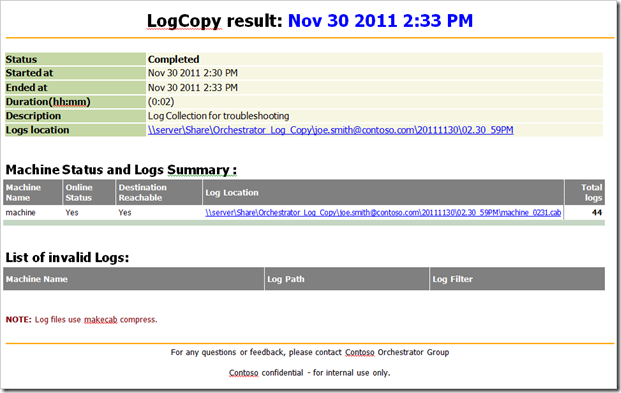

As mentioned above, an email notification is sent to provide the status of your log copy request. By default, one is sent at the beginning of each request and another at its completion (grouped by request, so you receive only one email for all the machines you requested). If you select the retry option, you also receive an email, by default every 2 hours, until logs have been gathered from all systems or until the retry period has expired. Example below.

What’s Next?

Well, this was just an introduction to the solution. Stay tuned for a more in-depth analysis of the Log Copy Workflow within Orchestrator. For now, I hope you’ve gotten a taste of one scenario that can leverage the power of System Center Orchestrator for IT Process Automation. This solution has definitely provided impact and value for our organization and has shown how automation can shine when applied to repetitive tasks to save time and effort.

Giving “Props”

I love giving credit where credit is due and ensuring the folks I work with get noticed for their hard work. I have a very talented developer on my team who works with me to make these workflows happen. David Wei deserves credit for his hard work in turning my design, architecture, and ad hoc requests into our finished products. Great teams make great end results – thank you, David, for all your hard work on this project and your continued support as we move forward with more automation!

That’s it for now – Happy Automating!