Core parking in Server 2008 R2 – why it’s like airport X-ray machines.

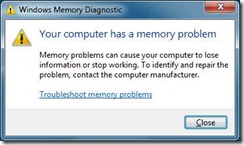

It’s third time lucky for this post… A couple of weeks ago I was at our big training event, “Tech-Ready”, and my laptop blue-screened on me, citing memory as the cause. On my return I started to write this post and came back to find the laptop had rebooted after a crash. Within 24 hours it had done it a second time, and I saw it happen a third time, with memory getting the blame on the blue screen. I ran the on-board diagnostics, which told me there was nothing wrong, but the Windows 7 memory diagnostic came back with the message you can see above. So it looks like the laptop needs a bit more TLC from Dell. Fortunately I have a spare carcass I can put my hard disk into, so I’m not totally stranded. It’s also reminded me that the auto-save option in Windows Live Writer is not on by default.

I don’t much like spending time away from home these days: queuing for airport security is a chore (in fact there is very little fun in flying) and the flight is a huge part of my annual carbon footprint, so going to Seattle for Tech-Ready is something which makes me stop and think, and each time I decide that it is still worth going. Sometimes it is the chance to network with colleagues from round the world I just wouldn’t otherwise get to hang out with, sometimes it is the deep technical content, sometimes it is the ability of people like Ray Ozzie to engage me with ideas. Often it’s a combination. I was mighty impressed with Ray’s first appearance last year, and this year we got a taste of some ideas which will end up in products soon, some that are on a slower burn, and some we’ll look back on as an interesting flight of fancy. Of the “wow” demos we saw, it’s not always possible to predict which will end up in which group. Ray quoted Steve Ballmer saying something like “We’re not going to go home, we’re going to keep coming and coming and coming”, which has echoes of the Blue Monster about it. Ray put it a different way: yes, the economy is bad and we’re not immune to it, but if we cut back on R&D then… well, he didn’t actually use the words “you’ll regret it. Maybe not today. Maybe not tomorrow, but soon and for the rest of your life.”* but that was what it came down to.

If Ray was the big vision, then Mark Russinovich had some of the best detail, and he talked about core parking in Server 2008 R2. It dawned on me that Research isn’t just about the blue-sky stuff we saw in Ray’s session; sometimes it is about going back to problems you thought you’d solved, like the process scheduler.

When I studied Computer Science at university in the mid 1980s we covered the theory of operating system design, and I still have the textbook. It describes the job of the process scheduler like this:

- [Decide if] the current process on this processor is still the most suitable to run. If it is, return control to it; otherwise…

- Save its volatile environment (registers, instruction pointer etc)

- Restore the volatile environment of the most suitable process

- Return control to that process
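The four textbook steps above can be sketched in a few lines of Python. This is a toy model, not how any real kernel (Windows included) is written: `Context`, `Process`, `Processor`, `dispatch` and the rule that a higher `priority` number means “more suitable” are all hypothetical, and the “volatile environment” is reduced to an instruction pointer plus a dictionary of registers.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """Hypothetical, simplified volatile environment saved on a switch."""
    instruction_pointer: int = 0
    registers: dict = field(default_factory=dict)


@dataclass
class Process:
    name: str
    priority: int  # assumption: a higher number means "more suitable"
    saved: Context = field(default_factory=Context)


class Processor:
    def __init__(self):
        self.current = None    # process currently in control, or None if idle
        self.live = Context()  # stands in for the real CPU registers

    def dispatch(self, ready: list):
        """One pass of the textbook scheduler for this processor."""
        best = max(ready, key=lambda p: p.priority, default=None)
        # 1. Is the current process still the most suitable? If so, keep it.
        if best is None or (self.current is not None
                            and self.current.priority >= best.priority):
            return self.current
        # 2. Save the volatile environment of the outgoing process.
        if self.current is not None:
            self.current.saved = Context(self.live.instruction_pointer,
                                         dict(self.live.registers))
            ready.append(self.current)
        # 3. Restore the volatile environment of the most suitable process.
        ready.remove(best)
        self.live = Context(best.saved.instruction_pointer,
                            dict(best.saved.registers))
        # 4. Return control to that process.
        self.current = best
        return best
```

On a multi-processor machine the same `dispatch` pass would simply run for each processor in turn, which is the point the next paragraph makes.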

As a model it’s something we hardly need to think about. It has coped with the arrival of multi-processor environments: notice it said “this processor”; the scheduler just looks at each processor of a multi-core/multi-chip environment and repeats the task. “Most suitable” covers a variety of possibilities, and the introduction of multi-threading meant nothing more than saying you could have more than one instruction pointer and register state for a single process, each one representing a different thread of execution. We know there is often not a thread ready to go; if there were, we’d be seeing utilization running at 100%. This doesn’t break the model, and as soon as a thread becomes ready it gets scheduled on an idle processor. That means the load on the processors is roughly equal, which seems “fair” and so “good”. We’d assume that also gives better performance, and here we need to go and look at queueing definitions of performance, something I studied before university and get reminded of every time I fly.
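To see why that classic policy leaves every processor a little bit busy, here is a hypothetical tick-by-tick simulation in which any ready thread goes straight onto an idle core. The function name, the tick-based timing and the choice to pick the least-used idle core first (one assumed way of keeping the load “fair”) are illustrative assumptions, not a description of a real scheduler.

```python
from collections import deque


def spread_schedule(arrivals, n_cores, ticks):
    """Classic policy: every ready thread is put onto an idle core at once.

    arrivals maps a tick to a list of work sizes (in ticks) for threads that
    become ready at that tick. Returns the number of busy ticks per core.
    Idle cores are filled least-used first (an assumed notion of fairness).
    """
    remaining = [0] * n_cores  # work left on each core; 0 means idle
    busy = [0] * n_cores
    ready = deque()
    for t in range(ticks):
        ready.extend(arrivals.get(t, []))
        idle = sorted((c for c in range(n_cores) if remaining[c] == 0),
                      key=lambda c: busy[c])
        for c in idle:
            if not ready:
                break
            remaining[c] = ready.popleft()
        # Each core with work does one tick of it.
        for c in range(n_cores):
            if remaining[c]:
                busy[c] += 1
                remaining[c] -= 1
    return busy
```

With one single-tick thread arriving every tick on four cores, each core ends up busy exactly a quarter of the time: the load is perfectly even, and no core ever gets an idle stretch longer than three ticks.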

People I’ve asked think we have longer queues for airport security because “the more complex checks of today take longer”. But that can’t be so, because the number of people flying has remained the same: the total number of passengers per hour who need to be processed hasn’t changed. If time-to-process was the only thing to change, the queue would get longer and longer all day, or we’d need to lengthen the gaps between flights and have fewer flights or a longer day. That hasn’t happened. As passengers we notice queue length or time-in-queue, but the airport measures “units processed per hour”. If the queue looks like overflowing, another X-ray machine is opened, and when it gets shorter, staff can take a break or attend to other duties. So the minimum amount of processor time is used to process all the passengers going through the airport. If every processor were running all day there would be minimal queues, but a lot of machine time would sit idle.
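The airport argument can be checked with a toy minute-by-minute simulation. Everything in it is invented for illustration: the function `run_checkpoint`, the arrival and service rates, and the open/close rules (open another machine when the queue passes a threshold, close one when the others could still clear the queue that minute).

```python
def run_checkpoint(arrivals_per_min, service_per_machine, minutes,
                   max_machines, open_above=None):
    """Simulate one security checkpoint, one minute at a time.

    If open_above is None, every machine runs all day. Otherwise start with
    one machine, open another when the queue exceeds open_above, and close
    one when the remaining machines could still clear the queue this minute.
    Returns (passengers processed, machine-minutes used, queue at the end).
    """
    elastic = open_above is not None
    open_machines = 1 if elastic else max_machines
    queue = processed = machine_minutes = 0
    for _ in range(minutes):
        queue += arrivals_per_min
        if elastic:
            if queue > open_above and open_machines < max_machines:
                open_machines += 1
            elif (open_machines > 1
                  and queue <= (open_machines - 1) * service_per_machine):
                open_machines -= 1
        served = min(queue, open_machines * service_per_machine)
        queue -= served
        processed += served
        machine_minutes += open_machines
    return processed, machine_minutes, queue


all_on = run_checkpoint(5, 2, 60, 6)
elastic = run_checkpoint(5, 2, 60, 6, open_above=10)
```

With 5 passengers arriving each minute and each machine handling 2, both policies process all 300 passengers in the hour, but the elastic one uses 177 machine-minutes instead of 360, at the cost of a short queue in the first few minutes. Units processed per hour is identical; only queue length and machine time differ.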

This model turns out to be very good for other kinds of processors. Modern CPUs can close down a core or a whole socket, which can save a lot of power. The amount varies, but it can be hundreds of watts for a large server, and then the same again in data-centre air-con to remove the heat. If you want to save 1 ton of CO2 in a year (on National Energy Foundation numbers), you need to save 45 kilowatt-hours per week, and it’s easy to see servers which save enough watts for enough hours to make that number. But if every processor processes the first thread waiting for processing, the savings won’t amount to a hill of beans.** That’s what most schedulers do, because they assume that if there is at least one runnable thread, the best thing to do must be to get a thread onto an idle processor. Some smart person went back to scheduler design with the new idea that the “most suitable thread” might be no thread at all: let the processing unit (core) go idle and into a low-power state, or power it down completely and park it. This is a complex decision for a scheduler, because it involves questions like “how long must the processor be off to save more than the energy it takes to bring it back?” and “if this processor stops processing, what will happen to time-in-queue and queue length?”. Intuitively we’d say that queuing threads must give lower performance, but the remaining processors will still complete the tasks faster than they go into the queue. Change your measure to work done per unit time and hey presto…
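The two questions in that paragraph can be turned into a rough parking rule: keep only as many cores as the load needs (staying under a utilization cap, as a crude stand-in for keeping queues short) and park the rest only if the expected idle time beats the break-even time. This is a hypothetical sketch, not the heuristic Windows Server 2008 R2 actually uses; the function names, the 80% cap and the wattage and transition-energy figures are all invented. For scale, 45 kWh a week is roughly 268 W saved around the clock (45,000 Wh / 168 h).

```python
import math


def break_even_seconds(transition_joules, idle_watts, parked_watts):
    """Shortest parked period that saves more energy than parking and waking cost."""
    saved_watts = idle_watts - parked_watts
    if saved_watts <= 0:
        return math.inf  # parking never pays off
    return transition_joules / saved_watts


def cores_to_keep(total_load, n_cores, max_util=0.8):
    """Fewest cores that carry the load with none above max_util.

    total_load is in cores' worth of work (1.5 = one and a half cores busy).
    At least one core always stays unparked.
    """
    needed = max(1, math.ceil(total_load / max_util))
    return min(needed, n_cores)


def plan_parking(total_load, n_cores, expected_idle_s, transition_joules,
                 idle_watts, parked_watts, max_util=0.8):
    """Number of cores to park: only spare cores, and only if it pays off."""
    spare = n_cores - cores_to_keep(total_load, n_cores, max_util)
    if expected_idle_s <= break_even_seconds(transition_joules,
                                             idle_watts, parked_watts):
        return 0  # waking the core would cost more than parking saves
    return spare
```

With the invented figures of 0.5 J to park and wake a core, 5 W idle and 1 W parked, the break-even time is 0.5 J / 4 W = 0.125 s. With one core’s worth of load on eight cores, an expected idle spell of 2 s lets six cores park, while 0.1 s does not justify parking any.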

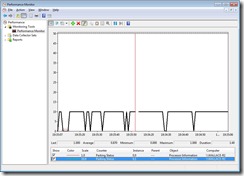

Core parking doesn’t need any particularly fancy hardware: I checked on this two-year-old laptop (before I had to put in the drive from my regular laptop), and you can see from Perf-Mon that it was working (it’s on by default). Better yet, although older OSes have no concept of core parking, if you are running them under Hyper-V, the host schedules the processors for the VMs, so they still get the benefit of core parking. There are significant savings to be made here, over and above what virtualization was already giving.

\* That was Humphrey Bogart in Casablanca.

** OK, that’s a different greenhouse gas.