Disaster Recovery Site and Active Directory (Part 3 of 3)

Welcome to part 3 of the series. Hopefully you have enjoyed the first two parts, where we discussed client logon and client failover between Domain Controllers and sites.

In this last part of the series we're going to discuss Domain Controller replication failover between Hub, Branch and DRP sites, and the different scenarios involved.

While talking about AD replication, it's important to note that Active Directory replication usually isn't considered the most critical service in the environment, and I absolutely agree. An environment can work seamlessly while Active Directory replication is not working. Obviously, having Active Directory not replicating for long periods of time may cause issues, inconsistencies and other problems (long period >= Tombstone Lifetime), but AD not replicating in the middle of the night wouldn't get me out of bed and running to my computer to fix the issue. On the other hand, I would go and do that first thing in the morning.

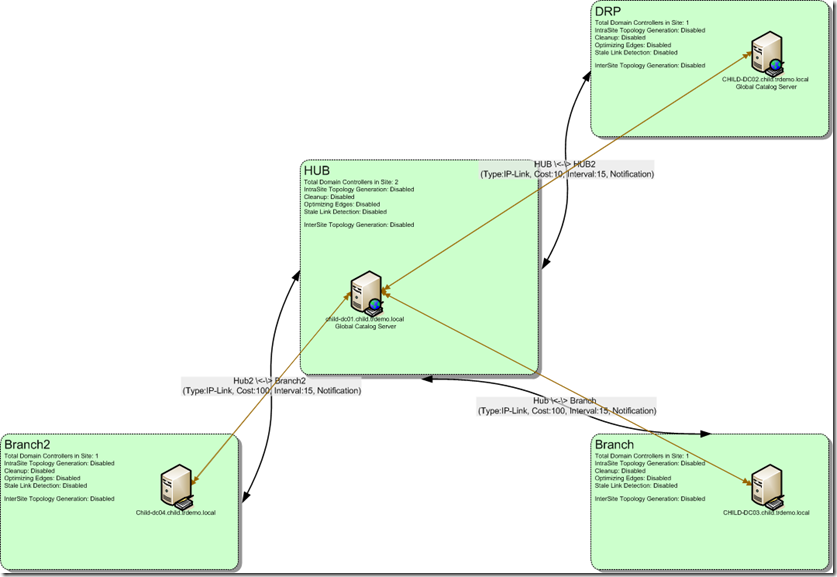

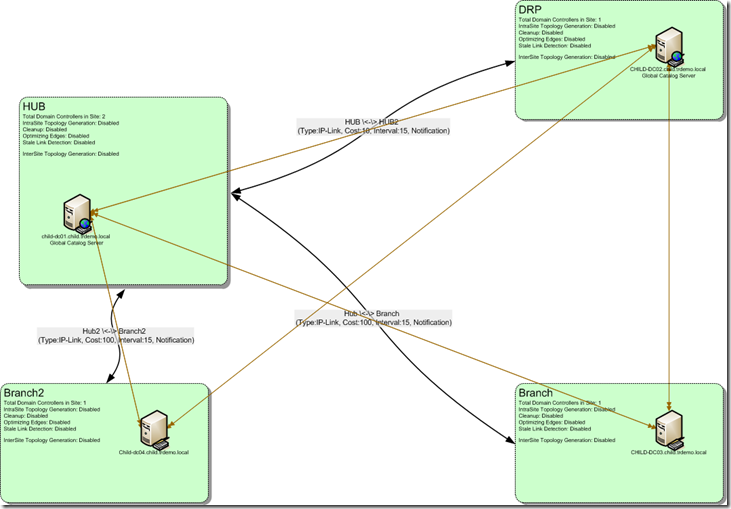

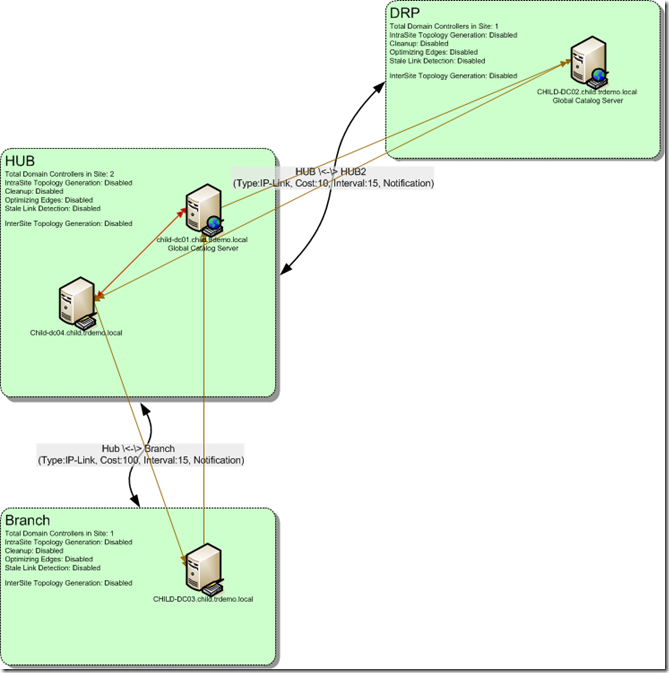

Now back to our environment. Again, we'll start with the simplest scenario, with one Domain Controller in each site:

In our environment we have two Branch sites (Branch and Branch2) which are connected to the HUB site, each with a site link cost of 100.

The HUB site is connected to the DRP site with a site link cost of 10. Bridge All Site Links (BASL) is enabled in the environment. Having said that, calculating the routes in the environment results in the following (ordered by cost):

From Branch

1. Branch –> Hub = 100

2. Branch –> DRP = 110 (Branch –> HUB –> DRP).

3. Branch –> Branch2 = 200 (Branch –> HUB –> Branch2)

From Branch2

1. Branch2 –> HUB = 100

2. Branch2 –> DRP = 110 (Branch2 –> HUB –> DRP)

3. Branch2 –> Branch = 200 (Branch2 –> HUB –> Branch).

(A short reminder to make things clearer: BASL – Bridge All Site Links, means all site links are transitive. More information on BASL and AD replication topology can be found here - http://technet.microsoft.com/en-us/library/cc755994(WS.10).aspx).

In that scenario it's obviously expected that the Branch sites (Branch and Branch2) would fail over to the DRP site if the HUB site fails, instead of failing over to each other. (It's a matter of cost calculation: based on the routes listed above, Branch –> DRP is cheaper than Branch –> Branch2.)
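The cost arithmetic above can be reproduced with a small shortest-path sketch. This is only an illustration of how bridged (transitive) site link costs add up, not the actual KCC algorithm; the function name `cheapest_routes` and the `SITE_LINKS` table are made up for this example, with the costs taken from the environment described above.

```python
import heapq

# Site links and costs as described in the post (links work in both directions).
SITE_LINKS = {
    ("Branch", "HUB"): 100,
    ("Branch2", "HUB"): 100,
    ("HUB", "DRP"): 10,
}


def cheapest_routes(source, links=SITE_LINKS):
    """Return {site: total cost} from source, treating all site links as bridged."""
    graph = {}
    for (a, b), cost in links.items():
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))

    best = {source: 0}
    queue = [(0, source)]
    while queue:
        cost, site = heapq.heappop(queue)
        if cost > best.get(site, float("inf")):
            continue  # a cheaper route to this site was already found
        for neighbour, link_cost in graph.get(site, []):
            new_cost = cost + link_cost
            if new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                heapq.heappush(queue, (new_cost, neighbour))

    del best[source]
    return best


if __name__ == "__main__":
    routes = cheapest_routes("Branch")
    for site, cost in sorted(routes.items(), key=lambda item: item[1]):
        print(f"Branch -> {site} = {cost}")
```

With the HUB site out of the picture, the next cheapest target for a Branch site is DRP (110) rather than the other Branch site (200), which is the behavior we expect to see.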

So let's fail the DC in the HUB site…

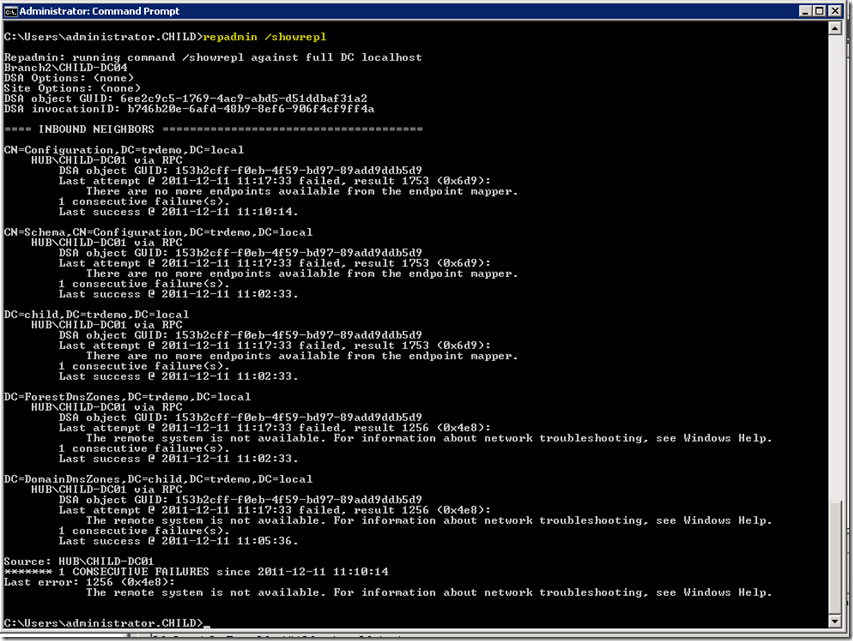

Running repadmin /showrepl on Child-DC04 would show we already have several failed attempts to replicate with the Domain Controller in the HUB site:

So the question is:

What are we waiting for?

Well… the answer is this:

IntersiteFailuresAllowed

Value: Number of failed attempts

Default: 1

MaxFailureTimeForIntersiteLink (sec)

Value: Time that must elapse before being considered unavailable, in seconds

Default: 7200 (2 hours)

(All described here - http://technet.microsoft.com/en-us/library/cc755994(WS.10).aspx and http://technet.microsoft.com/en-us/library/cc961781.aspx).

Basically we'll wait for 2 hours before the source DC for replication is considered stale. So in our scenario when we have failed the DC in the HUB site it would take 2 hours from the last replication attempt for the DCs in the Branch sites to consider the DC in the HUB site as stale.

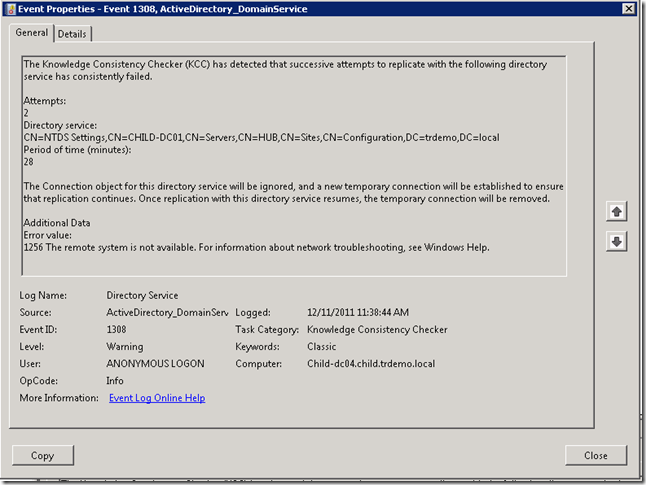

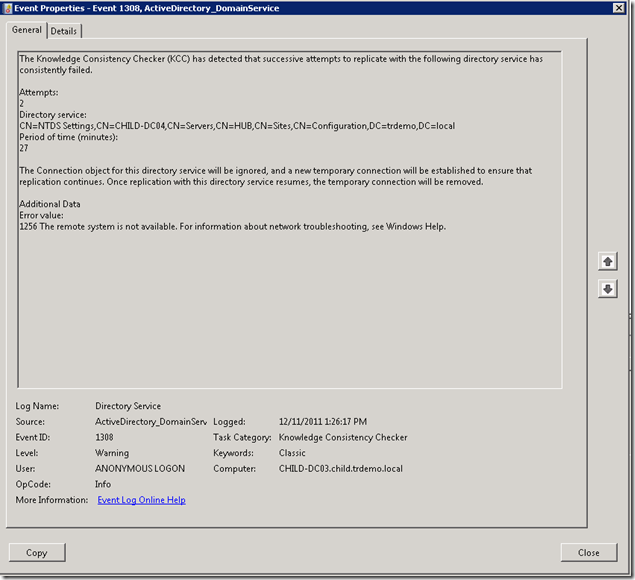

After the required time has elapsed and the allowed number of failures has been reached, we get the following event:

Event ID 1308, stating that "The Knowledge Consistency Checker (KCC) has detected that successive attempts to replicate with the following directory service has consistently failed." and that "The Connection object for this directory service will be ignored, and a new temporary connection will be established to ensure that replication continues. Once replication with this directory service resumes, the temporary connection will be removed. "

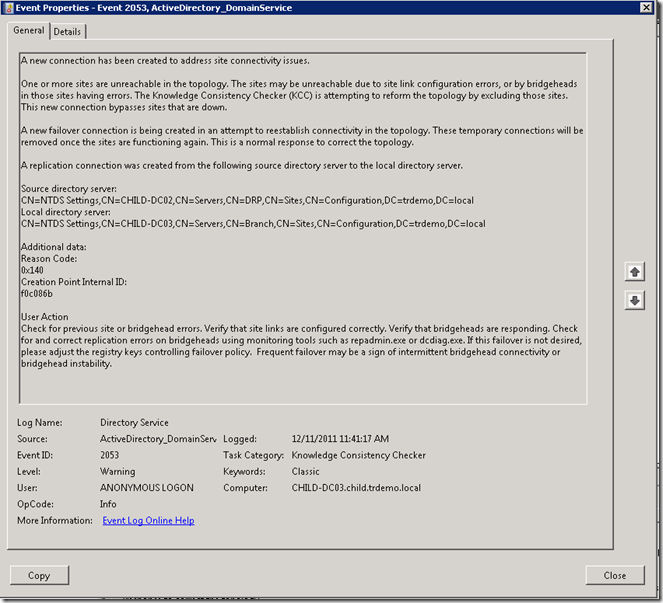

And a short while later we receive Event ID 2053: "A new connection has been created to address site connectivity issues."

Looking at our environment's Visio diagram now would show (as expected):

Child-DC03 and Child-DC04 have created failover connection objects with Child-DC02, which is located at the DRP site, and vice versa, Child-DC02 has created failover connection objects with the DCs at the Branch and Branch2 sites.

So how long did this process take?

Well – we need three events to take place:

1. At least one replication attempt has to occur. If the link to the HUB site failed at 3PM but the replication schedule on the site link only allows replication at 1AM, we wouldn't even try to replicate before that time… so how would we know the site link has failed?!

2. After we have at least one failed replication attempt (the number is set by the IntersiteFailuresAllowed registry value) we begin counting the failure time, as set by the "MaxFailureTimeForIntersiteLink (sec)" registry value.

3. After the MaxFailureTimeForIntersiteLink time has elapsed we need the KCC to run (which by default occurs every 15 minutes).

So it very much depends on the environment how long it would take the failover connection objects to be created.
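To get a feel for the three steps above, here is a rough timing sketch. It assumes the KCC runs on a fixed interval counted from a known previous run, and that the first failed replication attempt already reflects the site link schedule; the function name `earliest_failover` and the example timestamps are hypothetical.

```python
from datetime import datetime, timedelta


def earliest_failover(first_failure, last_kcc_run,
                      max_failure_time=timedelta(seconds=7200),
                      kcc_interval=timedelta(minutes=15)):
    """Return (stale_at, kcc_run): when the source DC counts as stale, and the
    first KCC run at or after that moment (assumed fixed KCC interval)."""
    stale_at = first_failure + max_failure_time
    kcc_run = last_kcc_run
    while kcc_run < stale_at:
        kcc_run += kcc_interval
    return stale_at, kcc_run


if __name__ == "__main__":
    # Hypothetical times: first failed attempt at 15:00, last KCC run at 14:50.
    stale_at, kcc_run = earliest_failover(
        first_failure=datetime(2011, 12, 11, 15, 0, 0),
        last_kcc_run=datetime(2011, 12, 11, 14, 50, 0),
    )
    print("Source DC considered stale at:", stale_at)
    print("Earliest KCC run that can react:", kcc_run)
```

Passing a smaller `max_failure_time` shows how much (or how little) of the total wait is actually under your control once the replication schedule and the KCC interval are taken into account.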

What can I do to make it faster?

First of all, take things in perspective!

If you replicate once in 24 hours, how bad can it be to go 48 hours without replication? Like I mentioned at the beginning of the post, Active Directory not replicating would not get me running anywhere; it would just be one of those things on the TODO list (unless obviously it's a symptom of another issue, like all DCs having crashed so that no one in the organization can work… well… I wouldn't consider that a replication problem to be honest, but that's a matter of semantics, because I can see there's a replication issue there among other problems).


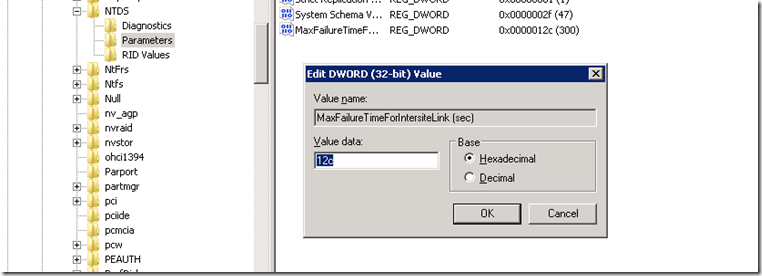

If you still feel the failover is not fast enough and you need to expedite it, you can set "MaxFailureTimeForIntersiteLink (sec)" to a lower value.

Again from http://technet.microsoft.com/en-us/library/cc755994(WS.10).aspx

Modifying the thresholds for excluding nonresponding servers requires editing the following registry entries in HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\NTDS\Parameters, with the data type REG_DWORD. You can modify these values to any desired value as follows:

For replication between sites, use the following entries:

- IntersiteFailuresAllowed

Value: Number of failed attempts

Default: 1

- MaxFailureTimeForIntersiteLink (sec)

Value: Time that must elapse before being considered unavailable, in seconds

Default: 7200 (2 hours)

You will have to do this on every DC in every Branch site (as those are the ones counting the failures).

Take care when reducing this value: setting it very low may cause false positives when failing over between DCs, and since a failover can be an expensive operation we want to minimize false positives as much as possible.
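As a small illustration, the sketch below only builds the `reg add` command lines you might run on each Branch DC; it does not touch the registry itself. The key path and value names are the ones quoted above, while the helper name `reg_add_command` and the value 300 are just examples. Test any change in a lab before rolling it out.

```python
# Registry key quoted in the TechNet text above.
NTDS_PARAMETERS_KEY = r"HKLM\SYSTEM\CurrentControlSet\Services\NTDS\Parameters"


def reg_add_command(value_name, data):
    """Build a 'reg add' command line that sets a REG_DWORD value under the NTDS key."""
    if not isinstance(data, int) or not 0 <= data <= 0xFFFFFFFF:
        raise ValueError("REG_DWORD data must be an integer between 0 and 4294967295")
    return (
        f'reg add "{NTDS_PARAMETERS_KEY}" '
        f'/v "{value_name}" /t REG_DWORD /d {data} /f'
    )


if __name__ == "__main__":
    # Example only: 300 seconds (5 minutes) is the aggressive value used in the lab later on.
    print(reg_add_command("MaxFailureTimeForIntersiteLink (sec)", 300))
    print(reg_add_command("IntersiteFailuresAllowed", 1))
```

Generating the commands rather than applying them keeps the change reviewable, which matters when the same setting has to land on every DC in every Branch site.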

Note: Whatever you do – Don't go creating manual connection objects! Manage the KCC and the topology of the environment to match your requirements, don't override it with manual connection objects.

Failing back

Usually that's not a concern, but the question comes up often: what happens when the failed DC comes back online?

Well, the KCC becomes aware of that once we have a successful replication attempt with the failed DC (and again, that's based on our replication topology, schedule and times).

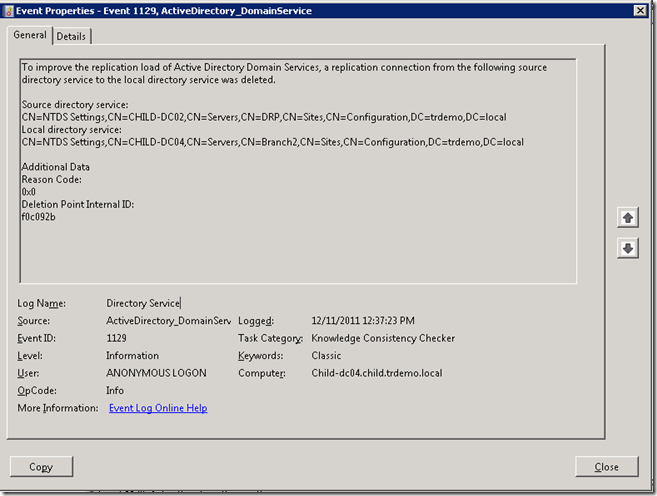

So the next time KCC is run we will receive event 1129 stating "To improve the replication load of Active Directory Domain Services, a replication connection from the following source directory service to the local directory service was deleted. "

So those temporary failover connection objects created for failover to the DR site are automatically deleted when the "best" DC (in our case – the DC at the HUB site) comes back online.

So now that we know how failover works, let's briefly discuss the following scenario:

Two Domain Controllers in the HUB site

Let's imagine that the physical site link between the Branch site and the HUB site has failed (to simulate that I'll shut down the Active Directory Domain Services service on both DCs in the HUB site, Child-DC01 and Child-DC04).

The first thing that occurs is exactly as before: Child-DC03 (Branch) identifies that its current replication partner in the HUB site (Child-DC04) has failed, and Event ID 1308 is generated on Child-DC03 (just as before) stating "The Knowledge Consistency Checker (KCC) has detected that successive attempts to replicate with the following directory service has consistently failed. " and "The Connection object for this directory service will be ignored, and a new temporary connection will be established to ensure that replication continues. Once replication with this directory service resumes, the temporary connection will be removed. ".

Now comes the different part:

The new connection object that is generated is with Child-DC01 (HUB):

Event ID 2054 stating:

"The Knowledge Consistency Checker (KCC) created a new bridgehead failover connection because the following bridgeheads used by existing site connections were not responding or replicating.

Servers:

CN=NTDS Settings,CN=CHILD-DC04,CN=Servers,CN=HUB,CN=Sites,CN=Configuration,DC=trdemo,DC=local"

was generated on Child-DC03.

So Child-DC03 has failed over from Child-DC04 to Child-DC01. Is this the expected behavior?

The answer is yes! If you scroll back up you will note that all along I have been talking about identifying failed Domain Controllers. There is no way of identifying site link failures. So in terms of Active Directory replication, a failed site is a site where all DCs are unresponsive.

And that's an important thing to remember. So in this scenario where we have two Domain Controllers in the HUB site we count the InterSiteFailuresAllowed and the "MaxFailureTimeForIntersiteLink (sec)" values for each Domain Controller in the site.

So with this scenario if the MaxFailureTimeForInterSiteLink is the default 2 hours we are talking 4 hours for failover to the DRP site.

Now let's talk specifically about our test environment:

I have set "MaxFailureTimeForIntersiteLink (sec)" to 300 seconds (decimal), that is 5 minutes, meaning that 5 minutes of replication failures on an inter-site connection object are enough for the source Domain Controller to be considered failed.

So we have Child-DC01 and Child-DC04 failing since (repadmin /showrepl output on Child-DC03):

Source: HUB\CHILD-DC04

******* 8 CONSECUTIVE FAILURES since 2011-12-11 12:58:52

So, using the values we have configured:

12:58:52 + 5 minutes (the MaxFailureTimeForIntersiteLink time) = 13:03:52. So at 13:03:52 Child-DC04 will be considered stale, after which we'll wait for the KCC to run.

Since the KCC runs every 15 minutes, the exact timing depends on where in the 15-minute window we catch it.
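The same arithmetic can be scripted against the repadmin output. The sketch below parses only the two lines quoted above and assumes they appear back to back in that exact form; real `repadmin /showrepl` output contains more lines and may vary, so treat the pattern as an assumption. The names `failing_sources` and `stale_at` are made up for this example.

```python
import re
from datetime import datetime, timedelta

# Excerpt copied from the repadmin /showrepl output shown above.
SAMPLE = r"""Source: HUB\CHILD-DC04
******* 8 CONSECUTIVE FAILURES since 2011-12-11 12:58:52
"""

# Assumes the "Source:" line is immediately followed by the failure summary line.
FAILURE_RE = re.compile(
    r"Source:\s*(?P<source>\S+)\s*\n"
    r"\*+\s*(?P<count>\d+) CONSECUTIVE FAILURES since "
    r"(?P<since>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2})"
)


def failing_sources(text):
    """Return a list of (source, failure_count, failing_since) tuples."""
    results = []
    for match in FAILURE_RE.finditer(text):
        since = datetime.strptime(match.group("since"), "%Y-%m-%d %H:%M:%S")
        results.append((match.group("source"), int(match.group("count")), since))
    return results


def stale_at(failing_since, max_failure_seconds=300):
    """Moment the source is considered stale (300 seconds is the lab setting)."""
    return failing_since + timedelta(seconds=max_failure_seconds)


if __name__ == "__main__":
    for source, count, since in failing_sources(SAMPLE):
        print(f"{source}: {count} failures since {since}, stale at {stale_at(since)}")
```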

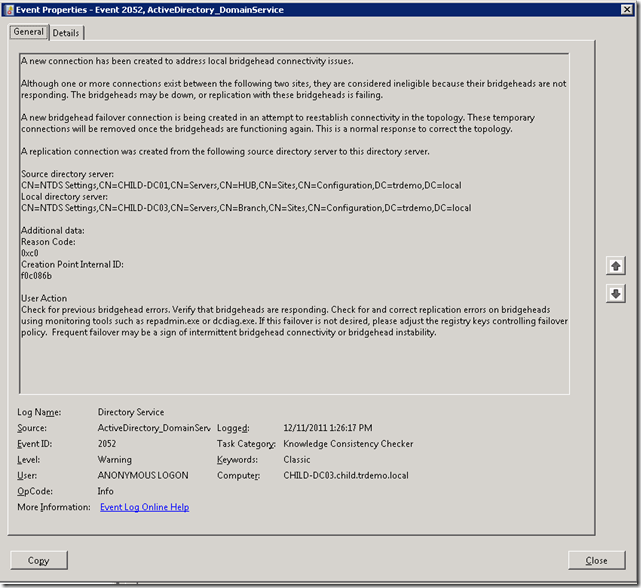

The KCC generates Event 1308 stating that it has identified the failed Domain Controller, followed by Event 2052 stating that a new connection object has been created with Child-DC01 (as expected, we try to fail over between DCs in the HUB site):

The next time the KCC runs (15 minutes later, at 13:41:17) we still try to establish a connection object with Child-DC01 (or a newly selected server at the HUB site).

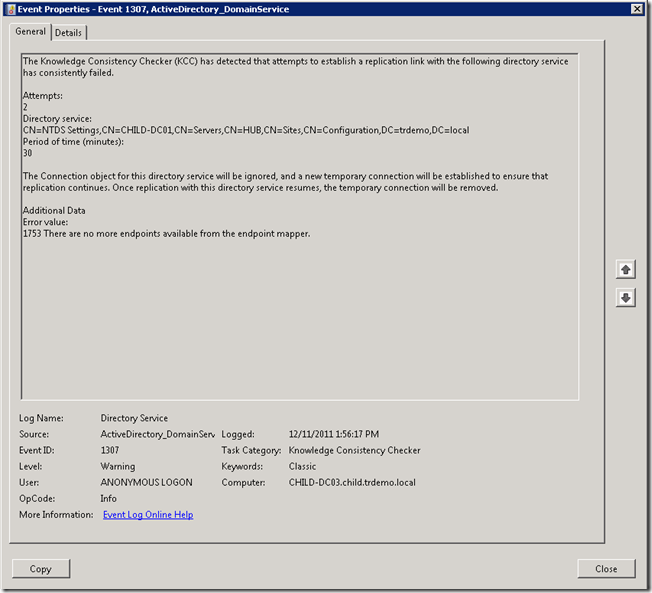

The next time the KCC runs (at 13:56:17) we identify Child-DC01 as a stale server:

Event ID 1307 stating that "The Knowledge Consistency Checker (KCC) has detected that attempts to establish a replication link with the following directory service has consistently failed. "

And only then do we consider the HUB site as stale!

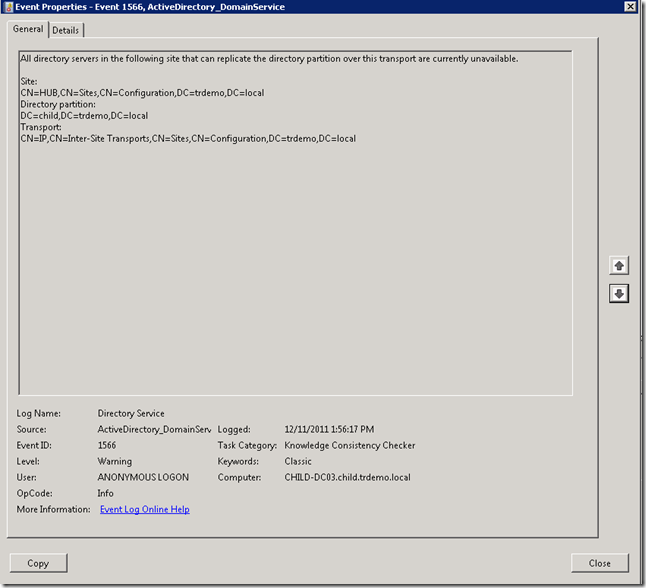

And we have Event ID 1566 to support that:

All directory servers in the following site that can replicate the directory partition over this transport are currently unavailable.

Site:

CN=HUB,CN=Sites,CN=Configuration,DC=trdemo,DC=local

So now we can fail over to the DRP site:

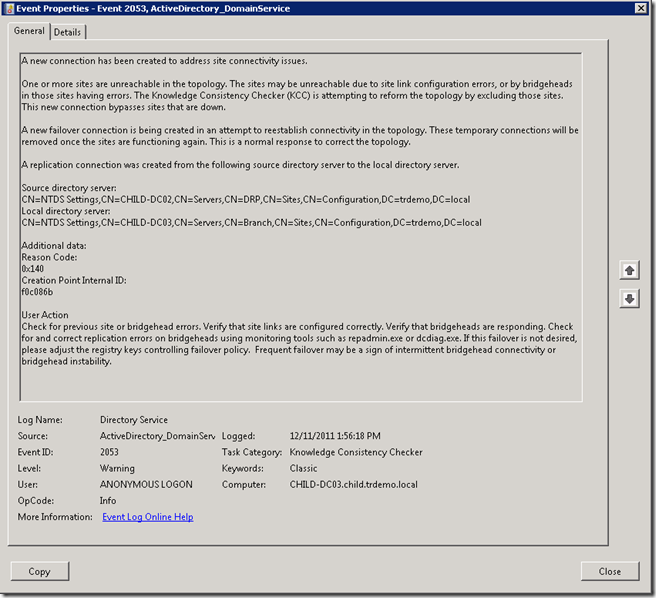

Event ID 2053 is generated, stating that we have created a new connection object for failover, and this time it's with Child-DC02 located at the DRP site:

So overall it took us about 1 hour to fail over, and that's with MaxFailureTimeForIntersiteLink set to a very low value (5 minutes). With the default setting of 2 hours you can expect 4-4.5 hours (depending on the KCC intervals and where you catch them) for a branch site to fail over from a HUB site containing two Domain Controllers to the DRP site.
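As a back-of-the-envelope check on the 4-4.5 hour figure, here is a very rough model: one MaxFailureTimeForIntersiteLink wait per HUB DC, plus up to one KCC interval per DC. This is my own simplification, not a documented formula, and the function name `site_failover_window` is hypothetical. Note that the lab run above (about 1 hour with the 5 minute setting) took longer than this model would suggest, so treat the result as a rough guide rather than an upper limit.

```python
from datetime import timedelta


def site_failover_window(dc_count,
                         max_failure_time=timedelta(hours=2),
                         kcc_interval=timedelta(minutes=15)):
    """Rough (lower, upper) estimate for failing over away from a site.

    Simplified model: wait max_failure_time once per DC in the failed site,
    plus at most one KCC interval per DC.
    """
    lower = dc_count * max_failure_time
    upper = dc_count * (max_failure_time + kcc_interval)
    return lower, upper


if __name__ == "__main__":
    for dcs in (1, 2, 3):
        lower, upper = site_failover_window(dcs)
        print(f"{dcs} DC(s) in the HUB site: roughly {lower} to {upper}")
```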

How can we make the process faster?

Just as previously – Take things in perspective. Ask – what happens if my environment doesn't replicate for 5 hours? Do I even need to change anything?

If you do need to decrease the MaxFailureTimeForIntersiteLink value, again, take into consideration the false positives that may result from setting it too low.

Now, if you feel like asking "so what happens if I have 3 DCs in the HUB site?" just use your own logic… (Let me give you a hint: we wait for MaxFailureTimeForIntersiteLink for each DC.)


So hopefully it makes sense… Now, after completely breaking my lab environment while writing this post, I think I'm going to spend some time setting things back to normal (i.e. default).

Hope you enjoyed it, and that it will make things a bit clearer when troubleshooting, planning or testing your DRP scenarios.

And let me end on a cheerful note – A DRP site is not a DR Plan!!! In other words: Now that you got your DR site all sorted out, it's time to go and test your other DR scenarios!

(And our colleagues from the UK have nicely listed all the scenarios in one place - http://blogs.technet.com/b/newenglandpfe/archive/2011/05/20/common-scenarios-for-active-directory-related-backup-and-disaster-recovery.aspx).

Hope we all never need it!

Michael.