Missing BDA hook rules – impact and potential root cause

You may already know what NLB is and how it works, as described in the general Network Load Balancing Overview [http://technet.microsoft.com/en-us/library/cc725946.aspx].

An integral part of a TMG NLB solution is bi-directional affinity (BDA), which is well described at the following link:

Bi-Directional Affinity in ISA Server [http://blogs.technet.com/b/isablog/archive/2008/03/12/bi-directional-affinity-in-isa-server.aspx].

Bi-directional affinity creates multiple instances of Network Load Balancing (NLB) on the same host, which work in tandem to ensure that responses from published servers are routed through the appropriate ISA servers in a cluster. Bi-directional affinity is commonly used when NLB is configured with Internet Security and Acceleration (ISA) servers. If bi-directional affinity is not consistent across all NLB hosts or if NLB fails to initialize bi-directional affinity, the NLB cluster will remain in the converging state until a consistent teaming configuration is detected.

Bi-directional affinity is crucial if you enable NLB on multiple interfaces, because it ensures that a given client's traffic always passes through the same node, keeping the data flow consistent.

By default, when a client connects to an NLB interface, the NLB driver computes a hash from the packet's source IP (the client) to decide which NLB node should handle the request. On the way back (the server's response to the client), the source IP is the server IP, not the client IP, so without BDA the response may be handled by another TMG NLB node, which would discard it because that node never saw the client request. Hence, a mechanism is needed to guarantee that client and server packets are handled by the same host in the array.

Bi-directional affinity ensures that server responses are handled by the same TMG NLB node as the original client request. This is implemented through so-called hook rules:
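To make the asymmetry concrete, here is a minimal Python sketch. The `pick_node` function is a toy stand-in (the integer value of the address modulo the node count) and not the real NLB hash algorithm; the two-node array and the addresses are assumptions chosen only for illustration.

```python
import ipaddress


def pick_node(hash_ip: str, node_count: int) -> int:
    """Toy stand-in for the NLB hash: map an IP address to a node index."""
    return int(ipaddress.ip_address(hash_ip)) % node_count


def node_without_bda(src_ip: str, dst_ip: str, node_count: int) -> int:
    """Default behavior described above: always hash on the packet's source IP."""
    return pick_node(src_ip, node_count)


# Assumed example addresses: a client and a published server.
client, server = "172.20.1.51", "192.168.0.10"

request_node = node_without_bda(client, server, node_count=2)   # client -> server
response_node = node_without_bda(server, client, node_count=2)  # server -> client

# With this toy hash the two directions land on different nodes,
# so the node receiving the response never saw the request.
print("request handled by node", request_node)
print("response handled by node", response_node)
```

Whether the two directions collide or diverge depends entirely on the addresses involved, which is why source-only hashing cannot be relied on for return traffic.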

http://technet.microsoft.com/en-us/library/dd348817(v=ws.10).aspx

Filter hooks help to direct traffic in a Network Load Balancing (NLB) cluster by filtering network packets. If the filter hooks are not properly configured, the NLB cluster will continue to converge and operate normally, however, the server application that is running with NLB will not be able to properly register the hooks.

The essential logic of the hook rules is the following:

For each packet, NLB calls out to the registered drivers (in this case fweng) to ask whether they want to modify how the hash is calculated.

For example, when a client sends a SYN packet, NLB “asks” TMG’s fweng driver how it should calculate the hash. Depending on its hook rules, TMG tells NLB to use, for example, the client source IP for hashing (which is the default behavior). The calculated hash then tells NLB that, say, the first node should handle the traffic and pass the SYN to the backend server.

When the server responds to the internal NLB of the TMG array, NLB calls out again to ask TMG how the hash is supposed to be calculated.

Based on the same hook rule set and the packet direction, TMG tells NLB to hash on the destination IP, which is again the client IP, so the packet is handled by the same node as the original packet.

If the hook rule were not present, TMG would tell NLB to use the default behavior and hash on the source IP, which here is the server IP. That could select a different node to handle the traffic, which is not what we want.

If you have a TMG array with several nodes and NLB is enabled, the TMG service creates hook rules at startup.
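The callout logic described above can be sketched as follows. This is a simplified model and not the fweng driver's actual interface: the `HookRule` type, the `ip_to_hash` function, and the example networks are all hypothetical names and values introduced for illustration.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class HookRule:
    """Hypothetical model of a hook rule: a source range, a destination range, a direction."""
    src_net: ipaddress.IPv4Network
    dst_net: ipaddress.IPv4Network
    direction: str  # "forward" = hash on source IP, "reverse" = hash on destination IP


def ip_to_hash(src_ip: str, dst_ip: str, rules: list[HookRule]) -> str:
    """Return the IP that NLB would be told to hash on for this packet."""
    src = ipaddress.ip_address(src_ip)
    dst = ipaddress.ip_address(dst_ip)
    for rule in rules:
        if src in rule.src_net and dst in rule.dst_net:
            return src_ip if rule.direction == "forward" else dst_ip
    return src_ip  # no matching rule: default behavior, hash on source IP


# Assumed example ranges for the client side and the server side.
clients = ipaddress.ip_network("10.0.0.0/24")
servers = ipaddress.ip_network("192.168.0.0/24")
rules = [
    HookRule(clients, servers, "forward"),  # requests: hash on the source (client) IP
    HookRule(servers, clients, "reverse"),  # responses: hash on the destination (client) IP
]

request_key = ip_to_hash("10.0.0.25", "192.168.0.10", rules)
response_key = ip_to_hash("192.168.0.10", "10.0.0.25", rules)
response_key_no_rules = ip_to_hash("192.168.0.10", "10.0.0.25", [])

print(request_key, response_key, response_key_no_rules)
```

With both rules present, the request and the response are hashed on the same client IP. With the rules removed, the response falls back to the server IP, which is the failure mode this post is about.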

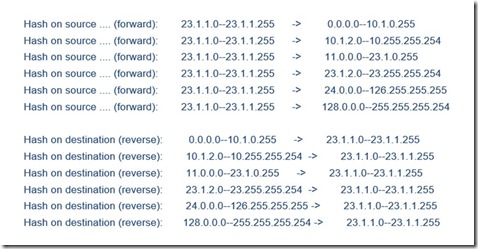

These rules can be checked by executing netsh tmg show nlb from an elevated command prompt, which yields output similar to the accompanying screenshot.

Notice that each rule has a source range, a destination range, and a direction. Based on these, TMG decides what to tell NLB when it is called: hash on the source IP (forward) or on the destination IP (reverse).

A potential problem occurs if hook rules are missing. In this post, we are going to explore a potential cause for missing hook rules.

The output below is from a test lab that we built to reproduce an issue reported by a customer:

In the example above, we see only rules that hash on the source IP (forward direction), which were created for the outgoing scenario.

However, there are no reverse rules, which indicates that some rules may be missing.

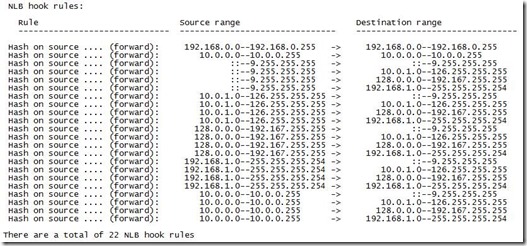

I took those rules from my TMG array with the Internal (10.0.0.0/24), DMZ (192.168.0.0/24), and External (172.20.1.0/24) networks.

Let's imagine a scenario where we have created a publishing rule for a server in the DMZ with a listener on the External network, and have configured the rule as half NAT (requests appear to come from the client).

Because there is no specific rule for the ranges External -> DMZ and DMZ -> External, the default behavior applies in both directions: hashing on the source IP.

As described above, because the hook rule is missing, this may or may not work depending on the client IP/published server IP pair. If the NLB hash algorithm yields the same NLB node ID for both the client IP and the server IP, it will work. Otherwise, client and server packets will be serviced by different hosts, and the published server's responses will be dropped with the error 0xc0040017 FWX_E_TCP_NOT_SYN_PACKET_DROPPED.

The cause of the issue is that some NLB hook rules are missing.
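The "may or may not work" behavior can be illustrated with a short Python sketch. It uses a toy hash (address value modulo the node count) as a stand-in for the real NLB algorithm, and the two-node array, the published server address, and the client addresses are assumptions picked from the lab ranges above.

```python
import ipaddress

NODE_COUNT = 2  # assumed array size


def pick_node(ip: str) -> int:
    """Toy stand-in for the NLB hash, not the real algorithm."""
    return int(ipaddress.ip_address(ip)) % NODE_COUNT


def works_without_hook_rule(client_ip: str, server_ip: str) -> bool:
    """Both directions hash on the packet's source IP, so the flow only
    works if client and server happen to map to the same node."""
    return pick_node(client_ip) == pick_node(server_ip)


server = "192.168.0.10"  # assumed published server in the DMZ
clients = ["172.20.1.50", "172.20.1.51", "172.20.1.52", "172.20.1.53"]

results = {c: works_without_hook_rule(c, server) for c in clients}
for client_ip, ok in results.items():
    print(client_ip, "works" if ok else "response dropped")
```

In this toy model, some clients work and others fail against the same published server, which matches the intermittent, client-dependent symptoms that a missing hook rule tends to produce.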

These rules are created at startup based on the network rules. In a real-world scenario with many subnets, it is quite easy to miss a network rule between two networks.

In this case, this is exactly what happened: there was no network relationship defined between External and DMZ, hence the appropriate hook rule was never created.
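As a rough aid for spotting such gaps, the following Python sketch lists the network pairs that no network rule covers. It is a simplified model, not how TMG actually derives hook rules: it assumes each network rule covers traffic in both directions between its two networks, and the list of existing network rules is hypothetical (only the missing External/DMZ relationship comes from the case described here).

```python
from itertools import permutations

# Lab networks from this post.
networks = {
    "Internal": "10.0.0.0/24",
    "DMZ": "192.168.0.0/24",
    "External": "172.20.1.0/24",
}

# Hypothetical set of existing network rules (source network, destination network).
network_rules = [("Internal", "External"), ("Internal", "DMZ")]


def covered_pairs(rules):
    """Assumption: one network rule covers both directions between its networks."""
    covered = set()
    for src, dst in rules:
        covered.add((src, dst))
        covered.add((dst, src))
    return covered


def uncovered_pairs(names, rules):
    """Return ordered network pairs for which no rule would exist."""
    covered = covered_pairs(rules)
    return [pair for pair in permutations(names, 2) if pair not in covered]


missing_before = uncovered_pairs(networks, network_rules)
missing_after = uncovered_pairs(networks, network_rules + [("External", "DMZ")])

for src, dst in missing_before:
    print(f"no rule for {src} ({networks[src]}) -> {dst} ({networks[dst]})")
print("uncovered after adding External -> DMZ:", missing_after)
```

Running a check like this against an exported list of network rules is one way to catch a missing relationship before it shows up as dropped packets.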

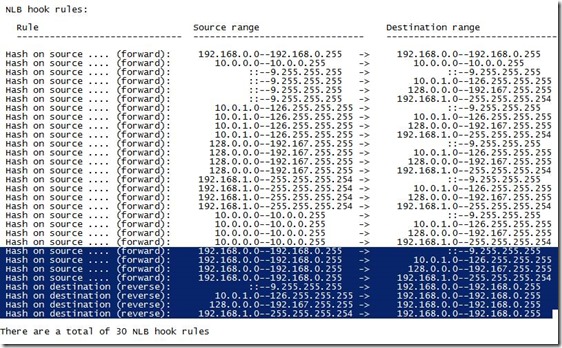

Once we add the network rule, the hook rules are created. I created a rule from the External to the DMZ network with a Route relationship. In the output below you can see how the hook rules changed.

Now we have the appropriate rules for processing requests between the External and DMZ networks in both directions, ensuring that we hash on the same IP each way. Hence, we should no longer get error 0xc0040017 FWX_E_TCP_NOT_SYN_PACKET_DROPPED.

If you see the above error, check whether the appropriate hook rules are present. One root cause for missing hook rules is a missing network relationship definition.

Author:

Vasily Kobylin, Senior Support Engineer, Microsoft EMEA Forefront Edge

Reviewers:

Balint Toth, Senior Support Escalation Engineer, Microsoft EMEA Forefront Edge

Franck Heilmann, Senior Escalation Engineer, Microsoft EMEA Forefront Edge