Use Background Jobs to Run a PowerShell Server Uptime Report

Summary: Microsoft Scripting Guy, Ed Wilson, shows how to use background jobs to run a Windows PowerShell script that produces a server uptime report.

Hey, Scripting Guy! I like your script to get uptime and to get free disk space, but I cannot be responsible for running it on a regular basis. In addition, keep in mind we have a very large network, and the script will take a long time to run. Is there a command to make this run in the background?


—RE

Hello RE,


Microsoft Scripting Guy, Ed Wilson, is here. PowerShell Saturday in Charlotte, North Carolina is a little more than a month away (September 15, 2012), and we have all the sponsors and all the speakers lined up, the agendas posted, and nearly half of the tickets are already sold. If you want to attend, you need to get your reservations placed before the tickets are all gone. There will be 200 people attending, and it will be a tremendous day of learning delivered by some “rock star” trainers! There are three different tracks (Beginner, Advanced, and Applied), so there will be something for anyone who uses or anticipates using Windows PowerShell.

Use Start-Job to run the report

Note This is the fourth blog post in a series about using the ConvertTo-Html cmdlet to create an HTML server uptime report. The first post talked about creating an HTML uptime report, the second used Windows PowerShell to create a report that displays disk space in addition to uptime, and the third discussed adding color and other touches to the uptime report. You should read the three previous posts before reading today's.

If you have a large network, it can take a very long time to reach out and touch every server and bring the information back to a central location. One thing that can help is to run the script as a background job. Using jobs, Windows PowerShell runs the script in the background. You can then use the Wait-Job cmdlet to pause the Windows PowerShell console until the script completes, or you can keep working while the job finishes on its own. Either way, it is not really necessary to use the Receive-Job cmdlet to retrieve the job results, because the script itself writes the HTML page to disk; the report is not returned as output from the job. The command shown here creates a job and runs the script.

Start-Job -FilePath C:\fso\HTML_Uptime_FreespaceReport.ps1

To receive any output (or error messages) from the job, use the following command.

Get-Job | Receive-Job

When you have done this, you may want to remove the job. This command accomplishes that task.

Get-Job | Remove-Job
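The three job commands can also be run as one sequence against a single job object. The following is a minimal sketch: a short script block stands in for HTML_Uptime_FreespaceReport.ps1 (an assumption so that the example runs anywhere), and the job name UptimeDemo is hypothetical.

```powershell
# Start a background job. The script block is a hypothetical stand-in for
# Start-Job -FilePath C:\fso\HTML_Uptime_FreespaceReport.ps1
$job = Start-Job -Name UptimeDemo -ScriptBlock {
    Start-Sleep -Seconds 1
    "Report finished at $(Get-Date)"
}

# Optionally pause the console until the job finishes
Wait-Job -Job $job | Out-Null

# Collect the job's output; any errors from the job are written to the console
$result = Receive-Job -Job $job

# Remove the finished job from the session
Remove-Job -Job $job

$result
```

In Windows PowerShell 3.0 and later, Receive-Job -Job $job -Wait -AutoRemoveJob waits for, receives, and removes the job in a single command.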

Use Invoke-Command to multithread the job

Using Start-Job on the local computer does not really speed things up; it simply hides how long the script takes by placing it in the background. If you have a large network, use the Invoke-Command cmdlet to run the commands on the remote machines themselves. Invoke-Command connects to multiple computers concurrently: by default, it runs up to 32 connections at a time, a value you can change with the ThrottleLimit parameter. To use the Invoke-Command cmdlet effectively requires a few changes to the HTML_UptimeReport.ps1 script. The first change removes the hard-coded list of servers from the Param statement of the script, as shown here.

Param([string]$path = "c:\fso\uptime.html")

The next change involves removing the servers parameter from the call to the Get-UpTime function as shown here.

Get-UpTime |

Next, I change the default value of the servers parameter on the Get-UpTime function so that it uses the name of the computer on which the script runs. This change is shown here.

Function Get-UpTime
{ Param ([string]$servers = $env:COMPUTERNAME)

The last change removes the Invoke-Item command that displayed the completed HTML report. Because the script now runs on the remote servers, that command would not display anything on my computer anyway, so it is unnecessary.

I leave the foreach command because I do not want to make more changes to the function than required. The revised HTML_UptimeReport.ps1 is shown here as Invoke_HTML_UptimeReport.ps1.

Invoke_HTML_UptimeReport.ps1

Param([string]$path = "c:\fso\uptime.html")

Function Get-UpTime
{ Param ([string]$servers = $env:COMPUTERNAME)
    Foreach ($s in $servers)
    {
        $os = Get-WmiObject -class win32_OperatingSystem -cn $s
        New-Object psobject -Property @{computer=$s;
            uptime = (get-date) - $os.converttodatetime($os.lastbootuptime)}}}

# Entry Point ***
Get-UpTime |
ConvertTo-Html -As Table -body "
<h1>Server Uptime Report</h1>
The following report was run on $(get-date)" >> $path

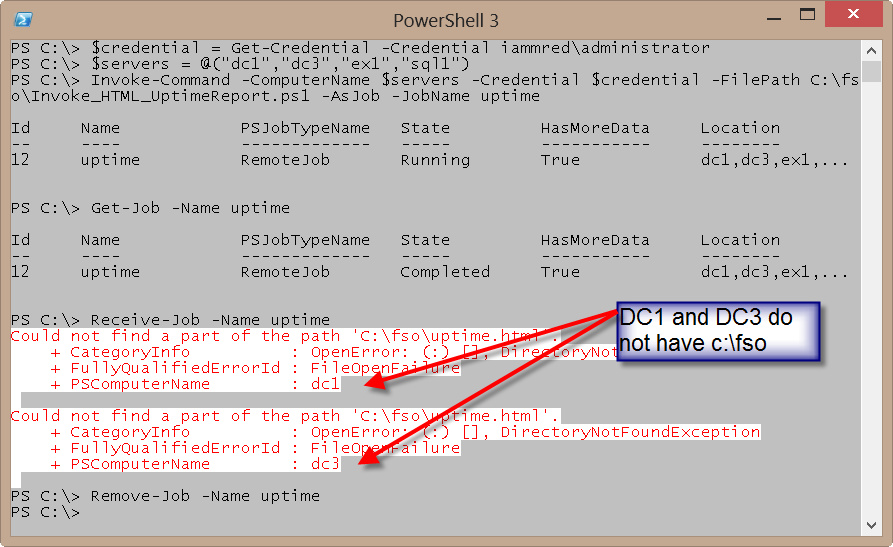
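To see what the entry point produces without querying WMI, the following sketch feeds ConvertTo-Html the same kind of object that Get-UpTime emits. The boot time and report time are made-up sample values, and the server name dc1 is borrowed from the server list used later in this post. Subtracting one DateTime from another yields a TimeSpan, which is what the uptime property holds. The sketch uses [pscustomobject] (Windows PowerShell 3.0 and later) instead of New-Object psobject so that the property order is predictable.

```powershell
# Hypothetical sample values in place of Get-Date and the WMI LastBootUpTime
$lastBoot = [datetime]'2012-08-01 06:00'
$now      = [datetime]'2012-08-10 18:30'

# DateTime minus DateTime returns a TimeSpan, as in the Get-UpTime calculation
$uptime = $now - $lastBoot

# An object with the same two properties that Get-UpTime emits
$row = [pscustomobject]@{ computer = 'dc1'; uptime = $uptime }

# Convert to an HTML table with the same heading the script uses
$html = $row | ConvertTo-Html -As Table -Body "<h1>Server Uptime Report</h1>The following report was run on $now"

"{0} days, {1} hours, {2} minutes" -f $uptime.Days, $uptime.Hours, $uptime.Minutes
```

With the CIM cmdlets in Windows PowerShell 3.0 and later, (Get-CimInstance Win32_OperatingSystem).LastBootUpTime is already a DateTime, so the ConvertToDateTime call is not needed there.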

I now open my Windows PowerShell console, and I retrieve and store a credential object to use when making the remote connections. Next, I create an array of server names. Then I call the Invoke-Command cmdlet and specify the computer names and the credential. I use the FilePath parameter to point to a script that exists on my local computer; Invoke-Command sends the contents of that script to each remote session, so the script itself does not need to exist on the servers. I use the AsJob parameter to run the command as a background job, and the JobName parameter to give the job a friendly name. This command permits up to 32 simultaneous remote connections to obtain the information. The commands I type are shown here.

$credential = Get-Credential -Credential iammred\administrator

$servers = @("dc1","dc3","ex1","sql1")

Invoke-Command -ComputerName $servers -Credential $credential -FilePath C:\fso\Invoke_HTML_UptimeReport.ps1 -AsJob -JobName uptime

There is one problem with this command. The default path, c:\fso\uptime.html, refers to a folder (c:\fso) that must exist on each remote server. Several of my remote servers have a c:\fso folder, but the ones that do not generate an error when I receive the job. The commands and associated output are shown in the following image.

To override the default value that is contained in the script, I supply a value for the ArgumentList parameter (alias Args) of the Invoke-Command cmdlet. The values for ArgumentList bind by position, so they must appear in the same order in which the script declares its parameters.
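Because Invoke-Command can also run a script block locally, the positional binding is easy to check on one computer. In this sketch, the script block mirrors the Param statement of Invoke_HTML_UptimeReport.ps1; the second parameter, $title, is hypothetical and is added only to show that the order of the arguments matters.

```powershell
# Same Param layout as the report script, plus a hypothetical second parameter
$report = {
    Param([string]$path = "c:\fso\uptime.html", [string]$title = "Server Uptime Report")
    "$title -> $path"
}

# No arguments: both parameters keep their default values
$defaults = Invoke-Command -ScriptBlock $report

# One argument: it binds to the first declared parameter, $path
$pathOnly = Invoke-Command -ScriptBlock $report -ArgumentList "\\dc1\share\"

# Two arguments: they bind in declaration order ($path, then $title)
$both = Invoke-Command -ScriptBlock $report -ArgumentList "\\dc1\share\", "Nightly Uptime"

$defaults, $pathOnly, $both
```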

If every server writes its report to the same file in a shared folder, and I make no provision for multiple file access, file locking issues arise. There are two easy ways to deal with this issue. The first is to dial down the ThrottleLimit value to 1. Doing this, however, allows only a single connection at a time, which is the same thing the original script accomplished; therefore, it is not a great solution. A better approach is to modify the output file name to include the server name. Because each server then writes to its own file, the connections can run simultaneously. Here is the modified output redirection at the end of the script.

>> ("{0}{1}_Uptime.html" -f $path, $env:COMPUTERNAME)
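The -f format operator simply concatenates the folder path and the computer name, so each server ends up with its own file name. The following sketch builds the names locally; the server list is the one used earlier in this post, and $s stands in for $env:COMPUTERNAME on each remote server.

```powershell
$path = "\\dc1\share\"

# One file name per server, so no two jobs append to the same file
$files = foreach ($s in "dc1", "dc3", "ex1", "sql1") {
    "{0}{1}_Uptime.html" -f $path, $s
}

# Without the trailing backslash, the share name and computer name run together
$broken = "{0}{1}_Uptime.html" -f "\\dc1\share", "dc1"

$files
$broken
```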

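When I use Invoke-Command now, I do not include the file name, only the \\dc1\share\ folder path. The trailing backslash matters because the format string joins the path and the computer name directly. This command is shown here.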

Invoke-Command -ComputerName $servers -Credential $credential -FilePath C:\fso\Invoke_HTML_UptimeReport.ps1 -AsJob -JobName uptime -args "\\dc1\share\"

RE, that is all there is to using jobs to run a Windows PowerShell script and produce an uptime report. Join me tomorrow for more Windows PowerShell cool stuff.

I invite you to follow me on Twitter and Facebook. If you have any questions, send email to me at scripter@microsoft.com, or post your questions on the Official Scripting Guys Forum. See you tomorrow. Until then, peace.

Ed Wilson, Microsoft Scripting Guy
