Use PowerShell to Compute MD5 Hashes and Find Changed Files

Summary: Learn how to use Windows PowerShell to compute MD5 hashes and find files changed in a folder.

Hey, Scripting Guy! I have a folder and I would like to detect if files within it have changed. I do not want to write a script to parse file sizes and dates modified because that seems to be a lot of work. Is there a way I can use an MD5 hash to do this? Oh, by the way, I do have a reference folder that I can use.

—RS

Hello RS,

Microsoft Scripting Guy, Ed Wilson, is here. Things are certainly beginning to get crazy. In addition to all of the normal end-of-the-year things going on around here, and being in the middle of a product ship cycle, we are entering “conference season.” This morning, the Scripting Wife and I (along with a hitchhiker from the Charlotte Windows PowerShell User Group) load up the car and head to Atlanta, Georgia for TechStravaganza. We have the speaker’s dinner this evening, and tomorrow we will be flat out all day as the event kicks off. It will be a great day with one entire track devoted to Windows PowerShell. The following week, we head to Florida for a SQL Saturday, Microsoft TechEd, and IT Pro Camp. In fact, our Florida road trip begins with the monthly meeting of the Charlotte Windows PowerShell User Group (we actually leave for our trip from the group meeting). If you find all this a bit confusing, I do too. That is why I am glad we have the Scripting Community page, so I can keep track of everything.

Note: This is the fourth in a series of four Hey, Scripting Guy! blogs about using Windows PowerShell to facilitate security forensic analysis of a compromised computer system. The intent of the series is not to teach security forensics, but rather to illustrate how Windows PowerShell could be utilized to assist in such an inquiry. The first blog discussed using Windows PowerShell to capture and to analyze process and service information. The second blog talked about using Windows PowerShell to save event logs in XML format and perform offline analysis. The third blog talked about computing MD5 hashes for files in a folder.

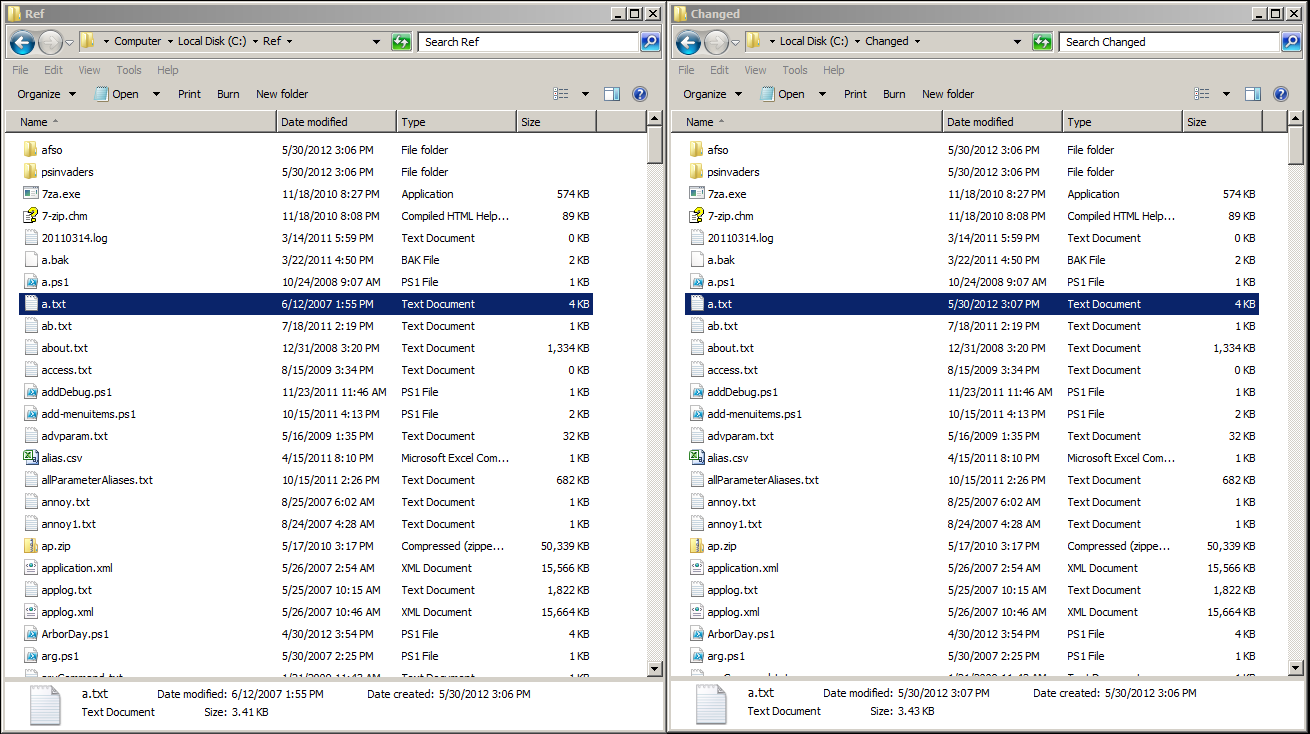

The easy way to spot a change

It is extremely easy to spot a changed file in a folder by making a simple addition to the technique discussed yesterday. In fact, it does not require writing a script. The trick is to use the Compare-Object cmdlet. In the image that follows, two folders reside beside one another. The Ref folder contains all original files and folders. The Changed folder contains the same content, with a minor addition made to the a.txt file.

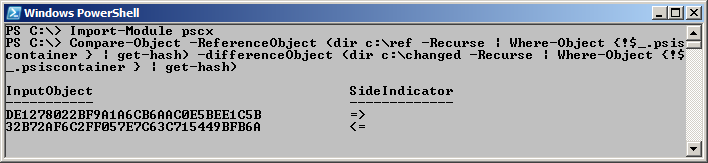

After you import the PowerShell Community Extensions (PSCX) module, use the Compare-Object cmdlet to compare the hashes of the c:\ref folder with the hashes of the c:\changed folder. The basic command to compute the hashes of the files in each folder was discussed in yesterday’s blog. The chief difference here is the addition of the Compare-Object cmdlet. The command (a single logical command) is shown here.

PS C:\> Compare-Object -ReferenceObject (dir c:\ref -Recurse | Where-Object {!$_.psiscontainer } | get-hash) -DifferenceObject (dir c:\changed -Recurse | Where-Object {!$_.psiscontainer } | get-hash)

The command and the associated output are shown here.

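The command works because the Compare-Object cmdlet knows how to compare objects, and because the two Get-Hash commands return objects. The arrows in the SideIndicator column indicate which side each differing object came from: => means the object exists only in the Difference object, and <= means it exists only in the Reference object. In this output, the first object exists only in the Difference object, and the second one exists only in the Reference object.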

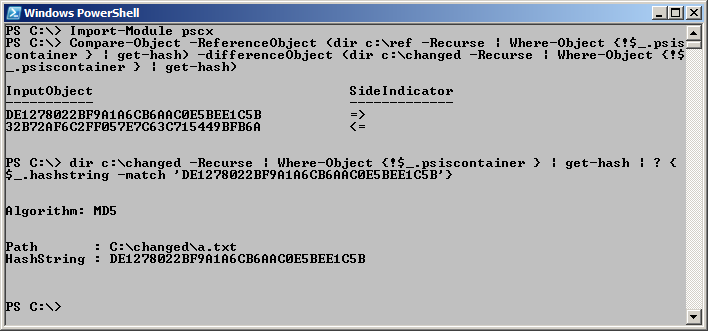
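If the PSCX is not available, the same comparison can be sketched with the built-in Get-FileHash cmdlet, which ships with Windows PowerShell 4.0 and later. This is an alternative to the PSCX Get-Hash command used above, not the technique from the post itself. The function name Compare-FolderHash and its parameter names are hypothetical, and note that Get-FileHash exposes the hash in a property named Hash rather than hashstring.

```powershell
# Hypothetical helper (not part of the original post).
# Assumes PowerShell 4.0 or later, where Get-FileHash is built in.
function Compare-FolderHash {
    param(
        [Parameter(Mandatory)] [string] $ReferencePath,
        [Parameter(Mandatory)] [string] $DifferencePath
    )

    # Hash every file (folders are skipped by -File) under each root with MD5.
    $refHashes  = @(Get-ChildItem -Path $ReferencePath  -Recurse -File | Get-FileHash -Algorithm MD5)
    $diffHashes = @(Get-ChildItem -Path $DifferencePath -Recurse -File | Get-FileHash -Algorithm MD5)

    # Compare on the Hash property only. -PassThru returns the original
    # objects, so the Path of each differing file stays visible.
    Compare-Object -ReferenceObject $refHashes -DifferenceObject $diffHashes -Property Hash -PassThru |
        Select-Object -Property SideIndicator, Hash, Path
}
```

For the folders in this example, the call would be `Compare-FolderHash -ReferencePath C:\ref -DifferencePath C:\changed`. The sketch assumes that both folders contain at least one file, because Compare-Object may reject a null or empty collection, depending on the PowerShell version.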

Find the changed file

Using the information from the previous command, I create a simple filter to return more information about the changed file. The easy way to do this is to highlight the hash, and place it in a Where-Object command (the ? is an alias for Where-Object). I know from yesterday’s blog that the property containing the MD5 hash is called hashstring; therefore, that is the property I look for. The command is shown here.

PS C:\> dir c:\changed -Recurse | Where-Object {!$_.psiscontainer } | get-hash | ? { $_.hashstring -match 'DE1278022BF9A1A6CB6AAC0E5BEE1C5B'}

The command and the output from the command are shown in the image that follows.

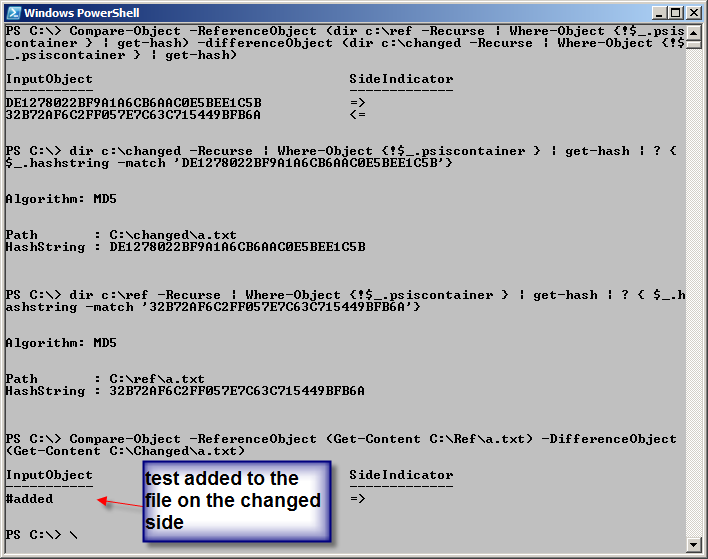
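The lookup can also be wrapped in a small function. The following is a minimal sketch that again assumes the built-in Get-FileHash cmdlet (Windows PowerShell 4.0 and later) in place of the PSCX Get-Hash command; the function name Find-FileByHash and its parameters are hypothetical. It uses -eq instead of -match, because the goal is an exact match on the whole hash, and it returns the file object so that details such as size and last write time are available.

```powershell
# Hypothetical helper (not part of the original post).
# Assumes PowerShell 4.0 or later for Get-FileHash.
function Find-FileByHash {
    param(
        [Parameter(Mandatory)] [string] $Path,
        [Parameter(Mandatory)] [string] $Hash
    )

    Get-ChildItem -Path $Path -Recurse -File |
        Get-FileHash -Algorithm MD5 |
        # String comparison with -eq is case-insensitive, so the hash
        # can be pasted in uppercase or lowercase.
        Where-Object { $_.Hash -eq $Hash } |
        # Return the file itself, which carries Length, LastWriteTime, and so on.
        ForEach-Object { Get-Item -LiteralPath $_.Path }
}
```

With the hash from the earlier output, the call would be `Find-FileByHash -Path C:\changed -Hash 'DE1278022BF9A1A6CB6AAC0E5BEE1C5B'`.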

Finding the differences in the files

I use essentially the same commands to find the differences between the two files. First, I make sure that I know the reference file that changed. Here is the command that I use for that:

PS C:\> dir c:\ref -Recurse | Where-Object {!$_.psiscontainer } | get-hash | ? { $_.hashstring -match '32B72AF6C2FF057E7C63C715449BFB6A'}

When I have ensured that it is, in fact, the a.txt file that has changed between the reference folder and the changed folder, I again use the Compare-Object cmdlet to compare the content of the two files. Here is the command I use to compare the two files:

PS C:\> Compare-Object -ReferenceObject (Get-Content C:\Ref\a.txt) -DifferenceObject (Get-Content C:\Changed\a.txt)
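To make the arrows in the output easier to read, the comparison can be wrapped so that each differing line is labeled with the file it came from. This is only a sketch: the function name Compare-FileContent, its parameters, and the FoundIn labels are hypothetical, and it assumes Windows PowerShell 3.0 or later for the [pscustomobject] syntax.

```powershell
# Hypothetical helper (not part of the original post).
function Compare-FileContent {
    param(
        [Parameter(Mandatory)] [string] $ReferenceFile,
        [Parameter(Mandatory)] [string] $DifferenceFile
    )

    # @() keeps a one-line file as an array of lines.
    $refLines  = @(Get-Content -LiteralPath $ReferenceFile)
    $diffLines = @(Get-Content -LiteralPath $DifferenceFile)

    Compare-Object -ReferenceObject $refLines -DifferenceObject $diffLines |
        ForEach-Object {
            # '=>' means the line is only in the difference file,
            # '<=' means it is only in the reference file.
            $side = if ($_.SideIndicator -eq '=>') { 'DifferenceOnly' } else { 'ReferenceOnly' }
            [pscustomobject]@{
                Line    = $_.InputObject
                FoundIn = $side
            }
        }
}
```

For the files in this example, the call would be `Compare-FileContent -ReferenceFile C:\Ref\a.txt -DifferenceFile C:\Changed\a.txt`. The sketch assumes that neither file is empty, and it reports lines that are present in one file but not the other rather than the position where they differ.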

The image that follows illustrates the commands and the output associated with these commands.

RS, that is all there is to finding modifications to files in folders when you have a reference folder. Join me tomorrow for more cool stuff in the world of Windows PowerShell.

I invite you to follow me on Twitter and Facebook. If you have any questions, send email to me at scripter@microsoft.com, or post your questions on the Official Scripting Guys Forum. See you tomorrow. Until then, peace.

Ed Wilson, Microsoft Scripting Guy
