Linux P2V With DD and VHDTool – EASY and CHEAP!

So I’ve been busy the last two weeks getting ready for TechEd (WAHOO!) where I’m co-presenting two sessions this year. One of the sessions is all about Linux on Hyper-V.

To get ready, I’ve been working through lots of the common operational tasks, including (as you know) P2V migrations.

I mentioned to my buddy Alexander Lash (my partner in crime at TechEd 2008 where we presented a great session on Hyper-V Scripting) all the challenges of Linux P2V migrations, and he mentioned an easy way to do it using DD and VHDTool.

- DD is a common UNIX / Linux command used for capturing disk images to a file.

- VHDTool is a Windows tool for manipulating VHDs (including the nearly instantaneous creation of HUGE VHDS!).

What I didn’t know was that VHDTool can quickly alter a binary disk image file (like those created by DD) and turn it into a VHD for Hyper-V!

I put DD / VHDTool to the test a couple of different ways this week, and wanted to share some results with you. Note that using DD and VHDTool this way IS NOT SUPPORTED by Microsoft (but they seem to work pretty well, and the price is right!).

DD on Windows

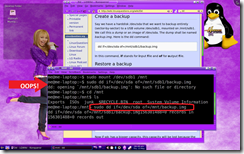

My first run-through was to take an existing Linux hard drive out of a system (running, of course, Hannah Montana Linux) and plug it into one of my Hyper-V servers.

I ran a Windows version of DD against the disk and created a binary image file of the system.

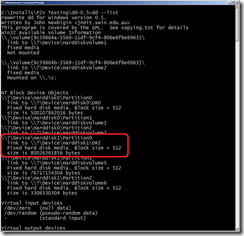

One trick with the Windows version of DD is finding the right disk. It has a nice option to list all the drives on a system (see picture).

Getting the drive ID right is important (slashes and all), or the process won’t work.

The actual command line I used to “suck the brain” out of the Linux system was pretty simple:

dd if=\\?\Device\Harddisk1\DR2 of=C:\Hanna.img bs=1M --progress

It took quite some time to copy the entire disk (empty space and all) to a new 80ish GB file, but once it was done creating the image, it took just a minute to get the VM up and running.

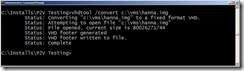

I moved the image file to a better location and ran VHDTool to “convert” the image:

I also renamed it to a .VHD (Hyper-V only likes to define VMs using storage files named .VHD) and then defined my VM (using the converted image file).

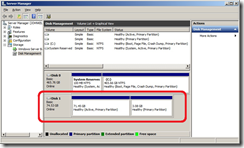

The VM started right up, noticing the changes to hardware (no longer having a sound card, for instance), and worked like a champ for me.

DD on Linux Direct to NTFS

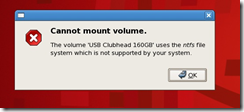

I tried capturing an image of the same Linux system using DD on Linux. I ran DD on the Linux system, and wrote the binary image file to an attached (NTFS formatted) USB drive.

When DD was done, I plugged the USB drive into my Hyper-V host, copied over the file, ran VHDTool, and again success!
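An image this size passes through a USB drive and a file copy before it is converted, so it can be worth confirming the copy matches the original. Here is a minimal Python sketch (not part of the process I ran) that hashes a file in chunks so it never has to fit in memory. The function name `sha256_of_file` is just an illustrative choice. Because VHDTool alters the image file, the comparison only makes sense before the conversion step.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of the file at `path`.

    The file is read in `chunk_size` pieces so that an 80 GB image
    does not need to be loaded into memory at once.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps calling f.read() until it returns b"".
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Run it once against the image on the USB drive and once against the copy on the Hyper-V host; if the two digests differ, the copy is not identical and should be repeated.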

NOTE that most commercial Linux distributions DO NOT support reading / writing NTFS formatted disks, making this type of image capture (direct to USB) impractical.

Still, it was pretty awesome that it worked.

DD on Linux Over the Network

As I mentioned, not being able to access a common file system (like NTFS) on a USB drive from common, commercial Linux distributions is a blocker for the last process I showed. Yes, I could have tried all sorts of other file systems, but I figured I should skip all the disk swapping that I had been doing and just use the network instead.

I got some more help from “Mr. Z” (mentioned in my earlier “Linux P2V The Hard Way” post). He rattled off the command line over the phone to mount a remote CIFS share so I could dump the output of DD directly on my Hyper-V host – saving a step.

On the Linux system I mounted my share and then ran DD against it:

mount -t cifs -o username=administrator //192.168.0.10/D$ /mnt

dd if=/dev/sda of=/mnt/rhel54.img

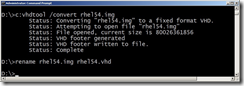

Once it was done, I ran VHDTool and renamed the .IMG file to .VHD, defined the VM and was all set again to start my VM.

I was of course using SELinux and now (because I did this nutty P2V) was having all sorts of consistency “opportunities” in my VM. I had to repair my file system, reconfigure the X Server, and add the Linux Integration Services (ISs; I actually cheated and added them to the physical server first!), but after that and a reboot the VM was online.

The Fine Print

Here are a few thoughts on the process, after the fact.

Firstly, this process HAS ZERO SUPPORT FROM ANYONE! The process will vary somewhat based on your installation and distribution (security options, file systems, other).

DD – Size Matters

The biggest drawback to this process is the HUGE files that DD creates that must be consumed by VHDTool. Using PlateSpin, Tar, or another file-based process skips all the blank space on the disk. Still, the process is pretty simple and works reliably for me.
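To get a feel for how much of a DD image is just blank space, here is a minimal Python sketch (not something I ran as part of this process) that counts how many 1 MiB chunks of an image file are entirely zero. The function name `count_zero_chunks` and the chunk size are illustrative choices. Free space on a well-used disk often still holds stale, nonzero data, so treat the result as a lower bound on the wasted space.

```python
def count_zero_chunks(path, chunk_size=1024 * 1024):
    """Return (zero_chunks, total_chunks) for the file at `path`.

    A chunk counts as "zero" only if every byte in it is 0x00.
    The final chunk may be shorter than `chunk_size`.
    """
    zero_chunks = 0
    total_chunks = 0
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            total_chunks += 1
            # If the number of zero bytes equals the chunk length,
            # the whole chunk is blank.
            if chunk.count(0) == len(chunk):
                zero_chunks += 1
    return zero_chunks, total_chunks
```

Calling `count_zero_chunks(r"C:\Hanna.img")` returns a pair; dividing the first number by the second gives the fraction of the image that a file-based capture would have skipped at a minimum.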

VHDTool – Size Matters in a Different Way

VHDTool can sometimes “wrap” your binary image with information that Linux may not 100% understand. For instance, I ran it against a 320GB image I captured. Everything seemed to go well, and the VM booted, but the file system wouldn’t mount. Apparently “the disk” (VHD) was reporting a size of 127GB, while the file system was 300+GB (300 pounds of data in a 127 pound bag?), and the operating system took exception to this.

The process worked for me (above) in each case because the source disk (binary image) was smaller than 127GB. I’ll touch base with the developer folks and see if they know anything about that.
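If you want to see what size a converted file is actually advertising, here is a minimal Python sketch that reads the 512-byte footer at the end of a fixed VHD, following the field layout in Microsoft's published VHD format specification. It assumes VHDTool writes a standard footer, which I have not confirmed. The function names are illustrative. One possible explanation for the 127GB figure (my assumption, not a confirmed diagnosis) is the footer's cylinder/head/sector geometry field, which cannot describe more than 65535 x 16 x 255 sectors, or roughly 127.5 GiB.

```python
import os
import struct

FOOTER_SIZE = 512
# Largest size the footer's CHS geometry field can describe:
# 65535 cylinders x 16 heads x 255 sectors per track x 512 bytes.
MAX_CHS_BYTES = 65535 * 16 * 255 * 512


def parse_vhd_footer(footer):
    """Decode the size-related fields of a 512-byte VHD footer."""
    if len(footer) != FOOTER_SIZE or footer[:8] != b"conectix":
        raise ValueError("not a VHD footer (missing 'conectix' cookie)")
    # All footer fields are big-endian.
    original_size, current_size = struct.unpack(">QQ", footer[40:56])
    cylinders, heads, sectors = struct.unpack(">HBB", footer[56:60])
    (disk_type,) = struct.unpack(">I", footer[60:64])
    return {
        "original_size": original_size,
        "current_size": current_size,
        "chs_bytes": cylinders * heads * sectors * 512,
        "disk_type": disk_type,  # 2 = fixed, 3 = dynamic, 4 = differencing
    }


def read_vhd_footer(path):
    """Read and decode the footer stored in the last 512 bytes of `path`."""
    if os.path.getsize(path) < FOOTER_SIZE:
        raise ValueError("file is too small to hold a VHD footer")
    with open(path, "rb") as f:
        f.seek(-FOOTER_SIZE, os.SEEK_END)
        return parse_vhd_footer(f.read(FOOTER_SIZE))
```

Comparing `current_size` and `chs_bytes` from `read_vhd_footer` against the size of the original disk should show whether the footer describes the whole image or is capped near `MAX_CHS_BYTES`.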

Let me know what you think of this post, as well as your thoughts for additional posts.

-John