Lite-Touch Deployments - Making them a bit more scalable

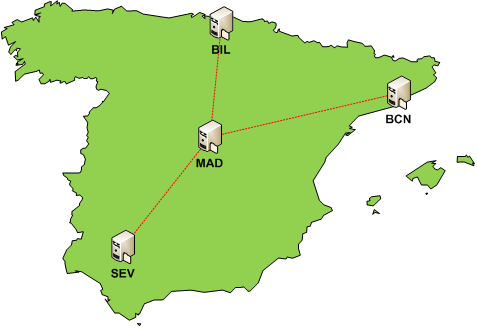

Take a look at the image above, which shows a fairly common scenario for a company; let's call them Contoso. Contoso has multiple offices around the country that are all connected to their head office, some with high-speed network connections and others with relatively slow ones. Contoso is planning their Windows Vista rollout, which will be driven from their central office, in this example, Madrid. Contoso wants to deploy the Windows Vista image that they created on their MDT server in Madrid to all their clients nationwide, using a lite-touch installation.

The problem they need to solve is how to distribute their image file to all company workstations without saturating the network at the time of deployment. Unfortunately, they do not have an SMS or System Center Configuration Manager infrastructure, which would make this problem fairly trivial, so they need a solution that will allow them to deploy the image created in Madrid to all clients without using up all available network bandwidth. The biggest problem that they will have with a lite-touch scenario is that all clients will contact the MDT server directly by hostname (as configured in the file bootstrap.ini that MDT created and placed inside the WinPE image file) to download the corporate WIM image which, in Contoso's case, is 6 GB in size. This will work fine in the Madrid office because the MDT server is there and there is high-speed network connectivity between the server and the workstations. But when Contoso starts the deployment in their office in Bilbao, which only has a 256 kbps network connection to Madrid, it will almost certainly fail due to network bandwidth/latency issues.
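To put a rough number on the Bilbao case, here is a minimal sketch of the arithmetic in Python. It assumes the link carries 256 kilobits per second, that the image is 6 decimal gigabytes, and that the link is otherwise idle with no protocol overhead; the function name is purely illustrative.

```python
def transfer_hours(size_gigabytes: float, link_kilobits_per_second: float) -> float:
    """Best-case hours needed to move the image over the link (no overhead)."""
    size_bits = size_gigabytes * 1_000_000_000 * 8      # decimal gigabytes to bits
    link_bits_per_second = link_kilobits_per_second * 1_000
    return size_bits / link_bits_per_second / 3600      # seconds to hours


if __name__ == "__main__":
    hours = transfer_hours(6, 256)
    print(f"One client pulling the image from Madrid: about {hours:.0f} hours")
```

Under those assumptions a single client needs roughly 52 hours, and every additional client deployed at the same time shares the same link.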

So, how can Contoso deploy their image to their satellite offices without bringing down the network at the same time? (This really does sound like a possible Microsoft MCP question, doesn't it?)

Not too long ago Adam Shepherd wrote a great article about making a BDD server scalable using technologies such as DFS-R and SQL Replication. I am often asked by clients about this topic, and I have always used Adam's article to show how it can be done. The problem is that some of my clients do not implement the recommendations in Adam's article, either because they see them as too complicated or expensive, or because they do not like the idea of updating the Active Directory schema in order to implement DFS-R.

Well, there is another possibility that would achieve a similar result and does not require any network or schema changes: DNS round robin. Round robin requires no additional servers, no extra hardware, no extra licenses and probably no changes to any infrastructure or configuration; all it needs to work in this scenario is two checkboxes on the DNS server, both of which are selected in the default DNS configuration. In the properties of the DNS server, just make sure that the following two options are selected on the "Advanced" tab:

- Enable round robin

- Enable netmask ordering

You should also double-check that you have "Strict Name Checking" disabled. If it is enabled, you might find that the remote computer refuses any connection made to it via a DNS alias rather than its real name. The error occurs because an attempt was made to access \\AnAlias\AShare using the DNS A record, but the actual hostname of that target was "XYZ" and strict name checking was enabled. See here for more information.
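To see how the two checkboxes work together, here is a minimal Python model of the ordering logic. It is a sketch of the idea only, not the DNS server's actual implementation: the IP addresses are hypothetical, and the /24 comparison is my assumption about the default netmask ordering behaviour.

```python
import ipaddress


def order_answers(client_ip, records, rotation=0, prefix_len=24):
    """Model one DNS answer: rotate the A records (round robin), then move
    any record in the client's subnet to the front (netmask ordering)."""
    shift = rotation % len(records)
    rotated = records[shift:] + records[:shift]
    client_net = ipaddress.ip_network(f"{client_ip}/{prefix_len}", strict=False)
    local = [r for r in rotated if ipaddress.ip_address(r) in client_net]
    remote = [r for r in rotated if ipaddress.ip_address(r) not in client_net]
    return local + remote


if __name__ == "__main__":
    # Hypothetical A records that all share the MDT server's hostname.
    records = ["10.1.0.10", "10.2.0.10", "10.3.0.10"]
    for turn in range(3):
        # A client on the 10.2.0.x subnet is always offered its local replica first.
        print(turn, order_answers("10.2.0.57", records, rotation=turn))
```

In this model a client with no replica on its own subnet simply sees the rotated list, which is plain round robin.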

The first step to get it all set up is to replicate the MDT file share to a server in each of the remote offices. Contoso will need to do this manually, and a good way to do it would be with the command ROBOCOPY source destination /MIR. A scheduled task could then run the same command to copy over any later changes made to the share. An alternative to copying the data over the network is to burn it to a DVD and send the DVD to the remote office, where someone could copy the data from the DVD onto the local server. Afterwards, Contoso creates an A record for each remote server with the same hostname as the MDT server in Madrid, but with the relevant remote server's IP address. That's it!
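The replication step could be wrapped in a small Python script for a scheduled task to call. This is only a sketch: the `/Z`, `/R` and `/W` switches (restartable mode, retry count, wait between retries) are my own additions to the `/MIR` command above, and the function names are illustrative.

```python
import subprocess


def build_mirror_command(source, destination, log_file=None):
    """Build the ROBOCOPY command line that mirrors source onto destination."""
    command = ["robocopy", source, destination,
               "/MIR",    # mirror the tree, deleting files removed from source
               "/Z",      # restartable mode, useful on slow or unreliable links
               "/R:3",    # retry a failed copy three times
               "/W:30"]   # wait 30 seconds between retries
    if log_file:
        command.append(f"/LOG:{log_file}")
    return command


def run_mirror(source, destination, log_file=None):
    """Run the mirror on Windows and report whether it completed without failures."""
    result = subprocess.run(build_mirror_command(source, destination, log_file))
    # Robocopy exit codes below 8 mean that no copy failures occurred.
    return result.returncode < 8
```

A scheduled task on the Madrid server could then call `run_mirror` once per remote office, passing the UNC paths of the central share and of that office's replica.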

To test the round robin configuration, simply use the NSLOOKUP command from any computer in a remote office. If everything is set up correctly, NSLOOKUP should always return the IP address of the nearest server first, i.e. the server that hosts the replicated MDT share in the same location as the computer that made the query, and repeating the command should always give the same result. If instead you receive a different IP address each time you repeat the NSLOOKUP command, you probably do not have the two checkboxes selected on the DNS server, as mentioned previously.

An interesting option with this setup is that you could even host the MDT share on a Windows XP workstation in a remote office if no server exists there and one cannot be installed; however, there are two major downsides to using a workstation OS rather than a server OS:

- Windows XP is limited to 10 concurrent network connections at any one time.

- You will certainly see lower network performance from the workstation when compared to a server.
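The NSLOOKUP test described earlier can also be scripted. The following Python sketch resolves a name several times and checks that the first address never changes; the function names are illustrative. Note that, unlike NSLOOKUP, the default resolver here goes through the operating system, which may cache or reorder answers, so treat the result as a hint rather than proof.

```python
import socket


def first_answers(hostname, attempts=5, resolve=None):
    """Resolve hostname several times and collect the first address of each answer."""
    if resolve is None:
        # gethostbyname_ex returns (name, aliases, addresses); keep the addresses.
        resolve = lambda name: socket.gethostbyname_ex(name)[2]
    return [resolve(hostname)[0] for _ in range(attempts)]


def is_pinned(hostname, expected_ip, attempts=5, resolve=None):
    """True if every lookup offered expected_ip (the local replica) first."""
    answers = first_answers(hostname, attempts, resolve)
    return all(ip == expected_ip for ip in answers)
```

Run from a workstation in a remote office, `is_pinned` would be given the shared MDT hostname and the IP address of that office's replica server.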

If Contoso wishes to use PXE to load the WinPE boot image, then they will also need to replicate the WDS server to each office, which introduces other complications and might make this solution unviable. Otherwise, Contoso simply boots each machine from the MDT-generated boot CD, and upon boot WinPE will map a drive using the MDT server's hostname which, when resolved by DNS, will actually be the server on the same subnet that holds a replica of the MDT share. From then on, the process will continue over the local office network and should thus run significantly faster.

Finally, I would like to add two very important points that need considering. The first is that Contoso will still need to replicate the MDT database (assuming one is used) to the remote servers, or they could leave it in the central office and accept the cost of the network traffic for the database. The amount of data transferred from the database would be far less than the amount used to deploy the image, so this might not be too much of an issue. The second is that DNS round robin is not fault-tolerant. The DNS server will not be aware that any of the remote servers are offline and will continue issuing the IP address of a server regardless of its status. This would lead to deployment failures in an office if the local server is not available when an attempt is made to install a computer.
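Because DNS will not notice an offline replica, it can be worth checking each one before a deployment run. The Python sketch below tests whether a server accepts connections on TCP port 445, the port used for SMB file shares. It does not make round robin fault-tolerant, it only warns you in advance; the function name is illustrative.

```python
import socket


def share_is_reachable(host, port=445, timeout=3.0):
    """Return True if host accepts a TCP connection on the SMB port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False
```

Calling this with each replica's real hostname or IP address, rather than the shared MDT hostname, tells you which office would fail before anyone boots a client there.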

Alternatively, you could use a MEDIA deployment point and do away completely with the need to replicate the data between offices as I have just described. This is also a great way of deploying image files created with MDT, but the disadvantage is that every time your deployment point changes you will need to recreate and redistribute all the deployment DVDs, which could prove costly and hard to maintain. The method outlined here offers more flexibility and, once set up, requires very little administrative effort (you could schedule a task to replicate only the changes to the remote shares overnight, when more network bandwidth is available).

In conclusion, what has been (albeit briefly) explained in this post is a useful way of improving the chances of a successful operating system deployment in situations where you need to send large amounts of data over slow or unreliable network connections. My recommendation will always be to use SMS or System Center Configuration Manager if faced with this scenario, as they are designed to handle these situations extremely well; round robin could never replace the functionality that these technologies provide.

This post was contributed by Daniel Oxley, a consultant with Microsoft Services Spain.