Windows Server 2008 - the facts beyond the fluff

With a new product on the way, there are usually streams upon streams of information, but I came across this list of the top 10 new features in Windows Server 2008, which clearly explains what each feature does and why it matters.

So thank you to Scott Fulton at BetaNews.

Top 10 New Features in Windows Server 2008

BetaNews - May 24, 2007

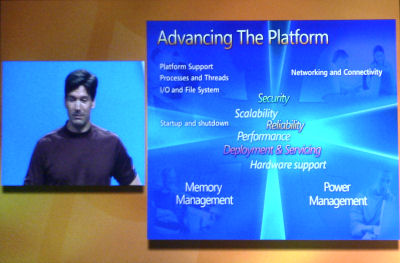

Subtle and fundamental differences in the basic architecture of Windows Server 2008 could dramatically change not only the way it is used in the enterprise, but also the logical and physical structure of networks where it is the dominant OS.


There are a myriad of both subtle and fundamental differences in the basic architecture of Windows Server 2008, which could dramatically change not only the way it's used in the enterprise, but also the logical and physical structure of networks where it's the dominant OS.

The ability to consolidate servers, to manage hardware more effectively, to remotely manage hardware without the graphical traffic, and to radically alter the system security model could present a more compelling argument for customers to plan their WS2K8 migrations now than the arguments for moving from Windows 2000 to Server 2003 ever did.

Based on the information we gathered last week at WinHEC 2007 in Los Angeles, we decided that rather than list a bunch of mind-jarring new categories and marketing terms that sound like rejected gadgets from the Bat-Cave, we'd select what we believe to be the ten most influential and important new technologies to find their way into WS2K8, with the help of Microsoft software engineers such as Mark Russinovich to explain their relevance. We begin at the end with our #10 entry:

#10: The self-healing NTFS file system. Ever since the days of DOS, an error in the file system meant that a volume had to be taken offline for it to be remedied. In WS2K8, a new system service works in the background that can detect a file system error, and perform a healing process without anyone taking the server down.

"So if there's a corruption detected someplace in the data structure, an NTFS worker thread is spawned," Russinovich explained, "and that worker thread goes off and performs a localized fix-up of those data structures. The only effect that an application would see is that files would be unavailable for the period of time that it was trying to access, had been corrupted. If it retried later after the corruption was healed, then it would succeed. But the system never has to come down, so there's no reason to have to reboot the system and perform a low-level CHKDSK offline."

Microsoft's SysInternals software engineer Mark Russinovich, opening one of the most well-received hours of WinHEC 2007 last week.

#9: Parallel session creation. "Prior to Server 2008, session creation was a serial operation," Russinovich reminded us. "If you've got a Terminal Server system, or you've got a home system where you're logging into more than one user at the same time, those are sessions. And the serialization of the session initialization caused a bottleneck on large Terminal Services systems. So Monday morning, everybody gets to work, they all log onto their Terminal Services system like a few hundred people supported by the system, and they've all got to wait in line to have their session initialized, because of the way session initialization was architected."

The new session model in both Vista and WS2K8 can initiate at least four sessions in parallel, or even more if a server has more than four processors. "If you've got a Vista machine where this architecture change actually was introduced, and you've got multiple Media Center extenders, those media center extenders are going to be able to connect up to the Media Center in parallel," he added. "So if you have a media center at home, and you send all their kids to their rooms and they all turn on their media extenders at the same time, they're going to be streaming media faster from their Vista machines than if you had Media Center on an XP machine."
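To make the difference between serial and parallel session creation concrete, here is a minimal Python sketch. It is not how Windows implements sessions: `init_session` is a hypothetical stand-in that just sleeps to simulate setup cost, and the four-worker pool only mirrors the "at least four sessions in parallel" figure quoted above.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def init_session(user: str, cost: float = 0.05) -> str:
    """Hypothetical stand-in for per-session setup; sleeps to simulate the work."""
    time.sleep(cost)
    return f"session:{user}"


def init_serial(users):
    """Everyone waits in line: one session is initialized at a time."""
    return [init_session(u) for u in users]


def init_parallel(users, workers: int = 4):
    """Up to `workers` sessions are initialized at the same time."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(init_session, users))


def timed(fn, *args):
    """Run fn(*args) and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


users = [f"user{i}" for i in range(8)]
serial_result, serial_secs = timed(init_serial, users)
parallel_result, parallel_secs = timed(init_parallel, users)
print(f"serial: {serial_secs:.2f}s, parallel: {parallel_secs:.2f}s")
```

Both paths produce the same sessions in the same order; only the waiting time changes, which is the Monday-morning bottleneck Russinovich describes.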

#8: Clean service shutdown. One of Windows' historical problems concerns its system shutdown procedure. In XP, once shutdown begins, the system starts a 20-second timer. After that time is up, it asks the user whether she wants to terminate the application herself, perhaps prematurely. For Windows Server, that same 20-second timer may be the lifeclock for an application, even one that's busy spooling ever-larger blocks of data to the disk.

In WS2K8, that 20-second countdown has been replaced with a service that gives applications that have received the shutdown signal all the time they need to shut down, as long as they continually signal back that they are indeed shutting down. Russinovich said developers were skeptical at first about whether this new procedure ceded too much power to applications; but in practice, they decided the cleaner overall shutdowns were worth the trade-offs.
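The contrast between a fixed countdown and a "keep signaling and you may keep going" policy can be sketched in a few lines of Python. This is an illustrative model only, not the Windows service control API: the event names `"pending"` and `"stopped"` are made up, and reusing 20 seconds as the window between signals is an assumption.

```python
def shutdown_outcome(events, grace=20.0):
    """Decide whether a service is allowed to finish shutting down.

    events: (seconds_since_shutdown_request, kind) pairs, where kind is
            "pending" (still shutting down) or "stopped" (finished).
    grace:  longest allowed silence between signals (assumed value).
    """
    deadline = grace  # first deadline is measured from the shutdown request
    for t, kind in sorted(events):
        if t > deadline:
            return "killed"       # the service went quiet for too long
        if kind == "stopped":
            return "stopped"      # clean shutdown
        deadline = t + grace      # a "pending" signal extends the window
    return "killed"               # signals ceased without a final "stopped"


# A service that needs 45 seconds but reports progress every 15 seconds.
print(shutdown_outcome([(15, "pending"), (30, "pending"), (45, "stopped")]))

# The same 45-second shutdown with no progress reports in between.
print(shutdown_outcome([(45, "stopped")]))
```

The first service is allowed to finish; the second is cut off, which is roughly what the old fixed timer did to every slow shutdown.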

#7: Kernel Transaction Manager. This is a feature developers can take advantage of, and it could greatly reduce, if not eliminate, one of the most frequent causes of System Registry and file system corruption: multiple threads seeking access to the same resource.

In a formal database, a set of instructed changes is stored in memory, in sequence, and then "committed" all at once as a formal transaction. This way, other users aren't given a snapshot of the database in the process of being changed - the changes appear to happen all at once. This feature is finally being utilized in the System Registry of both Vista and Windows Server 2008.
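The stage-then-commit idea described above can be illustrated with a small Python sketch. It is a single-threaded toy, not the KTM API: `TransactionalStore` and its methods are hypothetical names, and a real transaction manager also handles locking, logging, and recovery.

```python
class TransactionalStore:
    """Toy key/value store whose changes become visible only on commit."""

    def __init__(self, data=None):
        self._data = dict(data or {})

    def snapshot(self):
        """What any other reader would currently see."""
        return dict(self._data)

    def transaction(self):
        return _Transaction(self)


class _Transaction:
    def __init__(self, store):
        self._store = store
        self._staged = {}

    def set(self, key, value):
        self._staged[key] = value  # held in memory, not yet visible

    def __enter__(self):
        return self

    def __exit__(self, exc_type, exc, tb):
        if exc_type is None:
            self._store._data.update(self._staged)  # commit all changes together
        self._staged.clear()                        # on error, nothing was applied
        return False                                # let any exception propagate


store = TransactionalStore({"a": 1})

with store.transaction() as tx:
    tx.set("a", 2)
    tx.set("b", 3)
    mid_transaction_view = store.snapshot()  # still the old data

try:
    with store.transaction() as tx:
        tx.set("a", 99)
        raise RuntimeError("simulated failure before commit")
except RuntimeError:
    pass

final_view = store.snapshot()  # the failed transaction left no trace
```

Readers never observe a half-applied set of changes, and a failure partway through leaves the store exactly as it was.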

"The Kernel Transaction Manager [intends] to make it very easy to do a lot of error recovery, virtually transparently," Microsoft software engineer Mark Russinovich explained. "The way they've done this is with the [KTM] acting as a transaction manager that transaction clients can plug into. Those transaction clients can be third-party clients that want to initiate transactions on resources that are managed by Transaction Resource Manager - those resource managers can be third-party or built into the system."

#6: SMB2 network file system. Long, long ago, SMB was adopted as the network file system for Windows. While it was an adequate choice at the time, Russinovich believes, "SMB has kind of outlived its life as a scalable, high-performance network file system."

So SMB2 finally replaces it. With media files having attained astronomical sizes, servers need to be able to deal with them expeditiously. Russinovich noted that in internal tests, SMB2 on media servers delivered thirty to forty times faster file system performance than Windows Server 2003. He repeated the figure to make certain we realized he meant a 4000% boost.

#5: Address Space Layout Randomization (ASLR). Perhaps one of the most controversial added features already, especially since its debut in Vista, ASLR is designed so that successive boots of the operating system do not load the same system drivers at the same place in memory each time.

Malware, as Mark Russinovich described it (as only he can), is essentially a blob of code that refuses to be supported by standard system services. "Because it isn't actually loaded the way a normal process is, it would never link with the operating system services that it might want to use," he said. "So if it wants to do anything with the OS like drop a file onto your disk, it's got to know where those operating system services live.

"The way that malware authors have worked around this chicken-and-egg kind of situation," he continued, "is, because Windows didn't previously randomize load addresses, that meant that if they wanted to call something in KERNEL32.DLL, KERNEL32.DLL on Service Pack 2 will always load in the same location in memory, on a 32-bit system. Every time the system boots, regardless of whose machine you're looking at. That made it possible for them to just generate tables of where functions were located."

Now, with each system service likely to occupy one of 256 randomly selected locations in memory, offset by plus or minus 16 MB of randomized address space, the odds against malware being able to locate a system service on its own have gone from elementary to astronomical.
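As a rough back-of-the-envelope check on those odds, the sketch below works out the chance of a blind guess landing on the right address. It takes only the 256-location figure from the article; the assumptions that every location is equally likely and that separate modules are placed independently are simplifications made here for illustration.

```python
from fractions import Fraction

SLOTS = 256  # possible load locations, per the figure quoted in the article


def single_guess_probability(slots: int = SLOTS) -> Fraction:
    """Chance that one blind guess matches a uniformly random load location."""
    return Fraction(1, slots)


def all_modules_probability(modules: int, slots: int = SLOTS) -> Fraction:
    """Chance of guessing every module's location in one try,
    assuming (for illustration) independent, uniform placement."""
    return single_guess_probability(slots) ** modules


for n in (1, 2, 3):
    p = all_modules_probability(n)
    print(f"{n} module(s): 1 in {p.denominator}")
```

Before randomization the same table of addresses worked on every machine, so the equivalent "odds" were effectively 1 in 1.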

"This slide...this being a keynote, the marketing people had to make a pass through the deck. And this thing is technical, which is a little bit different from what they're used to, they didn't understand any of the slides. But they still wanted to feel like they were adding value, so they threw this slide in. And of course, I don't understand this slide. But I hope you like it.”

Mark Russinovich, Microsoft technical fellow

#4: Windows Hardware Error Architecture (WHEA). That's right, Microsoft has actually standardized the error - more accurately, the protocol by which hardware devices report to the system what errors they have encountered. You'd think this would already have been done.

"One of the problems facing error reporting is that there's so many different ways that devices report errors," remarked Russinovich. "There's no standardization across the hardware ecosystem. So that made it very difficult to write an application, up to now, that can aggregate all these different error sources and present them in a unified way. It means a lot of specific code for each of these types of sources, and it makes it very hard for any one application to deliver you a good error diagnostic and management interface."

Now, with hardware-oriented errors all being reported using the same socketed interface, third-party software can conceivably mitigate and manage problems, reopening a viable software market category for management tools.

#3: Windows Server Virtualization. Even pared down a bit, the Viridian project will still provide enterprises with the single most effective tool to date for reducing total cost of ownership...to emerge from Microsoft. Many will argue virtualization is still an open market, thanks to VMware; and for perhaps the next few years, VMware may continue to be the feature leader in this market.

But Viridian's drive to leverage hardware-based virtualization support from both Intel and AMD has helped drive those manufacturers to roll out their hardware support platforms in a way that a third party - even one as influential as VMware - might not have accomplished.

As Microsoft's general manager for virtualization, Mike Neil, explained at WinHEC last week, the primary reason customers flock to virtualization tools today remains server consolidation. "There's this sprawl of servers that customers have, they're dealing with space constraints, power constraints, [plus] the cost of managing a large number of physical machines," Neil remarked. "And they're consolidating by using virtual machines to [provide] typically newer and more capable and more robust systems."

Consolidation helps businesses to reclaim their unused processor capacity - which could be as much as 85% of CPU time for under-utilized servers. Neil cited IDC figures estimating US businesses have already spent hundreds of billions on processor resources they haven't actually used. It's not their fault - it's the design of operating systems up to now. "So obviously, we're trying to drive that utilization further and further," Neil said.
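The consolidation arithmetic is simple enough to sketch. If a server idles for 85% of its CPU time, it is busy for only 15%. The 75% target utilization for the consolidated host used below is an assumed planning figure, not something from the article, and the calculation ignores memory, I/O, and virtualization overhead.

```python
def servers_per_host(avg_util_pct: int, target_util_pct: int) -> int:
    """How many equally loaded servers fit on one host of the same capacity
    without exceeding the target CPU utilization (whole percentages)."""
    if avg_util_pct <= 0:
        raise ValueError("average utilization must be positive")
    return target_util_pct // avg_util_pct


busy_pct = 100 - 85  # 85% idle, per the article, leaves 15% busy
print(servers_per_host(busy_pct, 75))  # assumed 75% target for the host
```

Under those assumptions, five such under-utilized machines could share one physical host as virtual machines.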

#2: PowerShell. At last. For two years, we've been told it'll be part of Longhorn, then not really part of Longhorn, then a separate free download that'll support Longhorn, then the underpinning for Exchange Server 2007. Now we know it's a part of the shipping operating system: the radically new command line tool that can either supplement or completely replace GUI-based administration.

At TechEd 2007 in Orlando in early June, we'll be seeing some new examples of PowerShell in the WS2K8 work environment - hopefully unhindered now that the product is shipping along with the public Beta 3...at least unless someone changes his mind. We hope that phase of PowerShell's history is behind it now.

#1: Server Core. Here is where the world could really change for Microsoft going forward: Imagine a cluster of low-overhead, virtualized, GUI-free server OSes running core roles like DHCP and DNS in protected environments, all to themselves, managed by way of a single terminal.

If you're a Unix or Linux admin, you might say we wouldn't have to waste time with imagining. But one of Windows' simple but real problems as a server OS over the past decade has been that it's Windows. Why, admins ask, would a server need to deploy 32-bit color drivers and DirectX and ADO and OLE, when they won't be used to run user applications? Why must Windows always bring its windows baggage with it wherever it goes?

Beginning with Windows Server 2008, the baggage is optional. As product manager Ward Ralston told BetaNews in an interview published earlier this week, the development team has already set up Beta 3 to handle eight roles, and the final release may support more.

What's more, with the proper setup, admins can manage remote Server Core installations using a local GUI that presents the data from the GUI-less remote servers. "We have scripts that you can install that enable [TCP] port 3389," Ralston told us, "so you can administer it with Terminal Services. [So] if you're sitting at a full install version and let's say I bring up the DNS, I can connect to a Server Core running DNS, and I can administer it from another machine using the GUI on this one. So you're not just roped into the command line for all administration. We see the majority of IT pros using existing GUIs or using PowerShell that leverages WMI [Windows Management Instrumentation] running on Server Core, to perform administration."

PowerShell can run on Server Core...partially, Iain McDonald told us. It won't be able to access the .NET Framework, because the Framework doesn't run on Server Core at present. In that limited form, it can access WMI functions.

But a later, more "component-ized" version of .NET without the graphics functionality may run within Server Core. This could complete a troika, if you will, resulting in the lightest-weight and most manageable servers Microsoft has ever produced. It may take another five years for enterprises to finally complete the migration, but once they do...this changes everything.