Hyper-V 2012 R2 Network Architectures Series (Part 4 of 7) – Converged Networks using Static Backend QoS

OK. In the last two posts we discussed the pros and cons of Non-Converged Networks and of Converged Networks with SCVMM 2012 R2. But what happens when you already have a third-party technology that provides QoS in the backend? Several vendors and partners offer this hardware functionality; the most common options are HP Virtual Connect with Flex-10 FlexNICs, Cisco FEX with UCS, and Dell NPAR.

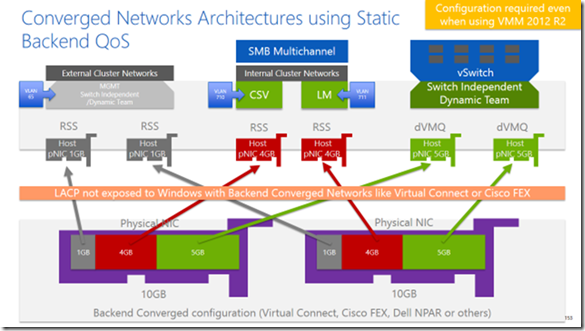

Again, let’s take a look at the diagram and walk through what we are seeing:

Starting from the bottom, you can see two big purple NICs representing the real uplinks available in the backend. These two uplinks are usually connected to different physical switches or fabrics to provide HA and redundancy at the physical layer, but this is not what Windows sees once the backend has been configured.

Usually Windows will see 8 NICs. In this diagram we use only 6 of them, because we assume that the storage connection is done via FC over HBAs. In the third-party backend QoS setup, we need to define which networks a good Hyper-V cluster environment requires and how much bandwidth each of those networks needs.
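To make that planning step concrete, here is a minimal sketch of a per-uplink bandwidth plan with a sanity check. Everything in it is an assumption for illustration: the 10 Gbps uplink speed, the network names, the bandwidth figures and the `Partition` / `validate_plan` helpers are hypothetical and do not come from any vendor tool. The real values are set in the backend management interface of your hardware.

```python
from dataclasses import dataclass

UPLINK_GBPS = 10.0  # assumption: each physical uplink is 10 Gbps


@dataclass(frozen=True)
class Partition:
    """One NIC carved out of an uplink by the backend, as Windows would see it."""
    network: str
    gbps: float


# Hypothetical plan for ONE uplink; the second uplink would mirror it
# so that each network has a NIC on both fabrics.
PLAN = [
    Partition("Management", 1.0),
    Partition("Cluster/LiveMigration", 3.0),
    Partition("VM traffic", 6.0),
]


def validate_plan(plan, uplink_gbps=UPLINK_GBPS):
    """Return the unallocated Gbps; raise if the plan is invalid or oversubscribed."""
    if any(p.gbps <= 0 for p in plan):
        raise ValueError("every partition needs a positive bandwidth")
    total = sum(p.gbps for p in plan)
    if total > uplink_gbps:
        raise ValueError(f"plan needs {total} Gbps but the uplink has {uplink_gbps}")
    return uplink_gbps - total


if __name__ == "__main__":
    spare = validate_plan(PLAN)
    for p in PLAN:
        print(f"{p.network:<24}{p.gbps:>5.1f} Gbps")
    print(f"unallocated: {spare:.1f} Gbps")
```

The point of the check is simply that static partitions cannot add up to more than the physical uplink, so the plan has to be agreed before anything is configured on the Windows side.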

One possible approach is to multiplex the uplinks as in the configuration above. This gives almost the same benefits as a Non-Converged Network architecture, except for the following:

- LACP is not exposed to Windows, so we cannot aggregate incoming traffic for the Mgmt or the VMs teams.

- In this static QoS configuration, the bandwidth is hard-reserved. Even if a network is not using its share, that bandwidth is not available to other traffic. This can be an undesirable waste of bandwidth.

- Combining the backend QoS with the SCVMM 2012 R2 QoS for the VMs can be difficult to understand and configure.

- Windows will see physical NICs with non-standard bandwidth (anything other than 1 Gbps or 10 Gbps).

- It will require additional configuration and knowledge of how to integrate these backend solutions with Hyper-V.

- Firmware and drivers from these vendors will be key to performance and reliability.
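The hard-reserved bandwidth point in the list above is easier to see with numbers. The following is a rough sketch, not a model of any specific vendor implementation: the caps, the demand figures and the `static_qos` helper are all hypothetical. It only shows that with fixed caps each network gets at most its own share, no matter how idle the others are.

```python
def static_qos(caps_gbps, demand_gbps):
    """Apply hard per-network caps: each network gets min(demand, cap).

    Returns (delivered per network, idle reserved Gbps, unmet demand in Gbps).
    """
    delivered = {
        name: min(demand_gbps.get(name, 0.0), cap)
        for name, cap in caps_gbps.items()
    }
    # Reserved bandwidth that nobody is using and nobody else can borrow.
    idle = sum(cap - delivered[name] for name, cap in caps_gbps.items())
    # Traffic that wanted more than its own cap allows.
    unmet = sum(
        max(demand_gbps.get(name, 0.0) - cap, 0.0)
        for name, cap in caps_gbps.items()
    )
    return delivered, idle, unmet


# Hypothetical static split of one 10 Gbps uplink and a hypothetical load.
caps = {"Management": 1.0, "Cluster/LiveMigration": 3.0, "VM traffic": 6.0}
demand = {"Management": 0.25, "Cluster/LiveMigration": 0.5, "VM traffic": 8.0}

delivered, idle, unmet = static_qos(caps, demand)
print(f"idle reserved bandwidth: {idle} Gbps")
print(f"unmet VM demand:         {unmet} Gbps")
```

With these example numbers, the VM traffic is capped at its 6 Gbps share while more than 3 Gbps sits idle in the other two partitions of the same uplink.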

On the other hand, if our environment is really static and we know for sure the bandwidth consumption of all our traffic, this third-party solution can help us use both RSS and dVMQ over the same uplinks. From a Windows perspective all the adapters are physical, so, depending on the driver and the adapter model, you will be able to use both features.

This kind of setup was really common until about a year ago, when almost all vendors started to offer Dynamic QoS in the backend. Let’s talk about that in the next post.