Hyper-V 2012 R2 Network Architectures Series (Part 2 of 7) – Non-Converged Networks, the classical but robust approach

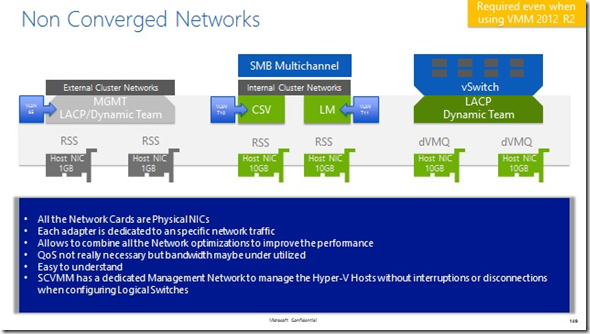

As an IT guy, I strongly believe that engineers understand graphics and charts much better than bullet points and text, so I will start with the diagram above.

At first sight, reading from left to right, you can see that this example uses 6 Physical Network cards. The two adapters on the left are 1 Gbps adapters and the other four (green) adapters are 10 Gbps adapters. These basic considerations are really important because they dictate how your Hyper-V Cluster nodes will perform.

On top of the 6 Physical Network cards you can see that some of them use RSS and some use dVMQ. Here is where things start to become interesting, because you might wonder why I don’t suggest creating one big 4-NIC team with the 10 Gbps adapters and dismissing or disabling the 1 Gbps adapters. At the end of the day, 40 Gbps should be more than enough, right?

Well, as a PFE, I like stability, high availability and robustness in my Hyper-V environments, but I also like to separate things that have different purposes. Using the approach from the picture above will give me the following benefits:

- You can use RSS for Mgmt, CSV and LM traffic. This lets the host squeeze the most out of the 10 Gbps adapters if needed. Remember that RSS and dVMQ are mutually exclusive on a given adapter, so if I want RSS I need to use separate Physical NICs.

- Since 2012 R2, LM and CSV can take advantage of SMB Multichannel, so I don’t need to create a Team, especially when the adapters support RSS. CSV and LM will each be able to use 10 Gbps without external dependencies or aggregation on the Physical Switch, such as LACP.

- CSV and LM Cluster networks will provide enough resilience to my cluster in conjunction with the Mgmt network.

- The Mgmt network will have HA using an LACP team. This is important, and it is possible because each Physical NIC is connected directly to a Physical Switch whose ports our Network Administrator can aggregate.

- Any file copy using SMB between Hyper-V hosts will use the CSV and LM network cards at 10 Gbps because of how the SMB Multichannel algorithm works. Faster adapters take precedence, so even a simple copy started over the Mgmt network will take advantage of this awesome feature and run at up to 20 Gbps (10 Gbps from each of the CSV and LM adapters).

- SCVMM will always have a dedicated Mgmt network to communicate with the Hyper-V host for any required operation. So creation or deletion of any Logical Switch will never interrupt the communication between them.

- You can dedicate two entire 10 Gbps Physical Adapters to your Virtual Machines using an LACP Team and create the vSwitch on top. dVMQ and vRSS will help VMs perform as needed, while the LACP/Dynamic Team will allow your VMs to send and receive up to 20 Gbps if really required. I have to be honest here: the maximum bandwidth inside a VM that I have seen using this configuration was 12 Gbps, but that is not a bad number at all.

- You can use SCVMM 2012 R2 to create a logical switch on top and apply any desired QoS to the VMs if needed.

- You are not mixing Storage IOs with Network IOs.
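To make the bandwidth arithmetic in the list above concrete, here is a minimal Python sketch of the NIC layout. It is only a toy model: the adapter names (`NIC1` to `NIC6`) are hypothetical labels, it assumes the VM team sits behind the vSwitch and is not visible to host SMB traffic, and it reduces SMB Multichannel to a single rule ("the fastest host-facing adapters win"), which is a simplification of the real selection logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nic:
    name: str     # hypothetical adapter label, not taken from the diagram
    role: str     # "Mgmt", "CSV", "LM" or "VM"
    gbps: int     # link speed in Gbps
    offload: str  # "RSS" or "dVMQ"


# Layout described in the post: 2 x 1 Gbps for Mgmt, 4 x 10 Gbps for the rest.
NICS = [
    Nic("NIC1", "Mgmt", 1, "RSS"),
    Nic("NIC2", "Mgmt", 1, "RSS"),
    Nic("NIC3", "CSV", 10, "RSS"),
    Nic("NIC4", "LM", 10, "RSS"),
    Nic("NIC5", "VM", 10, "dVMQ"),
    Nic("NIC6", "VM", 10, "dVMQ"),
]


def by_role(nics, *roles):
    """Return the adapters that carry any of the given roles."""
    return [n for n in nics if n.role in roles]


def total_gbps(nics):
    """Aggregate line rate of a group of adapters."""
    return sum(n.gbps for n in nics)


def smb_multichannel_pick(nics):
    """Simplified rule: among host-facing adapters, keep only the fastest.

    Assumption: the VM team is bound to the vSwitch, so the host's SMB
    traffic never sees it.
    """
    host_facing = [n for n in nics if n.role != "VM"]
    top_speed = max(n.gbps for n in host_facing)
    return [n for n in host_facing if n.gbps == top_speed]


picked = smb_multichannel_pick(NICS)
print("SMB copy uses:", [n.name for n in picked], total_gbps(picked), "Gbps")
print("Mgmt team ceiling:", total_gbps(by_role(NICS, "Mgmt")), "Gbps")
print("VM team ceiling:", total_gbps(by_role(NICS, "VM")), "Gbps")
```

Under these assumptions the model picks the CSV and LM adapters for an SMB copy (20 Gbps aggregate), while the Mgmt team tops out at 2 Gbps and the VM team at 20 Gbps. These are line-rate ceilings, not measured throughput.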

So, as you can see, this setup has a lot of benefits and best practice recommendations. It is not bad at all and maybe there are other benefits that I’ve forgotten to mention… but where are the constraints or limitations here with this Non-Converged Network Architecture? Here are some of them:

- Cost. Not a minor issue for some customers that can’t afford 4 x 10 Gbps adapters and all the network infrastructure that this might require if we want real HA on the electronics.

- Additional Mgmt effort. This model requires us to set up and maintain 6 NICs and their configurations. It also requires the Network Administrator to maintain the LACP port groups on the Physical Switch.

- More cables in the datacenter.

- Replica or other Management traffic that is not SMB will only have up to 2 Gbps of throughput.

- Enterprise Hardware is going in the opposite direction. Today it is more common to see 3rd party solutions that multiplex the real adapters into several logical partitions, but let’s talk about that later.
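The 2 Gbps ceiling for non-SMB traffic is easier to appreciate with a quick back-of-the-envelope calculation. The sketch below is only illustrative: the 100 GB payload is a hypothetical figure, and the result is an ideal line-rate time that ignores protocol overhead, disk speed and how the team distributes individual flows.

```python
def transfer_seconds(size_gigabytes: float, link_gbps: float) -> float:
    """Ideal transfer time: convert gigabytes to gigabits, divide by line rate."""
    return size_gigabytes * 8 / link_gbps


PAYLOAD_GB = 100  # hypothetical amount of data to move, chosen for illustration

paths = [
    ("Mgmt team (2 x 1 Gbps)", 2),
    ("CSV + LM adapters (2 x 10 Gbps)", 20),
]

for label, gbps in paths:
    print(f"{label}: {transfer_seconds(PAYLOAD_GB, gbps):.0f} s")
```

In this idealized model the same payload takes ten times longer over the Mgmt team than over the two 10 Gbps adapters, which is the trade-off behind the limitation listed above.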

Maybe I didn’t give you any new information regarding this configuration, but at least we can see that this Architecture is still a good choice for several reasons. If you have the hardware available, you certainly have the knowledge to use this option.

See you again in my next post, where I will talk about Converged Networks managed by SCVMM and PowerShell.

The series will contain these posts:

1. Hyper-V 2012 R2 Network Architectures Series (Part 1 of 7) – Introduction

5. Hyper-V 2012 R2 Network Architectures Series (Part 5 of 7) – Converged Networks using Dynamic QoS

6. Hyper-V 2012 R2 Network Architectures Series (Part 6 of 7) – Converged Network using CNAs

7. Hyper-V 2012 R2 Network Architectures Series (Part 7 of 7) – Conclusions and Summary

8. Hyper-V 2012 R2 Network Architectures (Part 8 of 7) – Bonus