Virtualizing SQL Server on Hyper-V and on Windows Azure VMs

I’ve been asked the question by many organizations: “can we virtualize SQL Server?” My answer is typically yes, however there are several factors to consider before moving SQL Server workloads to a virtualized environment. For the purposes of this post I’ll focus on virtualizing SQL Server on Windows Server Hyper-V, however several, if not all of factors for consideration pertain to other virtualization platforms as well.

I’m going to keep this simple and fairly high level, however all points below (and probably others not listed) are valid factors to consider when virtualizing SQL Server or any application.

Let’s take a look at some architecture components of Window Server 2012 R2 and Hyper-V and what to consider when virtualizing application such as SQL Server.

Windows Server Hyper-V Capabilities

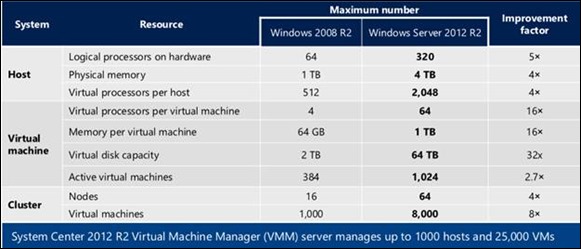

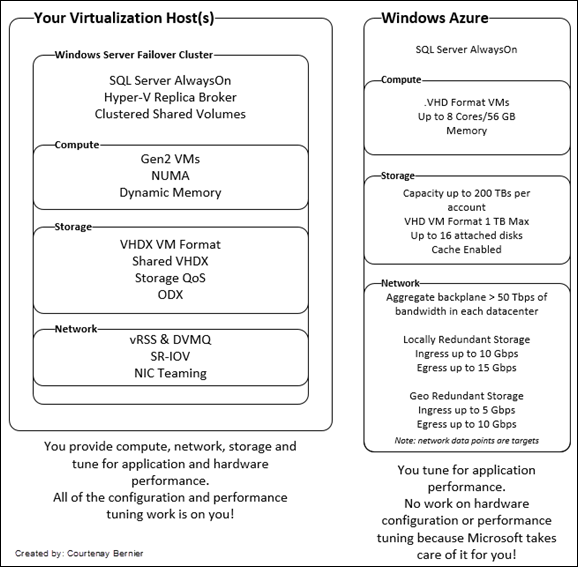

Referencing the diagram below we see vast amounts of improvements in Windows Server 2012 R2 over its predecessors. In theory, scalability shouldn’t be a limiting factor, in fact your hardware becomes a limitation if not sized properly.

Compute

When considering the allocation of CPU and Memory there are several underlying architectures that support or enhance the performance of an application or service.

Hyper-V Generation 2 VMs (VHDX format)

Hyper-V has a new generation of virtual machine called Generation 2 VM. Generation 2 virtual machines remove most of the emulated (”legacy”) devices, or replaces them with optimized software-based devices. Generation 2 virtual machines lay the foundation for advanced virtual machine features, including a reduced attack surface for the platform and faster boot times for virtual machines.

The advantages of Generation 2 VMs are as follows:

· Boot from a SCSI virtual disk.

· PXE Boot Option

· Secure Boot (UEFI)

· Faster boot and OS installation times.

· Native VMBUS support at boot (SCSI drivers are present at boot).

· Less emulated drivers such as the legacy network adaptor are needed.

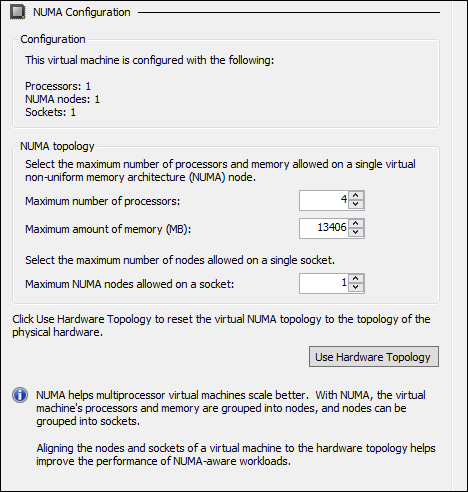

Non-Uniform Memory Access (NUMA)

SQL Server (2005 and up) is Non-Uniform Memory Access (NUMA) aware and is detected automatically whether at the host OS level or VM (the default virtual NUMA settings Hyper-V are optimized to match the host’s NUMA topology).

A NUMA architecture divides memory and processors into groups, called NUMA nodes. From the perspective of any single processor in the system, memory that is in the same NUMA node as that processor is referred to as local, and memory that is contained in another NUMA node is referred to as remote (foreign). Processors access local memory faster vs. remote memory so it’s a good idea to set the NUMA settings in Hyper-V accordingly.

SQL Server subdivides CPUs associated with a hardware NUMA node into multiple CPU nodes. This is known as soft-NUMA.

Basically, NUMA prevents SQL Server from waiting in line to access the same block of memory in use by another thread which is the reason for multiple nodes.

Note: Virtual NUMA and Dynamic Memory features cannot be used at the same time. Workloads that are not NUMA-aware will not take advantage of virtual NUMA.

More information on NUMA may be found here: https://technet.microsoft.com/en-us/library/dn282282.aspx

Configure SQL Server to use Soft-NUMA: https://technet.microsoft.com/en-us/library/ms345357%28v=sql.105%29.aspx

Example NUMA Configuration in Hyper-V:

Dynamic Memory

Memory in general Windows Server 2012 R2 supports 4 TB on the host and each guest (VM) will support up to 1 TB of memory. Applications such as SQL Server like to consume every last bit of memory made available to it, however as shown below, you can set limits on how much memory should be allocated to a specific virtual machine.

Note: reminder, you’re either going to use NUMA or Dynamic Memory, not both.

Storage

There are many factors to consider when selecting storage to place your databases and log files. For read/write intensive databases you’ll most likely look at storage that provides high I/O capabilities. However, for high availability purposes, we also have to consider shared storage as well.

Hyper-V supports SMB 3.0, NFS, Fibre, iSCSI, etc. and offers a shared storage option as well.

Hyper-V Generation 2 VMs (VHDX format) – See Hyper-V capabilities as well as the Generation 2 VMs section above.

Shared VHDX

Windows Server 2012 R2 enables you to share a virtual hard disk file (VHDX format), which provides shared storage for a virtual machine failover cluster (also known as a guest cluster). By making shared storage available to virtual machines, you can easily use a virtual machine failover cluster to protect your application services (for example, SQL or file server services) running inside your virtual machines.

More info on how to configure share VHDX: https://technet.microsoft.com/en-us/library/dn265980.aspx

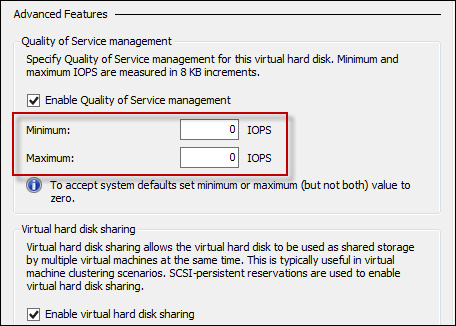

Storage QoS

Storage QoS provides storage performance isolation in a multi-tenant environment and mechanisms to notify you when the storage I/O performance does not meet the defined threshold to efficiently run your virtual machine workloads.

With Storage QoS you have the ability to set Max and Min Input/Output per second (IOPS) where you can throttle the IO of storage to limit other resources from impacting your high performance workloads.

Offloaded Data Transfer (ODX)

When moving around DBs or log files the host OS typically handles the processing and hand off. However with ODX Windows Server 2012 will hand off the work to the storage device. The storage device (if ODX is supported) will handle data movement and tell Windows Server when it’s completed. ODX greatly speeds up the time of moving files, creating virtual machines, etc.

Networking

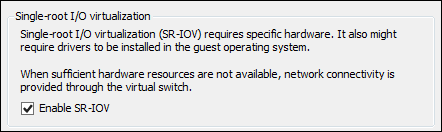

Single-Root I/O Virtualization (SR-IOV)

Single Root I/O Virtualization (SR-IOV) is a standard introduced by the PCI-SIG. SR-IOV works in conjunction with system chipset support for virtualization technologies. This provides remapping of interrupts and DMA and allows SR-IOV capable devices to be assigned directly to a virtual machine.

Hyper-V in Windows Server 2012 enables support for SR-IOV–capable network devices and allows an SR-IOV virtual function of a physical network adapter to be assigned directly to a virtual machine. This increases network throughput and reduces network latency, while also reducing the host CPU overhead required for processing network traffic. ( source: https://technet.microsoft.com/en-us/library/hh831389.aspx ) – Basically network I/O is offloaded to the adapter directly instead of the host having to act as a middle man.

NIC Teaming

NIC Teaming, also known as load balancing and failover (LBFO), allows multiple network adapters on a computer to be placed into a team for the following purposes:

· Bandwidth aggregation

· Traffic failover to prevent connectivity loss in the event of a network component failure

Note: NIC teaming is not supported when utilizing SR-IOV because it bypasses the networking stack. Additional info may be found here: https://technet.microsoft.com/en-us/library/hh831648.aspx

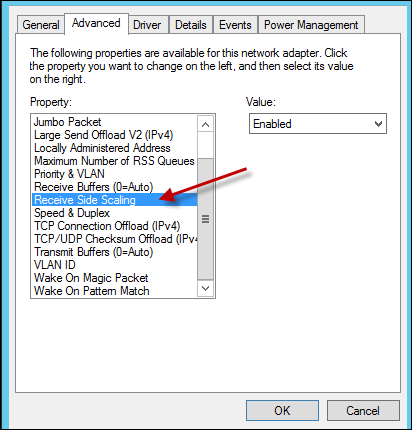

vRSS & dVMQ

Receive Side Scaling (vRSS) is supported on the VM network path, allowing VMs to sustain a larger load of network traffic. vRSS assists network performance (>10G) by splitting processing across multiple cores on the host and VM to achieve greater networking performance. To take advantage of vRSS, VMs must be configured to use multiple cores, and they must support RSS. vRSS is enabled automatically when the VM uses RSS on the VM network path.

Dynamic Virtual Machine Queue (dVMQ) For receive network traffic coming externally from the server and into the Hyper-V Virtual Switch, Dynamic Virtual Machine Queue (dVMQ) performs a function similar to RSS. dVMQ creates virtual network queues for each virtual machine on the host. This allows packets to be pushed directly to the VM from Hyper-V (hypervisor). dVMQ will dynamically load balance as well. This helps to reduce network traffic overhead. dVMQ is enabled by default, however you can manage this setting by using the following PowerShell command (Enable-NetAdapterVmq GuestTrafficNic).

More info on vRSS & dVMQ my be found here: https://technet.microsoft.com/en-us/library/jj679878.aspx & https://technet.microsoft.com/en-us/library/dn383582.aspx

Or enable by using PowerShell: Enable-NetAdapterRSS –Name <AdapterName>

Clustering

Windows Server Failover Clustering (WSFC)

For high availability, clustering is typically the option to go with. Failover clusters provide high availability and scalability to many server workloads. These include server applications such as Microsoft Exchange Server, Hyper-V, Microsoft SQL Server, and file servers. The server applications can run on physical servers or virtual machines. With Windows Server 2012 you can scale up to 64 physical nodes and host 8,000 virtual machines.

More info on WSFC: https://technet.microsoft.com/en-us/library/hh831579.aspx

SQL Server AlwaysOn Availability Groups

SQL Server AlwaysOn Availablity Groups (AGs) are an option as well. AlwaysOn AGs are available for use within your datacenters or via Windows Azure. SQL Server AlwaysOn AGs requires Windows Server Failover Clustering.

An Availability Group supports a failover environment for a discrete set of user databases, known as availability databases that fail over together. An availability group supports a set of primary databases and one to four sets of corresponding secondary databases. Secondary databases are not backups. Continue to back up your databases and their transaction logs on a regular basis. ( source: https://technet.microsoft.com/en-us/library/ff877884.aspx )

More info on SQL AlwaysOn Availability Groups: https://blogs.msdn.com/b/sqlalwayson/archive/2013/08/06/availability-group-listener-in-windows-azure-now-supported-and-scripts-for-cloud-only-configuration.aspx

Information on how to deploy SQL Server cluster on WSFC: https://technet.microsoft.com/en-us/library/hh270278.aspx and https://technet.microsoft.com/en-us/library/hh231721.aspx

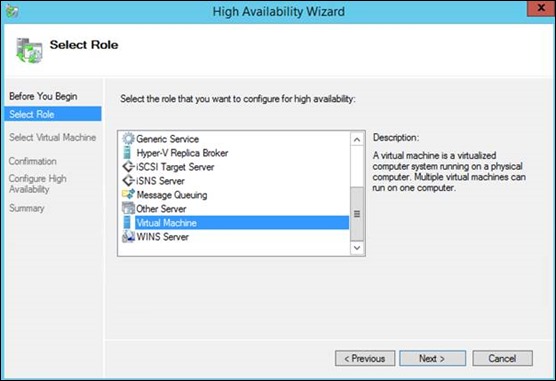

Hyper-V and VM Clustering

Once failover clustering is set up on your Windows Server two options will populate in the configure role list:

- Hyper-V Replica Broker: when using Hyper-V Replica you’re creating a copy of the VM that can be failed over to. However in the absence of System Center 2012 R2 Virtual Machine Manger and Windows Azure (Hyper-V Recovery Manager), failover is a manual process. When using Hyper-V Replica Broker the VM replicas in combination with Clustered Shared Volumes are made highly available.

- Virtual Machine: where you configure VM’s to be highly available.

More info on how to deploy Hyper-V Replica and Hyper-V cluster: https://technet.microsoft.com/en-us/library/jj863389.aspx and https://technet.microsoft.com/library/jj134207.aspx

To create a Hyper-V cluster using System Center 2012 Virtual Machine Manager please visit: https://technet.microsoft.com/en-us/library/gg610576.aspx

Applications and SQL Server in Windows Azure

Another question I receive is: “Can I virtualize SQL Server on Windows Azure Virtual Machines?” Again my answer is typically yes. However there are a couple factors to consider.

Virtual Machine Format

At this time, Windows Azure supports .VHD VM format. What this means is you’re committed to Generation 1 VMs. If you require VMs greater than 8 cores and 56 GB memory then you may want to continue to host SQL Server within your datacenter.

Storage

One of the many scalability advantages Windows Azure offers is around storage. Depending on the type of VM size selected you have the option to add 1 to 16, 1 TB disks to a VM. What this means is you can split off data across multiple disks and potentially see greater IOPS. Disk IOPS are capped at 500 IOPS/disk however, adding multiple disks, splitting data across them (e.g. SQL Log files), and enabling cache can substantially increase IOPS and thus the performance of your SQL servers.

Looking to move your SQL Server instance to Windows Azure? https://msdn.microsoft.com/en-us/library/windowsazure/dn133141.aspx

Need to convert your SQL Server VM to .VHD in Hyper-V? https://blogs.technet.com/b/cbernier/archive/2013/08/29/converting-hyper-v-vhdx-to-vhd-file-formats-for-use-in-windows-azure.aspx

Putting it all together

OK, now that we understand what architecture components to consider when virtualizing applications such as SQL Server on Hyper-V and Windows Azure, let’s put all of the considerations together in a diagram:

Windows Azure Storage and Network Targets Architecture Overview: https://blogs.msdn.com/b/windowsazurestorage/archive/2012/11/04/windows-azure-s-flat-network-storage-and-2012-scalability-targets.aspx

For a list of Applications, Windows Sever Roles and Features that are supported in Windows Azure see: https://support.microsoft.com/kb/2721672

SQL Server Performance

I’ve spent some time looking at the architecture components that should be evaluated when virtualizing applications such as SQL Server. However there is one more item that needs to be considered and that is the performance of the application itself. Focusing on SQL Server, there are numerous performance enhancing options SQL Server offers. At the lowest level SQL Server can be configured to utilize multiple CPUs as well as configured how to utilize and consume assigned memory.

At a higher level, your application and its calls to SQL via SQL queries need to be tuned for the best possible performance. Proper indexing throughout database tables should be set for fast query returns. There are several tools that may be used to look at query performance in the tools section below.

I understand I’ve only scratched the surface of SQL Server performance tuning, however there are many discussions and resources available on the web.

For additional information on how to tune and evaluate SQL Server performance visit the SQL Server Performance Survival Guide: https://social.technet.microsoft.com/wiki/contents/articles/5957.sql-server-performance-survival-guide.aspx

SQL Server 2014 offers new In-Memory database capabilities to help to further boost performance. More info and trial: https://www.microsoft.com/en-us/sqlserver/sql-server-2014.aspx

Tools to measure and monitor performance

Performance Tuning Guidelines for Windows Server 2012

Windows Server (built-in) performance monitoring tools

- Task Manager (taskman) – to get a quick glimpse of real-time performance across your system

- Resource Monitor (resmon) – similar to task manager however you have additional capabilities such as process isolation.

- Performance Monitor (perfmon) – think about performance over time when using performance monitor. You’ll select certain “performance counters” (e.g. %processor time, private bytes, avg disk write queue length, etc.) and monitor system performance over a specified period of time.

Performance Analysis of Logs (PAL) Tool

“The PAL (Performance Analysis of Logs) tool is a powerful tool that reads in a performance monitor counter log and analyzes it using known thresholds.”

Iometer

This is a great tool measure disk I/O performance. Comes in handy especially when determining if your storage is a bottleneck.

https://sourceforge.net/projects/iometer/

SQLIO Disk Subsystem Benchmark Tool

SQLIO is a tool provided by Microsoft which can also be used to determine the I/O capacity of a given configuration.

https://www.microsoft.com/en-us/download/details.aspx?id=20163

SQL Server Profiler

SQL Server Profiler is a rich interface to create and manage traces and analyze and replay trace results. The events are saved in a trace file that can later be analyzed or used to replay a specific series of steps when trying to diagnose a problem.

https://msdn.microsoft.com/en-us/library/ms187929.aspx

Database Engine Tuning Advisor

The Microsoft Database Engine Tuning Advisor (DTA) analyzes databases and makes recommendations that you can use to optimize query performance. You can use the Database Engine Tuning Advisor to select and create an optimal set of indexes, indexed views, or table partitions without having an expert understanding of the database structure or the internals of SQL Server.

https://technet.microsoft.com/en-us/library/hh231122.aspx

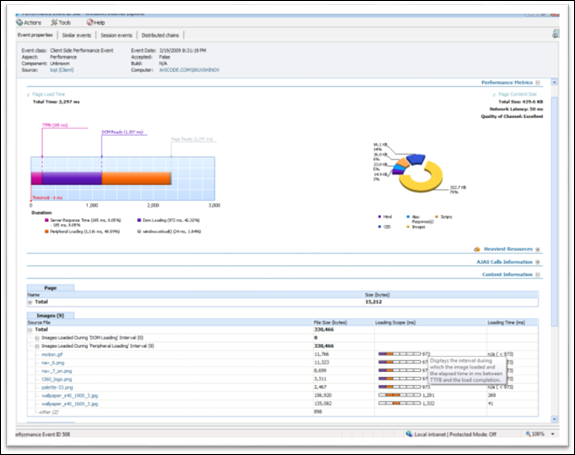

System Center 2012 Operations Manager

System Center is a suite of management technologies that provide deep insight and management across your infrastructure(s) and Windows Azure. Operations Manager 2012 provides monitoring and alerting for monitored applications and services as well as deep application insight via the Application Performance Monitor (APM). APM will show you (textually and graphically) application performance down to the code path, including SQL Queries. For example, if you have an application where you need to know where the performance bottle neck is, APM will provide this insight across .NET, Java, SQL Server, and more.

https://technet.microsoft.com/en-us/library/hh212763.aspx

Example of an APM alert:

Additional Resources

Windows Azure Technical Documentation Library: https://msdn.microsoft.com/en-us/library/windowsazure/dd163896.aspx

Windows Server 2012 R2 Hyper-V Component Architecture: https://www.microsoft.com/en-us/download/details.aspx?id=40732

vRSS: https://technet.microsoft.com/en-us/library/dn343757.aspx

Understanding NUMA: https://technet.microsoft.com/en-us/library/ms178144%28v=sql.105%29.aspx

NUMA Scenarios: https://technet.microsoft.com/en-us/library/ms345345%28v=sql.105%29.aspx