Friday Mail Sack: Robert Wagner Edition

Hello folks, Ned here again. This week, we discuss:

- Computer and user name uniqueness

- DFSR file size matters

- The weird user unlock in ADUC

- RDC extras

- USMT versus full disk encryption

- DFSN and standalone interlink timing

- DFSR conflict folder growth

- Other stuff

Things have been a bit quiet this month blog-wise, but we have a dozen new posts in the pipeline and coming your way next week. Some of them from infamous foreigners! It’s all very exciting, keep your RSS reader tuned to this station.

On to the sackage.

Question

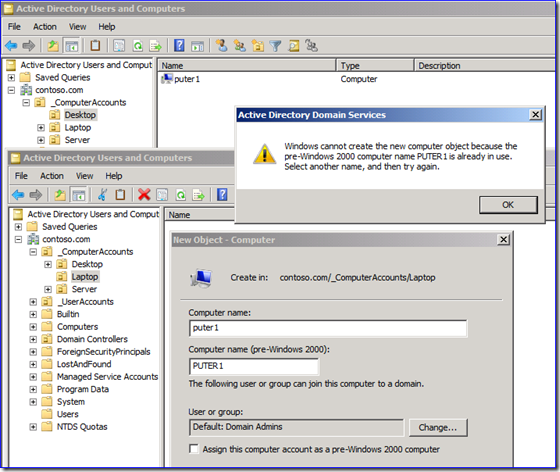

As far as I know, each computer name must be unique within a single domain. How do domain controllers check this uniqueness? Most applications (ADUC, ADSIEDIT, etc.) display the entry’s common name, which matches the computer account name and may not be unique.

Answer

The samaccountname attribute – often referred to as the “pre-Windows 2000 name” - is what needs to be unique, as it’s the real “user ID.” That uniqueness isn’t enforced by DCs when you create principals. You can create multiple computers, users, or groups with the same samaccountname. Well-written apps like DSA.MSC or DSAC.EXE will block you, but not because they are abiding by a DC’s rules:

If you use a less polite or more powerful app, a DC will let you create a duplicate samaccountname. At the first logon using that principal though, the DC will notice the duplicate and rename its samaccountname to “$DUPLICATE-<something>”.

If you want to see this for yourself:

1. Configure AD Recycle Bin in your lab.

2. Create a user and then delete it.

3. Recreate the user manually in another OU (same name, samaccountname, UPN – just in a different location).

4. Restore the deleted user to its previous location using the recycle bin.

5. Note how the identical users exist and have an identical samaccountname.

6. Log on as that user and the restored user will have its samaccountname mangled with $DUPLICATE.

The “name” of the object is unique because it has to form a distinguished name, so you get that free thanks to LDAP. Only samaccountname and UPN will allow duplicates. And obviously, while I can create two computers with the same name in different OUs of the same domain, DNS is not going to be pleased and name resolution isn’t going to work – so this is all rather moot.
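If you would rather find duplicates before a logon finds them for you, you could scan an export of your directory for repeated samaccountname values. This is only a minimal sketch in Python: the `find_duplicate_sam_names` helper and the sample (distinguished name, samaccountname) pairs are made up for illustration, and nothing here talks to a DC. It also assumes you want the names compared case-insensitively.

```python
from collections import defaultdict


def find_duplicate_sam_names(entries):
    """Return {lowercased samaccountname: [DNs]} for names used more than once.

    `entries` is an iterable of (distinguished_name, samaccountname) pairs,
    for example parsed from a directory export. Hypothetical helper, not an
    AD API.
    """
    by_name = defaultdict(list)
    for dn, sam in entries:
        # Assumption: compare names case-insensitively.
        by_name[sam.lower()].append(dn)
    return {sam: dns for sam, dns in by_name.items() if len(dns) > 1}


if __name__ == "__main__":
    # Made-up sample data mirroring the lab steps above.
    sample = [
        ("CN=Sara Davis,OU=Sales,DC=contoso,DC=com", "sdavis"),
        ("CN=Sara Davis,OU=Restored,DC=contoso,DC=com", "SDavis"),
        ("CN=Tony Wang,OU=Sales,DC=contoso,DC=com", "twang"),
    ]
    for sam, dns in find_duplicate_sam_names(sample).items():
        print(sam, "is used by", len(dns), "objects")
```

The distinguished names differ because the objects live in different OUs, which is exactly why LDAP does not complain about them.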

Question

When you were testing DFSR performance, what size file did you use for this statement?

- Turn off RDC on fast connections with mostly smaller files - later testing (not addressed in the chart below) showed 3-4 times faster replication when using LAN-speed networks (i.e. 1 Gbps or faster) on Win2008 R2. This is because it was faster to send files in their totality than send deltas, when the files were smaller and more dynamic and the network was incredibly fast. The performance improvements were roughly twice as fast on Win2008 non-R2. This should absolutely not be done on WAN networks under 100 Mbit though as it will likely have a very negative effect.

Answer

97,892 files in 32,772,081,549 total bytes for an average file size of 334,777 bytes. That test used a variety of real-world files, so there was no specific size, nor were they automatically generated with random contents like some of the tests.
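If you want to check the math, the average is just total bytes divided by file count. A quick Python sketch using the two figures above (the variable names are mine, nothing official):

```python
# Figures quoted in the answer above.
file_count = 97_892
total_bytes = 32_772_081_549

# Integer division drops the fractional byte.
average_bytes = total_bytes // file_count
print(f"{average_bytes:,} bytes")  # 334,777 bytes
```

That works out to roughly a third of a megabyte per file, which fits the "mostly smaller files" description in the quoted advice.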

Question

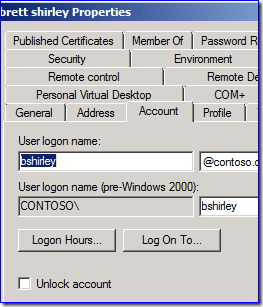

When using AD Users and Computers, what is the difference for unlocking between this:

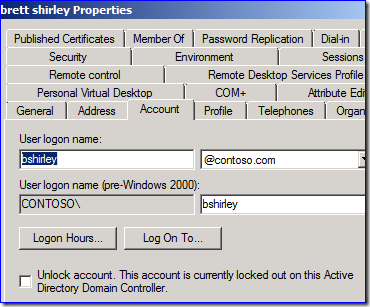

And this:

Answer

The first one is sort of a "placeholder" (it would have been better as a button that grayed out when not needed, in my opinion) to let you know where unlocking happens. An actual account lockout raises the extra text and clicking that checkbox now does something.

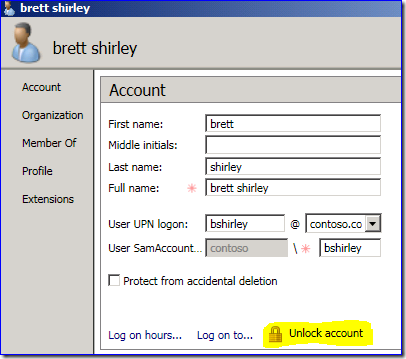

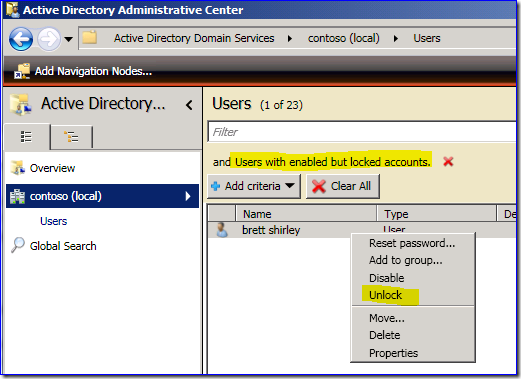

I prefer the way AD Administrative Center handles this:

Even better, I can just find the locked accounts and unlock them right there.

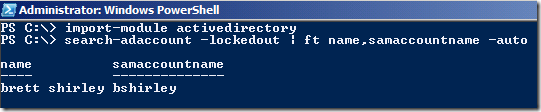

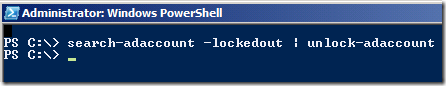

Or even betterer…er:

Reminder: account lockouts are yuck. It’s just a way to create denial of service attacks. Use intrusion detection with auditing to find villains trying to brute force passwords. Even better, use two-factor auth with smart cards, which chemically neuters external brute force. If your security department thinks account lockout is better than this, get a new security department; yours is broken.

Question

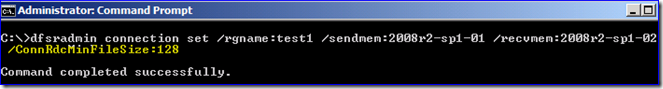

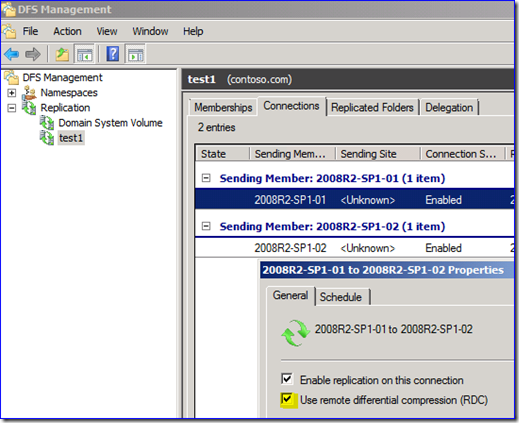

Are the RDC recursion depth, comparator buffer size, horizon size, and hash window size parameters configurable for DFSR?

Answer

No, no, no, and no. :) All you can choose is the minimum size to use RDC, or if you don’t want RDC at all.

That’s a great doc on how to write your own RDC application, by the way. It’s shocking how few there have been; we have an internal RDC copy utility that is the bomb. I wish we’d sell it to you, I like money.

Question

How can I use USMT offline migration with vendor-provided full disk encryption, like McAfee Endpoint Encryption, Symantec PGP Whole Disk Encryption, Check Point Full Disk Encryption, etc.? I already know that with Microsoft BitLocker I just need to suspend it temporarily.

Answer

Any official documentation on making WinPE mount a vendor-encrypted volume would come from the vendor, as they have to provide a driver and installation steps for WinPE to mount the volume, or steps on how to “suspend” outside of PE like BitLocker. For example, McAfee’s tool of choice seems to be EETech (here is its user guide). I’d highly recommend opening a case with the vendor before proceeding, even if they provide online documentation. It’s easy to lose your data forever when you start screwing around with encrypted drives.

USMT does not have any concept of an encrypted volume (any more than Notepad.exe would); he’s a user-mode app.

Question

We use DFS Namespace interlinks, where a domain-based namespace links to standalone namespaces which then link to file shares. When we restart a standalone namespace root server though, clients start trying to get referrals as soon as it is available through SMB paths and not when its DFS service is ready to accept referrals. Is this expected?

Answer

This is expected behavior and demonstrates why deploying standalone DFS root servers on non-clustered servers goes against our best practices. The client bases server availability on SMB, which is ready at that point on the standalone server – it doesn’t know that this is yet another DFSN referral, and it’s not going to work yet. Interlinks are gross, for this reason. If you must use this, cluster the standalone servers so that they can survive a node reboot for Patch Tuesday without hurting your users’ feelings.

This is also why Win2008 (V2) namespaces were invented: so that customers could stop creating complex and fragile interlinked domain-standalone DFS namespaces in order to get around scalability limits. V2 scales nearly infinitely and if you deploy it, you can cut out the middle layer of servers and hopefully, save a bunch of dough.

Question

Have you ever seen the DFSR ConflictAndDeleted folder grow larger than the quota set, even when the XML file is not corrupt? E.g. 5GB when quota is set to the default of 660MB.

Answer

Yes, starting in Windows Server 2008. Previously, a damaged conflictanddeletedmanifest.xml required manual deletion. In Win2008 and later, the DFSR service detects errors parsing that XML file. It writes “Deleting ConflictManifest file” in the DFSR debug log and automatically deletes the manifest file, then creates a brand new empty one. Any files that were previously noted in the deleted manifest are no longer tracked, so they become orphaned in the folder. Not an ideal solution, but now you’re less likely to run out of disk space due to a corrupt manifest. That’s the downside to using a non-transactional file like XML: if there’s a disk hiccup, voltage gremlin, or trans-dimensional rift, you get incomplete tags.
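A rough way to see whether a folder has outgrown its quota is to add up what is actually on disk and compare. The Python below is a minimal sketch, not a DFSR tool: the function names and the example path are hypothetical, it counts every file it finds (including the manifest itself), and it assumes a megabyte of quota means 1024 × 1024 bytes, so DFSR's own accounting may differ a bit.

```python
import os

DEFAULT_QUOTA_MB = 660  # default ConflictAndDeleted quota mentioned above


def folder_size_bytes(path):
    """Total size in bytes of all files under `path`, including subfolders."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            try:
                total += os.path.getsize(os.path.join(root, name))
            except OSError:
                # File vanished or is unreadable; skip it.
                continue
    return total


def exceeds_quota(path, quota_mb=DEFAULT_QUOTA_MB):
    """True if the folder holds more data than the quota allows."""
    # Assumption: 1 MB of quota = 1024 * 1024 bytes.
    return folder_size_bytes(path) > quota_mb * 1024 * 1024


if __name__ == "__main__":
    # Hypothetical replicated folder path; substitute your own.
    folder = r"D:\Data\DfsrPrivate\ConflictAndDeleted"
    print(folder, "over quota:", exceeds_quota(folder))
```

A folder that reports well over quota while its manifest looks tiny or brand new is a decent hint that the orphaning described above has happened.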

I bet a bunch of DFSR admins are now checking their ConflictAndDeleted folders…

Aha, there’s that spreadsheet I was looking for… eww, it’s got eggshell goop on it.

Other Stuff

Black Hat put up their 2011 USA presentations; make sure you browse around. The ones I found most interesting (and that include a whitepaper, slide deck, or video):

- How a Hacker Has Helped Influence the Government - and Vice Versa (the writer of L0phtcrack talks about being a PM at DARPA)

- Corporate Espionage for Dummies: The Hidden Threat of Embedded Web Servers (endless web servers you didn’t even know you had running)

- Killing the Myth of Cisco IOS Diversity: Towards Reliable, Large-Scale Exploitation of Cisco IOS (he who controls the spice, controls the universe!)

- Easy and quick vulnerability hunting in Windows (he points out how to examine your vendor apps carefully, as your vendor often isn’t)

- Faces Of Facebook-Or, How The Largest Real ID Database In The World Came To Be (or: the reason Ned does not use social media)

- OAuth – Securing the Insecure (or: the other reason Ned does not use social media)

- Battery Firmware Hacking (Good lord, start FIRES?!)

- Hacking Medical Devices for Fun and Insulin: Breaking the Human SCADA System (Never mind fires, hacking people into diabetic comas!)

There were several Apple and iOS pwnage talks too. I don’t care about those but you might, especially if you’re the new “owner” of those unmanaged boxes in your environment, thanks to the Sales Borgs wanting iPads for no legitimate reason… Another hidden cost of “IT consumerization”.

A few people asked for a DOCX copy of the Accelerating Your IT Career post. Grab it here.

Free Artificial Intelligence class online from a Research Professor of Computer Science at Stanford University. Looks neato for the price.

Did you watch the Futurama season finale last night? The badly dubbed manga was a gigantic trough of awesome. I was right next to Katey Sagal at my hotel at Comic-Con. She is teeny.

Space.com has a terrific infographic of the 45 years of Star Trek.

Source: SPACE.com: All about our solar system, outer space and exploration

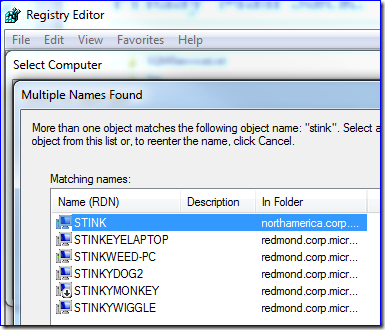

At Microsoft, you name your own computers and dogfooding means you can join as many to the domain as you like. My user domain alone has 58,420 computers and it’s a “small” one in our forest, so trying to control machine names is counterproductive even for bureaucratabots. I have a test server called Stink, and yesterday I needed to remote its registry. When I typed in the name, I found I wasn't the first to think of smelly nomenclature:

For MarkMoro and all those like him, the 10 best ’80s cop show opening credits (warning: a couple sweary words in the text, but all movies totally SFW; this is old American network TV, after all).

Finally, the best email conversation thread of last month:

Jonathan: Darn you, Ned. I lost track of an hour on this ConceptRobots site.

Ned: I should get a commission from the sites I push in Friday mail sacks.

Jonathan: Yes… you wield your influence so adroitly.

Ned: Or was it… androidly? ahahHAHAHAHAHAHHAHAHAHHAHAHAHA

Ha.

Jonathan: I'm going to destroy your cubicle when you go to lunch.

Ned: That's fair.

Have a great weekend, folks.

Ned "still has a thing for Stefanie Powers" Pyle