Replacing DFSR Member Hardware or OS (Part 4: Disk Swap)

Hello folks, Ned here again. Previously I covered how to use an N+1 server placement method to migrate an existing DFSR environment to new hardware or operating system. Now I will show you how to replace servers in an existing Replication Group using the disk swap method.

Make sure you review the first three blog posts before you continue:

- Replacing DFSR Member Hardware or OS (Part 1: Planning)

- Replacing DFSR Member Hardware or OS (Part 2: Pre-seeding)

- Replacing DFSR Member Hardware or OS (Part 3: N+1 Method)

Background

The “data disk swap” method lets a new file server replace an old one without new storage, because it re-uses the existing data disks. It usually implies a SAN or NAS storage back end, since local data disks are typically in a RAID configuration that is difficult to move intact between servers. A single data disk or a RAID-1 mirror is, naturally, relatively easy to transfer.

Because the DFSR data never has to be replicated or copied to the new replacement server, pre-seeding comes for free. The downside compared to N+1 is an interruption to replication (and perhaps to user access) for as long as it takes to move the disks and reconfigure replication and file shares on the new node. So while the cost savings are significant, this method carries more risk and downtime.

Because a new server replaces the old one in the disk swap method, you can deploy new hardware and a later OS; no reinstallation or in-place upgrade is necessary. The old server can be safely repurposed (or returned, if leased). DFSR supports renaming the new server to the old name, although this may not be necessary if DFS Namespaces are in use.

Requirements

For each computer being replaced, you need the following:

- A replacement server.

- If replacing a server with a cluster, two or more replacement servers will be required (this is typically only done on the hub servers).

- A full backup with bare metal restore capability is highly recommended for each server being replaced. A System State backup of at least one DC in the domain hosting DFSR is also highly recommended.

Repro Notes

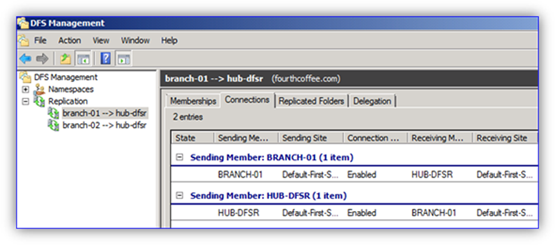

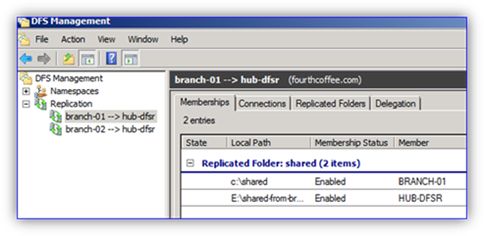

In my sample below, I have the following configuration:

- There is one Windows Server 2003 R2 SP2 hub (HUB-DFSR) using a dedicated data drive provided by a SAN over Fibre Channel.

- There are two Windows Server 2003 R2 SP2 spokes (BRANCH-01 and BRANCH-02) that act as branch file servers.

- Each spoke is in its own replication group with the hub. Replication is used for data collection: the hub holds a copy of the user files so they can be backed up centrally, and so the data stays available if a branch file server goes offline for an extended period.

- DFS Namespaces are generally used to access data, but some staff connect to their local file servers by server name out of habit or lack of training.

- The replacement computer is running Windows Server 2008 R2 with the latest DFSR hotfixes installed, including KB2285835.

I will replace the hub server with my new Windows Server 2008 R2 cluster and make it read-only to prevent accidental changes in the main office from ever overwriting the branch office’s originating data. Note that whenever I say “server” in the steps you can use a Windows Server 2008 R2 DFSR cluster.

Procedure

Note: this should be done off hours, in a change control window, to minimize user disruption. If the hub server is being replaced, there is no interruption to user data access. If a branch server that users access is being replaced, however, the interruption may last several hours while the new server is swapped in. Replication, even with pre-seeding, may take substantial time to converge when there is a large amount of data whose file hashes must be checked.

1. Inventory the file servers being replaced during the migration. Note down server names, IP addresses, shares, replicated folder paths, and the DFSR topology. You can use IPCONFIG.EXE, NET SHARE, and DFSRADMIN.EXE to automate these tasks. DFSMGMT.MSC can be used for all DFSR operations.
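A minimal Python sketch of that inventory, assuming it runs elevated on the old server with DFSRADMIN.EXE available. The `dfsradmin` subcommands used here (`rg list`, `rf list`, `membership list`, and `conn list` with `/rgname:`) are assumptions based on the 2003 R2/2008 R2 tool, so confirm them with `dfsradmin /?` first. The replication group names are hypothetical placeholders.

```python
import subprocess


def inventory_commands(rg_names):
    """Build the commands whose output documents the server being replaced."""
    cmds = [
        ["ipconfig", "/all"],          # server name, IP addresses, DNS settings
        ["net", "share"],              # share names and local paths
        ["dfsradmin", "rg", "list"],   # replication groups known in AD
    ]
    for rg in rg_names:
        # Assumed dfsradmin syntax: replicated folders, per-member local
        # paths, and connections (the topology) for each replication group.
        cmds.append(["dfsradmin", "rf", "list", f"/rgname:{rg}"])
        cmds.append(["dfsradmin", "membership", "list", f"/rgname:{rg}"])
        cmds.append(["dfsradmin", "conn", "list", f"/rgname:{rg}"])
    return cmds


def run_inventory(rg_names, out_file="inventory.txt"):
    """Run every command (Windows only) and save the combined output to one file."""
    with open(out_file, "w", encoding="utf-8") as fh:
        for cmd in inventory_commands(rg_names):
            result = subprocess.run(cmd, capture_output=True, text=True)
            fh.write(f"==== {' '.join(cmd)} (exit code {result.returncode})\n")
            fh.write(result.stdout + result.stderr + "\n")
```

For example, `run_inventory(["RG-BRANCH-01", "RG-BRANCH-02"])` (hypothetical group names) before anything is changed, keeping `inventory.txt` with your change record.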

2. Stop further user access to the old file server by removing the old shares.

Note: Stopping the Server service with the command NET STOP LANMANSERVER also prevents access to all shares until the service is started again.

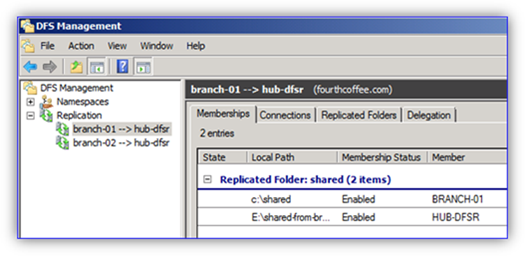

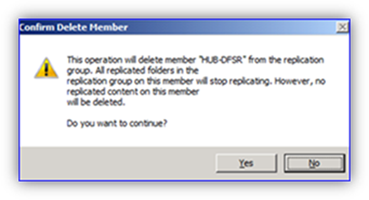

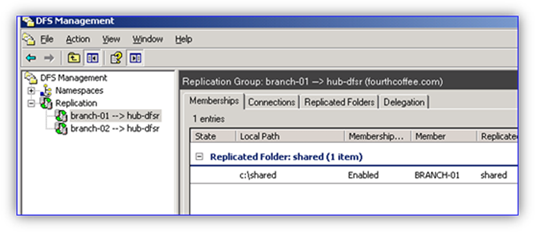

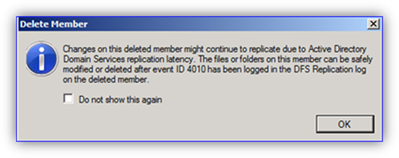

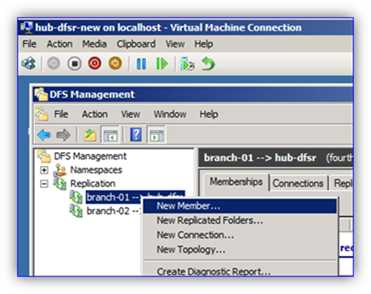
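If you prefer to script step 2, here is a sketch (hypothetical helper names; share names come from your step 1 inventory) that builds `NET SHARE <name> /DELETE` commands and skips administrative shares ending in `$`. It only prints the commands unless `dry_run=False`. The `/y` switch on `NET STOP`, which answers the dependent-services prompt, is widely used but is an assumption worth checking on your build.

```python
import subprocess


def stop_access_commands(share_names, stop_server_service=False):
    """Build commands that cut off user access to the old file server."""
    cmds = []
    for name in share_names:
        if name.endswith("$"):
            # Leave administrative shares such as C$, ADMIN$ and IPC$ alone.
            continue
        cmds.append(["net", "share", name, "/delete"])
    if stop_server_service:
        # Alternative from the note above: stopping the Server service takes
        # every share offline. /y (assumed) answers the dependent-services prompt.
        cmds.append(["net", "stop", "lanmanserver", "/y"])
    return cmds


def run(cmds, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in cmds:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)
```

Running it as a dry run first gives you a reviewable list to paste into the change record.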

3. Remove the old server from DFSR replication by deleting it as a member of every replication group it belongs to. In DFSMGMT.MSC, this is done on the Membership tab by right-clicking the old server and selecting “Delete”.

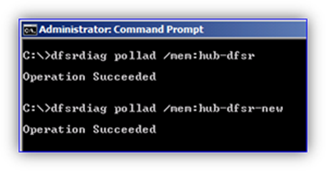
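The same deletion can be scripted with DFSRADMIN. This sketch assumes the `dfsradmin member delete /rgname:<group> /memname:<member>` syntax and only builds the commands for review; the group names are placeholders, and some environments may expect the member as DOMAIN\NAME.

```python
def remove_member_commands(member, rg_names):
    """One 'dfsradmin member delete' per replication group the old server is in."""
    return [
        ["dfsradmin", "member", "delete", f"/rgname:{rg}", f"/memname:{member}"]
        for rg in rg_names
    ]
```

For the sample hub, `remove_member_commands("HUB-DFSR", ["RG-BRANCH-01", "RG-BRANCH-02"])` yields one command per group, matching the “all replication groups” requirement.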

4. Optional, but recommended: use REPADMIN /SYNCALL to force AD replication and DFSRDIAG POLLAD to make DFSR poll AD for the configuration change sooner. This matters most in a widely distributed environment.
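A sketch of step 4 that builds the commands in the order they should run: AD replication first, then a DFSR poll on each affected member. It assumes REPADMIN runs where the AD DS tools are installed; the member names are the sample servers from this post.

```python
def force_sync_commands(members):
    """AD replication first, then ask each DFSR member to poll AD now."""
    # /syncall /AdeP: all partitions (A), report servers by DN (d),
    # across all sites (e), pushing changes out from this DC (P).
    cmds = [["repadmin", "/syncall", "/AdeP"]]
    # Without an explicit poll, DFSR only sees the change at its next scheduled AD poll.
    cmds += [["dfsrdiag", "pollad", f"/member:{m}"] for m in members]
    return cmds
```

Ordering matters: polling before the change has reached the member's DC just returns the old configuration.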

5. When the server being removed has logged a DFSR 4010 event for every replication group (RG) it participated in, you can disconnect the previously replicated storage from that computer.

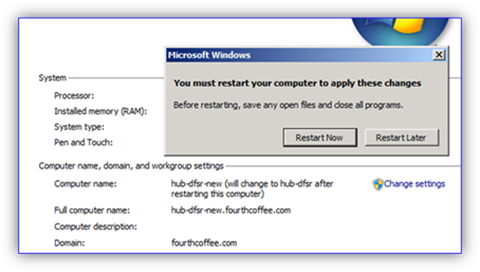
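Checking for event 4010 across several replication groups by eye is error-prone, so here is a sketch that reads the DFS Replication log with WEVTUTIL and reports which groups have not logged it yet. It assumes the 4010 event text contains a `Replication Group Name: <name>` line; compare against one of your own 4010 events before relying on it.

```python
import re
import subprocess


def query_dfsr_events(event_id):
    """Return DFS Replication events with the given ID as text (Windows only)."""
    result = subprocess.run(
        ["wevtutil", "qe", "DFS Replication",
         f"/q:*[System[(EventID={event_id})]]", "/f:text"],
        capture_output=True, text=True, check=True)
    return result.stdout


def groups_missing_4010(event_text, rg_names):
    """Replication groups for which no event 4010 has been logged yet."""
    # Assumed event layout: one "Replication Group Name: <name>" line per event.
    seen = {name.strip().lower()
            for name in re.findall(r"Replication Group Name:\s*(.+)", event_text)}
    return sorted(rg for rg in rg_names if rg.lower() not in seen)
```

On the old server, `groups_missing_4010(query_dfsr_events(4010), ["RG-BRANCH-01", "RG-BRANCH-02"])` (hypothetical names) should return an empty list before you disconnect the storage.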

6. Rename the replacement server to the old server name. Change the IP address to match the old server.

Note: This step is not strictly necessary, but is provided as a best practice. Applications, scripts, users, or other computers may reference the old computer by name or IP address even when DFS Namespaces are in use. If your IT policy forbids using server names and IP addresses instead of DFSN (a recommended policy to have in place), do not change the name and IP information; this will expose any incorrectly configured systems. Using an IP address is especially discouraged, because it means Kerberos is not being used for authentication.
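If you do reuse the old identity, both changes can be scripted. This sketch assumes the old server is already offline (or renamed) so its name and address are free, that `netdom.exe` is present (it ships with the AD DS tools), and it uses netsh's positional `set address` form. The current name, addresses, and adapter name are hypothetical. Addresses are validated with Python's `ipaddress` module before any command is built.

```python
import ipaddress


def identity_commands(current_name, old_name, interface, ip, mask, gateway):
    """Commands that give the replacement server the old server's IP and name."""
    # Raises ValueError on a malformed address, gateway, or subnet mask.
    ipaddress.IPv4Address(ip)
    ipaddress.IPv4Address(gateway)
    ipaddress.IPv4Network(f"{ip}/{mask}", strict=False)
    return [
        # Static IPv4 address, mask and default gateway on the named adapter.
        ["netsh", "interface", "ipv4", "set", "address", interface,
         "static", ip, mask, gateway],
        # Rename in the domain; the new name takes effect after the restart.
        ["netdom", "renamecomputer", current_name, f"/newname:{old_name}", "/reboot"],
    ]
```

Set the IP first and rename last, since the rename restarts the server.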

7. Bring the new replacement server or cluster online and attach the old storage. Verify files are accessible at this point before continuing with DFSR-specific steps.

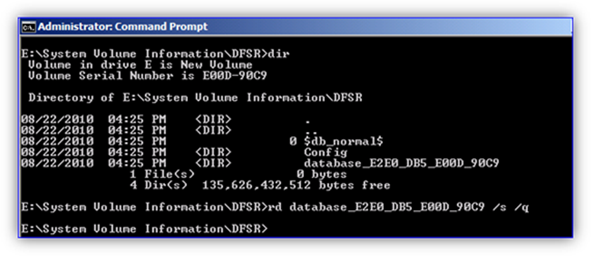

Note: each volume on the newly attached storage still holds the previous DFSR configuration information locally, including a database and other folders:

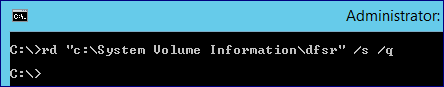

8. Remove the old DFSR configuration folder before configuring DFSR on the replacement server. You must delete it from a CMD prompt with the RD command, because Windows Explorer does not allow file deletion in this folder:

RD "<drive>:\system volume information\DFSR" /s /q

Critical note: this step is necessary to prevent the DFSR service from using the previous database, log, or configuration data on the new server, which could overwrite data incorrectly or cause spurious conflicts. It must not be skipped.

It is also important to note that the "\system volume information" folder is ACLed to allow access only to SYSTEM, but all of its subfolders are ACLed with the built-in Administrators group. If you want to browse or DIR the SVI folder directly, you must take ownership of it and then add permissions for yourself. If you only want to delete its contents, you can do so directly, thanks to the Bypass Traverse Checking privilege.

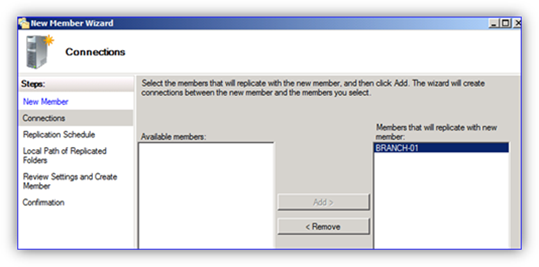
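For a replacement node with several re-attached volumes, this sketch builds one RD command per drive letter. RD is a CMD built-in, so it has to be run through `cmd /c`. The helper name and drive letters are illustrative; review the output before running it, since `/s /q` deletes without prompting.

```python
import string


def rd_dfsr_commands(drive_letters):
    """Build 'rd /s /q' commands for each re-attached volume's DFSR folder."""
    cmds = []
    for letter in drive_letters:
        letter = letter.rstrip(":").upper()
        if len(letter) != 1 or letter not in string.ascii_uppercase:
            raise ValueError(f"not a drive letter: {letter!r}")
        path = f"{letter}:\\System Volume Information\\DFSR"
        # RD is built into cmd.exe, so it must run via "cmd /c".
        cmds.append(["cmd", "/c", "rd", path, "/s", "/q"])
    return cmds
```

Passing the list to `subprocess.run` lets Python quote the path with spaces correctly, avoiding the curly-quote problem that breaks copy-pasted commands.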

9. Add the new server as a new member of the first replication group if this server is a hub being replaced and is to be considered non-authoritative for data.

Note: For steps on using DFSR clusters, reference:

- Deploying DFS Replication on a Windows Failover Cluster – Part I

- Deploying DFS Replication on a Windows Failover Cluster – Part II

- Deploying DFS Replication on a Windows Failover Cluster – Part III

Critical note: if this server holds the originating copy of user data (such as a branch server where all data is created or modified), delete the entire existing replication group and recreate it with this new server specified as the PRIMARY member. Failure to follow this step may lead to data loss, because a server added to an existing RG is always non-authoritative for all data and will lose any conflicts.

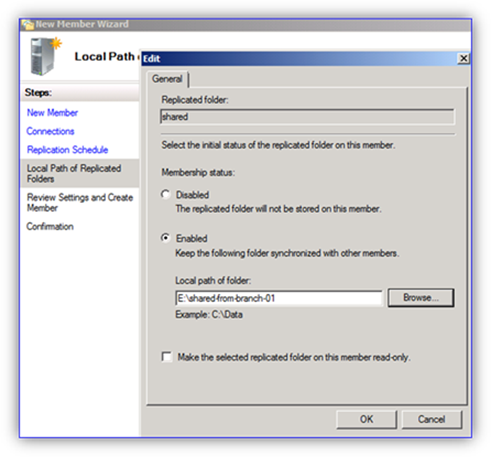

10. Select the previously replicated folder path on the replacement server.

Note: Optionally, you can make this a Read-Only replicated folder if running Windows Server 2008 R2. This must not be done on a server where users are allowed to create data.
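The choice in steps 9 and 10 comes down to a few yes/no questions. This hypothetical helper encodes them as described above: a server holding the originating data gets a recreated RG with itself as PRIMARY, any other server joins as a non-authoritative member, and read-only is refused unless the server runs Windows Server 2008 R2 and users do not create data on it. It returns a checklist rather than configuring anything.

```python
def plan_membership(originates_data, users_create_data, is_2008_r2, want_read_only=False):
    """Return the DFSR configuration checklist for the replacement server."""
    if originates_data:
        # The originating copy must win initial sync, so it must be PRIMARY.
        steps = ["delete the replication group",
                 "recreate it with this server as PRIMARY"]
    else:
        # A member added to an existing RG is non-authoritative and loses conflicts.
        steps = ["add this server as a new member of the existing replication group"]
    steps.append("select the previously replicated folder path on the re-attached disk")
    if want_read_only:
        if not is_2008_r2:
            raise ValueError("read-only replicated folders need Windows Server 2008 R2")
        if users_create_data:
            raise ValueError("never make a folder read-only where users create data")
        steps.append("mark the replicated folder read-only")
    return steps
```

For the sample hub, `plan_membership(False, False, True, want_read_only=True)` produces the add-member path with a read-only folder.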

11. Complete the replication configuration. Because the re-used disk already holds all of the old server’s data, the new member is pre-seeded and replication should finish much faster than if everything had to be copied from scratch.

12. Force AD replication and DFSR polling.

13. When the server logs DFSR event 4104 (if it was added as non-authoritative), initial replication is complete.

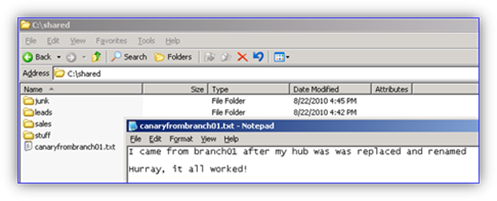
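Waiting for event 4104 can be automated with a simple poll. This sketch uses a WEVTUTIL query against the DFS Replication log and treats any 4104 as success, which is only reliable when the server has a single replicated folder; 4104 is logged per replicated folder, so check each one when there are several. The `sleep` and `clock` parameters are injectable so the loop can be tested without waiting.

```python
import subprocess
import time


def has_dfsr_event(event_id):
    """True if the DFS Replication log holds at least one event with this ID."""
    out = subprocess.run(
        ["wevtutil", "qe", "DFS Replication",
         f"/q:*[System[(EventID={event_id})]]", "/c:1", "/f:text"],
        capture_output=True, text=True, check=True).stdout
    return bool(out.strip())


def wait_for(check, timeout_s=4 * 3600, interval_s=300,
             sleep=time.sleep, clock=time.monotonic):
    """Call check() until it returns True, or return False once timeout_s has elapsed."""
    deadline = clock() + timeout_s
    while True:
        if check():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)
```

On the replacement server, `wait_for(lambda: has_dfsr_event(4104))` checks every five minutes for up to four hours; raise the timeout for large data sets, since hashing pre-seeded files still takes time.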

14. Verify that servers are replicating correctly by creating a propagation test file using DFSRDIAG.EXE PropagationTest or DFSMGMT.MSC’s propagation test.
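From the command line, the propagation test is two DFSRDIAG calls: one creates the test file, the other reports where it has arrived. This sketch assumes the `/rgname:`, `/rfname:`, `/testfile:` and `/reportfile:` parameters; confirm them with `dfsrdiag propagationtest /?`. The group, folder, and file names are placeholders.

```python
def propagation_commands(rg, rf, test_file, report_file):
    """Create a propagation test file, then report on which members received it."""
    return [
        ["dfsrdiag", "propagationtest", f"/rgname:{rg}", f"/rfname:{rf}",
         f"/testfile:{test_file}"],
        # Run the report only after allowing time for the file to replicate.
        ["dfsrdiag", "propagationreport", f"/rgname:{rg}", f"/rfname:{rf}",
         f"/testfile:{test_file}", f"/reportfile:{report_file}"],
    ]
```

Using a distinctive test file name per run keeps reports from successive tests apart.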

15. Grant users access to their data by configuring shares to match what was used previously on the old server.

Note: This step is recommended after replication, to avoid added complexity and user data changing while initial sync is in progress. If business continuity requires it, shares can instead be made available as soon as the replacement server is brought online.
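Shares can be recreated from the step 1 inventory. The sketch below (hypothetical `Share` type and account names) builds `NET SHARE name=path /GRANT:account,permission` commands. Only share permissions need recreating here: NTFS permissions are stored on the re-attached volume and came across with the disk.

```python
from dataclasses import dataclass, field

PERMISSIONS = {"READ", "CHANGE", "FULL"}


@dataclass
class Share:
    name: str
    path: str
    grants: list = field(default_factory=list)  # (account, permission) pairs


def share_command(share):
    """Build a 'net share' command that recreates one share from the inventory."""
    cmd = ["net", "share", f"{share.name}={share.path}"]
    for account, permission in share.grants:
        permission = permission.upper()
        if permission not in PERMISSIONS:
            raise ValueError(f"unknown share permission: {permission}")
        # Share-level permission only; NTFS ACLs already live on the volume.
        cmd.append(f"/grant:{account},{permission}")
    return cmd
```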

16. Add the new server as a DFSN link target if necessary or part of your design. Again, it is recommended that file servers be accessed by DFS namespaces rather than server names. This is true even if the file server is the only target of a link and users do not access the other hub servers replicating data.
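Adding the link target can also be scripted. This sketch assumes the Windows Server 2008 R2 `dfsutil target add <link> <target>` syntax and skips the change when the target already exists, which is the usual case when the new server took over the old name. The namespace and server paths are hypothetical.

```python
def target_add_command(link_path, new_target, existing_targets):
    """Return a dfsutil command that adds new_target, or None if it is already a target."""
    for path in (link_path, new_target):
        if not path.startswith("\\\\"):
            raise ValueError(f"not a UNC path: {path}")
    if new_target.lower() in (t.lower() for t in existing_targets):
        # e.g. the server kept the old name, so the existing target still resolves.
        return None
    return ["dfsutil", "target", "add", link_path, new_target]
```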

17. The process is complete.

Final Notes

A data disk swap DFSR migration is less recommended than the N+1 method, because it causes a significant replication outage. During that time, the latest data may not be available on the hubs for backup. There is also significant opportunity for human error to make the outage much longer than necessary. With certain local disk configurations (such as RAID-5), this method may not be available to administrators at all.

On the other hand, this process can be logistically and financially more feasible for many customers, and it still offers straightforward steps with optimal performance thanks to inherent pre-seeding. Together, these factors make the disk swap method less recommended, but still a viable DFSR node replacement strategy.

Series Index

- Replacing DFSR Member Hardware or OS (Part 1: Planning)

- Replacing DFSR Member Hardware or OS (Part 2: Pre-seeding)

- Replacing DFSR Member Hardware or OS (Part 3: N+1 Method)

- Replacing DFSR Member Hardware or OS (Part 4: Disk Swap)

- Replacing DFSR Member Hardware or OS (Part 5: Reinstall and Upgrade)

- Ned “swizzle” Pyle