Friday Mail Sack: Scooter Edition

Hi folks, Ned here again. It’s that time where we look back on the more interesting questions and comments the DS team got this week. Today we talk about FRS, AD Users and Computers, Load-Balancers, DFSR, DFSN, AD Schema extension, virtualization, and Scott Goad.

Let’s ride!

- FRS event 13568 recommendations

- Missing attribute editor in ADUC Find

- DCs and load balancers

- Cross-file RDC behavior

- DFS out of site referrals due to WAN appliances

- Testing AD Schema extension

- VMware is expensive

Question

If you get a journal wrap when using FRS, there is an event 13568 like so:

Event Type: Warning

Event Source: NtFrs

Event Category: None

Event ID: 13568 Date: 12/12/2001

Time: 2:03:32 PM

User: N/A

Computer: DC-01

Description:

The File Replication Service has detected that the replica set " 1 " is in JRNL_WRAP_ERROR.

<snipped out>

Setting the "Enable Journal Wrap Automatic Restore" registry parameter to 1 will cause the following recovery steps to be taken to automatically recover from this error state.

But when I review KB292438 (Troubleshooting journal_wrap errors on Sysvol and DFS replica sets) it specifically states:

Important Microsoft does not recommend that you use this registry setting, and it should not be used post-Windows 2000 SP3. Appropriate options to reduce journal wrap errors include:

- Place the FRS-replicated content on less busy volumes.

- Keep the FRS service running.

- Avoid making changes to FRS-replicated content while the service is turned off.

- Increase the USN journal size.

So which is it?

Answer

The KB is correct, not the event log message. If you enable the registry setting you can get caught in a journal wrap recovery “loop” where the root cause keeps happening and getting fixed, but then happens again immediately and gets fixed, and so on: replication may sort of work – inconsistently – and you are just masking the greater problem. You should be fixing the real cause of the journal wraps.

As to why this message is still there after 10 years and four operating systems? Inertia and our unwillingness to incur the test/localization cost of changing the event. When you have to rewrite something in all these regions and languages, the price really adds up. I am way more likely to get a bug fix from the product group that changes complex code than one that changes some text.

Question

I was wondering if it is intentional that the "attribute editor" tab is not visible when you use "Find" on an object in AD Users and Computers?

Answer

Ughh. Nope, that’s a known issue. Unfortunately for you, the business justification to fix it was not convincing. This happens in Win2008/Vista also and no Premier customer has ever put up a real struggle.

However, you have another option: Use the “Find” in ADAC (aka AD Admin Center, aka DSAC.EXE). This lets you find and when you open those users, you will see the attribute editor property sheet. If everyone here hasn’t already figured it out, ADAC is the future due to its PowerShell integration and ADUC doesn’t appear to be getting any further love.

Question

Are there any issues with putting DC’s behind load-balancers?

Answer

If you put a domain controller behind a load balancer you will often find that LDAP/S or Kerberos authentication fail. Keep in mind that SPN’s can only be associated to one computer account, so Kerberos is going to go kaput. You will have to issue certificates manually to the domain controllers if you are trying to do LDAP/S connectivity because the subject and subject alternative name needs to match the DNS name of the load-balanced address.

Domain controllers are load balanced already in that there are multiples of them. If you need to find a domain controller correctly your application should do a DCLocator or LDAP SRV record lookup like a proper citizen.

Answer courtesy of Rob “Sasquatch” Greene, our tame authentication yeti.

Question

The documentation on DFSR's cross-file RDC is pretty unclear – do I need two Enterprise Edition servers or just one? Also, can you provide a bit more detail on what cross-file RDC does?

Answer

Just one of the two servers in a given partnership – i.e. replicating with DFSR connections – needs to be running Enterprise Edition in order to have both servers use cross-file RDC. Proof. There is no difference in DFSR in Standard Edition versus Enterprise Edition code; once the servers agree that at least one of them is Enterprise, both will use cross-file RDC. Otherwise, anytime you got a hotfix from us there’d be one for each edition, right? But there never are: https://support.microsoft.com/kb/968429 (and yes, this article has gotten a bit out of sync with reality, we’re working on that.)

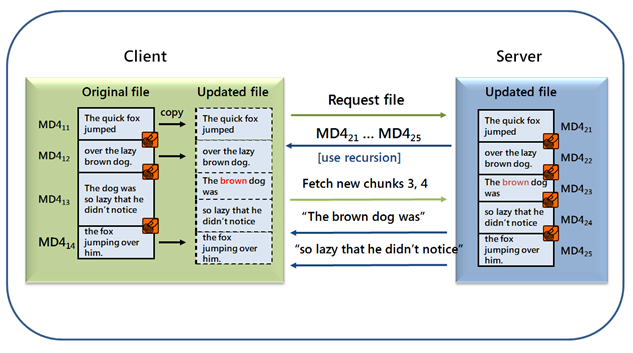

As for what Cross-File RDC does: if you are already familiar with normal Remote Differential Compression, you understand that it takes a staged and compressed copy of a file and creates MD-4 signatures based on “chunks” of files:

This means that when a file is altered (even in the middle), we can efficiently see which signatures changed and then just send along the matching data blocks. So a doc that’s 50MB that changes one paragraph only replicates a few KB. An overall SHA-1 hash is used for the entire file - to include attributes, security info, alternate data streams etc. - as a way to know that two files match perfectly or not. DFSR can also make signatures of signatures, up to 8 levels deep, to more efficiently handle very large changes in a big file.

Cross-file RDC takes this slightly further: by using a special hidden sparse file (located in <drive>:\system volume information\dfsr\similaritytable_1) to track all these signatures, we can use other similar files that we already have to build our copy of a new file locally. Up to five of these similar files can be used. So if an upstream server says “I have file X and here are its RDC signatures”, we the downstream server can say “ah, I don’t have that file X. But I do have files Y and Z that have some of the same signatures, so I’ll grab data from them locally and save you having to transmit it to me over the wire.” Since files are often just copies of other files with a little modification, we gain a lot of over-the-wire efficiency and minimize bandwidth usage.

Slick, eh?

Question

I’m seeing DFS namespace clients going out of site for referrals. I’ve been through this article “What can cause clients to be referred to unexpected targets.” Is there anything else I’m missing?

Answer

There has been an explosion of so-called “WAN optimizer” products in the past few years and it seems like everyone’s buying them. The devices can be very problematic to DFS namespace clients, as the devices tend to use Network Address Translation (NAT). This means that they change the IP header info on all your SMB packets to match the subnets of the appliance endpoints – and that means that when DFS tries to figure out your subnet to give you the nearest targets, it gets the subnet of the WAN appliance, not you. So you end up using DFS targets in a totally different site, defeating the purpose of DFS in the first place – a WAN de-optimizer. :)

A double-sided network capture will show this very clearly – packets that leave one computer will arrive at your DFS root server with a completely different IP address. Reconfigure the WAN appliance not to do this or contact their vendor about other options.

Question

I have created/purchased a product that will extend my active directory schema. Since it was not made or tested by Microsoft, I am understandably nervous that I am about to irrevocably destroy my AD universe. How can I test out the LDF file(s) that will be modifying my schema to ensure it is not going to ruin my weekend?

Answer

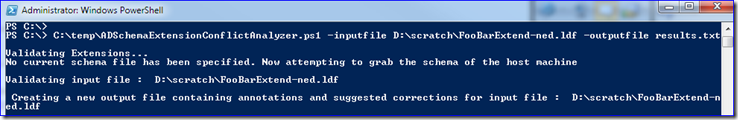

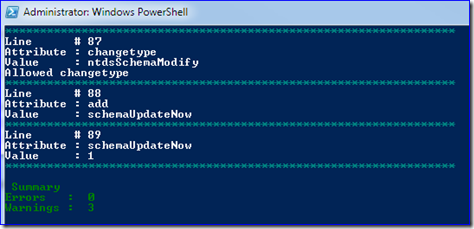

What you need is the free AD Schema Extension Conflict Analyzer. This script can be run anywhere you have installed PowerShell 2.0 and does not require you to use AD PowerShell (for all you late bloomers that have not yet rolled out Win7/R2).

All you do is point this script at your LDF file(s) and your AD schema and let it decide how things look:

set-executionpolicy unrestricted

C:\temp\ADSchemaExtensionConflictAnalyzer.ps1 -inputfile D:\scratch\FooBarExtend-ned.ldf -outputfile results.txt

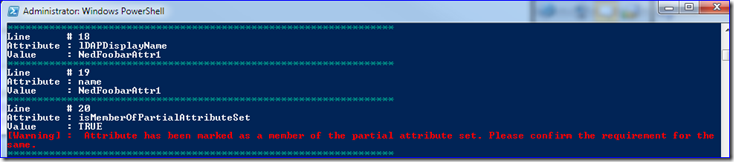

It will find syntax errors, mismatched attribute data types, conflicting objects, etc. plus give advice. Like here it warned me that my new attributes will be in the Global Catalog (in the “partial attribute set”). The script makes no changes to your production forest at all, but if you’re nervous anyway you can export your production schema with:

ldifde.exe –f myschema.ldf –d cn=schema,cn=configuration,dc=contoso,dc=com

… and have the script just compare the two files (if you’re paying attention you’ll see it call LDIFDE in a separate console window already though. You big baby.).

Question

I <blah blah blah> Windows <blah blah blah> running on VMWare.

Answer

You must be made of money, Jack. You’re already paying us for the OS you’re running everywhere. Then instead of using our free hypervisor and way less expensive management system you’re paying someone else a bunch of dough.

- https://www.microsoft.com/virtualization/en/us/cost-advantage.aspx

- https://www.microsoft.com/virtualization/en/us/datacenter-advantage.aspx

“But Ned, we want dynamic memory usage, Linux support, and instantaneous guest migration between hosts”.

Ok:

- https://blogs.technet.com/search/SearchResults.aspx?q=dynamic%20memory§ions=5045

- https://www.microsoft.com/downloads/details.aspx?displaylang=en&FamilyID=eee39325-898b-4522-9b4c-f4b5b9b64551

- https://technet.microsoft.com/en-us/library/dd446679(WS.10).aspx

If you really want to give your CFO a coronary, try this link: https://www.microsoft.com/virtualization/en/us/cost-compare-calculator.aspx

Then while the EMT’s are working on him to start his ticker back up, take out your CIO with this:

Support policy for Microsoft software running in non-Microsoft hardware virtualization software https://support.microsoft.com/kb/897615/

… Microsoft will support server operating systems subject to the Microsoft Support Lifecycle policy for its customers who have support agreements when the operating system runs virtualized on non-Microsoft hardware virtualization software. This support will include coordinating with the vendor to jointly investigate support issues. As part of the investigation, Microsoft may still require the issue to be reproduced independently from the non-Microsoft hardware virtualization software.

This is more common that you might think, we find VMware-only issues all the time and our customer is now up a creek. There are troubleshooting steps - especially with debugging - that we simply cannot do at all due to the VMware architecture. Hence why you will need to reproduce on physical hardware or hyper-v, where we can gather data. Although when we find that it no longer repro’s off VMware… now what?

And of course, when all those VMware ESX servers stopped working for 2 days last year, their workaround could not be performed on DCs as it involved rolling back time. I know that sounds like schadenfreude, but when a customer’s DCs all go offline, we get called in even if it’s nothing to do with us - just ask me how it was when McAfee and CA decided to delete core Windows files. Spoiler alert: it blows.

I feel strongly about this…

Finally, I want to welcome Scott Goad to our fold – you have probably noticed that the KB/Blog aggregations have started again. If you look carefully you’ll see that Scott has taken that over from Craig Landis, who has moved on to getting us better equipped to support ADFS 2.0. Scott used to be a cop and he also has been working on those podcast pieces with Russ.

Welcome Scooter and thanks for all the hard work Craig!

- Ned “I’ll let you try my Clip-Tang style!” Pyle