Windows Server 2008 Failover Clusters: Networking (Part 2)

In Part 1, I discussed Windows Server 2008 Failover Cluster networking features. In this segment, I will discuss implementing networks in a Failover Cluster.

Implementing networks in support of Failover Clusters

The main consideration when designing Failover Cluster networks is to ensure there is built-in redundancy for cluster communications. This is typically accomplished by installing a minimum of two physical Network Interface Cards (NICs) in each node that will be part of the cluster. These cards must be supported by two separate and distinct buses (e.g., two PCI NICs). Many people think a single multi-port NIC meets this requirement. It does not, because this configuration creates a single point of failure for all cluster communications. The best configuration is two multi-port NICs running on separate buses, with fault tolerance implemented by way of NIC Teaming software (provided by third-party vendors) and with the NICs physically connected to separate network switches.

Note: NIC Teaming is not supported on iSCSI connections. Please review the iSCSI Cluster Support: Frequently Asked Questions. The appropriate fault-tolerant mechanism for iSCSI connectivity would be multi-path software. Please review the Microsoft Multi-path I/O: Frequently Asked Questions.

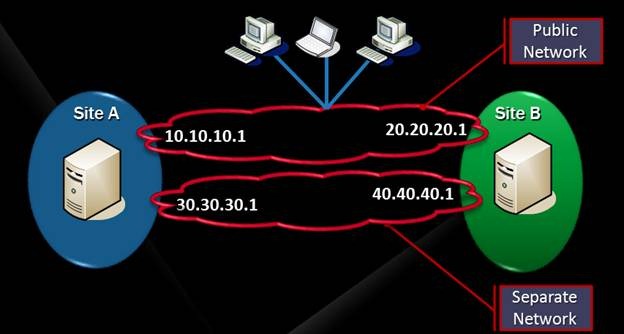

There are two primary design scenarios when planning for Failover Cluster network connectivity. In the first scenario (and the most common), all nodes in the cluster are located on the same networks. In the second scenario, nodes in the cluster are located on separate and distinct routed networks (this is very common in multi-site cluster implementations). Figure 18 shows an example of the second scenario.

Figure 18: Multi-site cluster (network connectivity only)

Note: Although locating cluster nodes on separate, routed networks is supported, connecting nodes in a multi-site cluster using stretched Virtual Local Area Networks (VLANs) is still supported as well. This configuration places the nodes on the same network(s).

It is important in any cluster that no two NICs on the same node are configured to be on the same subnet. This is because the cluster network driver uses the subnet to identify networks; it will use the first one detected and ignore any other NICs configured on the same subnet on the same node. The cluster validation process will register a Warning if any network interfaces in a cluster node are configured to be on the same network. The only possible exception to this is iSCSI (Internet Small Computer System Interface) connections. If iSCSI is implemented in a cluster, and MPIO (Multi-Path Input/Output) is being used for fault-tolerant connections to iSCSI storage, then it is possible that the network interfaces could be on the same network. In this configuration, the iSCSI network should be configured in the Failover Cluster Manager so that the cluster does not use it for any cluster communications.

Note: Please consult the iSCSI Cluster Support: Frequently Asked Questions.
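
The same-subnet rule can be checked before running cluster validation. The following is a minimal sketch in Python, not part of any Microsoft tooling: the function name, adapter names, and addresses are all hypothetical, and it only models the rule described above (two NICs on one node sharing a subnet) rather than what the cluster validation process actually does internally.

```python
import ipaddress
from collections import defaultdict


def find_same_subnet_nics(nics):
    """Return the subnets that are shared by more than one NIC on a node.

    `nics` maps a (hypothetical) adapter name to an "address/prefix" string.
    The result maps each shared subnet to the sorted adapter names on it.
    """
    by_subnet = defaultdict(list)
    for name, cidr in nics.items():
        # ip_interface() accepts a host address with a prefix length and
        # exposes the network (subnet) that the address belongs to.
        subnet = ipaddress.ip_interface(cidr).network
        by_subnet[subnet].append(name)
    return {
        str(subnet): sorted(names)
        for subnet, names in by_subnet.items()
        if len(names) > 1
    }


# Hypothetical node: "Public" and "Backup" share a subnet, which the
# article says would draw a Warning from cluster validation.
node1 = {
    "Public": "192.168.1.10/24",
    "Private": "10.0.0.10/24",
    "Backup": "192.168.1.11/24",
}
conflicts = find_same_subnet_nics(node1)
print(conflicts)  # {'192.168.1.0/24': ['Backup', 'Public']}
```

An empty result means no two adapters on that node share a subnet. iSCSI adapters used with MPIO would be the one case where a reported conflict may be acceptable, as noted above.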

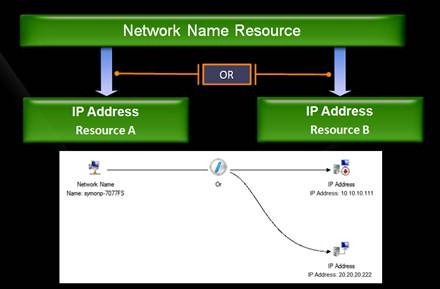

As previously mentioned, Windows Server 2008 accommodates cluster nodes being located on separate, routed networks by introducing new dependency logic, called OR logic, for IP Address resources. Figure 19 illustrates this.

Figure 19: IP Address Resource OR logic

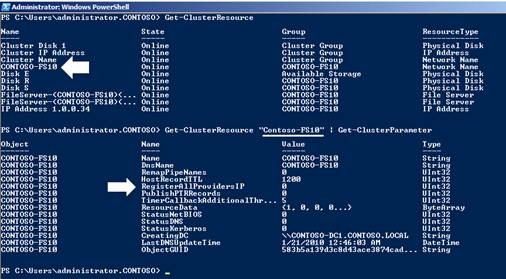

When a Network Name resource is configured with an OR dependency on more than one IP Address resource, at least one of the IP Address resources must be able to come Online before the Network Name resource can come Online. Since a Network Name resource can be associated with more than one IP Address, there is a property of the Network Name resource that can be modified so that DNS registrations occur for all of the IP Addresses. The property is called RegisterAllProvidersIP (see Figure 20).
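
To make the OR dependency concrete, here is a small illustrative model in Python. It is a sketch of the logic only: the function names, resource names, and state strings are assumptions made for this example, and nothing here calls the cluster service.

```python
def can_come_online_or(ip_states):
    """OR dependency: at least one IP Address resource must be Online."""
    return any(state == "Online" for state in ip_states.values())


def can_come_online_and(ip_states):
    """AND dependency, shown for contrast: every IP Address resource must be Online."""
    return all(state == "Online" for state in ip_states.values())


# Hypothetical multi-site cluster: the group is hosted on a Site A node,
# so only the Site A address can come Online on that node's subnet.
ip_states = {
    "IP Address (Site A)": "Online",
    "IP Address (Site B)": "Offline",
}

print(can_come_online_or(ip_states))   # True
print(can_come_online_and(ip_states))  # False
```

With an AND dependency, the Network Name could never come Online in this scenario, because the address for the other site's subnet cannot come Online on the current node. The OR dependency is what allows the name to follow the group between routed networks.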

Figure 20: Network Name resource properties

Note: In Figure 20 above, Failover Cluster PowerShell cmdlets were used to access cluster configuration information. This is new in Windows Server 2008 R2. For more information, review the TechNet Cmdlet Reference.

The default registration behavior is to register only the IP Address that can come Online on the node. Changing RegisterAllProvidersIP to 1 registers all of the IP Addresses instead, which can assist name resolution in a multi-site cluster scenario.
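
The two registration behaviors can be compared with a simplified model. This Python sketch only restates the rule described above. The function name, addresses, and state strings are hypothetical, the default value of 0 is assumed to be the counterpart of the value 1 mentioned above, and the real registration is performed by the cluster, not by code like this.

```python
def addresses_to_register(ip_resources, register_all_providers_ip=0):
    """Return the IP addresses that would be registered in DNS for a Network Name.

    `ip_resources` maps an address to its (hypothetical) resource state.
    With the assumed default of 0, only addresses that are Online are registered.
    With 1, every address the Network Name depends on is registered.
    """
    if register_all_providers_ip == 1:
        return list(ip_resources)
    return [addr for addr, state in ip_resources.items() if state == "Online"]


# Hypothetical two-site Network Name with one address per routed subnet.
ip_resources = {
    "10.1.1.50": "Online",   # subnet of the node currently hosting the group
    "10.2.2.50": "Offline",  # subnet at the other site
}

print(addresses_to_register(ip_resources))      # ['10.1.1.50']
print(addresses_to_register(ip_resources, 1))   # ['10.1.1.50', '10.2.2.50']
```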

Note: Please review KB 947048 for other things to consider when deploying failover cluster nodes on different, routed subnets (multi-site cluster scenario).

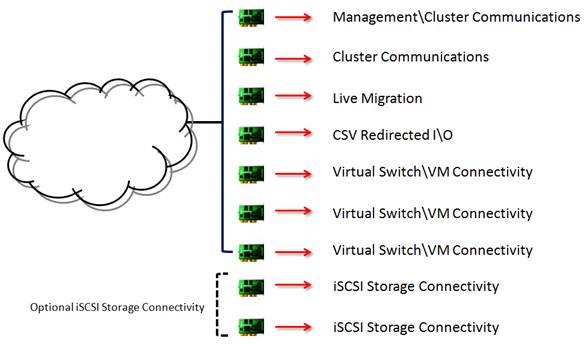

While Failover Clusters require a minimum of two NICs to provide reliable cluster communications, there are scenarios where more NICs may be desired and/or required based on the services or applications that are running in the cluster. One such scenario was already mentioned: iSCSI connectivity to storage. The other scenario involves Microsoft's virtualization technology, Hyper-V.

The integration of Failover Clustering with Hyper-V was introduced in Windows Server 2008 (RTM). It made Virtual Machines highly available in a cluster by allowing them to be moved (failed over) between the nodes in the cluster using a process called Quick Migration. In Windows Server 2008 R2, additional capabilities were introduced, including Live Migration and Cluster Shared Volumes (CSV). These features improved the high-availability story for Virtual Machines, but also introduced new networking requirements. The inner workings of Hyper-V networking will not be discussed here. For more information, please download this whitepaper (https://www.microsoft.com/downloads/details.aspx?displaylang=en&FamilyID=3fac6d40-d6b5-4658-bc54-62b925ed7eea).

The networking requirements in a Hyper-V Cluster supporting Live Migration and using Cluster Shared Volumes (CSV) can add up quickly, as illustrated in Figure 21.

Figure 21: Hypothetical Networking Requirements

For more information on Live Migration and Cluster Shared Volumes in Windows Server 2008 R2, visit the Microsoft TechNet site.

Using Cluster Shared Volumes in a Failover Cluster in Windows Server 2008 R2

Hyper-V: Using Live Migration with Cluster Shared Volumes in Windows Server 2008 R2

In the next segment I will discuss Troubleshooting cluster networking issues (Part 3). See ya then.

Chuck Timon

Senior Support Escalation Engineer

Microsoft Enterprise Platforms Support