Windows Server 2008 R2 Live Migration – “The devil may be in the networking details.”

Windows Server 2008 R2 has been publicly available for only a short period of time, but we are already seeing a good adoption rate for the new Live Migration functionality as well as the new Cluster Shared Volumes (CSV) feature. I have personally worked enough issues where Live Migration is failing that I felt a short blog on the process I follow to work through them may have some value.

It is important to mention right up front that there is information publicly available on the Microsoft TechNet site that discusses Live Migration and Cluster Shared Volumes. This content also includes some troubleshooting information. I acknowledge that a lot of people do not like to sit in front of a computer monitor and read a lot of text to try and figure out how to resolve an issue. I am one of those people. Having said that, let’s dive in.

In my experience so far, the issues that prevent Live Migration from succeeding come down to network configuration. In this blog, I will address the main network-related configuration items that need to be reviewed to give Live Migration the best chance of succeeding. I begin with an initial set of assumptions: the R2 Hyper-V Failover Cluster has been properly configured and all validation tests have passed without failure, the highly available VM(s) have been created using cluster shared storage, and the virtual machine(s) are able to start on at least one node in the cluster.

I start off by identifying the virtual machines that will not Live Migrate between nodes in the cluster. While it should not be necessary in Windows Server 2008 R2, I recommend first running a ‘refresh’ process on each virtual machine experiencing an issue with Live Migration. I say it should not be necessary because a lot of work was done by the Product Group to more tightly integrate the Failover Cluster Management interface with Hyper-V. Beginning with R2, virtual machine configuration and management can be done using the Failover Cluster Management interface. Here is a sample of some of the actions that can be executed using the Actions Pane in Failover Cluster Manager.

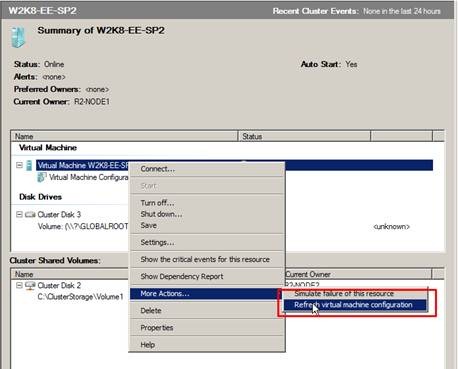

If virtual machine configuration and management is accomplished using the Failover Cluster Management interface, any configuration changes made to a virtual machine should be automatically synchronized across all nodes in the cluster. To ensure this has happened, I begin by selecting each virtual machine resource individually and executing a Refresh virtual machine configuration process as shown here –

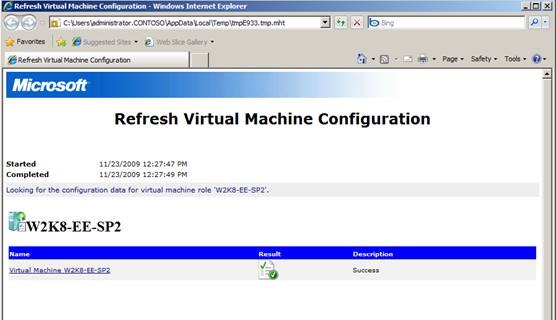

The process generates a report when it completes. The desired result is shown here –

If the process completes with a Warning or Failure, examine the contents of the report and fix the issue(s) that was reported and run the process again until it successfully completes.

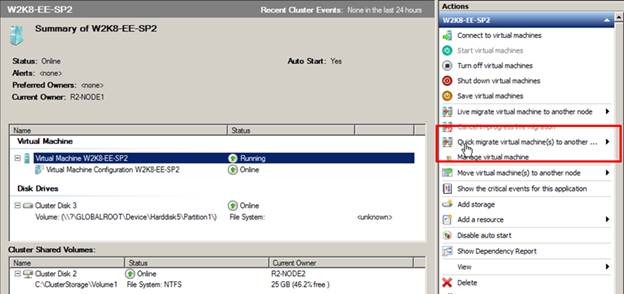

If the refresh process completes without Failure, try to Quick Migrate the virtual machine to each node in the cluster to see if it succeeds.

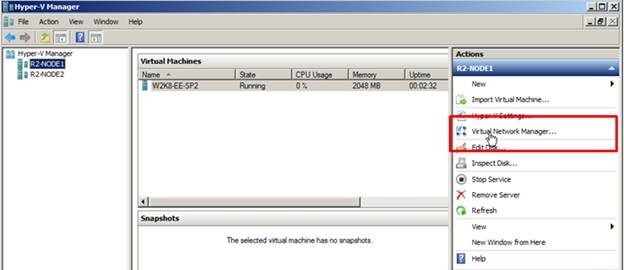

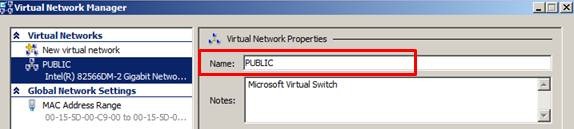

If a Quick Migration completes successfully, that confirms the Hyper-V Virtual Networks are configured correctly on each node and the processors in the Hyper-V servers themselves are compatible. The most common problem with the Hyper-V Virtual Network configuration is that the naming convention used is not the same on every node in the cluster. To determine this, open the Hyper-V Management snap-in, select the Virtual Network Manager in the Actions pane and examine the settings.

The information shown below (as seen in my cluster) must be the same across all the nodes in the cluster (which means each node must be checked). This includes not only spelling but ‘case’ as well (i.e. PUBLIC is not the same as Public) –

It is important to be able to successfully Quick Migrate all virtual machines that cannot be Live Migrated before moving forward in this process. If the virtual machine can Quick Migrate between all nodes in the cluster, we can begin taking a closer look at the networking piece.

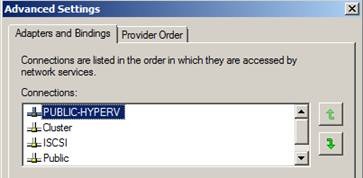
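The virtual network name check described earlier (identical names on every node, case included) is easy to get wrong by eye when there are several nodes. Here is a minimal Python sketch of the comparison, assuming you have already written down the virtual network names from Virtual Network Manager on each node. The node names, the network names, and the `find_name_mismatches` helper are all hypothetical and only illustrate the exact, case-sensitive comparison.

```python
from collections import defaultdict


def find_name_mismatches(networks_by_node):
    """Return the virtual network names that are missing from at least one node.

    The comparison is exact and case-sensitive, so "PUBLIC" and "Public"
    are treated as two different networks.
    """
    all_nodes = set(networks_by_node)
    nodes_by_name = defaultdict(set)
    for node, names in networks_by_node.items():
        for name in names:
            nodes_by_name[name].add(node)

    # For every name, list the nodes where that exact name was not found.
    return {
        name: sorted(all_nodes - nodes)
        for name, nodes in nodes_by_name.items()
        if nodes != all_nodes
    }


# Hypothetical inventory, gathered by hand from each node.
inventory = {
    "NODE1": ["PUBLIC", "Private"],
    "NODE2": ["Public", "Private"],
}

mismatches = find_name_mismatches(inventory)
for name, missing_on in mismatches.items():
    print(f"Virtual network '{name}' is missing on: {', '.join(missing_on)}")
```

An empty result means every name appears, with the same spelling and case, on every node in the inventory.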

Start verifying the network configuration on each node in the cluster by first making sure the network card binding order is correct. On each cluster node, the Network Interface Card (NIC) supporting access to the largest routable network should be listed first. The binding order can be accessed from the Network and Sharing Center by selecting Change adapter settings. In the Menu bar, select Advanced and from the drop-down list choose Advanced Settings. An example from one of my cluster nodes is shown here where the NIC (PUBLIC-HYPERV) that has access to the largest routable network is listed first.

Note: You may also want to review all the network connections that are listed and Disable those that are not being used by either the Hyper-V server itself or the virtual machines.

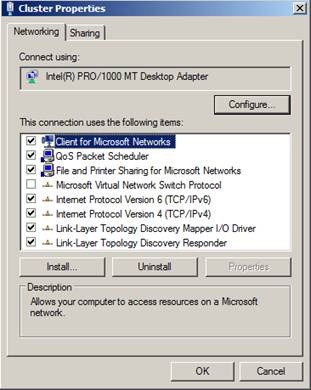

On each NIC in the cluster, ensure Client for Microsoft Networks and File and Printer Sharing for Microsoft Networks are enabled (i.e. checked). This is a requirement for CSV, which relies on SMB (Server Message Block).

Note: This is usually where people get into trouble, because they are familiar with clusters and have been working with them for a very long time, maybe even as far back as the NT 4.0 days. Because of that, they have developed a habit of configuring cluster networking as outlined in KB 258750. This article does not apply to Windows Server 2008.

Note: If CSV is configured, all cluster nodes must reside on the same non-routable network. CSV (specifically for re-directed I/O) is not supported if cluster nodes reside on separate, routed networks.
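As a quick sanity check of the same-network requirement, here is a minimal Python sketch that groups nodes by the subnet of the interface used for CSV. It assumes you have collected each node's address and prefix length by hand; the node names and addresses are hypothetical. It only compares subnets and does not inspect routing, so treat it as a first pass rather than a full validation.

```python
import ipaddress


def csv_subnets(node_interfaces):
    """Group node names by the subnet their CSV interface belongs to."""
    subnets = {}
    for node, cidr in node_interfaces.items():
        # ip_interface accepts "address/prefix"; .network is the enclosing subnet.
        network = ipaddress.ip_interface(cidr).network
        subnets.setdefault(network, []).append(node)
    return subnets


def on_same_subnet(node_interfaces):
    """True when every node's CSV interface sits in one and the same subnet."""
    return len(csv_subnets(node_interfaces)) == 1


# Hypothetical addresses for the network carrying CSV redirected I/O.
nodes = {
    "NODE1": "10.0.0.1/24",
    "NODE2": "10.0.0.2/24",
    "NODE3": "10.0.1.3/24",  # different subnet: would need a router to reach the others
}

if not on_same_subnet(nodes):
    for network, members in csv_subnets(nodes).items():
        print(f"{network}: {', '.join(members)}")
```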

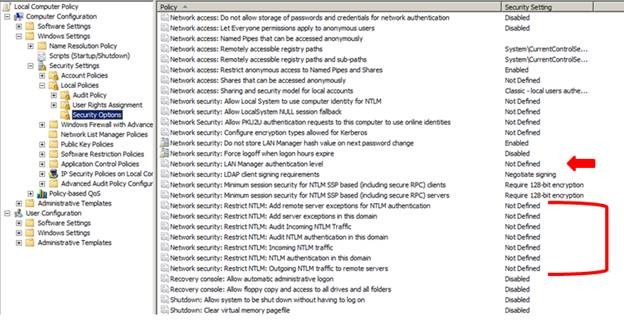

Next, verify the local security policy and ensure NTLM security is not being restricted by a local or domain level policy. To check, go to Start > Run > gpedit.msc > Computer Configuration > Windows Settings > Security Settings > Local Policies > Security Options. The default settings are shown here –

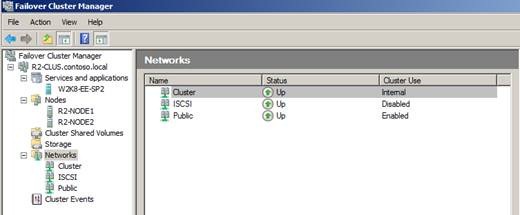

In the virtual machine resource properties in the Failover Cluster Management snap-in, set the Network for Live Migration ordering such that the highest speed network that is enabled for cluster communications and is not a Public network is listed first. Here is an example from my cluster. I have three networks defined in my cluster –

The Public network is used for client access, management for the cluster, and for cluster communications. It is configured with a Default Gateway and has the highest metric defined in the cluster for a network the cluster is allowed to use for its own internal communications. In this example, since I am also using iSCSI, the iSCSI network has been excluded from cluster use. The corresponding listing on the virtual machine resource in the Network for live migration tab looks like this –

Here, I have unchecked the iSCSI network as I do not want Live Migration traffic being sent over the same network that is supporting the storage connection. The Cluster network is totally dedicated to cluster communications only so I have moved that to the top as I want that to be my primary Live Migration network.

Note: Once the live migration network priorities have been set on one virtual machine, they will apply to all virtual machines in the cluster (i.e. it is a Global setting).
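The ordering rule above (networks enabled for cluster communications only, non-Public networks first, fastest first) can be written down as a small Python sketch. The `ClusterNetwork` type, its fields, and the link speeds are assumptions made for illustration; they are not read from the cluster, and the actual ordering is still set by hand in the Network for live migration tab.

```python
from dataclasses import dataclass


@dataclass
class ClusterNetwork:
    name: str
    speed_mbps: int     # hypothetical link speed
    cluster_use: bool   # enabled for cluster communications
    public: bool        # also used for client access


def live_migration_order(networks):
    """Return network names in the preferred live migration order."""
    # Networks excluded from cluster use (iSCSI here) are left out entirely.
    candidates = [n for n in networks if n.cluster_use]
    # False sorts before True, so non-Public networks come first;
    # within each group the fastest network wins.
    ranked = sorted(candidates, key=lambda n: (n.public, -n.speed_mbps))
    return [n.name for n in ranked]


# The three networks from the example, with assumed speeds.
networks = [
    ClusterNetwork("Public", 1000, cluster_use=True, public=True),
    ClusterNetwork("Cluster", 1000, cluster_use=True, public=False),
    ClusterNetwork("iSCSI", 1000, cluster_use=False, public=False),
]

order = live_migration_order(networks)
print(order)
```

With these inputs the dedicated Cluster network comes first, Public second, and iSCSI is not offered at all, which matches the choices described above.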

Once all the configuration checks have been verified and changes made on all nodes in the cluster, execute a Live Migration and see if it completes successfully.

Bonus material:

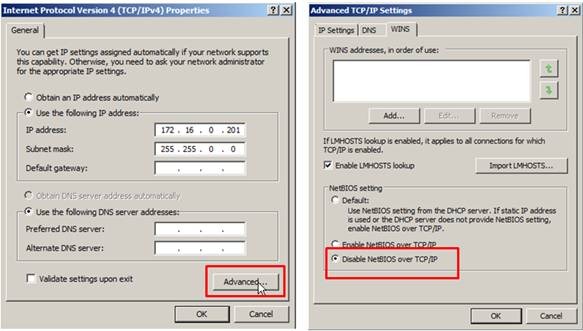

Some configuration changes can help live migrations run faster and CSV perform better. One of them is to disable NetBIOS on the NIC that supports the primary network used by CSV for redirected I/O. This should be a dedicated network that carries nothing other than internal cluster communications, redirected I/O for CSV, and/or live migration traffic.

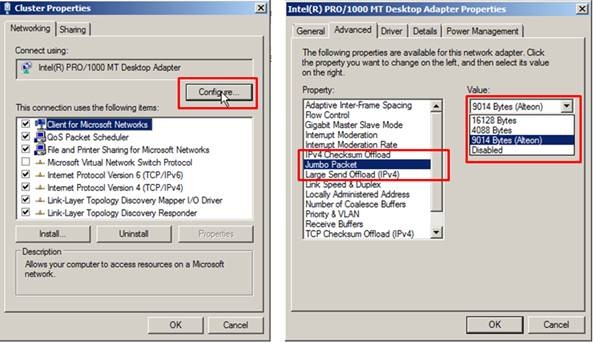

Additionally, on the same network interface supporting live migration, you can enable larger packet sizes to be transmitted between all the connected nodes in the cluster.
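Larger packet sizes (jumbo frames) only help when every node on that network uses the same setting. Here is a minimal Python sketch of that consistency check, assuming you have noted the MTU configured on the live migration NIC of each node. The node names and values are hypothetical, and the sketch does not look at the switch ports in between, which must also allow the larger frames.

```python
STANDARD_MTU = 1500  # default Ethernet MTU in bytes


def jumbo_frame_issues(mtu_by_node):
    """Return a list of problems found in the per-node MTU settings."""
    issues = []
    # All nodes on the live migration network should agree on one value.
    if len(set(mtu_by_node.values())) > 1:
        issues.append("MTU differs between nodes")
    # Anything at or below the standard MTU is not a jumbo frame setting.
    for node, mtu in sorted(mtu_by_node.items()):
        if mtu <= STANDARD_MTU:
            issues.append(f"{node} is not using jumbo frames (MTU {mtu})")
    return issues


# Hypothetical values written down from each node's NIC properties.
for problem in jumbo_frame_issues({"NODE1": 9000, "NODE2": 1500}):
    print(problem)
```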

If, after making all the changes discussed here, live migration is still not succeeding, then perhaps it is time to open a case with one of our support engineers.

Thanks again for your time, and I hope you have found this information useful. Come back again.

Additional resources:

Using Live Migration with Cluster Shared Volumes in Windows Server 2008 R2

High Availability Product Team Blog

Hyper-V and Virtualization on Microsoft TechNet

Windows Server 2008 R2 Hyper-V Forum

Windows Server 2008 R2 High Availability Forum

Chuck Timon

Senior Support Escalation Engineer

Microsoft Enterprise Platforms Support