SQL Server Databases and Cubes on one Server

I have put Business Intelligence into a number of small businesses and these guys typically have one server set a side for BI, so how do you cram the database and the cubes into one box to get the best performance?

SQL Server databases and Analysis Services (SSAS) both love memory, and even windows server gets upset if you deprive it of more than certain amount so here’s some guidelines:

You can decide on different values based on your workload. Keep in mind that less memory will mean less pages in memory (more IO) on SQL and potentially less space for Analysis Services to cache queries. Processing will also take a hit depending on size since it copies the cube into memory for processing.

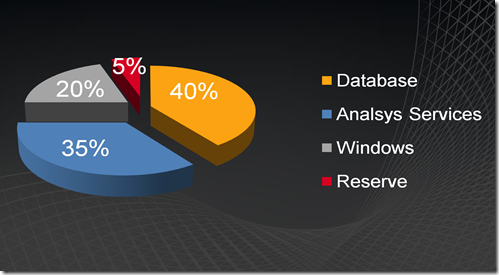

- SQL Server Database - Set SQL to use max 40% physical memory

- Windows - Set <LimitSystemFileCacheSizeMB> to 20% of total, 65022 MB * .20 = 13004 MB. This limits Windows file system cache usage of memory.

- Analysis Services- Set SSAS to use max 35% memory, edit the msmdsrv.ini file (make backup first) and set <TotalMemoryLimit>35

Set <PreAllocate>35 (this means total percent of physical), to preallocate memory at server startup. This helps for NUMA enabled servers especially I found. More on SSAS properties can be found here and more tuning analysis Services can be found in this white paper.

If there are problems with runaway queries using too much memory, you can run ASGovernor to cancel queries if memory usage is greater than 40%. (5% past target for SSAS) It might be that this is too large and it needs to have a smaller threshold.

Technorati Tags: Memory allocation,SQL Server,Analysis Services